I'll share my own experience with customers. If you want to save money with Azure SQL Database, you have to optimize your database and its programming objects. Just last week a customer saw 30% lower DTU usage during peak hours just by adding fewer than 10 indexes to their database. Adding missing indexes is the easiest way to lower DTU consumption.
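As a rough illustration of how to decide which missing indexes to add first, here is a minimal Python sketch. It assumes you have already pulled the missing-index suggestions from the `sys.dm_db_missing_index_*` DMVs into a list of dictionaries. The scoring formula is a common heuristic, not an official one, and the table names and numbers are made up for the example.

```python
from typing import Dict, List


def rank_missing_indexes(rows: List[Dict]) -> List[Dict]:
    """Sort missing-index suggestions by a rough estimated-benefit score.

    The score is a heuristic (an assumption, not an official formula):
    average query cost * expected improvement * number of seeks and scans.
    """
    def score(row: Dict) -> float:
        return (
            row["avg_total_user_cost"]
            * (row["avg_user_impact"] / 100.0)
            * (row["user_seeks"] + row["user_scans"])
        )

    return sorted(rows, key=score, reverse=True)


# Hypothetical sample rows; the keys mirror columns of
# sys.dm_db_missing_index_group_stats, the values are invented.
suggestions = [
    {"table": "Customers", "avg_total_user_cost": 2.0,
     "avg_user_impact": 40.0, "user_seeks": 150, "user_scans": 10},
    {"table": "Orders", "avg_total_user_cost": 12.5,
     "avg_user_impact": 90.0, "user_seeks": 4000, "user_scans": 0},
    {"table": "Invoices", "avg_total_user_cost": 30.0,
     "avg_user_impact": 75.0, "user_seeks": 50, "user_scans": 0},
]

for row in rank_missing_indexes(suggestions):
    print(row["table"])
```

Treat the ranking as a starting point only: review each suggestion before creating it, since every extra index also adds write overhead.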

When the database reaches the DTU limit and stays there for several minutes, poor performance and connection timeouts may follow.
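To check whether the database is actually sitting at the limit, you can look at `sys.dm_db_resource_stats`, which records one row roughly every 15 seconds. The sketch below assumes those rows have already been fetched into Python in time order. DTU percentage is taken as the highest of the CPU, data IO, and log write percentages. The 95% threshold and the sample values are assumptions chosen for the example.

```python
from typing import Dict, List

SAMPLE_INTERVAL_SECONDS = 15  # sys.dm_db_resource_stats granularity


def dtu_percent(sample: Dict) -> float:
    """DTU usage is driven by whichever resource is most constrained."""
    return max(
        sample["avg_cpu_percent"],
        sample["avg_data_io_percent"],
        sample["avg_log_write_percent"],
    )


def longest_saturated_run(samples: List[Dict], threshold: float = 95.0) -> int:
    """Return the longest count of consecutive samples at or above threshold."""
    longest = current = 0
    for sample in samples:
        if dtu_percent(sample) >= threshold:
            current += 1
            longest = max(longest, current)
        else:
            current = 0
    return longest


# Hypothetical samples in time order (values are invented).
samples = [
    {"avg_cpu_percent": 50, "avg_data_io_percent": 10, "avg_log_write_percent": 5},
    {"avg_cpu_percent": 97, "avg_data_io_percent": 20, "avg_log_write_percent": 5},
    {"avg_cpu_percent": 99, "avg_data_io_percent": 100, "avg_log_write_percent": 5},
    {"avg_cpu_percent": 40, "avg_data_io_percent": 98, "avg_log_write_percent": 5},
    {"avg_cpu_percent": 96, "avg_data_io_percent": 10, "avg_log_write_percent": 5},
    {"avg_cpu_percent": 30, "avg_data_io_percent": 10, "avg_log_write_percent": 5},
]

run = longest_saturated_run(samples)
print(f"Longest run at the limit: {run * SAMPLE_INTERVAL_SECONDS} seconds")
```

A run that lasts for minutes rather than seconds is the situation described above, and it also tells you which resource (CPU, data IO, or log writes) to look at first.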

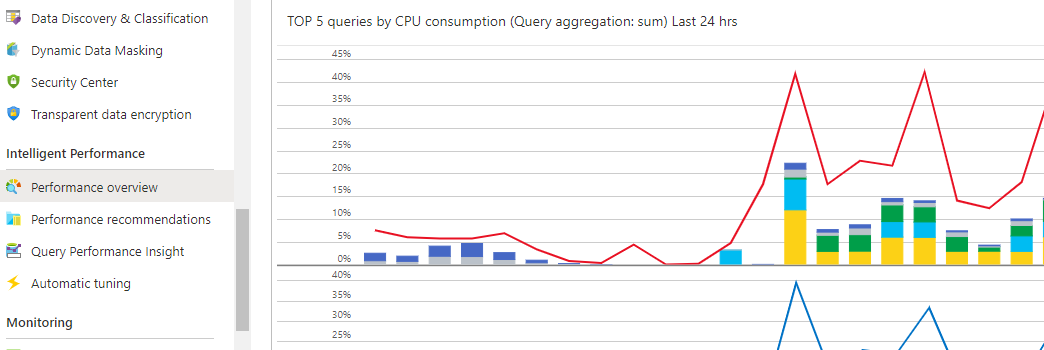

Optimize the worst-performing queries. Identify them in the "Performance Overview" section for your Azure SQL Database (Azure portal, left vertical panel). See the image below. Click on the chart to get details of the queries involved.

Also verify that deadlocks are not occurring, and resolve any that are.

IO-intensive workloads are better suited to the Premium tiers.