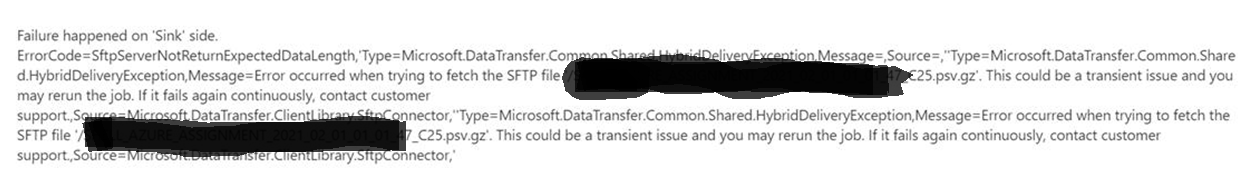

I'm getting a repeated error when attempting to batch-ingest multiple PSV.GZ files from an SFTP server (Linux) into Azure. The pipeline connection has been checked and is fine, and we created a new connection purely for testing to rule it out. We get the same error every time.

We moved the files from the existing folder on the SFTP server to a new folder and tried again; it still fails. Ingesting a single file usually works, but because of the nature of our project we need to batch-ingest a number of files daily.

As far as we know there is no file-size limit, so size shouldn't be the issue. The strange thing is that this worked fine for a while and has only just started failing.

The pipeline seems to read the full list of files to be ingested (e.g. 45 files in a folder) but writes only 43 before failing with the error.

Also, the failure always names the most recently dated file in that folder.

One other point: if we manually download the PSV.GZ files from the SFTP server (Linux) to a Windows machine and then re-upload them, ingestion succeeds. Obviously this isn't a viable workaround going forward. Has anyone experienced the same issue? Any pointers or solutions? Many thanks!

Hi @SKinmond, @KranthiPakala-MSFT

Are there any updates on this issue?

We are experiencing exactly the same problem.

Note that WinSCP works just fine with the same credentials.

Kind Regards

Eugene

The issue was resolved with help from Microsoft Support: setting the then-undocumented, not-yet-released property `"disableChunking": true` solved the problem.
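For anyone hitting the same error, here is a minimal sketch of where such a flag might go. It assumes the property is set on the SFTP read settings (`storeSettings` of type `SftpReadSettings`) of a Copy activity source that reads delimited text. The folder name `incoming`, the wildcard `*.psv.gz` and the helper `build_sftp_copy_source` are hypothetical placeholders, not part of any SDK. The Python only builds and prints the JSON fragment you would put into the pipeline definition.

```python
import json


def build_sftp_copy_source(folder: str, file_pattern: str) -> dict:
    """Return a Copy activity 'source' object for gzipped delimited files on SFTP.

    Assumption: disableChunking belongs in the SFTP storeSettings
    (SftpReadSettings). folder and file_pattern are hypothetical values.
    """
    return {
        "type": "DelimitedTextSource",
        "storeSettings": {
            "type": "SftpReadSettings",
            "recursive": False,
            "wildcardFolderPath": folder,
            "wildcardFileName": file_pattern,
            # Ask the service to read each file as a single stream
            # instead of splitting it into parallel ranged reads.
            "disableChunking": True,
        },
        "formatSettings": {"type": "DelimitedTextReadSettings"},
    }


source = build_sftp_copy_source("incoming", "*.psv.gz")
print(json.dumps({"source": source}, indent=2))
```

As we understand it, with chunking disabled each file is read sequentially rather than in parts fetched from different offsets, which may be slower for large files but no longer depends on the SFTP server supporting offset reads. The gzip decompression itself is normally declared on the DelimitedText dataset (`compressionCodec`), not in this source block.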