Hi @Vitalii Stadnyk ,

Did you ever find a way to get the user routed to a healthy instance in step 3?

I am facing the same issue with Azure Load Balancer (Standard tier). When an application pool goes down, it takes about 10-20 seconds for the health probe to mark the VM as unhealthy. From that point on, new connections are routed to another VM in the backend pool, but existing connections are still routed to the unhealthy instance.

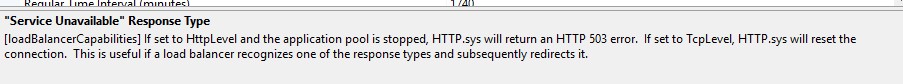

I have changed the "Service Unavailable" Response Type of the IIS application pool from HttpLevel to TcpLevel, but it makes no difference.

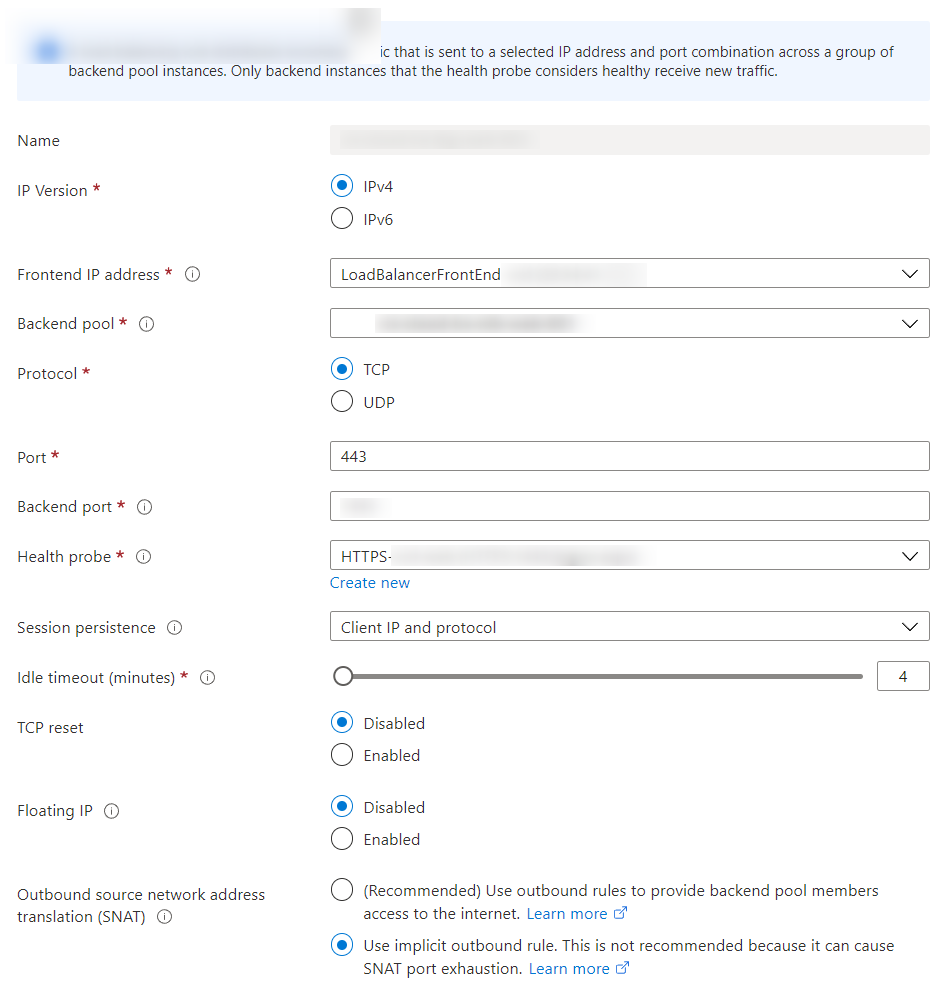

Here you can see the Azure Load Balancer configuration:

The values for Session persistence, Floating IP and Outbound SNAT must stay as configured because of other restrictions.

I've tested with TCP reset both enabled and disabled, but this makes no difference to the behavior described above.

I believe disabling session persistence could speed up the switch to a healthy VM, because the user could then get a new route on every request. But we explicitly want session persistence, so that a user "stays" on a VM as long as it is healthy.
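
To make the difference between existing and new connections concrete, here is a minimal client-side sketch. It assumes the client code can be changed, which may not hold for browser users. Instead of reusing a kept-alive connection, it opens a fresh TCP connection for every attempt, so a retry after a 503 or a reset is a new connection that the load balancer can send to a healthy VM once the probe has marked the old one down. The function name `get_with_fresh_connection`, the `connection_factory` parameter and the host `lb.example` are hypothetical names for illustration, not part of any Azure or IIS API.

```python
import http.client


def get_with_fresh_connection(host, path, attempts=3,
                              connection_factory=http.client.HTTPConnection):
    """GET `path` from `host`, using a new TCP connection for every attempt.

    Assumption: a 503 or a connection error means the current backend is
    unhealthy, so the connection is dropped rather than reused.
    """
    last_error = None
    for _ in range(attempts):
        # A new connection object means a new TCP flow through the load balancer.
        conn = connection_factory(host, timeout=5)
        try:
            # "Connection: close" asks the server not to keep the flow alive.
            conn.request("GET", path, headers={"Connection": "close"})
            response = conn.getresponse()
            body = response.read()
            if response.status != 503:
                return response.status, body
            last_error = RuntimeError("503 Service Unavailable")
        except (http.client.HTTPException, OSError) as exc:
            # Covers resets and refused connections (ConnectionError is an OSError).
            last_error = exc
        finally:
            conn.close()
    raise last_error
```

A call would look like `status, body = get_with_fresh_connection("lb.example", "/health")`. This only helps after the health probe has marked the VM as unhealthy. During the 10-20 second detection window, a new connection can still land on the failed instance.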