Slaying the Virtual Memory Monster

If you have ever heard one of my talks on Windows Mobile development, you may remember me ranting a bit about Virtual Memory issues. It’s one of those topics that many developers never need to worry about; however, if you are one of the devs who have fought this battle, you can appreciate why I think it deserves some attention. Especially now, because…

It’s easy to be a Windows Mobile Developer

When developers first start working with Windows Mobile, they are often surprised at just how much they can do and how much knowledge they can reuse. Built on top of Windows CE, the platform inherits all the flexibility and power of a proven operating system used for years on small footprint and special purpose devices. If you have done any desktop development, you will find most of the same APIs and libraries you have come to depend on. Windows Mobile may have fewer or smaller versions of the APIs, but many are nearly identical to the desktop. In the same way, the Compact Framework provides a comprehensive subset of the full .NET Framework functionality. All in all, it’s a natural extension of what you already know how to do. Visual Studio supports mobile development alongside all your other projects, so everyone can be a Windows Mobile developer. This is a good thing.

It’s the same but it’s different

As similar as Windows Mobile and CE development are to the desktop world, there are some fundamental differences that new mobile developers should take time to understand. Remember, the WM 5 and 6 platforms are built on CE 5.x, which was designed with a very small memory footprint in mind. Most notably, the virtual memory space is considerably smaller than on our desktop platforms, and DLLs have special requirements for loading into the same memory area across applications. Did I mention that the platform may automatically shut down applications when memory gets low, and that only 32 processes can run at the same time? Many of these differences are easy enough to accommodate, but if you don’t take the time to learn these unique rules of mobile development, you may find yourself face to face with the nastiest of them: the Virtual Memory Monster.

Did someone say monster? Yeah, well… we could just say “a rock and a hard place,” but you wouldn’t remember that post, and it isn’t very exciting, is it? If we’re going to tackle a problem, let’s give it a name. The “Virtual Memory Monster” is a condition in which your process runs out of VM space long before your device runs out of RAM. As a result, you can see a number of “memory” related failures that never really point to a specific piece of code. Sometimes they don’t even point to a specific application. It’s a difficult condition to address even when you know what is going on. To the novice WM developer, it can be just plain baffling. It doesn’t happen a lot, but it happens. Let’s talk about it…

First, let’s agree on the terms

This is not a new topic. It’s been around for years with previous versions of CE and Windows Mobile, but it still may be the most misunderstood blocker in the Windows Mobile handbook of “gotchas”. It can be tricky to talk about, so we need to lay down some fundamentals. I’m going to take a few liberties in my explanation for the sake of keeping it simple, but I encourage you to read the technical papers linked at the end of this post for deeper insight.

· Virtual Memory – When I talk about Virtual Memory, I’m really talking about addressable memory space. Think of this as an abstracted “work area” for a process. On the desktop, we had a 4GB address space and each application would get a 2GB area by default. On Windows Mobile, each application has to work with a 32MB slot. That means, in a perfect world, you have a 32MB VM area to leverage in your process space.

· RAM – RAM is the physical resource each process consumes to fill memory requests. Your process may have a 32MB virtual address space, but you don’t see 32MB of RAM disappear from the system when you start a process, right? When the process loads resources and commits memory, RAM is then “consumed” to fill this need. For example, each process starts with a default heap created within your virtual memory area. As your application allocates objects, the heap grows, consuming system RAM and using up your 32MB address space.

· RAM vs. VM – RAM and VM are two completely different categories of memory that are sometimes (mistakenly) used interchangeably. They fail differently when they run out. When you run out of RAM, you are out of physical memory. When you run out of VM, you are out of address space in which to use that memory. Having tons of RAM won’t help your process if its VM space is full. Similarly, you can run out of RAM and still have plenty of VM space left in a process.
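To make the two failure modes concrete, here is a toy Python model that tracks a process’s address space and the device’s physical RAM separately. It is only a sketch under simplifying assumptions: the class and exception names are hypothetical (not a Windows CE API), it ignores DLLs, page granularity, and freeing memory, and the `commit` flag only loosely mirrors the reserve-versus-commit distinction that Win32 `VirtualAlloc` makes.

```python
from dataclasses import dataclass

MB = 1024 * 1024

class OutOfVirtualMemory(Exception):
    """Hypothetical: the process slot has no address range left for the request."""

class OutOfRAM(Exception):
    """Hypothetical: the device has no physical memory left to back the request."""

@dataclass
class Device:
    free_ram: int                 # physical RAM still available, in bytes

@dataclass
class ProcessSlot:
    device: Device
    size: int = 32 * MB           # WM 5/6 per-process virtual slot
    next_free: int = 0x10000      # first address above the reserved 64 KB

    def allocate(self, nbytes, commit=True):
        """Claim address space; if commit is True, also consume device RAM."""
        if self.next_free + nbytes > self.size:
            raise OutOfVirtualMemory(f"{nbytes} bytes: slot exhausted")
        if commit and nbytes > self.device.free_ram:
            raise OutOfRAM(f"{nbytes} bytes: device RAM exhausted")
        base = self.next_free
        self.next_free += nbytes
        if commit:
            self.device.free_ram -= nbytes
        return base

# Plenty of RAM, but the 32 MB slot fills up first.
big = Device(free_ram=64 * MB)
app = ProcessSlot(big)
app.allocate(31 * MB)
try:
    app.allocate(2 * MB)
except OutOfVirtualMemory:
    print("out of VM with", big.free_ram // MB, "MB of RAM still free")

# Little RAM, but most of the slot is still empty.
small = Device(free_ram=8 * MB)
app2 = ProcessSlot(small)
try:
    app2.allocate(10 * MB)
except OutOfRAM:
    print("out of RAM with", (app2.size - app2.next_free) // MB, "MB of VM free")
```

The two runs fail for opposite reasons, which is the whole point of the RAM vs. VM distinction: adding RAM to the first device would not help it at all.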

Yet another way to think about it…

Imagine a conference room with a limited number of seats. Let’s make a rule that if you want to get any work done, it has to happen in the conference room. A WM process is like the conference room. It’s where the “work” happens, and the virtual address space is much like the seating arrangement. You may have hundreds of employees, but if they don’t fit inside the room and have a seat, you can’t use them, just as RAM won’t do you any good if your process VM space is full.

The devil is in the details…

A large, resource hungry application could simply run out of VM space by using a lot of RAM and/or loading a lot of components. Those are pretty easy to spot and deal with because they stand out like a sore thumb. The real problem comes from a combination of contributing factors that are quite harmless by themselves but problematic when mixed together.

1) As memory gets bigger and cheaper, devices are shipping with more of it. When you can actually allocate 32MB worth of RAM and still have some to spare, it’s easier to exhaust your VM space.

2) Developers are building desktop-sized apps for Windows Mobile without thinking (or worrying enough) about the memory impact they have on each other.

3) Windows Mobile inherits CE’s age-old technique for loading DLLs.

Technical Explanation

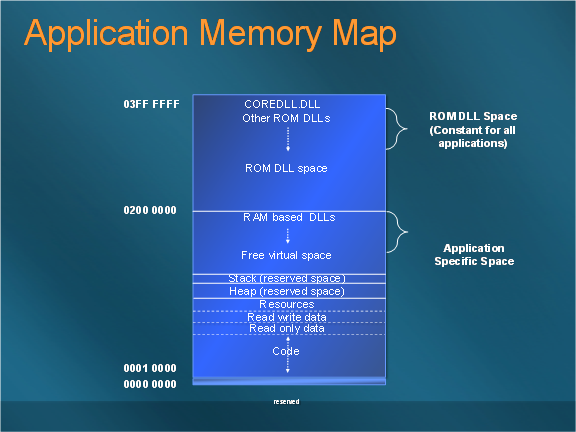

All the contributing factors share responsibility for VM related failures, but the little “optimization” technique CE uses to be efficient sort of becomes the bad guy. Without going into great detail about WHY it works the way it does, let’s just jump to the HOW. First, ROM DLLs are generally not part of this problem: for the most part, they live in a special region of memory, 0x02000000 to 0x03FFFFFF, set aside for ROM components and outside the VM area we’re worried about. Managed DLLs are also special cases (we’ll get to that later). All other DLLs and components (those not part of the device ROM) that are loaded into your process space deserve special attention. When they are loaded, they must be mapped to exactly the same VM position in every process that uses them. To make that work, they all share some restrictions about where and how they get loaded: a DLL cannot be loaded into a position being used by any other DLL. (Pause and ponder.) Let’s walk through it…

A Windows Mobile process space starts with 32MB of virtual memory. When you start an app, the EXE is loaded at the bottom of this space (above a small reserved area), starting at 0x10000 (64KB), and grows upward (static data, heap, stack, etc.). The heap grows upward into the process space as your app allocates objects. Everything grows up, from the bottom of the space toward the top, except DLLs. DLLs are special: they are loaded at the very top of the address space, underneath any previously loaded DLLs, and work their way down, always considering the load positions of other DLLs and loading at an address below them. In a perfect world, this all starts around position 0x2000000 (32MB). I say “in a perfect world” because there are almost always other components loaded ahead of you by the OEM, the operator, and other applications. Realistically, the starting DLL load position is often somewhere between the 14MB and 18MB marks on a freshly reset commercial device, and it can vary greatly depending on what “ships” on the device.

Net, net: your free VM (“work area”) can vary, and you have two sides of the VM space, one growing up and one growing down. When they meet, it’s often “game over” for loading any more components into that process, and the failures start. The platform tries really hard to make it all work automatically and transparently. By loading DLLs at the top of the address space and below any other system-wide DLLs, it usually avoids collisions and conflicts across the VM space, but not always.
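Here is a minimal Python sketch of that two-sided layout within a single process. It is a toy model rather than anything the OS exposes: the constants come from the description above (EXE at 0x10000, slot top at 0x2000000), the 16MB starting point is an assumed value picked from the 14MB to 18MB range, the helper names are hypothetical, and the model ignores stacks, allocation granularity, and alignment.

```python
MB = 1024 * 1024

SLOT_TOP = 0x02000000   # 32 MB: top of the process slot ("perfect world" start)
EXE_BASE = 0x00010000   # EXE loads just above the reserved 64 KB

def load_dlls_top_down(start, sizes):
    """Place each DLL directly below the previous one; return their bases."""
    bases, top = [], start
    for size in sizes:
        top -= size
        bases.append(top)
    return bases

def free_gap(low_bytes, dll_floor):
    """Bytes between the top of the upward-growing side (EXE image,
    static data, heap) and the lowest DLL on the downward-growing side."""
    return dll_floor - (EXE_BASE + low_bytes)

def can_grow(low_bytes, dll_floor, extra):
    """True if the upward-growing side can take `extra` more bytes."""
    return free_gap(low_bytes, dll_floor) >= extra

# Assumed realistic device: components loaded ahead of us mean our DLLs
# start near the 16 MB mark instead of 32 MB.
bases = load_dlls_top_down(16 * MB, [1 * MB, 2 * MB])
floor = min(bases)                                   # 13 MB
print(free_gap(10 * MB, floor) // 1024, "KB free")   # 3008 KB free
print(can_grow(10 * MB, floor, 3 * MB))              # False: the two sides would meet
```

With a 10MB upward-growing side, the same app would have about 22MB of headroom in the “perfect world” case, but only about 3MB once the DLL area starts lower.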

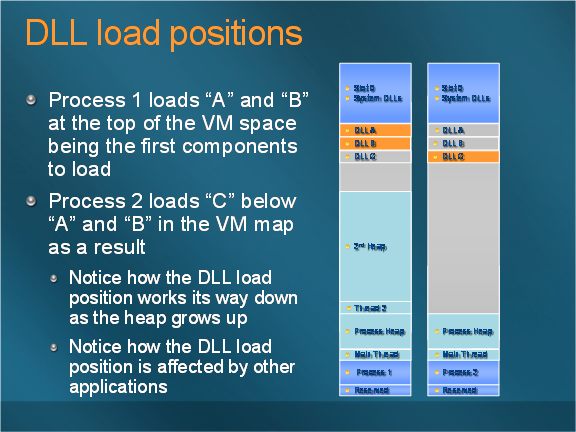

For example, when process 1 loads A.DLL, it loads at the top of its VM space. The next DLL, B.DLL, has to load below the first one. If process 2 now loads C.DLL, it loads in the new process, but at a VM position below the DLLs from process 1, keeping space open in case process 2 needs to load A.DLL or B.DLL at some point. It also means that if process 2 loads C.DLL at 0x00120000 and process 1 later wants to load the same DLL, then the VM at 0x00120000 needs to be free in process 1 as well. If the heap in process 1 grows beyond 0x00120000 and process 1 then attempts to load C.DLL, the load fails. Why? The VM area where the DLL must load is already in use. You can imagine how complicated this can get with 32 processes, all pulling in DLLs that have to respect each other’s load positions.
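The cross-process rule is easier to see in a small simulation. This is a hypothetical Python model, not the CE loader: `System` hands out one base address per DLL name, always below every DLL any process has loaded, while each `Process` only checks its own heap against its own DLLs. That second point is what lets process 1’s heap wander into the spot held for C.DLL. The 1MB DLL sizes and the 16MB starting address are made-up values (the addresses differ from the 0x00120000 in the walkthrough above), and real placement details such as alignment are ignored.

```python
from dataclasses import dataclass, field

MB = 1024 * 1024
SLOT_TOP = 0x02000000

class DllLoadError(Exception):
    pass

class System:
    """Hypothetical model: one base address per DLL name, shared by every
    process, with each new DLL placed below every DLL already loaded anywhere."""
    def __init__(self, start=SLOT_TOP):
        self.floor = start          # lowest DLL address handed out so far
        self.bases = {}             # DLL name -> system-wide base address

    def placement(self, name, size):
        return self.bases.get(name, self.floor - size)

    def record(self, name, base):
        if name not in self.bases:
            self.bases[name] = base
            self.floor = base

@dataclass
class Process:
    system: System
    heap_top: int = 0x10000                   # upward-growing side starts here
    dlls: dict = field(default_factory=dict)  # name -> base, in this process

    def grow_heap(self, nbytes):
        # The heap only avoids DLLs mapped in *this* process; it knows
        # nothing about addresses held for DLLs that other processes loaded.
        if self.heap_top + nbytes > min(self.dlls.values(), default=SLOT_TOP):
            raise MemoryError("heap ran into this process's own DLLs")
        self.heap_top += nbytes

    def load(self, name, size):
        base = self.system.placement(name, size)
        if base < self.heap_top:              # required range is already in use
            raise DllLoadError(f"{name} must load at {base:#010x}")
        self.system.record(name, base)
        self.dlls[name] = base
        return base

system = System(start=16 * MB)
p1, p2 = Process(system), Process(system)
p1.load("A.DLL", MB)          # 15 MB
p1.load("B.DLL", MB)          # 14 MB
p2.load("C.DLL", MB)          # 13 MB, below process 1's DLLs
p1.grow_heap(13 * MB)         # legal: process 1 has never mapped C.DLL
try:
    p1.load("C.DLL", MB)
except DllLoadError as err:
    print("load failed:", err)
```

Note that process 2 can still load A.DLL later, because its heap never touched the space held for it; process 1 is the one that gets burned.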

What makes this a really challenging problem is that no single application is responsible for the issue. Since it’s perfectly legal for any application to load a DLL, and every app has to load components at a position lower than its predecessors, it becomes a little interdependent ecosystem. The problem is typically the result of multiple apps each contributing “a little” to a larger issue. If you are the last guy to load your components, you may have to load them very low in the address space. Now if your application is “big,” your process may already consume much of the VM space simply by loading the EXE and setting up the heap (remember, it grows up). Your heap may have already grown into the address range you need to load that DLL. This is the most common failure scenario.

DLLs are treated like VIPs

Back to the conference room analogy… think of a DLL as a VIP you invited to sit in on your meeting. You give him the best seat in the room (top of the memory space) and let everyone else in the building know he is here. Word gets out, so other teams leave an empty seat in their conference rooms; if our “special guest” decides to attend another meeting, there’s an open seat right where he expects it. Maybe other VIPs show up, and pretty soon you have a bunch of seats reserved. Then space gets tight, so you have to use the reserved seats… and the VIP shows up! Sparks fly! This is similar to how Windows Mobile treats DLLs when they are loaded into a process space.

Why don’t desktop developers struggle with this?

Running out of VM space is neither a new problem nor a mobile-specific one, but there are reasons desktop developers rarely deal with it. First of all, this problem is hard to create when your address space is significantly larger than your available RAM. In the desktop world, Windows used a 4GB model with 2GB of that space dedicated to each process. It’s fairly rare for a single application to use 2GB of RAM; many systems didn’t even have that much RAM available. Most desktop systems would run out of RAM or start paging to disk (setting off a big red flag) before they ever filled up the VM space. And when you load a DLL in the desktop world, you don’t have to worry about DLL load positions across processes.

What makes Windows Mobile particularly different from the desktop is its small 32MB VM space and its special rules about how DLLs load within it. A 2MB DLL loaded in a desktop process is a drop in the VM bucket and has little impact on anything. A 2MB component loaded on Windows Mobile is a sizable chunk of your VM and can put pressure on other apps because of the DLL loading rules. Devices are starting to ship with more RAM, which makes it easier to fill up a VM space. Combine plentiful RAM, resource-intensive applications, and DLL load restrictions, and you have the makings of some interesting VM problems. By the way, CE 6 changes all this, but Windows Mobile (both 5.0 and 6) is still built on CE 5.x.
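The “drop in the bucket” comparison is simple arithmetic, and this snippet just computes it. The third case is an assumption for illustration: it treats roughly 16MB (from the 14MB to 18MB range mentioned earlier) as the space left below the DLL area on a crowded device, not a measured value.

```python
MB = 1024 * 1024
GB = 1024 * MB

def share_of_vm(dll_bytes, vm_bytes):
    """Percentage of an address space taken by one DLL."""
    return 100.0 * dll_bytes / vm_bytes

desktop = share_of_vm(2 * MB, 2 * GB)     # 2 MB in a 2 GB desktop process
mobile = share_of_vm(2 * MB, 32 * MB)     # 2 MB in a full 32 MB WM slot
crowded = share_of_vm(2 * MB, 16 * MB)    # assumed: DLL area already starts near 16 MB
print(f"desktop {desktop:.3f}%, mobile {mobile:.2f}%, crowded {crowded:.1f}%")
# desktop 0.098%, mobile 6.25%, crowded 12.5%
```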

Identifying the failure…

When you run out of RAM, it is easy to understand and identify: the system tells you that memory is low, and you can easily check the memory status in system information. Running out of VM space is… well, nearly invisible. RAM looks fine, and there is no way to see or verify VM issues from the UI. Components start failing to load, so apps may crash or fail to start. Drivers may not work as expected. Ever had to disable Bluetooth to start an application? Ever had to start applications in a certain order to make everything work? While not the only cause, VM issues are often behind these failures, and they can leave you scratching your head and going in circles with a debugger.

So who needs to be concerned and how do you avoid/debug this problem? … I mean, slay the monster?

Every developer should at least understand the concepts: VM size, DLL load rules, and the size of their own application footprint. If you are building or loading native components from your application, it’s especially important. If you have a memory-intensive application, it’s important. If you are building out an enterprise device configuration, you should do some investigation to make sure all the apps you load are good “memory” citizens and don’t create problems for each other.

It’s too much for one post, so I wanted to cover some fundamentals first. In the next part of this series, I’m going to cover examples, techniques, and tools you can use to identify, profile, and work around common VM issues. In the meantime, I suggest a couple of additional articles on the CE and WM memory architecture:

Windows CE .NET Advanced Memory Management

https://msdn2.microsoft.com/en-us/library/ms836325.aspx

Effective Memory, Storage, and Power Management in Windows Mobile 5.0

https://msdn2.microsoft.com/EN-US/library/aa454885.aspx

Cheers,

Reed