AI Announcements at the Build Day 1 keynote

So many exciting announcements in the Artificial Intelligence space at the Build keynote this morning! In case you missed it, here’s the lowdown. You can watch the keynote recording at https://channel9.msdn.com/Events/Build/2017/KEY01.

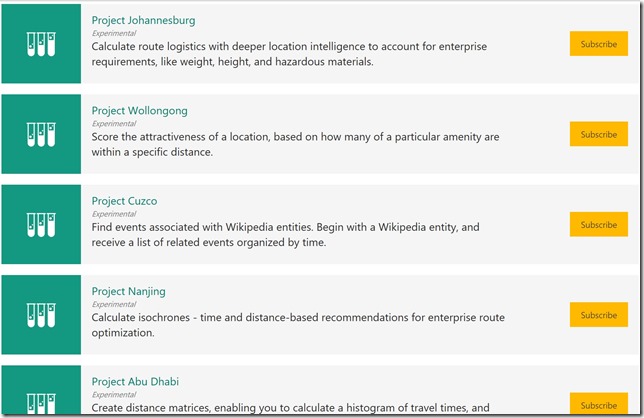

Cognitive Services Labs

https://labs.cognitive.microsoft.com/

The Labs give developers an early look at emerging Cognitive Services technologies. You can try them out and provide feedback before they become generally available. This is basically a playground to explore this research and get a sense of what may be coming.

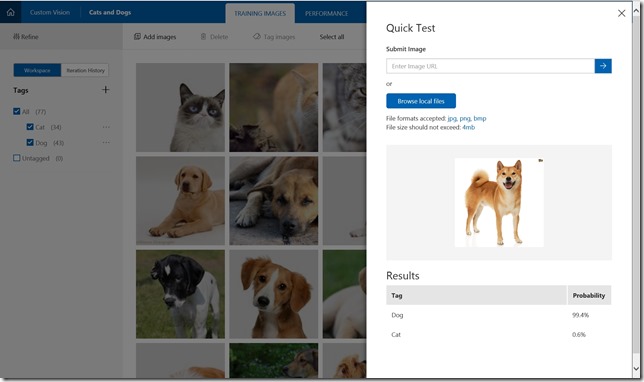

Custom Vision (codenamed IRIS)

You’ve seen LUIS for custom language understanding and CRIS for custom speech understanding! Now we are excited to introduce codename IRIS for custom vision understanding. Custom Vision will allow you to build a classifier to distinguish between images of different things. I built a quick classifier to distinguish between cats and dogs. I uploaded 34 images of cats and 43 images of dogs (just grabbed from a Bing image search for “cat” and “dog”). After you upload a group of photos, you can apply a tag to all of them, so it was easy to tag one batch as “cat” and the other batch as “dog”. After training the model, I grabbed some new photos of cats and dogs that were not in my training set to test it. It performed pretty well, so I tried to stump it. I found an image of a dog with a curly tail that looks vaguely cat-like, so I tried that one. Wow!
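For anyone who would rather script the "test it with new photos" step than use the web page, here is a minimal Python sketch. It is not what was used above, and it rests on assumptions: the URL shape and the `Prediction-Key` header follow the Custom Vision prediction REST API (v3.0) as published after this preview, and the endpoint, project ID, published iteration name, and key are all placeholders you would replace with your own values.

```python
import json
import urllib.request


def build_prediction_request(endpoint, project_id, published_name,
                             prediction_key, image_bytes):
    """Build (but do not send) a POST request that classifies one image.

    Assumption: URL layout follows the v3.0 prediction API; all argument
    values are placeholders supplied by the caller.
    """
    url = (
        f"{endpoint.rstrip('/')}/customvision/v3.0/Prediction/"
        f"{project_id}/classify/iterations/{published_name}/image"
    )
    headers = {
        "Prediction-Key": prediction_key,
        "Content-Type": "application/octet-stream",
    }
    return urllib.request.Request(url, data=image_bytes,
                                  headers=headers, method="POST")


def top_prediction(response):
    """Return (tag_name, probability) for the highest-scoring tag."""
    best = max(response["predictions"], key=lambda p: p["probability"])
    return best["tagName"], best["probability"]


def classify(request):
    """Send the request and return the most likely tag (needs network access)."""
    with urllib.request.urlopen(request) as resp:
        return top_prediction(json.load(resp))
```

With a "cat"/"dog" model like the one described, `top_prediction` would simply pick whichever of the two tags the service scored higher for the uploaded photo.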

Project Prague

Project Prague is an SDK which enables gesture-based controls in your applications. It requires an Intel RealSense SR300 camera.

One of their demos is a PowerPoint add-in that allows you to rotate and move objects with gestures. They also have a game (made with Unity) where the user can pull back and launch a slingshot to knock stuff down (like Angry Birds in 3D). Another app used camera overlays in conjunction with gestures (watch me drop the mic below!). Check these apps out in their demo video.

Video Indexer

The Video Indexer combines several existing Cognitive Services into a neat service that provides insights into videos. This includes audio transcription, detecting when each face appears in the video, speaker indexing, visual text recognition, face identification, voice activity detection, scene detection, sentiment analysis, key frame extraction, keyword extraction, translation, and content moderation.

Presentation Translator

https://aka.ms/powerpointtranslator

Presentation Translator is an Office add-in for PowerPoint that enables presenters to display translated subtitles in real time. It currently supports speaking in 10 languages (Arabic, Mandarin Chinese, English, French, German, Italian, Japanese, Portuguese, Russian, and Spanish) and subtitling into 60+ languages. The text of the PowerPoint can be translated as well while preserving the original formatting (including translation between left-to-right and right-to-left languages).

Bots

There are enough Bot Framework announcements that they warrant their own blog post! The Bot Framework team did an incredible job of summarizing their announcements at https://blog.botframework.com/2017/05/10/Build/. *Please* go read that post because I’m not doing it justice here, but among the announcements are:

- New channels: Cortana, Bing, and Skype for Business

- Adaptive Cards

- Bot Framework Payment Request API

- LUIS improvements

- Speech support

- Documentation, Analytics, and Publishing Flow improvements

- Azure Bot Service v.Next

- New AI MVP Award

Related Blog Posts

Bot Framework Blog: Bot Framework at Microsoft Build 2017

Machine Learning Blog: Now Serving: More AI with your big data

Data Platform Insider Blog: Serving AI with data: A Summary of Build 2017 Data Innovations

Building Apps Blog: Cortana Skills empowers developers to build intelligent experiences for millions of users

Guggs’ Blog: Microsoft Build 2017: Redefining Business with AI and My New Role