Capacity Management vs. Capacity Planning

This is the first in a series of posts on a topic that is very important to me. Over the last couple of years I have published and presented almost everything here internally, in a number of venues:

- At internal SharePoint PG presentations (originally)

- As part of the MCM SharePoint 2009 Capacity Planning training (which I wrote and delivered)

- In an internal ThinkWeek 2009 paper

- As part of various TechReadys (an internal TechEd-like event)

- And other venues...

However, I have never published it anywhere that was available outside of Microsoft. Now that it seems to be catching on (internally with SharePoint at least), I think it is time I went "public". So this will be a series of posts on the topic, including the best material from the ThinkWeek paper and dozens of presentations. So here we go:

The Ideal

We want to contrast the concepts of “Capacity Planning” and “Capacity Management”. Capacity Planning is what you do up front; however, it has a “throwaway” flavor to it which (as we will see) is particularly prevalent in the SharePoint world. Using a “Capacity Management” methodology for the entire lifecycle of a deployment is not a new idea. It is the methodology promoted by both MOF and ITIL, the two most widely promoted and accepted operational methodologies (at least in the Microsoft world in which we move). In fact, the two are very similar, since MOF was mostly inspired by ITIL.

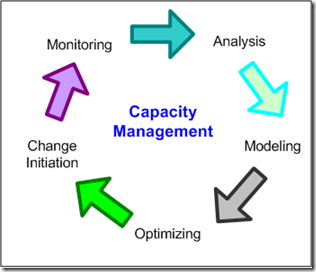

Both MOF and ITIL require a continuously improving process for a given deployment. Both require that some kind of modeling be used at all stages in a process that continually runs to active an optimal deployment even in changing situations. A high-level diagram of this process taken from the MOF documentation is shown here:

There can be some debate as to what “Modeling” consists of. At a minimum, we would expect some process that allows us to vary our resource configuration and predict what kind of performance we can expect.
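To make that minimum concrete, here is a rough sketch of the smallest thing that could count as a model in this sense. It is not how SPCP or any other tool works; the `Farm` class, the function name, and all of the numbers are made up for illustration, and it uses nothing more than the basic utilization law (CPU time demanded per second divided by cores available).

```python
from dataclasses import dataclass


@dataclass
class Farm:
    """Hypothetical resource configuration: a set of identical web servers."""
    servers: int
    cores_per_server: int


def predicted_cpu_utilization(farm: Farm, requests_per_sec: float,
                              cpu_sec_per_request: float) -> float:
    """Utilization law: CPU-seconds demanded per second / cores available."""
    total_cores = farm.servers * farm.cores_per_server
    return requests_per_sec * cpu_sec_per_request / total_cores


# Vary the resource configuration and compare the predictions.
# The demand figures (100 requests/sec, 0.05 CPU-sec each) are invented.
for servers in (2, 3, 4):
    farm = Farm(servers=servers, cores_per_server=4)
    u = predicted_cpu_utilization(farm, requests_per_sec=100,
                                  cpu_sec_per_request=0.05)
    print(f"{servers} servers: predicted CPU utilization {u:.0%}")
```

A real model would cover far more than CPU, but even this toy version has the two properties we asked for: the configuration is an input we can vary, and the output is a performance prediction.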

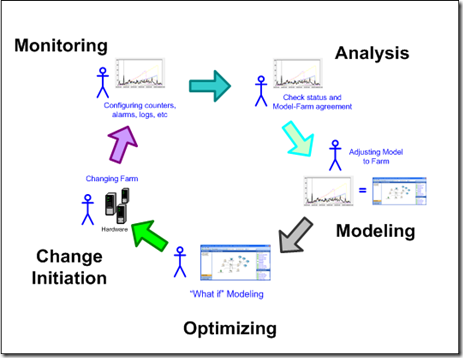

So how would we expect this to work in a SharePoint deployment situation? We show a more detailed diagram here:

In more detail:

Modeling – Here we model our installation – presumably using the demand model described below. For example the SharePoint Capacity Planner (SPCP) uses this model. This will give us a prediction as to what kind of resources we will need to host this demand. The result of this step is a model that we can use in the Optimizing step to make decisions. Green considerations (like the carbon footprint) should be part of this model.

Optimizing – The optimizing step is similar to the modeling step. In this step various assumptions and alternatives are considered using our model. At the end we have a configuration (possibly just a change to an existing config) that we want to implement.

Change Initiation – Perhaps the word “deployment” would fit better here. In this step the configurations that we decided on in the preceding step are implemented. This can be a very complicated step, but it is outside the scope of this document.

Monitoring – Once we are live we will start monitoring – collecting logs and performance counters, monitoring event logs, and other relevant artifacts.

Analysis – In the analysis phase we take the artifacts gathered in the Monitoring phase and analyze them to find problems and evaluate the quality of the deployment. At this point we will normally have at least some divergences from what our model predicted. So in the next step we will need to update and adjust our model to reflect this.

Note that there is a key difference between the first time we hit the Modeling step and every later time. The first time, we are operating with no existing deployment data and can use our model “as-is”. In the second and subsequent rounds, we need to adjust the model to reflect reality.
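The difference between the first round and later rounds can be sketched in a few lines. This is only an illustration under simplifying assumptions: a single lumped cost per request, a measurement taken at the same request rate the model assumed, and invented numbers throughout. The function names are hypothetical.

```python
def predict_utilization(requests_per_sec: float, cpu_sec_per_request: float,
                        total_cores: int) -> float:
    """Predicted CPU utilization for a given demand and core count."""
    return requests_per_sec * cpu_sec_per_request / total_cores


def calibrate_cost(cpu_sec_per_request: float, predicted: float,
                   measured: float) -> float:
    """Scale the assumed per-request cost by how far off the prediction was."""
    return cpu_sec_per_request * (measured / predicted)


# Round 1: no deployment data yet, so the model is used "as-is".
cost = 0.05      # assumed CPU-seconds per request (hypothetical)
cores = 8
predicted = predict_utilization(100, cost, cores)

# Monitoring and Analysis: suppose the counters show a busier farm than
# predicted at the same 100 requests/sec (made-up measurement).
measured = 0.75

# Round 2 and later: adjust the model to reflect reality before reusing it.
cost = calibrate_cost(cost, predicted, measured)
recheck = predict_utilization(100, cost, cores)
print(f"round 1 predicted {predicted:.1%}, measured {measured:.1%}, "
      f"calibrated model now predicts {recheck:.1%}")
```

The point is only that the Analysis step feeds a correction back into the model's parameters, so the next Modeling and Optimizing steps start from measured behavior rather than from the original assumptions.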

The Reality

There is a SharePoint Capacity Planning (SPCP) model used in the field for SharePoint 2007, and it is often used in the first cycle of planning. However, the model has fundamental shortcomings when we attempt to use it in more than one cycle of planning. If there are differences between what the model predicted and what we measured, then we need to adjust (or calibrate) the model. Some common reasons for such differences are:

1. The user workload is different than anticipated.

2. The hardware that is being used behaves differently than the model predicts.

3. The actual transaction costs are different because of customization.

4. The actual transaction costs are different because the product has evolved.

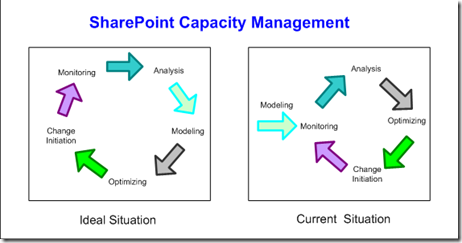

Only the first point here can be directly addressed by SPCP (assuming we know how to actually measure the workload). However the other three are fixed - SPCP does not make provisions for adjusting or calibrating those values and we cannot use the model in this cycle. So we end up with a truncated Capacity Management Cycle as follows:

The end result is that modeling is performed only in the “planning phase”, and this sets us up for “The Abyss”, described in the following section. While we do have a continually improving process (our deployments get better over time), it is a truncated one, because the model must be left out of the cycle: the model’s predictions cannot improve to match our experience. A particularly insidious result is that our future planning does not benefit from this experience.

It is possible to design a model without these shortcomings. We will talk about how to do this in future posts.
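As a rough illustration of what "without these shortcomings" could mean (not necessarily the design the later posts describe), imagine a model in which each of the four sources of divergence listed above is an explicit, adjustable parameter. Everything in this sketch is hypothetical: the class, the field names, the transaction types, and the numbers.

```python
from dataclasses import dataclass, field


@dataclass
class CalibratableModel:
    # 1. User workload: requests/sec per transaction type.
    workload: dict
    # Baseline CPU-seconds per request, per transaction type.
    base_cost: dict
    # 2. Hardware: speed relative to the reference hardware (1.0 = as assumed).
    hardware_speed: float = 1.0
    # 3. Customization: per-transaction cost multipliers (missing key = 1.0).
    customization: dict = field(default_factory=dict)
    # 4. Product evolution: global cost multiplier for the installed version.
    product_factor: float = 1.0

    def cpu_demand(self) -> float:
        """CPU-seconds needed per second of wall-clock time (busy cores)."""
        total = 0.0
        for name, rate in self.workload.items():
            cost = self.base_cost[name] * self.customization.get(name, 1.0)
            total += rate * cost
        return total * self.product_factor / self.hardware_speed


model = CalibratableModel(
    workload={"browse": 80.0, "search": 20.0},
    base_cost={"browse": 0.02, "search": 0.10},
)
as_planned = model.cpu_demand()

# After analysis: suppose a custom web part doubles the cost of browsing.
model.customization["browse"] = 2.0
recalibrated = model.cpu_demand()
print(f"planned: {as_planned:.1f} busy cores, recalibrated: {recalibrated:.1f}")
```

With a model shaped like this, a divergence found during Analysis can be attributed to one of the four parameters and fed back in, so the model stays in the cycle instead of being discarded after the first round.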

Notes: Most of this text was written in early 2009, and some things have changed since then:

- The importance of lifecycle capacity management is recognized by the PG and some new features in 2010 support this (partly).

- Additionally, SPCP has been withdrawn from support without a substitute. This is a shame; I think they should have fixed it instead of killing it. But maybe it is an opportunity for someone.

- Some people argue that this does not matter, that the model is simply a starting point. However, I think it does matter, and I explain why in the next post.