Virtual Machine Provisioning

| Caution |

| Test the script(s), processes and/or data file(s) thoroughly in a test environment, and customize them to meet the requirements of your organization before attempting to use them in a production capacity. (See the legal notice here) |

Note: The workflow sample mentioned in this article can be downloaded from the Opalis project on CodePlex: https://opalis.codeplex.com

Overview

The Virtual Machine Provisioning workflow is a process shell that provides the core structure typical for provisioning processes. The workflow includes examples of how the following provisioning features could be implemented:

Request Driven Provisioning – The workflow uses an intermediate database for the registration of new requests. External systems (such as a Service Catalog or a Service Desk) only need to register new approved requests in this database in order for the workflow to process them.

Billing – The workflow allows the registration of customers by email domain. Individual customers have a “balance” and different sized VMs can have different costs associated with them. When a VM request is received, the workflow checks to make sure the customer has funding sufficient to pay for the VM. If not, they are notified of insufficient funds.

SLA Driven Provisioning – Different customers can have different SLA levels associated with them (“Gold”, “Silver”, “Bronze”, etc.). These SLA levels can drive the provisioning process: customers with lower-level SLAs can be provisioned into VM clusters with higher occupancy levels (i.e. more heavily leveraged environments), while customers with higher SLA levels might be provisioned into less heavily leveraged systems or dedicated environments.

Capacity Driven Provisioning – Cluster Capacity is evaluated prior to selecting the target for the VM. This permits the VM to be created in such a way as to optimize cluster resources aligned with the aforementioned customer SLAs.

Service Desk Synchronization – Just because a process is automated doesn’t mean it’s not subject to governance and control requirements. Requests can be registered and tracked in an external Service Desk. This means that the provisioning process can be governed just like any other IT process. It also means that the status of current and past provisioning requests can be viewed in the Service Desk platform just as if it were any other type of request processed by IT.

VM Decommissioning – VMs are registered in a CMDB and an expiration date is flagged. When VMs “expire” they are decommissioned. An external “renewal process” (not part of the workflow) could be used to bump the expiry date, perhaps for an additional charge. This is an enhancement to the billing feature that provides sustained accountability for VMs, addressing issues such as so-called “VM sprawl”.

The Virtual Machine Provisioning sample consists of three sub-folders of Opalis workflows:

“0. Setup” – This folder contains the basic workflows needed to configure the sample to work in a typical install of Opalis. This includes the creation of a Provisioning Database with three key tables (Requests, Customers and Inventory tables). Additionally there is a workflow to populate the tables with demo data. Finally, there is a workflow to generate a “sample” request that can be used as demo/test data or as a sample of how one could inject a request from an external system.

“1. Virtual Machine Provisioning” – These are the primary top-line workflows, of which there are three. The first monitors the Requests database table for new VM creation requests and initiates processing when one is received. The second processes requests. The third is a “scrubbing” workflow that periodically checks the Inventory table for provisioned and registered VMs that have exceeded their expiration date and retires them.

“2. Child Workflows” – These are the secondary modular workflows. Most of these are stub workflows designed for workflow authors to customize the provisioning process to meet their specific needs. That is to say, these workflows provide a place for authors to add interaction with their Service Desk, Virtualization Platform, Service Catalog, etc. The workflows provide the basic plumbing to support the features of the top-line processes, but don’t actually make use of any Opalis Integration Packs.
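To make the Provisioning Database from the “0. Setup” folder more concrete, here is a minimal sketch of the three tables and of how an external system might inject a request. It is an illustration under stated assumptions: SQLite stands in for MS SQL Server so the example is self-contained, and every column name, the status value and the sample data are hypothetical, since the sample's actual schema is not described here.

```python
import sqlite3

# Hypothetical schema: the article names the three tables but not their
# columns, so every column below is an assumption for illustration.
SCHEMA = """
CREATE TABLE Requests (
    id INTEGER PRIMARY KEY AUTOINCREMENT,
    customer_email TEXT NOT NULL,
    request_type TEXT NOT NULL,
    status TEXT NOT NULL DEFAULT 'New'
);
CREATE TABLE Customers (
    email_domain TEXT PRIMARY KEY,
    sla_level TEXT NOT NULL,
    balance REAL NOT NULL
);
CREATE TABLE Inventory (
    vm_id TEXT PRIMARY KEY,
    customer_email TEXT NOT NULL,
    registered_at TEXT NOT NULL,
    expires_at TEXT NOT NULL,
    expired INTEGER NOT NULL DEFAULT 0
);
"""


def create_provisioning_db(path=":memory:"):
    """Create the three provisioning tables in a fresh database."""
    conn = sqlite3.connect(path)
    conn.executescript(SCHEMA)
    return conn


def register_request(conn, customer_email, request_type):
    """Insert an approved request the way an external system might."""
    cur = conn.execute(
        "INSERT INTO Requests (customer_email, request_type) VALUES (?, ?)",
        (customer_email, request_type),
    )
    conn.commit()
    return cur.lastrowid


conn = create_provisioning_db()
request_id = register_request(conn, "alice@contoso.example", "Small Windows VM")
```

The point of the design is that a Service Catalog or Service Desk only needs write access to one table; everything after the insert is handled by the workflows.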

Setup

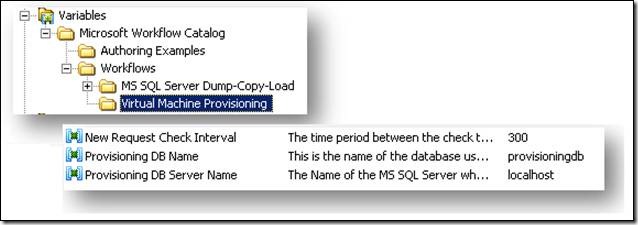

There are two steps required to prepare the Virtual Machine Provisioning sample. First, one must edit the Opalis Variables for the solution to provide details on the environment where it is to be run. Then one must run an Opalis Workflow to create the required database and tables the solution uses to keep track of Requests, Customers and Inventory.

The required variables can be found in the Opalis Designer under “Variables:Microsoft Workflow Catalog:Virtual Machine Provisioning”. There are three variables that must be configured.

“New Request Check Interval” – This is the time period between subsequent checks of the Requests database table, in seconds. A value of 300 (the default) means that every 5 minutes the sample checks the Requests table to see if new VMs have been requested and, if so, processes these requests. While it is possible to use very small values for this interval, it’s recommended that the initial value be roughly half the time required to provision a single VM. For example, if it takes 10 minutes to provision a single VM, then set the initial value to 5 minutes (300 seconds). One can lower or raise this value based on individual results. The recommendation is only offered as a starting point for tuning the sample.

“Provisioning DB Name” – This is the name of the database where the provisioning tables will be created. There is no reason to change the value from the default (“provisoningdb”), but likewise there is no reason why a different value cannot be entered. Any non-default value must be a database name permissible in MS SQL Server.

“Provisioning DB Server Name” – This is the name of the MS SQL Server database server where the provisioning database will be created. The Opalis Action server must be able to resolve the name of the database server and also have network connectivity. Also, the sample uses MS SQL Server Windows Authentication. Hence the Opalis Action Server service must be configured to use a login ID that has permissions to create/drop/read/write (database owner) to a database on the target server. MS SQL Server authentication could also be used, but one would have to modify the workflow to make the change in authentication mechanisms.
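The rule of thumb for “New Request Check Interval” can be written down as a small helper. This is only a sketch of the arithmetic described above; the function name is hypothetical and nothing like it ships with the sample.

```python
def initial_check_interval(provision_seconds: int) -> int:
    """Suggest a starting "New Request Check Interval" in seconds.

    Follows the rule of thumb above: roughly half the time it takes to
    provision a single VM. Treat the result as a starting point for tuning.
    """
    if provision_seconds <= 0:
        raise ValueError("provision_seconds must be positive")
    return max(1, provision_seconds // 2)


# A 10-minute provisioning time suggests the default of 300 seconds.
interval = initial_check_interval(10 * 60)
```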

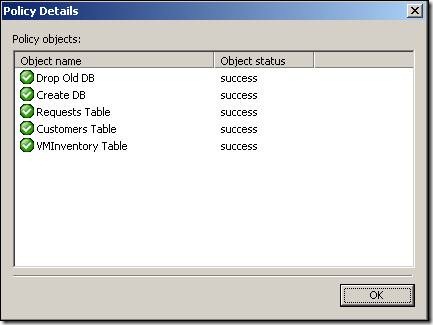

Once the three Variables have been configured, one can run the “0. Setup” policy found in the Virtual Machine Provisioning workflow folder. Note: This workflow first drops the database if it already exists. If the sample is already installed and in use, back up the existing database before re-running the setup, or its contents will be lost. This workflow can be run from the Opalis Designer by selecting the workflow and clicking the run button.

Verify the workflow ran properly by looking at the Log History that will eventually appear below the workflow itself. Double-clicking the log entry brings up the activity-by-activity status. Successful execution of each step will show a series of success icons next to the workflow activity names.

Solution Reset and Demo Data

Contained in the “0. Setup” folder are two workflows designed to support the VM Provisioning Sample:

“ 2. Reset and Create Sample Data” – This workflow truncates the Provisioning Database data tables and repopulates the Customers table with sample test data for a handful of fictitious customers.

“3. Create Sample Request” – This workflow injects a request into the Provisioning Database Requests table as if a user had just requested that a VM be created. The details of the VM request align with the test customer data that can be created using “2. Reset and Create Sample Data”. It’s intended to provide a way to test the sample but can also be used to give a live demonstration of the sample.

These workflows can be run from the Opalis Designer by selecting the workflow and clicking the ![clip_image006[1] clip_image006[1]](https://msdntnarchive.blob.core.windows.net/media/TNBlogsFS/BlogFileStorage/blogs_technet/opalis/WindowsLiveWriter/VirtualMachineProvisioning_B4CF/clip_image006%5B1%5D_thumb.jpg) button. Verify they ran properly by looking at the Log History that will eventually appear below the workflow itself. Double-clicking the log entry brings up the activity-by-activity status. Successful execution of each step will show a series of ![clip_image010[1] clip_image010[1]](https://msdntnarchive.blob.core.windows.net/media/TNBlogsFS/BlogFileStorage/blogs_technet/opalis/WindowsLiveWriter/VirtualMachineProvisioning_B4CF/clip_image010%5B1%5D_thumb.jpg) icons next to the workflow activity names.

Top-Level Workflows

There are three top-level workflows that can be found in the “1. Virtual Machine Provisioning” folder:

“1. Monitor for New Requests” – This workflow is designed to run persistently, checking for new requests at the polling interval defined in the Variable “New Request Check Interval”. When new entries are found it reviews the request, verifies the customer has the funds required to create the VM, charges the customer and initiates the creation of the VM. It will launch multiple provisioning processes if there are multiple requests. It works in a “fire and forget” fashion, meaning it launches the provisioning process without waiting for it to finish. This means the actual provisioning process itself is responsible for indicating whether it has succeeded or failed. Think of this workflow as a “request engine” whose sole function is to qualify requests and package them up so they can be processed.

“2. Process Requests” – This workflow processes a single request and only runs when it’s called by a parent process (i.e. it is not persistent). It is called by the “1. Monitor for New Requests” workflow once for each new request discovered in a given polling interval.

“3. Decommission Expired Virtual Machines” – This workflow is persistent. It checks on a regular basis for VMs in the Inventory table that have exceeded their expiration date. VMs that have expired can be decommissioned. Note: An external process could be provided that would “bump” the expiration date, such as a regular billing process or a process involving business case justification to retain the resource. Such processes would be external to this sample.

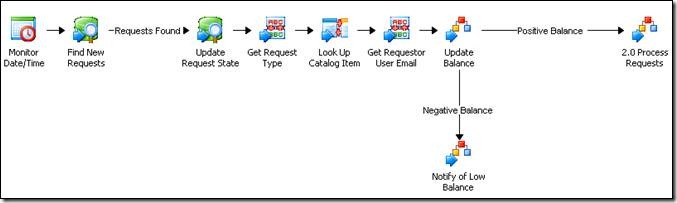

“1. Monitor for New Requests”

This workflow is meant to run persistently. Based on the time interval defined by the Variable “New Request Check Interval” (default = 300 seconds/5 minutes), this workflow checks to see if new entries have been created in the Provisioning Database Requests table. New entries are updated to indicate that they are being processed (so they aren’t re-processed the next time the policy runs).
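The “claim then process” step described above can be sketched as follows. This is a minimal illustration, not the workflow's actual query: SQLite stands in for MS SQL Server, and the column names and the 'New'/'Processing' status values are assumptions.

```python
import sqlite3


def claim_new_requests(conn):
    """Return new requests and flag them so the next poll skips them."""
    rows = conn.execute(
        "SELECT id, customer_email, request_type FROM Requests "
        "WHERE status = 'New' ORDER BY id"
    ).fetchall()
    for request_id, _email, _request_type in rows:
        conn.execute(
            "UPDATE Requests SET status = 'Processing' WHERE id = ?",
            (request_id,),
        )
    conn.commit()
    return rows


# Hypothetical Requests table with two pending entries.
conn = sqlite3.connect(":memory:")
conn.execute(
    "CREATE TABLE Requests ("
    "id INTEGER PRIMARY KEY, customer_email TEXT, "
    "request_type TEXT, status TEXT DEFAULT 'New')"
)
conn.execute(
    "INSERT INTO Requests (customer_email, request_type) "
    "VALUES ('alice@contoso.example', 'Small Windows VM')"
)
conn.execute(
    "INSERT INTO Requests (customer_email, request_type) "
    "VALUES ('bob@fabrikam.example', 'Large Linux VM')"
)

first_poll = claim_new_requests(conn)   # picks up both requests
second_poll = claim_new_requests(conn)  # nothing left to claim
```

Because the rows are flagged before any provisioning starts, a slow provisioning run cannot cause the same request to be picked up again on the next interval.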

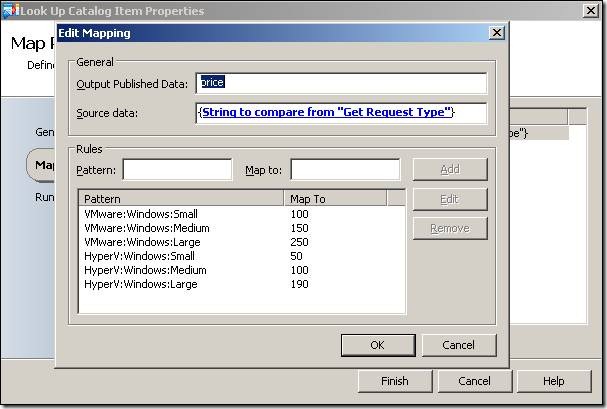

The workflow looks up the type of VM the customer has ordered and charges their account for the VM cost. Different sized VMs have different costs associated with them. The “Get Request Type” activity in the middle of the workflow is a “Map Published Data” foundation activity that is essentially a mini-product catalog that maps incoming request types to VM attributes and costs. This “Map Published Data” activity could be replaced with a CMDB lookup or some other data table. The values shown here are for illustrative purposes and are designed to support the sample. One could change the cost of the VMs by editing the data table values. Notice the VM type, OS Platform and VM Size are all specified by a colon delimited list. This would be used later in the workflow to determine aspects of how the provisioning is performed.

Note: What constitutes a “Small”, “Medium” or “Large” VM is defined in a different workflow (the “Check Capacity” child workflow described later in this document).
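The mini-product catalog idea behind “Get Request Type” can be sketched as a lookup table whose values carry a colon-delimited attribute list and a cost. The request names, attribute values and costs below are invented for illustration; only the colon-delimited shape (VM type, OS platform, VM size) comes from the description above.

```python
from typing import NamedTuple


class CatalogEntry(NamedTuple):
    vm_type: str
    os_platform: str
    vm_size: str
    cost: float


# Hypothetical catalog: request names, attributes and costs are made up.
CATALOG = {
    "Small Windows VM": ("Standard:Windows:Small", 100.0),
    "Medium Windows VM": ("Standard:Windows:Medium", 200.0),
    "Large Linux VM": ("Standard:Linux:Large", 400.0),
}


def get_request_type(request_type: str) -> CatalogEntry:
    """Map an incoming request type to its VM attributes and cost."""
    attributes, cost = CATALOG[request_type]
    vm_type, os_platform, vm_size = attributes.split(":")
    return CatalogEntry(vm_type, os_platform, vm_size, cost)


entry = get_request_type("Large Linux VM")
```

Replacing the dictionary with a CMDB query would leave the callers unchanged, which is the same substitution the article suggests for the “Map Published Data” activity.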

“2. Process Requests”

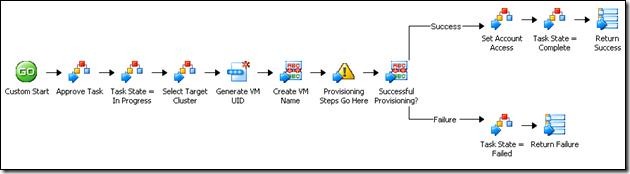

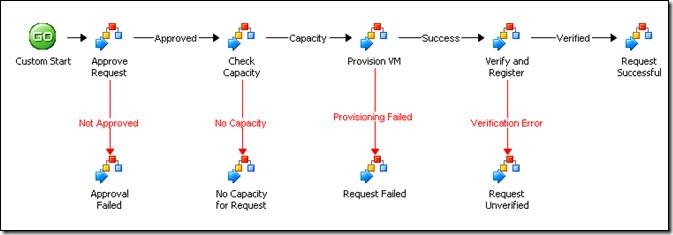

The top-level provisioning workflow can be found in the “1. Virtual Machine Provisioning” folder. It defines a 5-phase provisioning process. Each phase is typical for most provisioning scenarios, however not all implementations will require all phases. Some implementations will require additional phases. The intent of the sample is to provide a typical sample pattern that can be used as a starting point.

The phases provided for in the sample are:

Approve Request – Perform the actions required to gain approval of the request. In most cases, because this is an automated process with key controls in place, these requests are pre-approved. Sometimes this pre-approval actually needs to be logged in a Service Desk solution (i.e. although it’s pre-approved, it still needs to be marked as approved for governance and compliance reasons). Note: All requests are assumed to have been paid for (i.e. the customer has sufficient balance in their account to fund the creation of the VM). Billing is dealt with in the “1. Monitor for New Requests” workflow and not in the “2. Process Requests” workflow. Essentially, VM creation requests that cannot be funded don’t reach the “2. Process Requests” workflow. They are flagged and stopped in the “1. Monitor for New Requests” workflow.

Check Capacity – This is the phase where the VM environment is tested to validate that there is sufficient capacity to process the request. This involves first identifying the customer’s SLA and the environment into which their VM will be provisioned. Then the environment must be queried to determine where the VM is to be provisioned.

Provision VM – The actual provisioning steps take place here. Once the workflow reaches this point it has a funded and qualified request. All VM creation (cloning, etc.) would need to be contained within this phase.

Verify and Register – This phase queries the newly created VM and verifies that it matches the request from the customer, essentially validating that the customer is getting the VM they are paying for. This phase also registers the newly created asset in the VM Inventory. One could extend this policy to register the VM in a CMDB (or modify the workflow to replace the VM Inventory with a CMDB entirely). This phase sets the expiration date for the VM in the VM Inventory table, which is the date used to expire the VM when it reaches the end of its life and hasn’t been renewed.

Request Successful – This final phase is where change records would be closed out, customers notified, etc. It is the final phase of the provisioning process.

Note: Error handling (the red arrows in the top-line workflow) is all done by a single error handler process. The information about the error is passed to the Trigger of the error handler. The error handler workflow (a child workflow) currently only produces Opalis Platform Events, however it can be modified to provide a wide range of responses to error conditions based on the context of an exception (for example, take different actions based on the message text of the error.)
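The shape of this workflow, five phases in sequence with every failure routed to one error handler, can be sketched in a few lines. This is an illustrative analogy only: the function names are hypothetical, the stub stands in for the stub child workflows, and the list of strings stands in for Opalis Platform Events.

```python
from typing import Callable, Dict, List, Tuple

Request = Dict[str, str]
Phase = Tuple[str, Callable[[Request], None]]


def handle_error(phase_name: str, error: Exception, events: List[str]) -> None:
    """Single error handler; here it only records an event message."""
    events.append(f"{phase_name} failed: {error}")


def process_request(request: Request, phases: List[Phase], events: List[str]) -> bool:
    """Run each phase in order; stop and report on the first failure."""
    for name, run_phase in phases:
        try:
            run_phase(request)
        except Exception as error:
            handle_error(name, error, events)
            return False
    return True


def stub(request: Request) -> None:
    """Placeholder phase that succeeds without doing anything."""


def failing_capacity_check(request: Request) -> None:
    raise RuntimeError("no cluster has capacity")


PHASES: List[Phase] = [
    ("Approve Request", stub),
    ("Check Capacity", stub),
    ("Provision VM", stub),
    ("Verify and Register", stub),
    ("Request Successful", stub),
]

events: List[str] = []
ok = process_request({"id": "1"}, PHASES, events)

# Swap in a failing phase to see the error path.
broken = [(n, failing_capacity_check if n == "Check Capacity" else f) for n, f in PHASES]
failed = process_request({"id": "2"}, broken, events)
```

Passing the phase name and exception to the handler mirrors the way error details are passed to the Trigger of the error handler workflow, so the handler can branch on context later.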

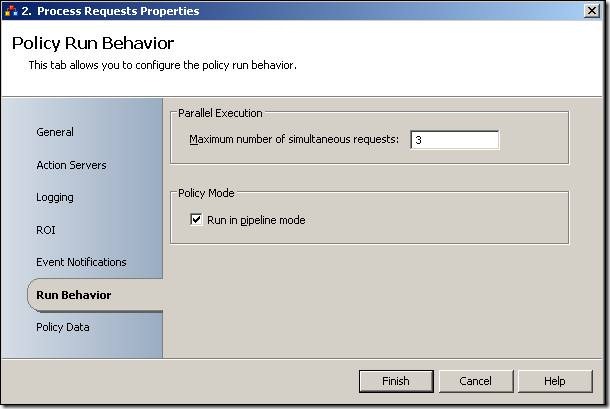

Each execution of this workflow processes a single request for a single VM. Multiple executions can run in parallel, allowing multiple requests for multiple VMs to be processed at once. Right-clicking on the workflow tab “2. Process Requests” provides the Properties page for the workflow where, under “Run Behavior”, one can define the number of concurrent VMs/Requests the sample can process in parallel. A value of “3”, for example, would permit the solution to process up to three VM provisioning processes in parallel. This value can be tuned to meet a given implementation’s requirements (anticipated volume, etc.).
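The effect of a concurrency limit of 3 can be illustrated with a bounded worker pool. This is an analogy rather than how Opalis implements “Run Behavior”: the class and its names are hypothetical, and the short sleep merely stands in for provisioning work.

```python
import threading
import time
from concurrent.futures import ThreadPoolExecutor

MAX_CONCURRENT_REQUESTS = 3  # mirrors the example "Run Behavior" value of 3


class RequestProcessor:
    """Processes requests and records the peak number running at once."""

    def __init__(self) -> None:
        self._lock = threading.Lock()
        self._running = 0
        self.peak_running = 0

    def process(self, request_id: int) -> int:
        with self._lock:
            self._running += 1
            self.peak_running = max(self.peak_running, self._running)
        time.sleep(0.05)  # placeholder for the real provisioning work
        with self._lock:
            self._running -= 1
        return request_id


processor = RequestProcessor()
# Eight requests arrive, but at most three are processed at any moment.
with ThreadPoolExecutor(max_workers=MAX_CONCURRENT_REQUESTS) as pool:
    completed = list(pool.map(processor.process, range(8)))
```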

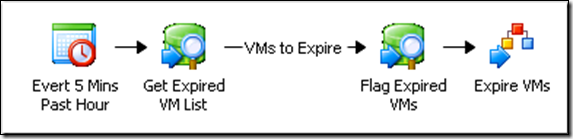

“3. Decommission Expired Virtual Machines”

This is a very simple workflow. Every 5 minutes (configurable if you edit the first activity) this workflow checks to see if VMs in the VM Inventory table have expired. An expired VM is one that has exceeded its retention period. VMs that have expired are flagged as such and a workflow is triggered that “Expires” the VM. Exactly what “expired” means is entirely up to the needs of a given implementation. In some cases the workflow could simply notify the user of the expiration and bump the expiration flag and date (essentially creating a “nag” email that reminds users of the existence of VMs). Customers could be re-billed and their expiration date extended. Reports could be generated. There are many possibilities, depending on how one wants the VM aging process to work.

This workflow is meant to run persistently, meaning it runs all the time. It is configured to run at 5 minutes past the hour, however the time can be changed by editing the first activity. It was set to run just past the top of the hour to avoid the effect of potentially colliding with other processes that run at the top of the hour.
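The expiry check itself amounts to a scan of the inventory for records past their expiration date that have not yet been flagged. The sketch below uses an in-memory list in place of the VM Inventory table; the record fields are assumptions, not the sample's actual column names.

```python
from dataclasses import dataclass
from datetime import datetime
from typing import List


@dataclass
class InventoryRecord:
    vm_id: str
    expires_at: datetime
    expired: bool = False


def flag_expired_vms(inventory: List[InventoryRecord], now: datetime) -> List[InventoryRecord]:
    """Flag VMs past their expiration date and return the newly flagged ones."""
    newly_expired = []
    for record in inventory:
        if not record.expired and record.expires_at < now:
            record.expired = True
            newly_expired.append(record)
    return newly_expired


inventory = [
    InventoryRecord("vm-001", datetime(2024, 1, 1)),
    InventoryRecord("vm-002", datetime(2024, 6, 1)),
]
now = datetime(2024, 3, 1)
first_pass = flag_expired_vms(inventory, now)   # vm-001 has expired
second_pass = flag_expired_vms(inventory, now)  # already flagged, nothing new
```

Each record returned by the first pass is where an “Expire VM” step would be triggered; flagging the record first keeps a VM from being expired twice.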

Child Workflows

There are 12 child workflows that support the top-line processes. Most of these workflows are process stubs, meaning that they don’t actually do anything other than return a successful status back to the parent. The intent is to provide an area where workflow authors can add their own steps into these child workflows to build out their own provisioning processes unique to their environment. Some of the workflows provide additional features, such as interacting with the billing tables, qualifying provisioning requests to prepare for a capacity check, etc. In most cases a “Send Platform Event” Foundation Activity is used to indicate where authors should insert their own workflow steps:

Approve Request

This is a workflow stub. It is intended to provide an area where an author can interact with a Service Desk or similar system to log the receipt of the request and self-approve its fulfillment.

Approve Task

This is a workflow stub. It is intended to provide an area where an author can interact with a Service Desk or similar system to log individual steps in a provisioning process. Many Service Desk solutions break change records into individual sequences of Tasks (sometimes called “Activities”) and these must be executed in sequence for a change to properly execute. As the provisioning process executes, this child workflow stub is called at points where one might want to log change task completion in a Service Desk.

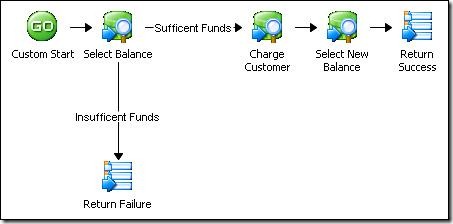

Check and Update Customer Balance

This workflow looks at the email address of a customer and charges their account a certain amount for a VM request. The return amount is the new balance. If it’s negative then there were not sufficient funds for the transaction.
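The balance check can be sketched as a single function that returns the would-be balance. Two things here are assumptions: customers are keyed by email domain (as the billing feature in the overview suggests), and the charge is only committed when the resulting balance is non-negative. The function name and sample figures are hypothetical.

```python
from typing import Dict


def check_and_update_balance(balances: Dict[str, float], email: str, cost: float) -> float:
    """Charge a customer for a VM and return the new balance.

    A negative return value means the funds were insufficient; in this
    sketch the stored balance is left untouched in that case (an assumption).
    """
    domain = email.rsplit("@", 1)[-1].lower()
    new_balance = balances[domain] - cost
    if new_balance >= 0:
        balances[domain] = new_balance
    return new_balance


balances = {"contoso.example": 250.0}
funded = check_and_update_balance(balances, "alice@contoso.example", 100.0)
unfunded = check_and_update_balance(balances, "alice@contoso.example", 400.0)
```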

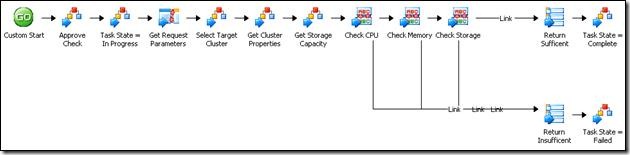

Check Capacity

This workflow looks at the VM request and collects the metrics it needs to evaluate capacity. It calls a number of child workflows including “Select Target Cluster” (to get the cluster a customer would be associated with), “Get Cluster Properties” and “Get Storage Capacity”. It then verifies there is sufficient capacity to fill the request.
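As a rough sketch of the final verification, the check compares what the requested VM size needs against what the cluster and storage report as free. The size definitions, metric names and numbers below are all hypothetical; in the sample, the inputs would come from the “Get Cluster Properties” and “Get Storage Capacity” stubs once an author fills them in.

```python
from dataclasses import dataclass
from typing import Dict, NamedTuple


class VmSize(NamedTuple):
    cpus: int
    memory_gb: int
    disk_gb: int


# Hypothetical definitions of the three VM sizes.
VM_SIZES: Dict[str, VmSize] = {
    "Small": VmSize(cpus=1, memory_gb=2, disk_gb=40),
    "Medium": VmSize(cpus=2, memory_gb=4, disk_gb=80),
    "Large": VmSize(cpus=4, memory_gb=8, disk_gb=160),
}


@dataclass
class ClusterProperties:
    free_cpus: int
    free_memory_gb: int


def has_capacity(vm_size: str, cluster: ClusterProperties, free_storage_gb: int) -> bool:
    """Return True if the cluster and storage can hold a VM of this size."""
    needed = VM_SIZES[vm_size]
    return (
        cluster.free_cpus >= needed.cpus
        and cluster.free_memory_gb >= needed.memory_gb
        and free_storage_gb >= needed.disk_gb
    )


cluster = ClusterProperties(free_cpus=2, free_memory_gb=6)
medium_fits = has_capacity("Medium", cluster, free_storage_gb=100)
large_fits = has_capacity("Large", cluster, free_storage_gb=100)
```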

Configure Account Access

This is a stub process. It’s intended to permit steps to be inserted into the provisioning process that would set up an initial login account/password for the VM.

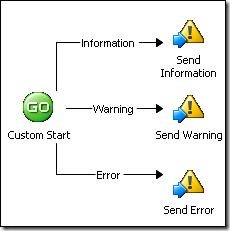

Error Processing

This workflow is used for error processing for the entire sample. When there is a problem this workflow is called and an Opalis Platform Event is sent. This could be augmented to provide alternate notification mechanisms, opening new tickets in a Service Desk, or engaging some sort of remediation process.

Expire VM

This is a process stub. One would put the steps required to expire a VM, such as shutting it down, taking a snapshot, performing a backup, etc.

Get Cluster Properties

This is a process stub. One would put the steps required to collect the performance metrics associated with a VM cluster.

Get Storage Capacity

This is a process stub. One would put the steps required to collect information from a storage subsystem to determine if there is enough disk space for the new VM to be created.

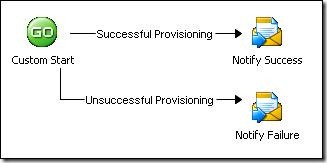

Notify Customer via Email

This is a simple workflow that tells the customer their VM is ready and provides the access information. One will need to edit the “Send Email” activities before this workflow will function properly. This sends email via the SMTP protocol.

Provision VM

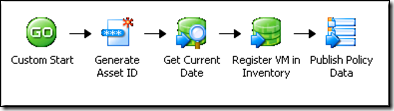

This workflow generates a unique ID for a VM and provides a “holding area” for workflow activities that actually create the requested VM. One can delete the Opalis Platform Event (yellow triangle) in the workflow and paste VM Creation activities to take its place.

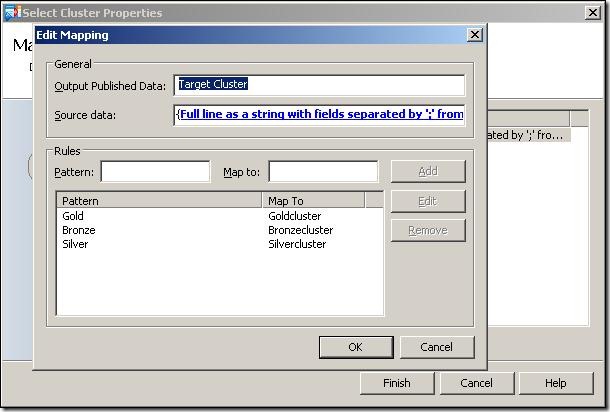

Select Target Cluster

This workflow queries the Customer database and determines the SLA level for a given customer’s email address. Then a cluster is selected for this customer via the “Map Published Data” Foundation Activity labeled “Select Cluster” in the workflow. The cluster name is determined by the SLA Level, and one can edit the “Select Cluster” activity to change the names of the clusters associated with specific SLA levels:

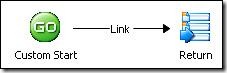
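The two lookups in “Select Target Cluster” can be sketched as a pair of tables: customer to SLA level, then SLA level to cluster. The customer domains and cluster names below are invented, and keying customers by email domain is an assumption; only the SLA level names come from the overview.

```python
# Hypothetical customer data: email domain -> SLA level.
SLA_BY_DOMAIN = {
    "contoso.example": "Gold",
    "fabrikam.example": "Bronze",
}

# Hypothetical cluster names, one per SLA level.
CLUSTER_BY_SLA = {
    "Gold": "cluster-dedicated",
    "Silver": "cluster-shared-a",
    "Bronze": "cluster-shared-b",
}


def select_target_cluster(email: str) -> str:
    """Look up the customer's SLA level, then the cluster for that level."""
    domain = email.rsplit("@", 1)[-1].lower()
    sla_level = SLA_BY_DOMAIN[domain]
    return CLUSTER_BY_SLA[sla_level]


gold_cluster = select_target_cluster("alice@contoso.example")
bronze_cluster = select_target_cluster("bob@fabrikam.example")
```

Renaming a cluster then only touches the second table, which corresponds to editing the “Select Cluster” activity.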

Update Request State

This is a process stub. It could be used to update the status of a Request (the original customer’s request) to a state such as “In Progress” or “Completed”. This could update a Service Desk or perhaps a web portal. It’s designed to provide a place where workflow authors can add the steps required to keep their customers up-to-date about the state of their request for a new VM.

Update Task State

This is a process stub related to the aforementioned “Approve Task” child workflow. It could be used to update the status of a Task (or “Activity”) to a state such as “In Progress” or “Completed”. It’s designed to provide a place for people to add workflow activities needed to interact with a Service Desk or like system.

Verify and Register Asset

This workflow is designed to allow workflow authors to add activities to discover and verify that the VM was properly provisioned. It also registers the VM in the VM Inventory table of the Provisioning database. The VM registration is time-stamped so the VM can be expired after a certain period of time.
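The time-stamped registration can be sketched as follows. The 30-day retention period, the field names and the use of a dictionary in place of the VM Inventory table are all assumptions made for illustration.

```python
from datetime import datetime, timedelta
from typing import Dict

RETENTION_PERIOD = timedelta(days=30)  # hypothetical retention period


def register_vm(
    inventory: Dict[str, dict],
    vm_id: str,
    customer_email: str,
    registered_at: datetime,
    retention: timedelta = RETENTION_PERIOD,
) -> dict:
    """Record a provisioned VM with its registration and expiration times."""
    record = {
        "vm_id": vm_id,
        "customer_email": customer_email,
        "registered_at": registered_at,
        "expires_at": registered_at + retention,
    }
    inventory[vm_id] = record
    return record


inventory: Dict[str, dict] = {}
record = register_vm(
    inventory, "vm-001", "alice@contoso.example", datetime(2024, 3, 1, 9, 0)
)
```

An external renewal process would only need to move `expires_at` forward to keep the VM from being picked up by the decommissioning workflow.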
