Microsoft Infrastructure as a Service Virtualization Platform Foundations

This document discusses the virtualization platform infrastructure components that are relevant for a Microsoft IaaS infrastructure and provides guidelines and requirements for building an IaaS virtualization platform infrastructure using Microsoft products and technologies.

Table of Contents (for this article)

2 Virtualization Platform Architecture

2.1 Virtualization Platform Features

2.2 Hyper-V Guest Virtual Machine Design

This document is part of the Microsoft Infrastructure as a Service Foundations series. The series includes the following documents:

Chapter 1: Microsoft Infrastructure as a Service Foundations

Chapter 2: Microsoft Infrastructure as a Service Compute Foundations

Chapter 3: Microsoft Infrastructure as a Service Network Foundations

Chapter 4: Microsoft Infrastructure as a Service Storage Foundations

Chapter 5: Microsoft Infrastructure as a Service Virtualization Platform Foundations (this article)

Chapter 6: Microsoft Infrastructure as a Service Design Patterns–Overview

Chapter 7: Microsoft Infrastructure as a Service Foundations—Converged Architecture Pattern

Chapter 8: Microsoft Infrastructure as a Service Foundations-Software Defined Architecture Pattern

Chapter 9: Microsoft Infrastructure as a Service Foundations-Multi-Tenant Designs

For more information about the Microsoft Infrastructure as a Service Foundations series, please see Chapter 1: Microsoft Infrastructure as a Service Foundations.

Contributors:

Adam Fazio – Microsoft

David Ziembicki – Microsoft

Joel Yoker – Microsoft

Artem Pronichkin – Microsoft

Jeff Baker – Microsoft

Michael Lubanski – Microsoft

Robert Larson – Microsoft

Steve Chadly – Microsoft

Alex Lee – Microsoft

Yuri Diogenes – Microsoft

Carlos Mayol Berral – Microsoft

Ricardo Machado – Microsoft

Sacha Narinx – Microsoft

Tom Shinder – Microsoft

Jim Dial – Microsoft

Applies to:

Windows Server 2012 R2

System Center 2012 R2

Windows Azure Pack – October 2014 feature set

Microsoft Azure – October 2014 feature set

Document Version:

1.0

1 Introduction

The goal of the Infrastructure-as-a-Service (IaaS) Foundations series is to help enterprise IT and cloud service providers understand, develop, and implement IaaS infrastructures. This series provides comprehensive conceptual background, a reference architecture and a reference implementation that combines Microsoft software, consolidated guidance, and validated configurations with partner technologies such as compute, network, and storage architectures, in addition to value-added software features.

The IaaS Foundations Series utilizes the core capabilities of the Windows Server operating system, Hyper-V, System Center, Windows Azure Pack and Microsoft Azure to deliver on-premises and hybrid cloud Infrastructure as a Service offerings.

As part of Microsoft IaaS Foundations series, this document discusses the virtualization platform infrastructure components that are relevant to a Microsoft IaaS infrastructure and provides guidelines and requirements for building a virtualization platform infrastructure using Microsoft products and technologies. These components can be used to compose an IaaS solution based on private clouds, public clouds (for example, in a hosting service provider environment) or hybrid clouds. Each major section of this document will include sub-sections on private, public and hybrid infrastructure elements. Discussions of public cloud components are scoped to Microsoft Azure services and capabilities.

2 Virtualization Platform Architecture

2.1 Virtualization Platform Features

2.1.1 Hyper-V Host and Guest Scale-Up

Hyper-V in Windows Server 2012 R2 supports:

- A host system that has up to 320 logical processors

- A host system that has up to 4 terabytes (TB) of physical memory

- Virtual machines with up to 64 virtual processors and up to 1 TB of memory

- Up to 8,000 virtual machines on a 64-node failover cluster
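As a quick planning aid, the following Python sketch checks proposed configuration values against the maximums listed above. The dictionary keys and the `violations` function are hypothetical names used only for illustration, and 1 TB is treated as 1,024 GB.

```python
# Windows Server 2012 R2 Hyper-V maximums, as listed above (hypothetical key names).
LIMITS = {
    "host_logical_processors": 320,
    "host_memory_gb": 4 * 1024,
    "vm_virtual_processors": 64,
    "vm_memory_gb": 1 * 1024,
    "cluster_nodes": 64,
    "cluster_vms": 8000,
}


def violations(proposed: dict[str, int]) -> list[str]:
    """Return the names of any proposed values that exceed the limits above."""
    return [name for name, value in proposed.items() if value > LIMITS[name]]


print(violations({"vm_virtual_processors": 24, "vm_memory_gb": 64}))    # []
print(violations({"vm_virtual_processors": 96, "vm_memory_gb": 2048}))
# ['vm_virtual_processors', 'vm_memory_gb']
```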

2.1.2 Hyper-V over SMB 3.0

By enabling Hyper-V to use SMB file shares for VHDs, you have an option that is simple to provision with support for CSV, inexpensive to deploy, and offers performance capabilities and features that rival those available with Fibre Channel SANs. SMB 3.0 can be leveraged for SQL Server and Hyper-V workloads.

Hyper-V over SMB requires:

- One or more computers running Windows Server 2012 R2 with the Hyper-V and File and Storage Services roles installed.

- A common Active Directory infrastructure. (The servers that are running AD DS do not need to be running Windows Server 2012 R2.)

- Failover clustering on the Hyper-V side, on the File and Storage Services side, or both.

Hyper-V over SMB supports a variety of flexible configurations that offer several levels of capabilities and availability, which include single-node, dual-node, and multiple-node file server modes.

2.1.3 Virtual Machine Mobility

Hyper-V live migration makes it possible to move running virtual machines from one physical host to another with no effect on the availability of virtual machines to users. Hyper-V in Windows Server 2012 R2 introduces faster and simultaneous live migration inside or outside a clustered environment.

In addition to providing live migration in the most basic of deployments, this functionality facilitates more advanced scenarios, such as performing a live migration of a virtual machine between multiple, separate clusters to balance loads across an entire data center.

Live migrations can now use higher network bandwidths (up to 10 Gbps) to complete faster, and you can perform multiple simultaneous live migrations to move many virtual machines quickly.

2.1.3.1 Hyper-V Live Migration

Hyper-V in Windows Server 2012 R2 lets you perform live migrations outside a failover cluster. The two scenarios for this are:

- Shared storage-based live migration. In this instance, the VHD of each virtual machine is stored on a CSV or on a central SMB file share, and live migration occurs over TCP/IP or the SMB transport. You perform a live migration of the virtual machines from one server to another while their storage remains on the CSV or SMB file share.

- “Shared-nothing” live migration. In this case, the live migration of a virtual machine from one non-clustered Hyper-V host to another begins when the hard drive storage of the virtual machine is mirrored to the destination server over the network. Then you perform the live migration of the virtual machine to the destination server while it continues to run and provide network services.

In Windows Server 2012 R2, improvements have been made to live migration, including:

- Live migration compression. To reduce the total time of live migration on a system that is network constrained, Windows Server 2012 R2 provides performance improvements by compressing virtual machine memory data before it is sent across the network. This approach utilizes available CPU resources on the host to reduce the network load.

- SMB Multichannel. Systems that have multiple network connections between them can use SMB Multichannel to spread live migration traffic across multiple network adapters simultaneously and achieve higher total throughput.

- SMB Direct-based live migration. Live migrations that use SMB over RDMA-capable adapters use SMB Direct to transfer virtual machine data, which provides higher bandwidth, multichannel support, hardware encryption, and reduced CPU utilization during live migrations.

- Cross-version live migration. Live migration from servers running Hyper-V in Windows Server 2012 to servers running Hyper-V in Windows Server 2012 R2 is supported, which allows upgrades with zero downtime for the virtual machines. This applies only in that direction: down-level live migrations (moving from Hyper-V in Windows Server 2012 R2 to a previous version of Hyper-V) are not supported.
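To reason about how compression and SMB Multichannel shorten a migration, the following Python sketch estimates the time to send a virtual machine's memory across the network once. It is a deliberately simplified model: the compression ratio and link efficiency are hypothetical inputs rather than measured Hyper-V values, traffic is assumed to spread evenly across adapters, and memory pages that change during the migration (which must be re-sent) are ignored, so real migrations take longer.

```python
def estimate_copy_seconds(
    memory_gb: float,
    link_gbps: float,
    adapters: int = 1,
    compression_ratio: float = 1.0,
    efficiency: float = 0.8,
) -> float:
    """Estimate seconds to send a VM's memory once over the network.

    Assumptions (hypothetical, for illustration only):
    - SMB Multichannel spreads traffic evenly across `adapters` links.
    - Compression shrinks the data by `compression_ratio` (2.0 = half size).
    - `efficiency` is the fraction of raw link speed actually achieved.
    - Memory pages dirtied during the copy are not re-sent.
    """
    bits_to_send = memory_gb * 8 * 1024**3 / compression_ratio
    usable_bits_per_second = link_gbps * 1e9 * adapters * efficiency
    return bits_to_send / usable_bits_per_second


# A 64 GB VM over one 10 Gbps link, versus two links with 2:1 compression.
print(round(estimate_copy_seconds(64, 10), 1))                                   # 68.7
print(round(estimate_copy_seconds(64, 10, adapters=2, compression_ratio=2.0), 1))  # 17.2
```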

2.1.4 Storage Migration

Windows Server 2012 R2 supports live storage migration, which lets you move VHDs that are attached to a virtual machine that is running. When you have the flexibility to manage storage without affecting the availability of your virtual machine workloads, you can perform maintenance on storage subsystems, upgrade storage appliance firmware and software, and balance loads while the virtual machine is in use.

Windows Server 2012 R2 provides the flexibility to move VHDs on shared and non-shared storage subsystems if an SMB network shared folder on Windows Server 2012 R2 is visible to both Hyper-V hosts.

2.1.5 Hyper-V Virtual Switch

The Hyper-V virtual switch in Windows Server 2012 R2 is a Layer 2 virtual switch that provides programmatically managed and extensible capabilities to connect virtual machines to the physical network. The Hyper-V virtual switch is an open platform that lets multiple vendors provide extensions.

You can manage the Hyper-V virtual switch and its extensions by using Windows PowerShell, programmatically by using WMI, or through the Hyper-V Manager graphical interface.

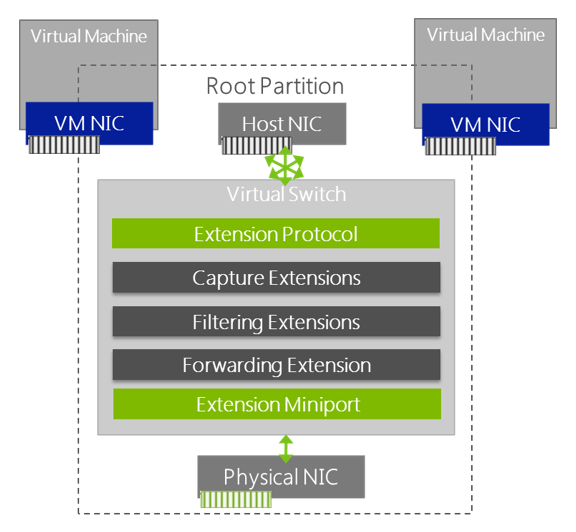

The Hyper-V virtual switch is a module that runs in the root (also referred to as parent or host) partition of Windows Server 2012 R2. The switch module can create multiple virtual switch extensions per host. All virtual switch policies—including QoS, VLAN, and ACLs—are configured per virtual network adapter.

Any policy that is configured on a virtual network adapter is preserved during a virtual machine state transition, such as a live migration. Each virtual switch port can connect to one virtual network adapter. In the case of an external virtual port, it can connect to a single physical network adapter on the Hyper-V server or to a NIC team.

The table below lists the various types of Hyper-V virtual switch extensions.

| Extension | Purpose | Potential examples | Extensibility component |
|---|---|---|---|
| Network Packet Inspection | Inspect network packets without changing them | sFlow and network monitoring | NDIS filter driver |
| Network Packet Filter | Inject, modify, and drop network packets | Security | NDIS filter driver |
| Network Forwarding | Non-Microsoft forwarding that bypasses default forwarding | Cisco Nexus 1000V, OpenFlow, Virtual Ethernet Port Aggregator (VEPA), and proprietary network fabrics | NDIS filter driver |
| Firewall/Intrusion Detection | Filter and modify TCP/IP packets, monitor or authorize connections, filter IPsec-protected traffic, and filter RPCs | Virtual firewall and connection monitoring | WFP callout driver |

Only one forwarding extension can be installed per virtual switch (overriding the default switching of the Hyper-V virtual switch), although multiple capture and filtering extensions can be installed. In addition, monitoring extensions let you gather statistical data by monitoring traffic at different layers of the switch. Multiple monitoring and filtering extensions can be supported at the ingress and egress portions of the Hyper-V virtual switch. The figure below shows the architecture of the Hyper-V virtual switch and the extensibility model.

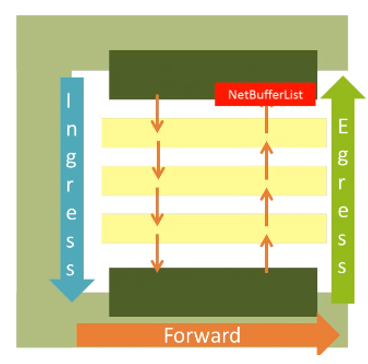

The Hyper-V virtual switch data path is bidirectional, which allows all extensions to see the traffic as it enters and exits the virtual switch. The NDIS send path is used as the ingress data path, while the NDIS receive path is used for egress traffic. Between ingress and egress, forwarding of traffic occurs by the Hyper-V virtual switch or by a forwarding extension.
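The extension rules and bidirectional data path described above can be modeled in a few lines of Python. This is a conceptual sketch, not the NDIS or WFP programming model: the class, method, and extension names are hypothetical, and it assumes the extension stack is ordered capture, then filtering, then forwarding on the ingress path, with the egress path traversing the same extensions in reverse.

```python
from dataclasses import dataclass, field
from typing import Callable, Iterable, Optional

# A handler receives a packet (modeled as a dict) and returns it, possibly
# modified, or returns None to drop it.
Handler = Callable[[dict], Optional[dict]]
ORDER = ("capture", "filtering", "forwarding")


@dataclass
class VirtualSwitchModel:
    """Conceptual model of the extensible switch; not the NDIS/WFP API."""

    extensions: list = field(default_factory=list)  # (name, kind, handler)

    def add_extension(self, name: str, kind: str, handler: Handler) -> None:
        if kind not in ORDER:
            raise ValueError(f"unknown extension type: {kind}")
        if kind == "forwarding" and any(k == "forwarding" for _, k, _ in self.extensions):
            raise ValueError("only one forwarding extension is allowed per switch")
        self.extensions.append((name, kind, handler))
        # Keep the stack ordered: capture, then filtering, then forwarding.
        self.extensions.sort(key=lambda ext: ORDER.index(ext[1]))

    @staticmethod
    def _run(packet: dict, stack: Iterable) -> Optional[dict]:
        for _name, _kind, handler in stack:
            packet = handler(packet)
            if packet is None:  # an extension dropped the packet
                return None
        return packet

    def ingress(self, packet: dict) -> Optional[dict]:
        # NDIS send path: capture -> filtering -> forwarding.
        return self._run(packet, self.extensions)

    def egress(self, packet: dict) -> Optional[dict]:
        # NDIS receive path: the same extensions in reverse order.
        return self._run(packet, reversed(self.extensions))


seen = []

def monitor(packet):
    seen.append(packet["dst"])  # capture extension: inspect, never modify
    return packet

def block_telnet(packet):
    return None if packet["port"] == 23 else packet  # filtering extension

switch = VirtualSwitchModel()
switch.add_extension("block-telnet", "filtering", block_telnet)
switch.add_extension("monitor", "capture", monitor)
print(switch.ingress({"dst": "vm1", "port": 443}))  # {'dst': 'vm1', 'port': 443}
print(switch.ingress({"dst": "vm2", "port": 23}))   # None (dropped by the filter)
print(seen)                                          # ['vm1', 'vm2']
```

Because the capture extension sits above the filter on ingress, it observes both packets, including the one the filter later drops.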

Windows Server 2012 R2 provides Windows PowerShell cmdlets for the Hyper-V virtual switch that let you build command-line tools or automated scripts for setup, configuration, monitoring, and troubleshooting. Windows PowerShell also helps non-Microsoft vendors build tools to manage their Hyper-V virtual switch.

2.1.5.1 Monitoring/Port Mirroring

Many physical switches can monitor the traffic that flows through specific ports. The Hyper-V virtual switch also provides port mirroring, which lets you designate which virtual ports should be monitored and to which virtual port the monitored traffic should be directed for further processing.

For example, a security-monitoring virtual machine can look for anomalous patterns in the traffic that flows through other virtual machines on the switch. In addition, you can diagnose network connectivity issues by monitoring traffic that is bound for a particular virtual switch port.

2.1.6 Virtual Fibre Channel

Windows Server 2012 R2 provides virtual Fibre Channel ports within the Hyper-V guest operating system. This lets you connect to Fibre Channel directly from virtual machines when virtualized workloads have to connect to existing storage arrays. This protects your investments in Fibre Channel, lets you virtualize workloads that use direct access to Fibre Channel storage, lets you cluster guest operating systems over Fibre Channel, and offers an important new storage option for servers that are hosted in your virtualization infrastructure.

Fibre Channel in Hyper-V requires:

- One or more installations of Windows Server 2012 R2 with the Hyper-V role installed. Hyper-V requires a computer with processor support for hardware virtualization.

- A computer that has one or more Fibre Channel host bus adapters (HBAs), each of which has an updated HBA driver that supports virtual Fibre Channel. Updated HBA drivers are included with the HBA drivers for some models.

- Virtual machines that are configured to use a virtual Fibre Channel adapter, which must use Windows Server 2012 R2, Windows Server 2012, Windows Server 2008 R2, or Windows Server 2008 as the guest operating system.

- Connection only to data logical unit numbers (LUNs). Storage that is accessed through a virtual Fibre Channel that is connected to a LUN cannot be used as boot media.

Virtual Fibre Channel for Hyper-V provides the guest operating system with unmediated access to a SAN by using a standard World Wide Port Name (WWPN) that is associated with a virtual machine HBA. Hyper-V users can use Fibre Channel SANs to virtualize workloads that require direct access to SAN LUNs. Virtual Fibre Channel also allows you to operate in advanced scenarios, such as running the Windows failover clustering feature inside the guest operating system of a virtual machine that is connected to shared Fibre Channel storage.

Midrange and high-end storage arrays are capable of advanced storage functionality that helps offload certain management tasks from the hosts to the SANs. Virtual Fibre Channel presents an alternate hardware-based I/O path to the virtual hard disk stack in Windows software. This allows you to use the advanced functionality that is offered by your SANs directly from virtual machines running Hyper-V.

For example, you can use Hyper-V to offload storage functionality (such as taking a snapshot of a LUN) on the SAN hardware by using a hardware Volume Shadow Copy Service (VSS) provider from within a virtual machine running Hyper-V.

Virtual Fibre Channel for Hyper-V guest operating systems uses the existing N_Port ID Virtualization (NPIV) T11 standard to map multiple virtual N_Port IDs to a single physical Fibre Channel N_Port. A new NPIV port is created on the host each time a virtual machine that is configured with a virtual HBA is started. When the virtual machine stops running on the host, the NPIV port is removed.
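The NPIV lifecycle described above (one physical N_Port carrying several virtual N_Port IDs, each created when a virtual machine with a virtual HBA starts and removed when it stops) can be sketched as follows. The class, its methods, and the WWPN values are hypothetical illustrations; they are not the Hyper-V or HBA driver API.

```python
class PhysicalNPort:
    """Toy model of a physical Fibre Channel N_Port hosting NPIV ports."""

    def __init__(self, wwpn: str):
        self.wwpn = wwpn
        self.npiv_ports: dict[str, str] = {}  # VM name -> virtual WWPN

    def vm_started(self, vm: str, virtual_wwpn: str) -> None:
        # A new NPIV port is created each time a VM with a virtual HBA starts.
        if virtual_wwpn in self.npiv_ports.values():
            raise ValueError(f"WWPN {virtual_wwpn} is already in use")
        self.npiv_ports[vm] = virtual_wwpn

    def vm_stopped(self, vm: str) -> None:
        # When the VM stops running on this host, its NPIV port is removed.
        self.npiv_ports.pop(vm, None)


port = PhysicalNPort("20:00:00:00:00:00:00:01")
port.vm_started("sql01", "c0:03:ff:00:00:00:00:10")
port.vm_started("sql02", "c0:03:ff:00:00:00:00:12")
port.vm_stopped("sql01")
print(port.npiv_ports)  # {'sql02': 'c0:03:ff:00:00:00:00:12'}
```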

2.1.7 VHDX

Hyper-V in Windows Server 2012 R2 supports the updated VHD format (called VHDX) that has much larger capacity and built-in resiliency. The principal features of the VHDX format are:

- Support for virtual hard disk storage capacity of up to 64 TB

- Additional protection against data corruption during power failures by logging updates to the VHDX metadata structures

- Improved alignment of the virtual hard disk format to work well on large sector physical disks
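Alignment matters because a write that does not start and end on a physical sector boundary forces the storage to read, modify, and rewrite whole sectors. The following Python sketch (the function name is hypothetical) reports how many 4 KB physical sectors a write touches and whether it would need such a read-modify-write cycle.

```python
def sectors_touched(offset: int, length: int, sector: int = 4096) -> tuple[int, bool]:
    """Return (physical sectors touched, needs read-modify-write); length > 0."""
    first = offset // sector
    last = (offset + length - 1) // sector
    aligned = offset % sector == 0 and length % sector == 0
    return last - first + 1, not aligned


print(sectors_touched(8192, 4096))  # (1, False): exactly one whole sector
print(sectors_touched(512, 4096))   # (2, True): straddles two sectors
```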

The VHDX format also has the following features:

- Larger block sizes for dynamic and differential disks, which allows these disks to attune to the needs of the workload

- Four-kilobyte (4 KB) logical sector virtual hard disk that allows for increased performance when it is used by applications and workloads that are designed for 4 KB sectors

- Efficiency in representing data (called “trim”), which results in smaller file size and allows the underlying physical storage device to reclaim unused space. (Trim requires directly attached storage or SCSI disks and trim-compatible hardware.)

- The virtual hard disk size can be increased or decreased through the user interface while the virtual hard disk is in use, provided that it is attached to a SCSI controller.

- Shared access by multiple virtual machines to support guest clustering scenarios.

2.1.8 Guest Non-Uniform Memory Access (NUMA)

Hyper-V in Windows Server 2012 R2 supports non-uniform memory access (NUMA) in a virtual machine. NUMA refers to a computer memory design that is used in multiprocessor systems in which the memory access time depends on the memory location relative to the processor.

By using NUMA, a processor can access local memory (memory that is attached directly to the processor) faster than it can access remote memory (memory that is local to another processor in the system). Modern operating systems and high-performance applications (such as SQL Server) have developed optimizations to recognize the NUMA topology of the system, and they consider NUMA when they schedule threads or allocate memory to increase performance.

Projecting a virtual NUMA topology in a virtual machine provides optimal performance and workload scalability in large virtual machine configurations. It does so by letting the guest operating system and applications (such as SQL Server) utilize their inherent NUMA performance optimizations. The default virtual NUMA topology that is projected into a Hyper-V virtual machine is optimized to match the NUMA topology of the host.
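As a simplified illustration of how a virtual machine's size relates to the host topology, the following Python sketch assumes that each virtual NUMA node may hold at most as many virtual processors and as much memory as one host NUMA node, and computes how many virtual NUMA nodes a virtual machine would span. The function name and the example host are hypothetical, and Hyper-V's actual per-node limits can be adjusted per virtual machine.

```python
import math


def virtual_numa_nodes(vm_vcpus: int, vm_memory_gb: int,
                       host_node_lps: int, host_node_memory_gb: int) -> int:
    """Number of virtual NUMA nodes needed so no node exceeds one host node."""
    by_cpu = math.ceil(vm_vcpus / host_node_lps)
    by_memory = math.ceil(vm_memory_gb / host_node_memory_gb)
    return max(by_cpu, by_memory)


# Hypothetical host: 2 NUMA nodes, each with 12 logical processors and 96 GB.
print(virtual_numa_nodes(8, 32, 12, 96))    # 1: fits in one node
print(virtual_numa_nodes(24, 64, 12, 96))   # 2: vCPUs span both nodes
print(virtual_numa_nodes(4, 160, 12, 96))   # 2: memory spans both nodes
```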

2.1.9 Dynamic Memory

Dynamic Memory, which was introduced in Windows Server 2008 R2 SP1, helps you use physical memory more efficiently. By using Dynamic Memory, Hyper-V treats memory as a shared resource that can be automatically reallocated among running virtual machines. Dynamic Memory adjusts the amount of memory that is available to a virtual machine, based on changes in memory demand and on the values that you specify.

In Windows Server 2012 R2, Dynamic Memory has a new configuration item called “minimum memory.” Minimum memory lets Hyper-V reclaim the unused memory from the virtual machines. This can result in increased virtual machine consolidation numbers, especially in VDI environments.

In Windows Server 2012 R2, Dynamic Memory for Hyper-V allows for the following:

- Configuration of a lower minimum memory for virtual machines to provide an effective restart experience.

- Increased maximum memory and decreased minimum memory on virtual machines that are running. You can also increase the memory buffer.

Windows Server 2012 introduced Hyper-V Smart Paging for robust restart of virtual machines. Although minimum memory increases virtual machine consolidation numbers, it also brings a challenge. If a virtual machine has a smaller amount of memory than its startup memory and it is restarted, Hyper-V needs additional memory to restart the virtual machine.

Because of host memory pressure or the states of the virtual machines, Hyper-V might not always have additional memory available, which can cause sporadic virtual machine restart failures in Hyper-V environments. Hyper-V Smart Paging bridges the memory gap between minimum memory and startup memory to let virtual machines restart more reliably.
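The relationship between minimum memory, startup memory, and Smart Paging can be sketched with simple arithmetic. The following Python example is a simplified model with hypothetical function names: the target adds the memory buffer as a percentage on top of current demand and is clamped between minimum and maximum memory, and Smart Paging is assumed to cover only the part of startup memory that the host cannot supply during a restart.

```python
def dynamic_memory_target(demand_mb: int, buffer_pct: int,
                          minimum_mb: int, maximum_mb: int) -> int:
    """Memory to aim for: demand plus buffer, clamped to [minimum, maximum]."""
    target = demand_mb * (100 + buffer_pct) // 100
    return max(minimum_mb, min(target, maximum_mb))


def smart_paging_mb(startup_mb: int, assigned_mb: int, host_free_mb: int) -> int:
    """Memory Smart Paging backs with disk during a restart (0 if none needed)."""
    shortfall = max(0, startup_mb - assigned_mb)  # extra memory needed to restart
    return max(0, shortfall - host_free_mb)


# An idle VM with 512 MB minimum, 1024 MB startup, and 8192 MB maximum memory.
assigned = dynamic_memory_target(demand_mb=400, buffer_pct=20,
                                 minimum_mb=512, maximum_mb=8192)
print(assigned)                                           # 512 (raised to the minimum)
print(smart_paging_mb(1024, assigned, host_free_mb=256))  # 256
```

In this model, the host can supply only 256 MB of the 512 MB needed to restart the virtual machine, so Smart Paging temporarily bridges the remaining 256 MB.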

2.1.10 Virtual Machine Replication

You can use failover clustering to create highly available virtual machines, but this does not protect your company from an entire data center outage if you do not use hardware-based SAN replication across your data centers. Hyper-V Replica fills an important need by providing a failure recovery solution for an entire site, down to a single virtual machine.

Hyper-V Replica is closely integrated with Windows failover clustering, and it provides asynchronous, unlimited replication of your virtual machines over a network link from one Hyper-V host at a primary site to another Hyper-V host at a replica site, without relying on storage arrays or other software replication technologies.

In Windows Server 2012 R2, Hyper-V Replica supports replication between a source and target server running Hyper-V, and it can support extending replication from the target server to a third server (known as extended replication). The servers can be physically co-located or geographically separated.

In addition, Hyper-V Replica tracks the write operations on the primary virtual machine and replicates these changes to the replica server efficiently over a WAN. The network connection between servers uses the HTTP or HTTPS protocol.

Finally, Hyper-V Replica supports Windows integrated authentication for domain members and certificate-based authentication for non-domain members. Connections that are configured to use Windows integrated authentication are not encrypted by default. For an encrypted connection, use certificate-based authentication. Hyper-V Replica provides support for replication frequencies of 15 minutes, 5 minutes, or 30 seconds.

In Windows Server 2012 R2, you can access recovery points up to 24 hours old.
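The replication settings above lend themselves to a quick configuration check. The following Python sketch (the constant and function names are hypothetical) accepts only the three supported replication frequencies and up to 24 hours of recovery point history. It returns the replication interval as a lower bound on worst-case data loss, because asynchronous replication can lose any changes made since the last completed cycle.

```python
SUPPORTED_FREQUENCIES_S = {30, 5 * 60, 15 * 60}  # 30 seconds, 5 and 15 minutes
MAX_RECOVERY_HISTORY_H = 24


def validate_replica(frequency_s: int, history_h: int) -> int:
    """Validate settings; return the minimum worst-case data loss in seconds."""
    if frequency_s not in SUPPORTED_FREQUENCIES_S:
        raise ValueError(f"unsupported replication frequency: {frequency_s} s")
    if not 0 <= history_h <= MAX_RECOVERY_HISTORY_H:
        raise ValueError(f"recovery history must be 0-{MAX_RECOVERY_HISTORY_H} hours")
    # Asynchronous replication: changes since the last cycle can be lost.
    return frequency_s


print(validate_replica(300, 24))  # 300
```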

2.1.11 Resource Metering

Hyper-V in Windows Server 2012 R2 supports resource metering, which is a technology that helps you track historical data on the use of virtual machines and gain insight into the resource use of specific servers. In Windows Server 2012 R2, resource metering provides accountability for CPU and network usage, memory, and storage IOPS.

You can use this data to perform capacity planning, monitor consumption by business units or customers, or capture data that is necessary to help redistribute the costs of running a workload. You could also use the information that this feature provides to help build a billing solution, so that you can charge customers of your hosting services appropriately for their usage of resources.
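As an illustration of building a billing solution on top of resource metering, the following Python sketch turns usage figures for one billing period into a charge. The fields loosely mirror the kinds of data that resource metering collects (processor, memory, storage, and network usage), but the record structure, field names, and rates are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class MeteringSample:
    """Hypothetical per-VM usage over one billing period."""
    vm: str
    hours: float
    avg_cpu_mhz: float
    avg_memory_mb: float
    disk_gb: float
    network_gb: float


# Hypothetical rates, for illustration only.
RATES = {"cpu_ghz_hour": 0.02, "memory_gb_hour": 0.01, "disk_gb": 0.05, "network_gb": 0.08}


def charge(s: MeteringSample) -> float:
    cpu = s.avg_cpu_mhz / 1000 * s.hours * RATES["cpu_ghz_hour"]
    memory = s.avg_memory_mb / 1024 * s.hours * RATES["memory_gb_hour"]
    disk = s.disk_gb * RATES["disk_gb"]
    network = s.network_gb * RATES["network_gb"]
    return round(cpu + memory + disk + network, 2)


sample = MeteringSample("web01", hours=720, avg_cpu_mhz=1500,
                        avg_memory_mb=4096, disk_gb=50, network_gb=100)
print(charge(sample))  # 60.9
```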

2.1.12 Enhanced Session Mode

Enhanced session mode allows redirection of devices, clipboard, and printers from clients that are using the Virtual Machine Connection tool. The enhanced session mode connection uses a Remote Desktop Connection session through the virtual machine bus (VMBus), which does not require an active network connection to the virtual machine as would be required for a traditional Remote Desktop Protocol connection.

Enhanced session mode connections provide additional capabilities beyond simple mouse, keyboard, and monitor redirection. They support capabilities that are typically associated with Remote Desktop Protocol sessions, such as display configuration, audio devices, printers, clipboard, smart cards, USB devices, drives, and supported Plug and Play devices.

Enhanced session mode requires a supported guest operating system such as Windows Server 2012 R2 or Windows 8.1, and it may require additional configuration inside the virtual machine. By default, enhanced session mode is disabled in Windows Server 2012 R2, but it can be enabled through the Hyper-V server settings.

2.2 Guest Virtual Machine Design

Standardization is a key tenet of private cloud architectures and virtual machines. A standardized collection of virtual machine templates can drive predictable performance and greatly improve capacity planning capabilities.

As an example, the following table illustrates the composition of a basic virtual machine template library. Note that this should not replace proper template planning by using System Center Virtual Machine Manager.

| Template | Specifications | Network | Operating system | Unit cost |
|---|---|---|---|---|
| Template 1 (Small) | 2 vCPU, 4 GB memory, 50 GB disk | VLAN 20 | Windows Server 2008 R2 | 1 |
| Template 2 (Medium) | 8 vCPU, 16 GB memory, 100 GB disk | VLAN 20 | Windows Server 2012 R2 | 2 |
| Template 3 (X-Large) | 24 vCPU, 64 GB memory, 200 GB disk | VLAN 20 | Windows Server 2012 R2 | 4 |
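A standardized template library also simplifies request handling, because each request can be mapped to the smallest template that satisfies it. The following Python sketch encodes the example templates from the table above, treating the last column (1, 2, 4) as a relative unit cost. The selection rule (lowest unit cost that meets every requirement) is an assumption for illustration, not a System Center Virtual Machine Manager feature.

```python
from typing import NamedTuple, Optional


class Template(NamedTuple):
    name: str
    vcpus: int
    memory_gb: int
    disk_gb: int
    unit_cost: int


TEMPLATES = [
    Template("Small", 2, 4, 50, 1),
    Template("Medium", 8, 16, 100, 2),
    Template("X-Large", 24, 64, 200, 4),
]


def pick_template(vcpus: int, memory_gb: int, disk_gb: int) -> Optional[Template]:
    """Return the cheapest template that meets all requirements, or None."""
    fits = [t for t in TEMPLATES
            if t.vcpus >= vcpus and t.memory_gb >= memory_gb and t.disk_gb >= disk_gb]
    return min(fits, key=lambda t: t.unit_cost, default=None)


print(pick_template(4, 8, 80).name)  # Medium
print(pick_template(32, 64, 100))    # None: no template is large enough
```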

2.2.1 Virtual Machine Storage

2.2.1.1 Dynamically Expanding Virtual Hard Disks

Dynamically expanding VHDs provide storage capacity as needed to store data. The size of the VHD file is small when the disk is created, and it grows as data is added to the disk. The size of the VHD file does not shrink automatically when data is deleted from the virtual hard disk; however, you can use the Edit Virtual Hard Disk Wizard to make the disk more compact and decrease the file size after data is deleted.
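The growth behavior described above can be modeled as block allocation. In the following Python sketch, the 32 MB block size is an assumption (block size is configurable), and the class and method names are hypothetical: writes allocate blocks in the file, deleting data inside the guest frees nothing on the host, and only an explicit compaction returns unused blocks. For simplicity, deletes free whole blocks and file metadata is ignored.

```python
MB = 1024 * 1024
BLOCK_SIZE = 32 * MB  # assumed block size for illustration


def block_range(offset: int, length: int) -> range:
    """Indexes of the blocks touched by a byte range (length > 0)."""
    return range(offset // BLOCK_SIZE, (offset + length - 1) // BLOCK_SIZE + 1)


class DynamicDisk:
    """Toy model of a dynamically expanding virtual hard disk file."""

    def __init__(self, virtual_size: int):
        self.virtual_size = virtual_size
        self.allocated: set[int] = set()  # blocks present in the file
        self.in_use: set[int] = set()     # blocks the guest still uses

    def write(self, offset: int, length: int) -> None:
        if offset + length > self.virtual_size:
            raise ValueError("write beyond the virtual disk size")
        blocks = block_range(offset, length)
        self.allocated.update(blocks)  # the file grows as new blocks are written
        self.in_use.update(blocks)

    def delete(self, offset: int, length: int) -> None:
        # The guest stops using these blocks, but the file does not shrink.
        self.in_use.difference_update(block_range(offset, length))

    def compact(self) -> None:
        # Models compaction (for example, through the Edit Virtual Hard Disk Wizard).
        self.allocated &= self.in_use

    @property
    def file_size(self) -> int:
        return len(self.allocated) * BLOCK_SIZE


disk = DynamicDisk(virtual_size=50 * 1024 * MB)  # a 50 GB virtual disk
disk.write(0, 100 * MB)        # blocks 0-3 are allocated
print(disk.file_size // MB)    # 128
disk.delete(64 * MB, 36 * MB)  # blocks 2-3 are no longer used by the guest
print(disk.file_size // MB)    # 128: deleting data does not shrink the file
disk.compact()
print(disk.file_size // MB)    # 64
```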

2.2.1.2 Fixed-Size Disks

Fixed-size VHDs provide storage capacity by using a VHDX file whose size is set to the capacity specified for the virtual hard disk when the disk is created. The size of the VHDX file remains fixed, regardless of the amount of data that is stored. However, you can use the Edit Virtual Hard Disk Wizard to increase the size of the virtual hard disk, which in turn increases the size of the VHDX file.

Because the full capacity is allocated when the disk is created, fragmentation at the host level is not an issue. However, fragmentation inside the VHDX itself must be managed within the guest.

2.2.1.3 Differencing Virtual Hard Disks

Differencing VHDs provide storage that lets you make changes to a parent VHD without changing the parent disk. The size of the VHD file for a differencing disk grows as changes are stored to the disk.
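A differencing disk behaves like a copy-on-write overlay: reads of unchanged blocks fall through to the parent, and writes land only in the differencing disk. The following Python sketch (hypothetical class names, block-granular for simplicity) shows why the parent stays unchanged and why the differencing file grows only with the changes.

```python
class ParentDisk:
    """Parent VHD, treated as read-only by its children."""

    def __init__(self, blocks: dict[int, bytes]):
        self.blocks = blocks

    def read(self, index: int) -> bytes:
        return self.blocks.get(index, b"\x00")


class DifferencingDisk:
    """The parent may be a ParentDisk or another DifferencingDisk (a chain)."""

    def __init__(self, parent):
        self.parent = parent
        self.changes: dict[int, bytes] = {}  # only modified blocks are stored

    def read(self, index: int) -> bytes:
        # Changed blocks come from this disk; everything else from the parent.
        if index in self.changes:
            return self.changes[index]
        return self.parent.read(index)

    def write(self, index: int, data: bytes) -> None:
        self.changes[index] = data  # the parent is never modified


base = ParentDisk({0: b"boot", 1: b"os"})
child = DifferencingDisk(base)
child.write(1, b"patched")
print(child.read(0), child.read(1))      # b'boot' b'patched'
print(base.read(1), len(child.changes))  # b'os' 1
```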

2.2.1.4 Pass-Through Disks

Pass-through disks allow Hyper-V virtual machine guests to directly access local disks or SAN LUNs that are attached to the physical server, without requiring the volume to be presented to the host server.

The virtual machine guest accesses the disk directly (by using the GUID of the disk) without having to use the file system of the host. Because the performance difference between fixed-size disks and pass-through disks is now negligible, the choice between them is mainly a matter of manageability.

For instance, a VHDX file that holds a very large volume (hundreds of gigabytes) is hardly portable, because copying it takes a long time. For a backup scheme with pass-through disks, the data can be backed up only from within the guest.

When you are using pass-through disks, no VHDX file is created because the LUN is used directly by the guest. Because there is no VHDX file, there is no dynamic sizing or snapshot capability.

Pass-through disks are not subject to migration with the virtual machine, and they are only applicable to standalone Hyper-V configurations. Therefore, they are not recommended for common, large scale Hyper-V configurations. In many cases, virtual Fibre Channel capabilities supersede the need to leverage pass-through disks when you are working with Fibre Channel SANs.

2.2.1.5 In-guest iSCSI Initiator

Guests can also use iSCSI storage by connecting directly to iSCSI LUNs through their own virtual network adapters. This is mainly used for access to large volumes on SANs to which the Hyper-V host is not connected, or for guest clustering. Guests cannot boot from iSCSI LUNs that are accessed through the virtual network adapters without utilizing an iSCSI initiator.

2.2.1.6 Storage Quality of Service

The storage Quality of Service (QoS) in Windows Server 2012 R2 provides the ability to specify a maximum input/output operations per second (IOPS) value for a virtual hard disk in Hyper-V. This prevents one tenant in a multitenant Hyper-V infrastructure from consuming excessive storage resources and impacting other tenants (a situation often referred to as the “noisy neighbor” problem).

Maximum and minimum values are specified in terms of normalized IOPS, where every 8 KB of data is counted as one I/O. Administrators can configure storage QoS for a virtual hard disk that is attached to a virtual machine. Configurations include setting maximum and minimum IOPS values for the virtual hard disk. Maximum values are enforced, and minimum values generate administrative notifications.
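The normalization rule can be shown with a short worked example. The following Python sketch counts one normalized I/O per 8 KB of data, assuming that a partial 8 KB unit rounds up so that every I/O counts at least once, and checks one second of activity against a maximum. The function names and the sample workload are hypothetical.

```python
import math

NORMALIZATION_BYTES = 8 * 1024  # every 8 KB of data counts as one normalized I/O


def normalized_ios(io_sizes: list[int]) -> int:
    """Normalized I/O count; assumes partial 8 KB units round up to one."""
    return sum(max(1, math.ceil(size / NORMALIZATION_BYTES)) for size in io_sizes)


def exceeds_maximum(io_sizes_per_second: list[int], maximum_iops: int) -> bool:
    return normalized_ios(io_sizes_per_second) > maximum_iops


# One second of I/O: 100 reads of 4 KB and 50 writes of 64 KB.
sample = [4 * 1024] * 100 + [64 * 1024] * 50
print(normalized_ios(sample))        # 500 (100 * 1 + 50 * 8)
print(exceeds_maximum(sample, 400))  # True: above a 400 IOPS maximum
```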

2.2.2 Virtual Machine Networking

Hyper-V guests support two types of virtual network adapters: synthetic and emulated.

- Synthetic adapters. These use the Hyper-V VMBus architecture and are high-performance, native devices. Synthetic devices require that the Hyper-V integration services are installed in the guest operating system.

- Emulated adapters. These are available to all guests, even if integration services are not available. They perform much more slowly, and they should be used only if synthetic devices are unavailable.

You can create many virtual networks on the server running Hyper-V to provide a variety of communications channels. For example, you can create virtual switches to provide the following types of communication:

- Private network. Provides communication between virtual machines only.

- Internal network. Provides communication between the host server and virtual machines.

- External network. Provides communication between a virtual machine and a physical network by creating an association to a physical network adapter on the host server.

2.2.3 Virtual Machine Compute

2.2.3.1 Virtual Machine Generations

In Windows Server 2012 R2, Hyper-V virtual machine generation is a new feature that determines the virtual hardware and functionality that is presented to the virtual machine. Hyper-V in Windows Server 2012 R2 supports the following two virtual machine generation types:

- Generation 1 virtual machines. These represent the same virtual hardware and functionality that was available in previous versions of Hyper-V, which provides BIOS-based firmware and a series of emulated devices.

- Generation 2 virtual machines. These provide the platform capability to support advanced virtual features. Generation 2 virtual machines remove legacy BIOS and emulated devices, which can potentially increase security and performance, and they provide a simplified virtual hardware model that includes Unified Extensible Firmware Interface (UEFI) firmware.

In generation 2 virtual machines, the simplified virtual hardware model is provided through the VMBus as synthetic devices (such as networking, storage, video, and graphics). This model supports Secure Boot, boot from a SCSI virtual hard disk or virtual DVD, and PXE boot by using a standard network adapter.

Support for several legacy devices is removed from generation 2 virtual machines, including Integrated Drive Electronics (IDE) and legacy network adapters. Generation 2 virtual machines are supported only with Windows Server 2012 R2, Windows Server 2012, and the 64-bit versions of Windows 8.1 and Windows 8 as guest operating systems.

2.2.3.2 Virtual Processors and Fabric Density

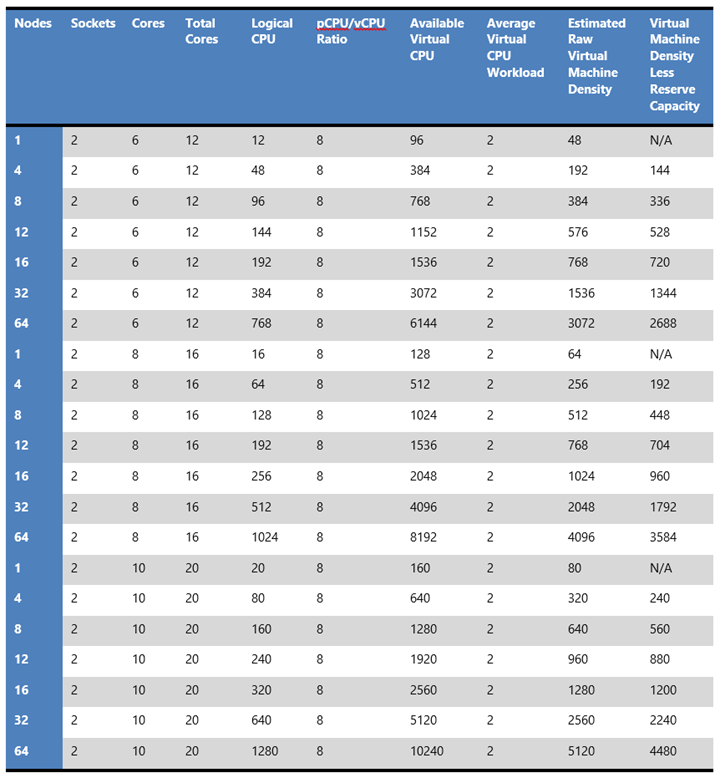

Providing sufficient CPU density is a matter of identifying the required number of physical cores to serve as virtual processors for the fabric. When performing capacity planning, plan against physical cores, not hyperthreads or simultaneous multithreading (SMT) threads. Although SMT can boost performance (approximately 10 to 20 percent), an SMT thread is not equivalent to a core.

A minimum of two CPU sockets is recommended for the cloud pattern configurations that are discussed in the patterns document. Combined with a minimum 6-core CPU model, this makes 12 physical cores per scale unit host available to the virtualization layer of the fabric.

Most modern server-class processors support a minimum of six cores, with some supporting up to 10 cores. Given the average virtual machine requirement of two virtual CPUs, a two-socket server with midrange six-core CPUs provides 12 physical cores. At virtual-to-physical processor ratios of 8:1 to 16:1, this provides a potential density of between 96 and 192 virtual CPUs (48 to 96 two-vCPU virtual machines) on a single host.

As an example, the table below outlines the estimated virtual machine density based on a two-socket, six-core processor that uses a predetermined processor ratio. Note that CPU ratio assumptions are highly dependent on workload analysis and planning, and they should be factored into any calculations. For this example, the processor ratio would have been defined through workload testing, and an estimation of potential density and required reserve capacity could then be calculated.
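The density figures for a two-socket, six-core host follow from simple arithmetic. The following Python sketch reproduces them, treating the 8:1 and 16:1 virtual-to-physical processor ratios (the ratios implied by 96 and 192 virtual CPUs on 12 cores) as inputs that would normally come from workload testing. The function name and the `reserve_pct` parameter for held-back capacity are hypothetical.

```python
def vm_density(sockets: int, cores_per_socket: int, vcpu_ratio: int,
               vcpus_per_vm: int = 2, reserve_pct: int = 0) -> tuple[int, int]:
    """Return (virtual CPUs, VMs) a host can offer at a given vCPU:core ratio.

    Planning uses physical cores only; SMT threads are deliberately ignored.
    `reserve_pct` holds back capacity, for example for host failures.
    """
    cores = sockets * cores_per_socket
    vcpus = cores * vcpu_ratio * (100 - reserve_pct) // 100
    return vcpus, vcpus // vcpus_per_vm


for ratio in (8, 16):
    print(ratio, vm_density(sockets=2, cores_per_socket=6, vcpu_ratio=ratio))
# 8 (96, 48)
# 16 (192, 96)
```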

In addition, each guest operating system supports a specific maximum number of virtual processors when running on Hyper-V.

For more information, please see Hyper-V Overview on Microsoft TechNet.

2.2.4 Linux-Based Virtual Machines

Linux virtual machine support in Windows Server 2012 R2 and System Center 2012 R2 focuses on providing closer parity with Windows-based virtual machines. The Linux Integration Services are now part of the Linux kernel version 3.x.9 and higher, so installing them is no longer a required step in the image build process. For more information, please see The Linux Kernel Archives.

Linux virtual machines can natively take advantage of the following:

- Dynamic Memory. Linux virtual machines can take advantage of the increased density and resource usage efficiency of Dynamic Memory. Memory ballooning and hot add are supported.

- Support for online snapshots. Linux virtual machines can be backed up live, with consistent memory and file systems. Note that this is not application-aware consistency of the kind that the Volume Shadow Copy Service provides in Windows.

- Online resizing of VHDs. Linux virtual machines can expand VHDs in cases where the underlying LUNs are resized.

- Synthetic 2D frame buffer driver. This driver improves display performance for graphical applications.

These capabilities are available when running on Windows Server 2012 or Windows Server 2012 R2 Hyper-V.
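Because these capabilities come from Hyper-V drivers in the Linux kernel rather than a separately installed package, one quick sanity check during image validation is to look for those drivers in `/proc/modules` on the guest. The sketch below assumes the upstream kernel module names listed in the code, parses a sample string rather than a live system, and uses a hypothetical function name. A driver that is compiled into the kernel instead of built as a module will not appear in `/proc/modules`, so an entry reported as missing is a prompt to investigate, not proof of absence.

```python
# Hyper-V guest driver module names as used in the upstream Linux kernel.
EXPECTED_HV_MODULES = {
    "hv_vmbus",    # VMBus transport used by all synthetic devices
    "hv_storvsc",  # synthetic storage
    "hv_netvsc",   # synthetic networking
    "hv_utils",    # integration services (shutdown, time sync, KVP, backup)
    "hv_balloon",  # Dynamic Memory (ballooning and hot add)
    "hyperv_fb",   # synthetic 2D frame buffer
}


def missing_hv_modules(proc_modules_text: str) -> set:
    """Return expected Hyper-V modules absent from /proc/modules-style text."""
    # The first whitespace-separated field of each line is the module name.
    loaded = {line.split()[0] for line in proc_modules_text.splitlines() if line.strip()}
    return EXPECTED_HV_MODULES - loaded


if __name__ == "__main__":
    # Illustrative sample; on a real guest, read the text from /proc/modules.
    sample = (
        "hv_balloon 28672 0 - Live 0x0000000000000000\n"
        "hv_utils 36864 2 - Live 0x0000000000000000\n"
        "hv_storvsc 28672 2 - Live 0x0000000000000000\n"
        "hv_netvsc 90112 0 - Live 0x0000000000000000\n"
        "hv_vmbus 126976 7 hv_balloon,hv_utils,hv_storvsc,hv_netvsc, Live 0x0000000000000000\n"
    )
    print(sorted(missing_hv_modules(sample)))  # ['hyperv_fb']
```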

2.2.5 Automatic Virtual Machine Activation

Automatic Virtual Machine Activation makes it easier for service providers and large IT organizations to use the licensing advantages that are offered through the Windows Server 2012 R2 Datacenter edition—specifically, unlimited virtual machine instances for a licensed system.

Automatic Virtual Machine Activation allows customers to license the physical server and then run as many Windows Server virtual machine instances as the server's resources can support. When guests running Windows Server 2012 R2 are hosted on Windows Server 2012 R2 Datacenter, they activate automatically. This makes it easier and faster to deploy cloud solutions based on Windows Server 2012 R2 Datacenter.

Automatic Virtual Machine Activation (AVMA) requires that the host operating system (running on the bare metal) is running Hyper-V in Windows Server 2012 R2 Datacenter. The supported guest operating systems (running in the virtual machine) are:

- Windows Server 2012 R2 Standard

- Windows Server 2012 R2 Datacenter

- Windows Server 2012 R2 Essentials
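When planning which host and guest combinations can rely on AVMA, the requirements above can be encoded as a simple eligibility check. This is a minimal sketch under the assumption that operating systems are identified by plain edition strings; `avma_eligible` is a hypothetical planning helper, and it only compares inputs against the listed prerequisites. It does not perform activation.

```python
# Hypothetical eligibility check that mirrors the AVMA prerequisites listed
# above. It only compares strings; it does not activate anything.
AVMA_HOST_OS = "Windows Server 2012 R2 Datacenter"
AVMA_GUEST_OSES = {
    "Windows Server 2012 R2 Standard",
    "Windows Server 2012 R2 Datacenter",
    "Windows Server 2012 R2 Essentials",
}


def avma_eligible(host_os: str, host_runs_hyperv: bool, guest_os: str) -> bool:
    """Return True if this host/guest pairing meets the listed AVMA requirements."""
    return host_runs_hyperv and host_os == AVMA_HOST_OS and guest_os in AVMA_GUEST_OSES


if __name__ == "__main__":
    print(avma_eligible("Windows Server 2012 R2 Datacenter", True,
                        "Windows Server 2012 R2 Standard"))  # True
    print(avma_eligible("Windows Server 2012 R2 Standard", True,
                        "Windows Server 2012 R2 Standard"))  # False
```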

3 Public Cloud

The Microsoft Azure virtualization platform supports 64-bit virtual machines running a variety of operating systems. These can be standalone virtual machines with no connectivity to other virtual machines, or virtual machines that connect to one another over an Azure Virtual Network. They can also be connected to the Internet, either directly through an Internet gateway located in Azure or by routing Internet-bound traffic through an on-premises network gateway.

Details of the Microsoft Azure virtualization platform were covered in the Microsoft Infrastructure as a Service Compute Foundations article. Please refer to that article for information on the public cloud component of Microsoft Infrastructure as a Service solutions.

4 Hybrid Cloud

Similar to public cloud, the Microsoft Azure virtualization platform can be integrated with your on-premises Hyper-V based virtualization platform. More detailed information on this integration will be included in the articles that discuss the Microsoft Infrastructure as a Service Management Foundations.