Modern Datacenter Architecture Patterns-Multi-Site Data Tier

The Multi-Site Data Tier design pattern details the Azure features and services required to deliver data tier services that can provide predictable performance and high availability across geographic boundaries.

Table of Contents

1.1 Pattern Requirements and Service Description

Prepared by:

Jeff Baker – Microsoft

Julia Hazelwood – Microsoft

Tom Shinder – Microsoft

Joel Yoker – Microsoft

Cloud Platform Integration Framework Overview and Patterns:

Cloud Platform Integration Framework – Overview and Architecture

Modern Datacenter Architecture Patterns-Multi-Site Data Tier

Modern Datacenter Architecture Patterns - Offsite Batch Processing Tier

Modern Datacenter Architecture Patterns-Global Load Balanced Web Tier

1 Overview

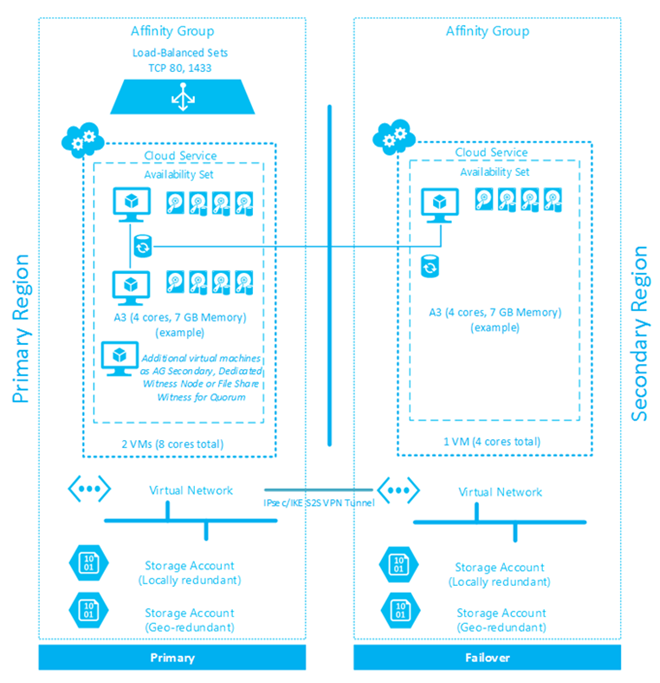

The Multi-Site Data Tier design pattern details the Azure features and services  required to deliver data tier services that can provide predictable performance and high availability across geographic boundaries. A full list of Microsoft Azure regions and the services available within each is provided within the Microsoft Azure documentation site. For the purposes of this design pattern a data tier is defined as a tier of service providing traditional data platform services in either an isolated manner or as part of a multi-tiered application. Within this pattern, load balancing of the data tier is provided both locally within the region and across regions.

required to deliver data tier services that can provide predictable performance and high availability across geographic boundaries. A full list of Microsoft Azure regions and the services available within each is provided within the Microsoft Azure documentation site. For the purposes of this design pattern a data tier is defined as a tier of service providing traditional data platform services in either an isolated manner or as part of a multi-tiered application. Within this pattern, load balancing of the data tier is provided both locally within the region and across regions.

Introduced with SQL Server 2012, AlwaysOn Availability Groups is a high-availability and disaster-recovery feature that is fully supported on Azure Infrastructure Services. Detailed information and the official support announcement for AlwaysOn Availability Groups on Windows Azure Infrastructure Service can be found in the article AlwaysOn Availability Groups.

This document provides an architectural overview of a Multi-Site Data Tier in Azure utilizing SQL AlwaysOn Availability Groups. With an optional read-only secondary node in an additional Azure datacenter for additional functionality and disaster recovery. Using SQL AlwaysOn in Azure provides a high-availability data tier that can be consumed by web or application tiers.

While this document focuses on architectural patterns and practices, full deployment guidance can be found in the official tutorials, which outline the configuration of AlwaysOn Availability Groups in Azure and the configuration of the AlwaysOn Availability Group Listener.

1.1 Pattern Requirements and Service Description

The Multi-Site Data Tier pattern is designed to provide high-availability in the primary site and recoverability in the secondary site. The Azure virtual machines that make up the SQL AlwaysOn Availability Group nodes reside in an Azure availability set resulting in a 99.95% uptime Service Level Agreement (SLA) for the virtual machine components of this architectural pattern. The 99.95 percent uptime is rationalized across Microsoft Azure architectural constructs to support the number of planned and unplanned failures across Azure regions, within an Azure datacenter, and across Azure virtual machines which can be supported within a year while still meeting the SLT.

Being a foundational architectural pattern, it is expected that the data tier outlined in this document will be composed with other tiers (such as a web tier) to support a larger solution architecture or design pattern.

A foundational set of design principles has been employed to make sure that the Multi-Site Data Tier architectural pattern is optimized to deliver a robust set of capabilities and functionalities with the highest possible uptime.

The following design principles are the basis for this architectural pattern.

- High availability: The Multi-Site Data Tier pattern is designed to deliver 99.95% scheduled uptime using standard Microsoft Azure Services. Leveraging Azure availability sets within a given Azure region along with hosting the data tier across Azure regions helps make sure that the platform is able to deliver this SLT. The ability to meet this SLT is also determined by a customer’s internal operational processes, tools and the reliability of the web application itself.

- Service continuity: Best practices from Microsoft Azure architectures are incorporated into the architecture and is part of the pattern to handle unscheduled service outages.

- Quality: The Multi-Site Data Tier is designed based on lessons learned from internal Microsoft deployments and Microsoft Azure recommended practices helping make sure the highest quality and reliability from the design.

- Management: Microsoft System Center and Azure management capabilities will provide the necessary platform for the management of the Multi-Site Data Tier as defined within the Cloud Platform Integration Framework.

- Administration: Using Microsoft Windows PowerShell™, the Microsoft Azure Portal and SQL Server management tools, administrators can perform any tasks associated with Microsoft Azure and the data tier hosted within the service.

Common requirements for the Multi-Site Data Tier using SQL AlwaysOn Availability Groups with an Availability Group Listener include:

- Active Directory Domain Services (domain controllers hosted in Azure virtual machines) and DNS configured in one or more Azure virtual machines and accessible by all SQL nodes

- Two Azure virtual machines configured as a Windows Failover Cluster in the primary Azure region and one Azure virtual machine in the secondary Azure region

- Load-balanced endpoint for Availability Group Listener traffic

- Primary and Secondary SQL nodes must reside in the same cloud service, and part of the same availability set

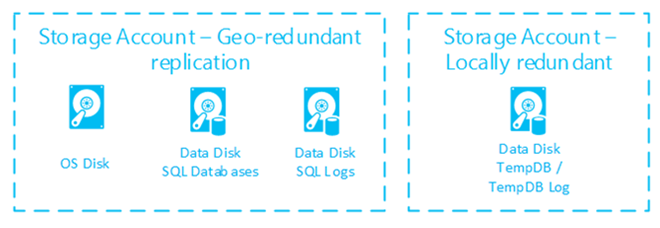

- One or more storage accounts configured as geo-redundant for use by SQL virtual machine operating system (OS) disks and SQL backups (virtual machine data disks)

- One or more storage accounts configured as locally-redundant for use by SQL data and log file disks (virtual machine data disks)

- Regional Virtual Network (VNet) established in each Azure region

- Site-to-Site VPN connection between Azure Regional VNets in the primary and secondary Azure region

- ACL set on the load-balanced endpoint for the Availability Group Listener in the primary Azure region with a permit rule needed for the cloud service IP address of the asynchronous SQL node in the secondary Azure region.

- Asynchronous secondary SQL Availability Group node(s) at secondary Azure region should be excluded from voting for cluster quorum witness.

- For configurations of this pattern with an even number of cluster nodes in the primary Azure region an additional file share witness or dedicated witness cluster node must be deployed within the primary Azure region to allow for proper quorum configuration. Note: Node and disk majority is not a possible quorum configuration in Azure.

- Virtual machines for SQL nodes must be at least an A3 in size as SQL Server Enterprise is required for SQL AlwaysOn configuration

- The Azure virtual machine type must be set to Standard for SQL nodes due to load-balanced endpoints being used

2 Architecture Pattern

This document describes a pattern for providing access to Microsoft SQL Server content over multiple geographies for the purposes of availability and redundancy. Critical services are illustrated below without attention to the application or web tier that will access the data itself. The diagram below is a simple illustration of the relevant services and how they are used as part of this pattern.

Each of the main service areas are outlined in more detail following the diagram.

2.1 Pattern Dependencies

The primary dependency of the Multi-Site Data Tier architectural patternis the additional service tiers typically found within a multi-tier web application. These services typically include a web tier and application tier and may also include other business logic components which provide additional capabilities to the Solution.

For these services, it is critical to provide alignment with the Azure region and more specifically the affinity groups which contain the Azure services outlined above. Where possible, aligning other service tiers within the same Azure affinity group helps make sure resources associated with this architecture are co-located within a given Azure datacenter. This has the added benefit of increasing performance between Azure services and minimizing costs associated with egress traffic.

2.2 Azure Services

The Multi-Site Data Tier architectural pattern is comprised of the following Azure services:

- Virtual Machines

- Regional Virtual Networks

- Storage Accounts

- Cloud Services

- Availability Sets

- Load-Balanced Endpoints

- Affinity Groups

This pattern consists of Azure virtual machines hosting the Microsoft SQL Server Enterprise Edition workload located in two separate Azure regions.

From a compute perspective a minimum of three Azure virtual machines must be deployed to support local availability of the SQL data and remote recoverability and serviceability.

Azure affinity groups are established at each selected Azure region to help make sure that resources associated with the data tier (Azure compute and storage resources) are co-located within a given Azure datacenter.

A single cloud service per region will be required to host the resources residing within this tier. This has the added benefit of improving performance between interconnected components found within this tier.

Across regions, the SQL content is synchronized through the Availability Group asynchronous replication process. Local availability of the data tier is addressed through a combination of Availability Group synchronous replication, Availability Group Listener, Azure availability sets and load balanced endpoints.

From a network perspective, the Azure virtual machines hosted in each cloud service must have endpoints (load-balanced at the primary Azure region) exposed for SQL replication and listener connections. Each SQL Server within the cloud service will respond using the Azure load balancer on the port defined within the endpoint when configured as a load-balanced set to distribute traffic across systems within this tier. It is recommended that endpoints (including load-balanced sets) be configured only for the services which should be exposed to other tiers, including external access.

Additionally, access control lists (ACLs) can be configured on each defined endpoint to provide restricted access to ports required for administration or communications between services in other tiers. Each region must have a virtual network defined to support communication between resources within the tier and between other tiers which may be found in a multi-tier web application (such as a web or application component found within the Solution). The virtual networks must include adequate, non-overlapping, address space to support the compute instances and have DNS servers defined to support DNS queries across service tiers and VNets. To support availability between regions a Windows Failover Cluster consisting of the SQL Server nodes in both primary and secondary regions must be configured as documented at the following link.

Storage components include two or more storage accounts per region. Each storage account should be configured to be located in the affinity group defined within each region (as opposed to a specific region) to help make sure the storage services will be in the same data center with the Azure compute services outlined above. Each storage account should be configured to use geo-redundant storage or locally-redundant storage as applicable to support availability and data consistency as outlined in the “Geo-replication not supported for data and log files on separate disks” article. While the primary location (Azure region) can be selected, the secondary location is paired with another Azure region by default. Details on Azure location pairings can be found at the following link. Within each storage account a container should be created to contain the virtual hard disks associated with the virtual machines outlined above. Storage accounts can additionally be configured to monitor transaction statistics and log read, write and/or delete requests for a specified number of days.

2.3 Pattern Considerations

Considerations for the Multi-Site Data Tier architectural patterninclude the securing of exposed endpoints and the subscription compute limits within a given cloud service. For each component of Microsoft Azure, a series of subscription and service limits are defined by the service. These limits are subject to change and are published at the following link.

Microsoft Azure limits fall into the categories of default and maximum limits. Default limits are those which exist on every Azure subscription and can be increased through a request to Microsoft support whereas maximum limits define the upper boundary of a given service or capability within Azure. Limits can be raised by contacting Microsoft support as outlined through the Azure portal as outlined in this article, however the request cannot exceed the maximum limits outlined for each Azure service listed above.

The primary constraint that typically is encountered with Microsoft SQL server deployment on Azure Virtual machines is the number of data disks per virtual machine, IOPS per data disk and total IOPS and throughput performance of a single storage account and the total number of available storage accounts. The default number of storage accounts (currently 20) and max IOPS per disk (currently 500) can sometimes be exceeded by intensive SQL server deployments which either need to scale up or scale out to meet performance needs within this tier.

For example, two deployments of a two node SQL Availability Group with 16 data disks assigned to each node would exceed the recommended limit of 40 highly used VHDs in a single storage account. This recommendation is in place in order to avoid the 20,000 IOPS per storage account limit.

3 Interfaces and End Points

As outlined earlier, the Azure virtual machines hosted in each cloud service will typically expose endpoints to support  connections to the AlwaysOn Listener or potentially to support connection directly to a SQL node. It is recommended that endpoints (including load-balanced sets) be configured only for the services which should be exposed to other tiers, including external access.

connections to the AlwaysOn Listener or potentially to support connection directly to a SQL node. It is recommended that endpoints (including load-balanced sets) be configured only for the services which should be exposed to other tiers, including external access.

In hybrid enterprise scenarios the desired access for these applications may be restricted to only be accessed by the internal organization. In addition, endpoints for remote management services may be required.

When using the portal to create virtual machines, Azure defines administrative endpoints for remote management using PowerShell and Remote Desktop Protocol by default. In addition, this tier may also require other TCP/IP ports to support bi-directional communication with other tiers of the Solution, which is typical for multi-tier applications. In most cases all these endpoints, along with other administrative ports, should be secured to specific subnets within Azure. To support this, access control lists (ACLs) should be configured on each defined endpoint to provide security for ports required for administration or communications between services in other tiers.

A load-balanced endpoint is required to be used for communication with the SQL AlwaysOn AG Listener. Specific endpoint requirements include:

- Public/private ports aligning with port used by SQL instances in the AlwaysOn Availability Group

- Load-Balanced Set created

- Direct Server Return enabled

- Alternate probe port of 59999 used

When using and ACL (Access Control List) on the endpoint keep in mind the following scenarios:

- When an ACL rule with Permit is added to an endpoint, all other subnets are denied

- When a Deny rule is added all other subnets are permitted

- To carve out a specific range, you may use a combination of Permit and Deny rules for the endpoint

4 Availability and Resiliency

Microsoft provides clearly defined Service Level Agreements (SLAs) for each service provided within Azure. Each architectural pattern is comprised of one or more Azure services and details about each individual Azure service can be found on the Microsoft Azure Service Level Agreement website. For the Multi-Site Data Tier architectural pattern, the Azure services required carry the following SLAs:

Azure Service |

Service Level Agreement |

Cloud Service |

99.95% |

Virtual Network Gateway |

99.9% |

Virtual Machines (deployed in the same availability set) |

99.95% |

Geo Redundant Storage (GRS) |

99.9% |

Read Access-Geo Redundant Storage (RA-GRS) |

99.99% |

Locally Redundant Storage (LRS) |

99.9% |

ExpressRoute |

99.9% |

The composite SLA of the Multi-Site Data Tier architectural pattern Azure service components is 99.9%.

The key factors effecting availability is the redundancy of architectural components found within and across Azure regions. As described above, a minimum of two Azure virtual machines must be deployed to support local availability of the data tier. Microsoft Azure supports availability at the region level with fault domains and upgrade domains.

Fault domains are a physical availability construct and failure can take down all services within a given fault domain, of which at least two are available per subscription.

An update domain is a logical availability construct which provides resiliency against planned failures (updates) to the underlying fabric the services reside within. Five update domains are available per subscription. While fault domains cannot be configured directly, update domains for the Multi-Site Data Tier architectural pattern can be defined through the use of Azure availability sets. To support planned or unplanned downtime events, the use of availability sets will help make sure that a given virtual machine will be available and meet the SLAs outlined above.

As discussed previously, endpoints within the data tier cloud service of the primary Azure region will be configured as a load-balanced set to distribute traffic across systems within this tier. The databases in the SQL AlwaysOn Availability Group are made highly-available by using a Load-Balanced Endpoint for the Listener which would perform an automatic failover to a secondary node in a virtual machine within the same Availability Set. In the event of an Azure Data Center failure, SQL databases exist on geo-redundant storage accounts, and a manual failover could be performed to a secondary data center where an asynchronous node in the AG resides. The secondary asynchronous node can reside in another subscription / VNET in the same region, or another region all of which are linked by a site-to-site VPN connection.

5 Scale and Performance

A key consideration for the performance and scale of the Multi-Site Data Tier architectural pattern is the size of the virtual machine instances running within each cloud service at each datacenter. It is important that during testing the underlying Solution is tested to determine the minimum instance size to meet performance requirements (both minimum and maximum). As stated earlier, A3 is the minimum size that should be deployed with SQL Enterprise Edition.

The minimum number of virtual machine instances necessary for this scenario are as follows:

- Two Azure virtual machines (primary and secondary SQL Availability Group nodes) in the same Could Service in the same VNet in the primary Azure region

- At least one Azure virtual machine (additional secondary SQL Availability Group node) in another Cloud Service in a VNet connected by a site-to-site VPN connection in the secondary Azure region

Additional Azure virtual machines may be deployed as secondary instances added to the SQL AlwaysOn Availability Group to be used as read-only sources.

6 Cost

An important consideration when deploying any Solution within Microsoft Azure is the cost of ownership. Costs related to on-premises cloud environments typically consist of up-front investments compute, storage and network resources, while costs related to public cloud environments such as Azure are based on the granular consumption of the services and resources found within them. Costs can be broken down into two main categories:

- Cost factors

- Cost drivers

Cost factors consist of the specific Microsoft Azure services which have a unit consumption cost and are required to compose a given architectural pattern. Cost drivers are a series of configuration decisions for these services within a given architectural pattern that can increase or decrease costs.

Microsoft Azure costs are divided by the specific service or capability hosted within Azure and continually updated to keep pace with the market demand. Costs for each service are published publicly on the Microsoft Azure pricing calculator. It is recommended that costs be reviewed regularly during the design, implementation and operation of this and other architectural patterns

6.1 Cost Factors

When using Azure virtual machines, the two factors which impact cost are the size of virtual machine and the storage of the virtual machine data. Microsoft Azure provides a predefined set of available virtual machine sizes which provide an array of CPU and memory configurations within the service. Virtual machines are measured (and therefore charged) by the hour of use and de-allocated virtual machines (those which are turned off) carry no charge. The storage accounts used to host the virtual machine hard disks carry a charge per gigabyte (GB) regardless of the state of the data found within it.

Finally, while ingress network traffic is included in the Azure service, the egress of network traffic across an Azure virtual network carries a cost that must be considered with a geo-distributed Solution based on the Multi-Site Data Tier architectural pattern.

6.2 Cost Drivers

As stated earlier, cost drivers consist of the configurable options of the Azure services required when implementing an architectural pattern which can impact the overall cost of the Solution. These configuration choices can have both a positive or negative impact on the cost of ownership of a given solution within Azure, however they may also potentially impact the overall performance and availability of the Solution depending on the selections made by the organization.

Cost drivers can be categorized by their level of impact (high, medium and low). Cost drivers for the Multi-Site Data Tier architectural pattern are summarized in the table below.

Level of Impact |

Cost Driver |

Description |

| High | State of Azure virtual machine instances |

Azure compute costs are driven by the number of running instances and the size of the virtual machine. Unlike running virtual machines, those which are in an offline state and are de-allocated do not charge compute costs per hour. |

| High | Number of Azure virtual machine instances |

Azure compute costs are also driven the number of running virtual machines in a cloud service. To support local availability constructs, the architectural pattern uses multiple virtual machines in the primary Azure region using availability sets. Additional virtual machines may be added to provide increased availability in the primary or secondary region or to provide increased application performance through the use of read only secondary’s. |

| Medium | Size (and type) of Azure virtual machine instances |

A consideration for Azure compute costs include the virtual machine instance configuration size (and type). Instances range from low CPU and memory configurations to CPU and memory intensive sizes. Higher memory and CPU core allocations carry higher per hour operating costs. Options include using a fewer number large instances vs. a larger number of small instances to address performance requirements. With the Multi-Site Data Tier architectural pattern utilizing virtual machines that will run Microsoft SQL Server Enterprise Edition a minimum instance size of A3 (4 cores, 7 GB memory) is required. |

| Low | Virtual machine hard disk size |

Azure storage carries a cost per GB and provisioned virtual machines (online and offline) are stored within the defined storage account within the Azure subscription. While still a cost consideration, the virtual machine hard disk sizes are not expected to have a significant impact on the overall cost of solutions using this pattern. |

| Low | Egress data transfer between Azure regions |

One price metric for the Multi-Site Data Tier architectural pattern is the amount of data replicated to the secondary node(s) in the secondary Azure region. Data egress refers to data transferred out of an Azure region. When the data in your storage account is accessed by an application that is not running in the same region, whether that application is a cloud service or some other type of application, then you are charged for data egress. While this is a cost that must be taken into consideration, the Solution would need to generate many terabytes of replication data each month for this cost factor to become a major driver of the over Solution cost. |

7 Operations

Cloud Platform Integration Framework (CPIF) extends the operational and management functions of Microsoft Azure, System  Center and Windows Server to support managed cloud workloads. As outlined in CPIF, Microsoft Azure architectural patterns support deployment, business continuity and disaster recovery, monitoring and maintenance as part of the operations of the Multi-Site Data Tier architectural pattern.

Center and Windows Server to support managed cloud workloads. As outlined in CPIF, Microsoft Azure architectural patterns support deployment, business continuity and disaster recovery, monitoring and maintenance as part of the operations of the Multi-Site Data Tier architectural pattern.

Deployment of this pattern can be achieved through the standard Azure Management Portal and Azure PowerShell. For deployment using PowerShell, several examples are provided at the Azure Script Center. Relevant examples include the Deploy a Windows Azure Virtual Machine with Two Data Disks sample and the Deploy Windows Azure Virtual machines to an Availability Set and Load Balanced on an Endpoint sample. Several full tutorials also exist that cover the full end to end deployment process from the virtual machines to the SQL Availability Group components.

Additionally the Multi-Site Data Tier architectural pattern can be created using the Azure Resource Group capability which is in preview. Templates can be used in conjunction with Azure resource groups to deploy the necessary components for this pattern, such as the sample for updating PowerShell deployment scripts to use Azure Resource Manager template.

Monitoring of this pattern and associated resources can be achieved in two ways using System Center and Microsoft Azure. The virtual machines (IaaS) can be monitored using System Center 2012 R2 Operations Manager. Like on-premises resources, Operations Manager provides management and monitoring capabilities for virtual machines running within Azure, monitoring critical services using standard management packs.

Operations Manager can also provide full monitoring and alerting for the SQL Server workloads deployed in the virtual machines through the use of the SQL Server Management Pack. When the management pack is deployed, Operations Manager monitors SQL Server components such as database engine instances, databases, and SQL Server agents. The monitoring provided by this management pack includes availability and configuration monitoring, performance data collection, and default thresholds. You can integrate the monitoring of SQL Server components into your service-oriented monitoring scenarios.

In addition to health monitoring capabilities, this management pack includes dashboard views, extensive knowledge with embedded inline tasks, and views that enable near real-time diagnosis and resolution of detected issues. Official guidance on monitoring Availability Groups can be found in the article Monitoring of Availability Groups.

Maintenance of the Multi-Site Data Tier architectural pattern falls into two categories:

- Maintenance of the virtual machines

- Maintenance of SQL Server

For the maintenance of SQL Server, appropriate maintenance plans should be leveraged. Specific guidance on Availability Group upgrades and updates can be found in the article Upgrade and Update of Availability Group Servers with Minimal Downtime and Data Loss.

When using Azure virtual machines it is the responsibility of the customer organization to perform platform updating. This can be accomplished through tools such as Windows Server Update Services or System Center Configuration Manager Software Updates. In any case care must be taken with the synchronous nodes of the Availability Group to failover the Primary node prior rebooting in order to avoid any long recovery from an unplanned failover.

As discussed previously, the use of availability sets can help make sure the data tier spans update and fault domains, allowing the data tier to be resilient to outages caused by Azure platform updates. Solutions deployed in Azure virtual machines must also consider the update of the Azure fabric as well and require the same level of availability planning outlined earlier in this pattern guide.

From a business continuity and disaster recovery perspective, the availability constructs outlined earlier should help make sure that solutions deployed using the Multi-Site Data Tier architectural pattern are designed to support continuity of operations in the event of failure of one or more services both locally and across Azure regions. Backup of the tier must still be maintained by the organization using native Azure capabilities, through Microsoft products or third party solutions. The use of geo-redundant and locally-redundant storage as specified within this pattern supports the ability to restore data across regions in the event of local failure.

The disks should be distributed in storage accounts with geo-redundant and locally redundant settings based on the purpose of the disk:

It is important to set the storage account for the data disk(s) containing the SQL data and logs to locally redundant in order maintain accurate time stamps in the event of a DR situation requiring a full recovery. See the “Geo-replication not supported for data and log files on separate disks” section of the article High Availability and Disaster Recovery for SQL Server in Azure Virtual Machines.

8 Architecture Anti-Patterns

As with any architectural design there are both recommended practices and approaches which are not desirable. Anti-patterns for the Multi-Site Data Tier include:

for the Multi-Site Data Tier include:

- Single Region Deployment

- Single Virtual Machine Deployment (in the Primary Region)

Within the Multi-Site Data Tier architectural pattern there are multiple decisions which could impact the availability of the entire application infrastructure or Solution which depend on this tier. In many cases, a data tier serves other tiers of Solution, including search, business logic components, application, and web tiers.

For these reasons, it is important to avoid decisions which impact the availability of this tier. While scalability and performance are important for many solutions, unlike other constructs these configuration choices can be reviewed and revisited over time with little configuration changes required and minimal impact to overall cost.

Deployment to a single Azure region potentially exposes the application to the failure or inaccessibility of a given Azure region. While the loss of an entire region is an unlikely event, in order to withstand failures of this magnitude the pattern should include deployment to a second Azure region as outlined in the sections above. In addition, deployment of a single virtual machine in the primary Azure region may appear on the surface to reduce costs, however this does not protect against planned and unplanned availability within a given Azure datacenter or region.

Events such as regular updates and intermittent failures would not be protected by Azure fault and update domains, leaving consumers of the service to be redirected to another region, which could have an impact on end-user performance depending on their latency to the region designated for failover. While impact to the end-user experience is never considered an acceptable outcome in normal operations, during times of service outage having diminished capacity can sometimes be acceptable given the rarity of this type of catastrophic event. However, when availability is only planned between regions, not within a given region (as would be the case in a single virtual machine deployment), the frequency of this type of outage would be higher due to expected and planned maintenance cycles of the Azure fabric.

For these reasons the architectural anti-patterns for this tier will typically focus on those decisions which impact the availability of the deployed solution.