How It Works: The I/O Path: SQL Server Running in Windows Azure Virtual Machine (IaaS)

Note: This blog is based on behavior as of June 2013. At Microsoft we continue to evolve and enhance our products so the behavior may change over time.

The I/O path for SQL Server, running on Windows Azure Virtual Machines, uses Windows Azure storage (often referred to as XStore.) The following is a link to a whitepaper, written by the SQL Server development team, explaining various I/O aspects and tuning needs for this environment:

https://go.microsoft.com/fwlink/?LinkId=306266

I have been testing and driving workloads using SQL Server in our IaaS environment(s). Exercising test patterns from SQLIOSim, T-SQL, Tempdb stress, BCP, and the RML utility test suites. This work has afforded me the opportunity to work closely with both the SQL Server and Windows Azure development teams to better understand how to tune SQL Server for a Windows IaaS deployment.

The previously mentioned white paper points out many of the interactions but I would like to dive into some specific areas to help you understand your Windows Azure IaaS, SQL Server deployment capabilities and options.

Caching

Caching happens at multiple levels (SQL Server, VHD/VHDx, XStore, …). SQL Server requires stable media (data retained across power outages) in order to uphold the ACID properties of the database. It should not surprise you that I spent a fair amount of time understanding the various caches and how SQL Server I/O patterns interact with them from both data safety/stability and performance angles.

SQL Server Data and Log Files: FILE_FLAG_WRITETHROUGH/Forced Unit Access (FUA) Writes

SQL Server does all database and log file writes (including TEMPDB) forcing write though. This means the system is not allowed to return the success of a WriteFile* operation until the system guarantees the data has been stored in stable media. This is regardless of the cache settings at any level of the system. The data must be stored in a cache that is battery backed or written to physical media that will survive a power outage.

SQL Server Backups

When performing a backup SQL Server does NOT set the FILE_FLAG_WRITETHOUGH, instead the backup allows caching of the data to take place. When backup has written all of the data it issues the FlushFileBuffers command on the backup file(s). This acts like FUA in that the FlushFileBuffers must guarantee all data stored in cache has been written to stable media before returning success from the API.

Other Files

There are other files such as the SQL Server error log, BCP output, … that a SQL Server deployment will use. These usually do NOT use FILE_FLAG_WRITETHROUGH nor FlushFileBuffers. This behavior is not unique to Windows IaaS it has been the design and implementation for many releases of the SQL Server product. I am only mentioning this because it can make a difference to you as to where you store the data or leverage various caching mechanisms.

Drive: VHD/VHDx Cache (Drive Caching)

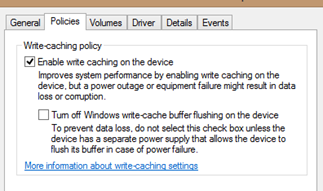

The first level of caching appears at the drive level. Accessing the drive’s properties exposes the drive level caching policy. When enabled the drive is allowed to cache data within the physical drive cache (for physical disks) and virtual implementations.

Drive: XStore Caching

The virtual disks attached to your Windows IaaS VM are stored in Windows Azure storage (the XStore.) The XStore provides host level caching and performance optimizations for your VM. XStore caching is controlled outside of the individual drive cache settings, previously shown. (Drive level cache settings do not impact the XStore cache settings.)

There are three cache settings (None, ReadOnly, ReadWrite) for the XStore. By default a data disk is set to NONE and an OS Disk is set to Read Write. The data disk can be configured to any of the 3 settings while an OS disk is limited to the Read Only or Read Write options.

To alter the XStore cache settings you must use a command such as the following: (The cmdlet’s are part of the Windows Azure Management cmdlets package.)

get-azurevm 'TestSub' 'TestMach' | set-azureosdisk -HostCaching "ReadOnly" | update-azurevm

Note: The configuration change does not take place until the VM is restarted.

You will find that most documentation points to the use of one or more data drives for SQL Server database and log files and I agree with this assessment but I would like to explain a bit more as to why I agree.

OS Drive Default: Read Write

To understand the recommendation it helps to understand a bit of how the XStore cache is implemented. The XStore cache can use a combination of the host’s RAM as well as disk space, on the local host to support the cache. When a read or write takes place the local cache is consulted. As you can imagine, the local cache can be faster than making a request to the Windows Azure storage cluster. To see this in action it helps if I show you some high level scenarios.

Note: These scenarios are designed to provide you a 10,000 foot view of the architecture.

| Action | FUA | XStore Read Cache | XStore Write Cache | Outcome |

| WriteFile* | Yes | Yes | Yes |

Data is written all the way to the XStore (stable media.) |

| No | Yes | Yes |

Data is NOT in stable media, will be written by XStore caching, LRU algorithms. This is not used by the SQL Server database or log files because FUA=YES but BCP out is a usage example. This may be helpful because the BCP can leverage the cache and allow the cache to optimally send data to the XStore. Note: For temporary files, such as BCP not TEMPDB, you may consider the (D:) scratch drive, provided. It is local and no XStore caching is involved. |

|

| Yes | Yes | No | The write is propagated directly to the XStore. | |

| Yes | No | No | The write is propagated directly to the XStore. | |

| ReadFile* | N/A | Yes | Yes |

If the block is present in the read cache the portion of the block requested to the reader is directly serviced. |

The SQL Server use cases become more clear once you understand the XStore caching behavior and combine it with SQL Server caching behavior.

SQL Server Log File

The log file is typically a serially moving, write only entity. SQL Server maintains a cache of log block to avoid physical I/O for actions such as a transaction rollback or provide data to Always On, Database Mirroring, Replication, … If you place the log on a drive that allows XStore write caching you are seldom taking advantage of the write cache. First the log is written with FUA enabled so a write has to go though the cache, all the way to the XStore. Secondly, since the log has a much higher ratio of writes to reads you are likely not using the XStore cache effectively but forcing local XStore cache writes for every backend, FUA XStore write.

SQL Server Data File

The data file is opened with FUA enabled and the SQL Server uses a large buffer pool to maintain database pages in cache. The larger your VM size the more buffer pool cache SQL Server can take advantage of, avoiding physical I/O activities.

The same write behavior is true for database pages as described in the log section above. When you then apply the XStore read cache capabilities, they may not be performance improving over what SQL Server is already caching in buffer pool. As shown in the scenario table a read, via XStore read cache enablement, can result in a fetch of a larger block into the local XStore read cache. This could be helpful for subsequent SQL Server read requests but you also incur a possible write to the local XStore cache to maintain the data in the XStore cache. Your application read pattern may also be sporadic and defeat the intent of the XStore read cache.

BCP Out

You may get an advantage of writing to a XStore, write enabled drive by allowing the XStore to cache and optimially flush information to the backend store.

BCP In

This is one of the tests that the XStore read cache improved performance for. The read ahead action of the larger blocks used by the XStore allowed the streaming of the read bytes to be faster than from a XStore drive with read caching disabled.

TEMPDB Scratch Drive – No Thanks

It can be a bit confusing that you have a (D: scratch) drive so why not use it for TEMPDB. The reason is that scratch drive is a shared resource between all VMs on the host. You are given a sandbox drive in the VM so others can’t see your data and you can’t see theirs but the physical media is shared. While the system attempts to avoid it, it does mean a noisy neighbor could impact consistent I/O throughput on the scratch drive and change your performance predictability.

Replicas of Data

The Windows Storage for the VHDs atomically replicates your data to 3 separate, physical media destinations. This is done during the write request in a way that the write is assured quorum to the devices before the write is considered complete, proving your VHDs with a high degree of data storage safety.

Remote Replicas (Geo-Replication)

The default for the VHD storage is to provide 3 local replicas of the data. A remote replica can also be established. The remote replica is currently NOT safe for SQL Server use. The remote replica is maintained asynchronously and the system, currently, does not provide the ability to group VHDs into a consistency group. Without consistency groups it is unsafe to assume SQL Server, with files on multiple VHDs maintain the write ordering across all the VHDs and as such the database won’t be recoverable.

At the current time you should not leverage the remote, Windows Azure storage replication capabilities for SQL Server as it is not supported. You should leverage SQL Server technologies that provide the capability (Always On, Database Mirroring, Log Shipping, Backup to Blob Storage, …)

Recap / Recommendation

What am I trying to say? – Test! As pointed out in the SQL Server whitepaper, the XStore is perfectly safe for SQL Server ACID requirements. However, the types of I/O pattern(s) your application(s) drive dictate how you can leverage the XStore caching capabilities.

Most implementations start with the SQL Server database and log files on a data drive, XStore caching disabled and only after testing enable various levels of XStore, caching behavior.

Bob Dorr - Principal SQL Server Escalation Engineer