Idea on how Government can publish data in near real time

The idea of Open Government and Gov 2.0 is not a new one. However, while governments are making great strides toward a more open system through efforts such as Data.gov and Data.gov.au, there appears to be a disconnect between publication and consumption.

At the recent BarCamp Canberra (#bcc2011) there were many discussions on Gov 2.0 and Open Gov in Australia. I admit that my session on Enabling Digital Society: The Government Part was one of them (the slide deck is here, but it is rather meaningless without commentary). I commend the AU Gov for getting Data.gov.au out there, but even they were saying that there are issues because people aren’t taking advantage of the data. I imagine that the expectation was that people would create mash-ups, killer apps, and amazing data visualisations of the published data. After all, isn’t this what we expected?

At the moment, in most of these kinds of initiatives, the data being published is in XML, XLS/CSV, TXT and even PDF format. Nothing wrong with that. We have data in formats that are readable by machines, and by people. The data is there and you can certainly write an application to read the XML and do whatever you want with it. However, other than XML, these formats aren’t exactly easy to consume, nor are they easy to publish on a regular basis. In most cases it amounts to publishing files, although some of the XML is drawn from databases, which is a good solution.

But the data starts stagnating as soon as it is produced and, as we’ve seen with Data.gov in the US, it might be years before it is updated again. This would be fine if it were census data or election data, which is only collected once every few years. But for data that changes more frequently, you want a system that can automatically publish the updates to the publicly accessible distribution point. You also want it published in a format that does not force mash-ups or applications that use the data to obtain a file, and then modify the file or screen scrape the contents for updates.

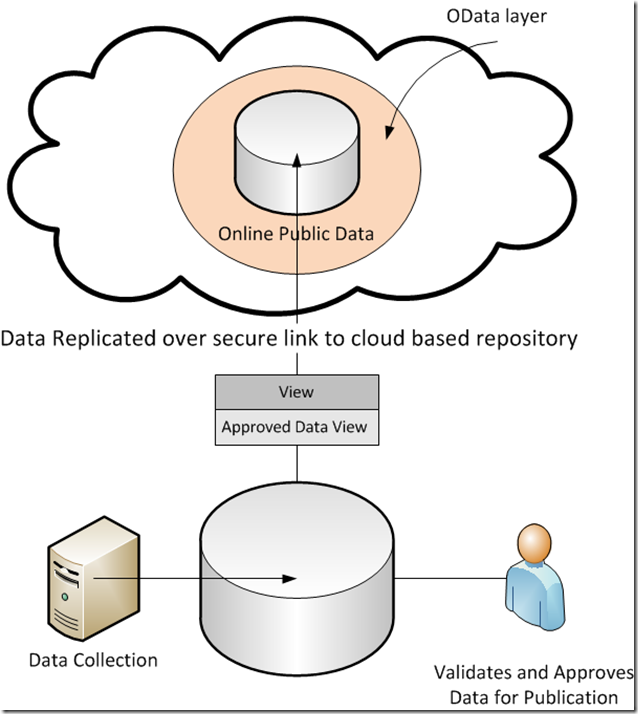

So how do we do it? Well, with the proliferation of cloud based computing services, standardised protocols, and modern databases we can pull this off. Here’s the general overview:

- Identify the data sets that the information will be sourced from, and what filtered or approved data will be made available (this is already done in most cases)

- Create a ‘published’ view on the data that will be used as a replication source to the public repository. This view simply filters out anything that has not been flagged as ‘approved’ or ‘public’

- Set up an online data repository (ODR) based on open standards that has high availability and scalability.

- Configure data replication from the on-premises ‘published’ view to the online repository

- Configure Open Data access to the online repository

- Advertise and let the mash-fest begin
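The view and replication steps above can be sketched in a few lines. This is only an illustration under stated assumptions: two in-memory SQLite databases stand in for the on-premises database and the ODR, and the `readings` table, its `status` column, the `published_readings` view and the `replicate` helper are all hypothetical names made up for the example. A real deployment would use the replication features of whatever database and cloud service the agency actually runs.

```python
import sqlite3

# Stand-in for the agency's on-premises database (hypothetical schema).
source = sqlite3.connect(":memory:")
source.executescript("""
    CREATE TABLE readings (
        id INTEGER PRIMARY KEY,
        station TEXT NOT NULL,
        value REAL NOT NULL,
        status TEXT NOT NULL          -- 'draft' or 'approved'
    );
    -- The 'published' view: only approved rows are visible through it.
    CREATE VIEW published_readings AS
        SELECT id, station, value FROM readings WHERE status = 'approved';
    INSERT INTO readings VALUES
        (1, 'Canberra', 21.5, 'approved'),
        (2, 'Sydney',   24.0, 'draft'),
        (3, 'Hobart',   17.2, 'approved');
""")

# Stand-in for the online data repository (ODR).
odr = sqlite3.connect(":memory:")
odr.execute(
    "CREATE TABLE readings (id INTEGER PRIMARY KEY, station TEXT, value REAL)"
)


def replicate(src, dst):
    """One-way push: copy whatever the published view exposes into the ODR."""
    rows = src.execute(
        "SELECT id, station, value FROM published_readings"
    ).fetchall()
    with dst:  # commit on success, roll back on error
        dst.executemany(
            "INSERT OR REPLACE INTO readings (id, station, value) VALUES (?, ?, ?)",
            rows,
        )
    return len(rows)


# First push: only the two approved rows leave the source database.
first_push = replicate(source, odr)

# Approving a row makes it appear in the view, so the next push picks it up.
with source:
    source.execute("UPDATE readings SET status = 'approved' WHERE id = 2")
second_push = replicate(source, odr)

published_ids = [r[0] for r in odr.execute("SELECT id FROM readings ORDER BY id")]
```

Note that the sketch only ever reads from the view and writes to the repository, so nothing flows back toward the source. It does not handle rows that are later withdrawn from publication; a real replication setup would need to deal with deletions as well.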

Does that sound simplistic? Perhaps, but aside from the political wrangling over who wants to allow access to the data, the technology to enable this scenario in this simple fashion is available right now.

Remember, we aren’t talking about sensitive or private data here. We are talking about government collected data that is intended to be published for public use. So we don’t have to worry too much about security or data sovereignty because this is open public data just like the stuff that is currently being published on data.gov and data.gov.au.

This would allow the most flexible and creative use of the data. You could easily publish other formats of the data through simple services that draw from the same repository. This would allow an almost real-time publication of data from government agencies to the public. As data is approved for publication it is automatically picked up by the View, and replicated to the public data repository.
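As a rough illustration of serving other formats from the same repository, the sketch below renders one set of rows as both JSON and CSV. The `ROWS` data and the `as_json` and `as_csv` helpers are hypothetical and exist only for this example; in practice the rows would come from a query against the public repository.

```python
import csv
import io
import json

# Hypothetical rows as they might come back from the public repository.
ROWS = [
    {"id": 1, "station": "Canberra", "value": 21.5},
    {"id": 3, "station": "Hobart", "value": 17.2},
]


def as_json(rows):
    """Serialise the rows as a JSON array."""
    return json.dumps(rows, sort_keys=True)


def as_csv(rows):
    """Serialise the same rows as CSV with a header line."""
    buf = io.StringIO()
    writer = csv.DictWriter(
        buf, fieldnames=["id", "station", "value"], lineterminator="\n"
    )
    writer.writeheader()
    writer.writerows(rows)
    return buf.getvalue()


json_text = as_json(ROWS)
csv_text = as_csv(ROWS)
```

Because both functions read the same rows, adding another format is a matter of adding another small serialiser rather than publishing another file.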

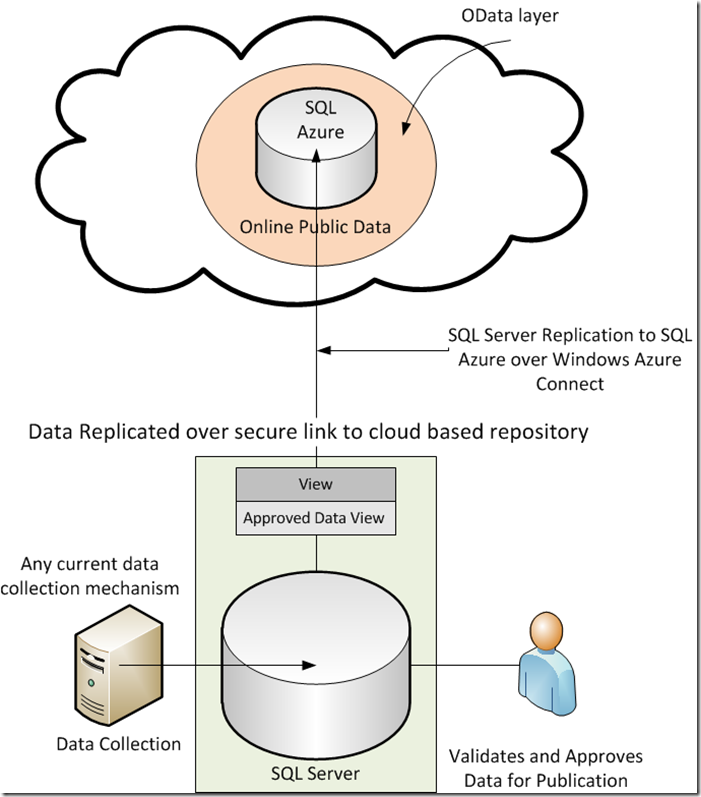

Real Technology Available to Do This Now

Here is another view of the diagram pointing out how currently available technologies would be used to enable this scenario.

Another nice benefit of using this technology is that it’s relatively simple to set up and start consuming.

Free Security Benefits

Establish the view on the local DB, rather than publishing everything to the ODR and filtering there, to prevent any accidental exposure. This way only approved data ever leaves the on-premises database, so unapproved data cannot be exposed through the ODR.

The data is protected in transit over the Azure Connect link. Azure Connect establishes a secure point-to-point link over IPv6 / IPsec so that the on-premises SQL Server can only communicate with the SQL Azure instance specified, and vice versa. The on-premises SQL Server will not accept communications from anything else on that link.

A secondary benefit of using a cloud based repository is that unknown people, potentially malicious hackers, never touch the agency’s infrastructure. You can do a one-way push of the data to the cloud so there is no traffic coming in to your network. This is a huge security benefit.

So with a little investment in the right approach, we can set up a near real time public data publication system that provides real value to citizens. This value can be realised either directly, or through third party applications and mash-ups. The technologies in play are not new, and we know how to use them. We just have to expand our thinking on what we can do with them.

I admit this is only the tip of the iceberg, and that technology really isn’t the issue. It’s getting the Government agencies to collaborate, think of citizens first, and do what it takes to be successful. It’s a big ask and I don’t underestimate the internal challenges. But we have to start somewhere.