What is continuous delivery?

Delivering value continuously has become a requirement for organizations. To deliver value to your end users, you must release continually and without errors.

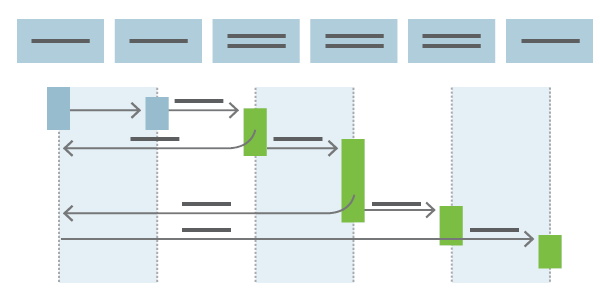

Continuous delivery (CD) is the process of automating the build, test, configuration, and deployment steps that take a build to a production environment. A release pipeline can create multiple testing or staging environments to automate infrastructure creation and deploy new builds. Successive environments support progressively longer-running integration, load, and user acceptance testing activities.

Before CD, software release cycles were a bottleneck for application and operations teams. These teams often relied on manual handoffs that resulted in issues during release cycles. Manual processes led to unreliable releases that produced delays and errors.

CD is a lean practice whose goal is to keep production fresh by providing the fastest path from new code or component availability to deployment. Automation minimizes the time to deploy and the time to mitigate (TTM) or time to remediate (TTR) production incidents. In lean terms, CD optimizes process time and eliminates idle time.

Continuous integration (CI) starts the CD process. The release pipeline promotes each build from one environment to the next only after the tests in the current environment complete successfully. The automated CD release pipeline allows a fail-fast approach to validation: the tests most likely to fail quickly run first, and longer-running tests run only after the faster ones complete successfully.
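The fail-fast ordering can be illustrated with a minimal Python sketch. It is not the API of any particular pipeline product: the `Stage` class, the `run_fail_fast` function, and the duration estimates are hypothetical names and values chosen for illustration, and ordering by expected duration is an assumed stand-in for "most likely to fail quickly."

```python
from dataclasses import dataclass
from typing import Callable, List, Optional


@dataclass
class Stage:
    name: str
    expected_minutes: float      # rough estimate, used only for ordering
    run: Callable[[], bool]      # returns True when the stage passes


def run_fail_fast(stages: List[Stage]) -> Optional[str]:
    """Run stages from quickest to slowest and stop at the first failure.

    Returns the name of the failing stage, or None if every stage passed.
    """
    for stage in sorted(stages, key=lambda s: s.expected_minutes):
        if not stage.run():
            return stage.name    # later, slower stages never start
    return None


executed: List[str] = []


def make_check(name: str, passes: bool) -> Callable[[], bool]:
    """Build a fake test stage that records that it ran (hypothetical helper)."""
    def check() -> bool:
        executed.append(name)
        return passes
    return check


stages = [
    Stage("load", 45, make_check("load", True)),
    Stage("unit", 2, make_check("unit", True)),
    Stage("integration", 15, make_check("integration", False)),
]

failed = run_fail_fast(stages)
print(failed, executed)
```

In this sketch the 45-minute load stage never starts, because the quicker integration stage fails first.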

The complementary practices of infrastructure as code (IaC) and monitoring facilitate CD.

Progressive exposure techniques

CD supports several patterns for progressive exposure, also called "controlling the blast radius." These practices limit how many users are exposed to a new deployment, so that a problem doesn't affect the overall user base.

CD can sequence multiple deployment rings for progressive exposure. Each ring tries a deployment on a user group and monitors that group's experience. The first deployment ring can be a canary to test new versions in production before a broader rollout. CD automates deployment from one ring to the next.
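As a rough sketch of ring sequencing, the following Python function deploys to one ring at a time and stops when monitoring reports a problem. The ring names and the `deploy` and `is_healthy` callbacks are assumptions made for illustration; a real pipeline would replace them with its own deployment and monitoring steps.

```python
from typing import Callable, List, Sequence


def roll_out(
    version: str,
    rings: Sequence[str],
    deploy: Callable[[str, str], None],
    is_healthy: Callable[[str, str], bool],
) -> List[str]:
    """Deploy ring by ring; return the rings that completed successfully."""
    completed: List[str] = []
    for ring in rings:
        deploy(ring, version)
        if not is_healthy(ring, version):
            break                # stop before exposing any later ring
        completed.append(ring)
    return completed


deployed: List[str] = []


def fake_deploy(ring: str, version: str) -> None:
    # Hypothetical stand-in for a real deployment step.
    deployed.append(ring)


def fake_health(ring: str, version: str) -> bool:
    # Hypothetical monitoring result: the second ring reports a problem.
    return ring != "early-adopters"


rings = ["canary", "early-adopters", "all-users"]
completed = roll_out("v2", rings, fake_deploy, fake_health)
print(completed, deployed)
```

Because the second ring is unhealthy in this example, the rollout never reaches the `all-users` ring.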

Deployment to the next ring can optionally depend on a manual approval step, where a decision maker signs off on the changes electronically. CD can create an auditable record of the approval to satisfy regulatory procedures or other control objectives.
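One way to picture an auditable approval step is a gate that records every decision before returning it. The sketch below is illustrative only: `ApprovalRecord`, `record_decision`, the field names, and the in-memory `audit_log` list are hypothetical, and a real system would write to durable, tamper-evident storage.

```python
import json
from dataclasses import asdict, dataclass
from datetime import datetime, timezone
from typing import List


@dataclass(frozen=True)
class ApprovalRecord:
    version: str
    target_ring: str
    approver: str
    approved: bool
    decided_at: str              # ISO 8601 timestamp in UTC


def record_decision(
    audit_log: List[str],
    version: str,
    target_ring: str,
    approver: str,
    approved: bool,
) -> bool:
    """Append the decision to the audit log, then return it to the pipeline."""
    record = ApprovalRecord(
        version=version,
        target_ring=target_ring,
        approver=approver,
        approved=approved,
        decided_at=datetime.now(timezone.utc).isoformat(),
    )
    audit_log.append(json.dumps(asdict(record)))
    return approved


audit_log: List[str] = []
may_proceed = record_decision(audit_log, "v2", "all-users", "release-manager", True)
print(may_proceed, audit_log[0])
```

The pipeline would continue to the next ring only when `record_decision` returns `True`, and rejections are logged the same way as approvals.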

Blue/green deployment relies on keeping an existing blue version live while a new green version deploys. This practice typically uses load balancing to direct increasing amounts of traffic to the green deployment. If monitoring discovers an incident, traffic can be rerouted to the blue deployment, which is still running.
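The traffic shift can be sketched as a loop over increasing percentages with a fallback to blue. This is a simplified model, not a load balancer configuration: `shift_traffic`, the percentage steps, and the `green_is_healthy` callback are hypothetical names chosen for illustration.

```python
from typing import Callable, List, Sequence


def shift_traffic(
    steps: Sequence[int],
    green_is_healthy: Callable[[int], bool],
) -> List[int]:
    """Return the history of the percentage of traffic sent to green.

    If monitoring reports an incident at any step, the final entry is 0,
    meaning all traffic is routed back to the blue deployment.
    """
    history: List[int] = []
    for percent in steps:
        history.append(percent)
        if not green_is_healthy(percent):
            history.append(0)    # reroute everything to blue
            break
    return history


# Hypothetical monitoring result: green fails only under full load.
rolled_back = shift_traffic((10, 50, 100), lambda percent: percent < 100)
succeeded = shift_traffic((10, 50, 100), lambda percent: True)
print(rolled_back, succeeded)
```

The rollback is cheap in this model because blue never stops running; only the routing changes.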

Feature flags or feature toggles are another technique for experimentation and dark launches. Feature flags turn features on or off for different user groups based on identity and group membership.
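A minimal sketch of a flag check based on identity and group membership might look like the following. The `User` and `FeatureFlag` classes, the flag name, and the user and group names are hypothetical; feature flag services typically offer richer targeting rules than this.

```python
from dataclasses import dataclass
from typing import FrozenSet


@dataclass(frozen=True)
class User:
    user_id: str
    groups: FrozenSet[str] = frozenset()


@dataclass(frozen=True)
class FeatureFlag:
    name: str
    enabled_users: FrozenSet[str] = frozenset()
    enabled_groups: FrozenSet[str] = frozenset()

    def is_enabled_for(self, user: User) -> bool:
        """On if the user is listed directly or belongs to an enabled group."""
        if user.user_id in self.enabled_users:
            return True
        return bool(user.groups & self.enabled_groups)


new_checkout = FeatureFlag(
    name="new-checkout",
    enabled_users=frozenset({"alice"}),
    enabled_groups=frozenset({"beta-testers"}),
)

alice = User("alice")
bob = User("bob", frozenset({"beta-testers"}))
carol = User("carol", frozenset({"sales"}))

for user in (alice, bob, carol):
    print(user.user_id, new_checkout.is_enabled_for(user))
```

Here the feature is on for `alice` by identity and for `bob` by group membership, while `carol` still sees the existing behavior, which is the basis of a dark launch.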

Modern release pipelines allow development teams to deploy new features fast and safely. CD can quickly remediate issues found in production by rolling forward with a new deployment. In this way, CD creates a continuous stream of customer value.
