Restore a database into the SQL Server big data cluster master instance

Applies to: SQL Server 2019 (15.x)

This article describes how to restore an existing database into the master instance of a SQL Server 2019 big data cluster. The recommended method is a backup, copy, and restore approach.

Important

The Microsoft SQL Server 2019 Big Data Clusters add-on will be retired. Support for SQL Server 2019 Big Data Clusters will end on February 28, 2025. All existing users of SQL Server 2019 with Software Assurance will be fully supported on the platform and the software will continue to be maintained through SQL Server cumulative updates until that time. For more information, see the announcement blog post and Big data options on the Microsoft SQL Server platform.

Back up your existing database

First, back up your existing SQL Server database from SQL Server on either Windows or Linux. Use standard backup techniques with Transact-SQL or with a tool like SQL Server Management Studio (SSMS).

This article shows how to restore the AdventureWorks database, but you can use any database backup.
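As a rough sketch of the Transact-SQL route, the helper below only composes a `BACKUP DATABASE` statement, and the trailing comment shows one way to run it through `sqlcmd`. The function name `backup_sql`, the server address, the login, and the target path are illustrative assumptions, not values taken from this article.

```shell
#!/usr/bin/env bash
# Hypothetical helper: print a BACKUP DATABASE statement for a database name
# and a target .bak path (the path as seen by the source SQL Server instance).
# WITH INIT overwrites any backup sets already stored in that file.
backup_sql() {
  local db="$1" bak="$2"
  printf "BACKUP DATABASE [%s] TO DISK = N'%s' WITH INIT;\n" "$db" "$bak"
}

# Example run (assumed server, login, and path; adjust to your environment).
# sqlcmd prompts for the password when -P is omitted:
#   sqlcmd -S localhost -U sa \
#     -Q "$(backup_sql AdventureWorks2022 /var/opt/mssql/data/AdventureWorks2022.bak)"
```

Printing the statement first makes it easy to review before it touches the source server.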

Tip

Download the AdventureWorks backup.

Copy the backup file

Copy the backup file to the SQL Server container in the master instance pod of the Kubernetes cluster.

kubectl cp <path to .bak file> master-0:/var/tmp/<.bak filename> -c mssql-server -n <name of your big data cluster>

Example:

kubectl cp ~/Downloads/AdventureWorks2022.bak master-0:/var/tmp/AdventureWorks2022.bak -c mssql-server -n clustertest

Then, verify that the backup file was copied to the pod container.

kubectl exec -it master-0 -n <name of your big data cluster> -c mssql-server -- /bin/bash
ls /var/tmp
exit

Example:

kubectl exec -it master-0 -n clustertest -c mssql-server -- /bin/bash
ls /var/tmp
exit
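If an interactive shell is more than you need, the same check can be done with a single `kubectl exec` call. The sketch below prints such a command for a given namespace and file name. The helper name `verify_cmd` is hypothetical, and it assumes the pod, container, and `/var/tmp` target used in the copy step above.

```shell
#!/usr/bin/env bash
# Hypothetical helper: print a kubectl command that lists the copied backup
# file inside the mssql-server container without opening an interactive shell.
verify_cmd() {
  local namespace="$1" bak_name="$2"
  printf 'kubectl exec master-0 -n %s -c mssql-server -- ls -l /var/tmp/%s\n' \
    "$namespace" "$bak_name"
}

# Print the command for the example cluster, then run it once it looks right:
#   verify_cmd clustertest AdventureWorks2022.bak
#   verify_cmd clustertest AdventureWorks2022.bak | sh
```

When the file is missing, `ls` exits with a nonzero status, which also makes this form convenient in scripts.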

Restore the backup file

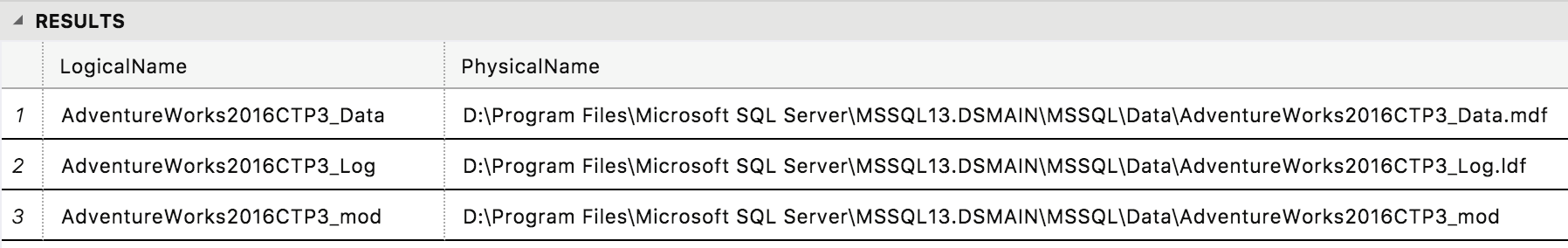

Next, restore the database backup to the SQL Server master instance. If the backup was created on Windows, you first need the logical names of the files it contains, because the restore must move each file to a Linux path. In Azure Data Studio, connect to the master instance and run this SQL script:

RESTORE FILELISTONLY FROM DISK='/tmp/<db file name>.bak'

Example:

RESTORE FILELISTONLY FROM DISK='/tmp/AdventureWorks2022.bak'

Now, restore the database. The following script is an example. Replace the logical file names and target paths to match the files listed for your own backup.

RESTORE DATABASE AdventureWorks2022
FROM DISK='/tmp/AdventureWorks2022.bak'
WITH MOVE 'AdventureWorks2022_Data' TO '/var/opt/mssql/data/AdventureWorks2022_Data.mdf',
     MOVE 'AdventureWorks2022_Log' TO '/var/opt/mssql/data/AdventureWorks2022_Log.ldf',
     MOVE 'AdventureWorks2022_mod' TO '/var/opt/mssql/data/AdventureWorks2022_mod';
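Because the `MOVE` clauses depend on the files in each backup, it can help to generate the statement rather than edit it by hand. The following is a minimal sketch, not an official tool: the helper name `restore_sql` and its `logical_name:file_name` argument format are assumptions made for illustration, and the target directory simply mirrors the example above.

```shell
#!/usr/bin/env bash
# Hypothetical helper: print a RESTORE DATABASE statement with one MOVE clause
# per "logical_name:file_name" argument. Take the logical names from the
# RESTORE FILELISTONLY output for your backup.
restore_sql() {
  local db="$1" bak="$2" data_dir="/var/opt/mssql/data"
  shift 2
  local first=1 pair
  printf "RESTORE DATABASE [%s]\nFROM DISK = N'%s'\n" "$db" "$bak"
  for pair in "$@"; do
    # Split each argument at the first colon: logical name, then file name.
    if [ "$first" -eq 1 ]; then
      printf "WITH MOVE N'%s' TO N'%s/%s'" "${pair%%:*}" "$data_dir" "${pair#*:}"
      first=0
    else
      printf ",\n     MOVE N'%s' TO N'%s/%s'" "${pair%%:*}" "$data_dir" "${pair#*:}"
    fi
  done
  printf ';\n'
}

# Example: print the statement for the AdventureWorks2022 backup.
#   restore_sql AdventureWorks2022 /tmp/AdventureWorks2022.bak \
#     AdventureWorks2022_Data:AdventureWorks2022_Data.mdf \
#     AdventureWorks2022_Log:AdventureWorks2022_Log.ldf \
#     AdventureWorks2022_mod:AdventureWorks2022_mod
```

The printed statement can be pasted into Azure Data Studio or passed to `sqlcmd` with `-Q`.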

Configure data pool and HDFS access

For the SQL Server master instance to access data pools and HDFS, the restored database needs the data pool and storage pool external data sources. Run the following Transact-SQL script against your newly restored database to create them if they don't already exist:

USE AdventureWorks2022;
GO

-- Create the SqlDataPool data source:
IF NOT EXISTS(SELECT * FROM sys.external_data_sources WHERE name = 'SqlDataPool')
    CREATE EXTERNAL DATA SOURCE SqlDataPool
    WITH (LOCATION = 'sqldatapool://controller-svc/default');

-- Create the SqlStoragePool data source:
IF NOT EXISTS(SELECT * FROM sys.external_data_sources WHERE name = 'SqlStoragePool')
    CREATE EXTERNAL DATA SOURCE SqlStoragePool
    WITH (LOCATION = 'sqlhdfs://controller-svc/default');
GO
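To confirm that both data sources now exist, you can query the same catalog view the script checks. The sketch below only prints the query. The helper name `check_sources_sql` is hypothetical, and the endpoint and login in the commented `sqlcmd` call are placeholders you would replace with your own.

```shell
#!/usr/bin/env bash
# Hypothetical helper: print a query that lists the two external data sources
# created by the setup script, if they are present in the current database.
check_sources_sql() {
  printf "SELECT name, location FROM sys.external_data_sources "
  printf "WHERE name IN (N'SqlDataPool', N'SqlStoragePool');\n"
}

# Example run against the restored database (placeholder endpoint and login):
#   sqlcmd -S <master instance endpoint> -U <login> -d AdventureWorks2022 \
#     -Q "$(check_sources_sql)"
```

Two rows in the result indicate that both data sources are in place.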

Note

You need to run these setup scripts only for databases restored from older versions of SQL Server. If you create a new database in the SQL Server master instance, the data pool and storage pool stored procedures are already configured for you.

Next steps

To learn more about the SQL Server Big Data Clusters, see the following overview:
