I've checked with some different algorithms, and the issue appears when I'm using the n-gram block to extract features. When I use feature hashing, for example, it seems to work well.
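To show how the two featurization approaches differ, here is a simplified plain-Python sketch. It is not how the Azure ML designer blocks are implemented internally, and every function name and the `dim` parameter are hypothetical. The n-gram path depends on a vocabulary fitted on training data. Feature hashing is stateless. That difference may be one reason the two blocks behave differently once they are part of a real-time inference pipeline, but this thread does not confirm it.

```python
from collections import Counter
import hashlib

# Hypothetical helpers for illustration only; not the designer's implementation.

def ngrams(text, n=2):
    """Word n-grams of length 1..n (lower-cased, whitespace-tokenized)."""
    tokens = text.lower().split()
    grams = []
    for size in range(1, n + 1):
        for i in range(len(tokens) - size + 1):
            grams.append(" ".join(tokens[i:i + size]))
    return grams

def fit_vocabulary(corpus, n=2):
    """Learn a fixed n-gram vocabulary from the training corpus (stateful step)."""
    vocab = sorted({g for doc in corpus for g in ngrams(doc, n)})
    return {g: idx for idx, g in enumerate(vocab)}

def ngram_features(text, vocab, n=2):
    """Count vector over the learned vocabulary; unseen n-grams are dropped."""
    vec = [0] * len(vocab)
    for g, count in Counter(ngrams(text, n)).items():
        if g in vocab:
            vec[vocab[g]] += count
    return vec

def hashed_features(text, n=2, dim=16):
    """Feature hashing: each n-gram's bucket comes from a hash, nothing is learned."""
    vec = [0] * dim
    for g in ngrams(text, n):
        # md5 is stable across processes (Python's built-in hash() is salted)
        bucket = int(hashlib.md5(g.encode("utf-8")).hexdigest(), 16) % dim
        vec[bucket] += 1
    return vec
```

The vocabulary is fitted state, so scoring with n-gram features presumably needs the same vocabulary the model was trained with. The hashing path has no such dependency.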

Azure ML real-time inference endpoint deployment stuck in Transitioning status

I can't use my ML real-time inference endpoint because it has been stuck in Transitioning status for more than 20 hours. Could you help me with that?


-

April Frommer 1 Reputation point

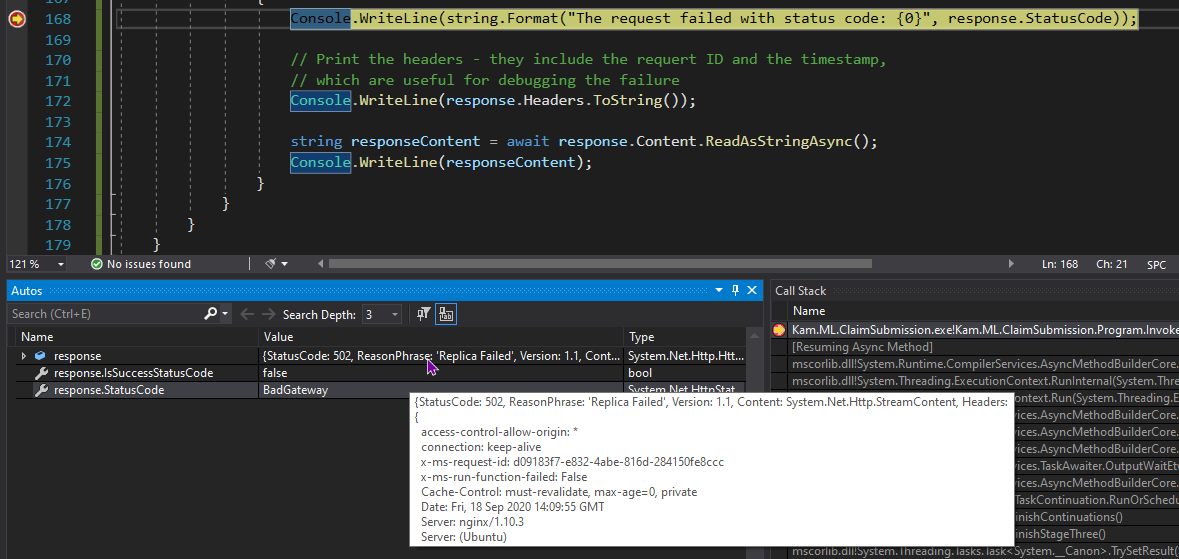

2020-09-18T14:17:54.91+00:00

I was also able to get a Healthy status after republishing my endpoint. However, I am also receiving the Bad Gateway message.

Here's a screenshot from my console app where I am receiving the error.

-

Mateusz Orzymkowski 96 Reputation points

2020-09-18T10:44:58.407+00:00

Unfortunately, retrying doesn't help.

Bearer token authentication is enabled.

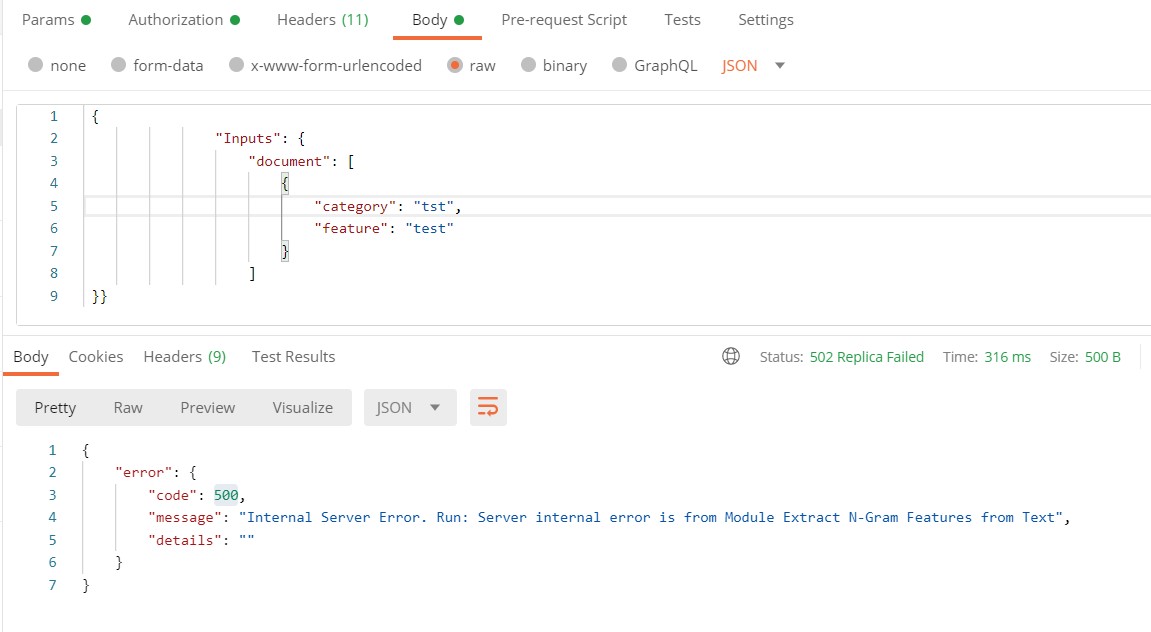

The error from Postman depends on the request body. First example:

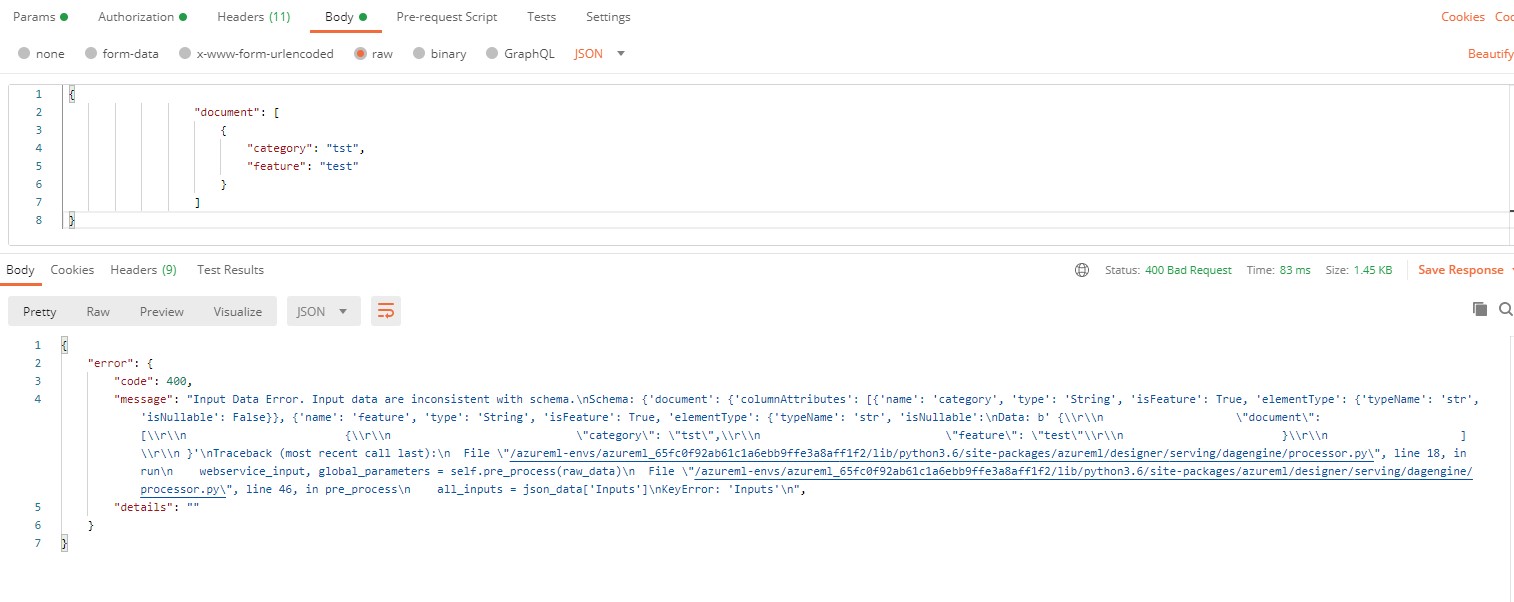

Second example:

In the second case it looks like I've made a mistake in the request body, but the first response looks like a typical server-side error. Or maybe I've made a mistake in the body in both cases? Could you help me with that?

Thank you in advance!
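One way to separate a malformed body from a server-side failure is to build the request outside Postman and look at the status code. The sketch below uses only the standard library. `SCORING_URI`, `API_KEY`, `build_scoring_request` and `send` are hypothetical names, and the URL is a placeholder. The `{"Inputs": {...}, "GlobalParameters": {}}` layout and the `WebServiceInput0` input name are assumptions based on a default designer inference pipeline. Check them against the sample request shown for your endpoint.

```python
import json
import urllib.error
import urllib.request

# Hypothetical placeholders: use the real REST endpoint and key of your service.
SCORING_URI = "http://<your-endpoint>/api/v1/service/<service-name>/score"
API_KEY = "<primary-key>"

def build_scoring_request(rows, uri=SCORING_URI, key=API_KEY,
                          input_name="WebServiceInput0"):
    """Build (but do not send) a POST request with bearer token auth.

    The body layout and default input name are assumptions; compare them
    with the sample request of your own endpoint.
    """
    body = {"Inputs": {input_name: rows}, "GlobalParameters": {}}
    data = json.dumps(body).encode("utf-8")
    headers = {
        "Content-Type": "application/json",
        "Authorization": "Bearer " + key,  # bearer token auth is enabled
    }
    return urllib.request.Request(uri, data=data, headers=headers, method="POST")

def send(request, timeout=60):
    """Send the request and return (status, parsed JSON or raw error text)."""
    try:
        with urllib.request.urlopen(request, timeout=timeout) as resp:
            return resp.status, json.loads(resp.read().decode("utf-8"))
    except urllib.error.HTTPError as err:
        # A 4xx usually points at the request (body, key); a 5xx such as
        # 502 Bad Gateway usually points at something behind the endpoint.
        return err.code, err.read().decode("utf-8", errors="replace")
```

Each element of `rows` is assumed to be a dict mapping the pipeline's input column names to values, for example `[{"text": "..."}]` if the input dataset has a single `text` column.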

-

Chifeng Cai 81 Reputation points

2020-09-18T10:30:21.113+00:00

Good to know the deployment succeeded. For the endpoint testing issue:

- Does retrying help?

- What's the error response code in Postman? Is any authentication enabled for this service?
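Here is a minimal sketch of a retry check with exponential backoff. `call_with_retry` and `RETRYABLE` are hypothetical names. `call` stands for any zero-argument function that sends one request and returns `(status, body)`. Treating only 502/503/504 as transient is an assumption. A 4xx such as 400 is returned immediately, because resending the same malformed body will not change the result.

```python
import time

# Status codes treated as transient (an assumption; adjust as needed).
RETRYABLE = {502, 503, 504}

def call_with_retry(call, attempts=4, base_delay=1.0, sleep=time.sleep):
    """Call `call()` -> (status, body), retrying transient 5xx responses.

    Waits base_delay, 2*base_delay, 4*base_delay, ... between attempts
    (exponential backoff) and returns the last (status, body) pair.
    """
    if attempts < 1:
        raise ValueError("attempts must be at least 1")
    for attempt in range(attempts):
        status, body = call()
        # Stop on success, on a non-transient error, or on the last attempt.
        if status not in RETRYABLE or attempt == attempts - 1:
            return status, body
        sleep(base_delay * (2 ** attempt))
```

If every attempt still returns 502, the problem probably lies with the deployed service rather than with a transient network hiccup.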

-

Mateusz Orzymkowski 96 Reputation points

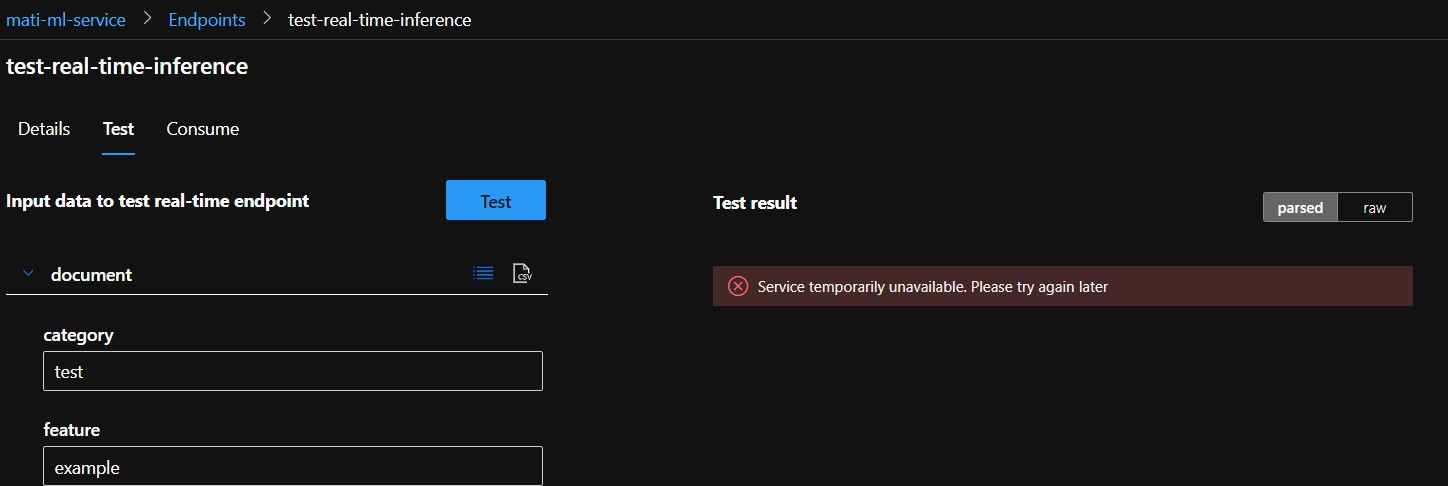

2020-09-18T08:24:21.877+00:00

Hello ChifengCai-4095, thank you for your answer. Yes, now I can finish my deployment process and it seems to be working, but I think I found one more issue. When I try to test this endpoint from the Test tab or with a Postman request, I get a new error.

If you need more info, or the stack trace from the JSON response, I can prepare that.

One more question: should I create a new topic for this issue, or can it be continued in this one? Thank you all for the quick answers.