Windows Azure autoscaling now built-in

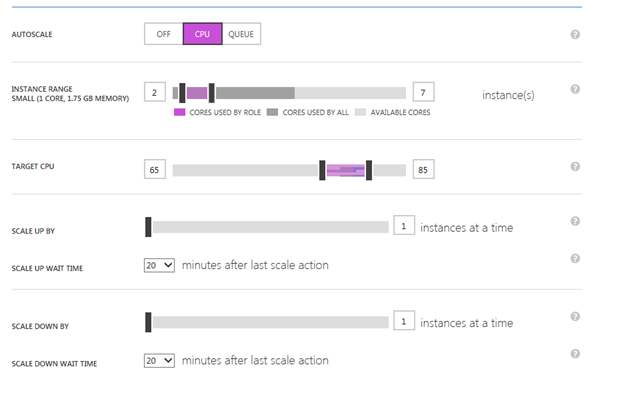

One of the key benefits of the Windows Azure platform is the ability to rapidly scale your application in the cloud in response to fluctuations in demand. Until now, you had to write custom scripts or use other tools such as Wasabi or MetricsHub to enable that. Last week at //Build, Scott Guthrie announced that autoscaling is now available natively on the platform (also summarized in this post). This means that in most common scenarios you no longer need to host Wasabi yourself. Scaling your apps is now much easier: you configure the rules right within the Windows Azure portal, on the Scale tab for your cloud services or VMs. The screenshot at the top of this post shows the knobs for configuring autoscaling based on CPU utilization.

It’s still in preview and supports only basic metrics (CPU utilization and Azure queue length). Nevertheless, Windows Azure autoscaling addresses the needs of the majority of Azure customers. It is simple and elegant; in fact, it’s so intuitive that you don’t need a tutorial for it. We recommend you consider it first before looking at any other options or tools.
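Behind the portal knobs, a CPU-based rule comes down to a simple decision repeated on each evaluation cycle: compare average CPU against a target range, add or remove instances within configured bounds, and wait out a cool-down period after any scaling action. The Python sketch below is a simplified illustration under those assumptions only; the `CpuRule` fields and the `decide` function are hypothetical names, not part of any Windows Azure API, and the real service's evaluation logic (averaging window, timing) may differ.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class CpuRule:
    """Hypothetical CPU-based autoscaling rule (illustrative names, not an Azure API)."""
    min_instances: int       # never scale below this many instances
    max_instances: int       # never scale above this many instances
    cpu_low: float           # scale down when average CPU (%) falls below this
    cpu_high: float          # scale up when average CPU (%) rises above this
    step: int                # instances added or removed per scaling action
    cooldown_minutes: float  # wait after any scaling action before acting again


def decide(rule: CpuRule, current: int, avg_cpu: float,
           minutes_since_last_action: Optional[float]) -> int:
    """Return the target instance count for one (hypothetical) evaluation cycle."""
    # Respect the cool-down period after the previous scaling action.
    if (minutes_since_last_action is not None
            and minutes_since_last_action < rule.cooldown_minutes):
        return current
    # Above the target range: scale up, capped at the maximum.
    if avg_cpu > rule.cpu_high:
        return min(current + rule.step, rule.max_instances)
    # Below the target range: scale down, never below the minimum.
    if avg_cpu < rule.cpu_low:
        return max(current - rule.step, rule.min_instances)
    # Within the target range: leave the instance count alone.
    return current


if __name__ == "__main__":
    rule = CpuRule(min_instances=2, max_instances=10, cpu_low=60.0,
                   cpu_high=80.0, step=1, cooldown_minutes=5)
    print(decide(rule, current=3, avg_cpu=92.0, minutes_since_last_action=None))  # 4
    print(decide(rule, current=3, avg_cpu=92.0, minutes_since_last_action=2))     # 3 (cooling down)
```

The cool-down check comes first so that a burst of high readings right after a scale-up does not trigger a second action before the new instance has had a chance to absorb load.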

If your scenarios require more advanced features (such as other perf counters, time-based constraints, composite rules, growth rates, custom metrics or actions), Wasabi may still be a good choice in the meantime. Many of these features are on the backlog of the Windows Azure autoscaling team and will be added to future releases of the platform in due course. As for Wasabi, there are no plans for future releases. This is aligned with p&p’s deprecation philosophy, which you can read more about here.

To help you understand the differences between the current version of the built-in autoscaling feature and Wasabi, see the table below:

| Feature | Windows Azure Autoscale as of 6/26/2013 | Wasabi as of 6/26/2013 |
|---|---|---|
| Integrated into the Windows Azure portal | Yes | No |
| Metrics supported | CPU and queue length | CPU, queue length, other Windows perf counters, and current instance count |
| Ease of setup | Extremely easy (built-in service in the platform and the portal) | Medium (requires hosting a component) |
| Requires dedicated storage for data points | No (and that’s a good thing!) | Yes (Azure blob storage, local file storage, or custom) |
| Ease of configuration | Extremely easy | Medium (requires configuring storage account credentials and management certificates) |
| Impact on the target app | None | Relevant perf counters must be enabled for collection in Windows Azure Diagnostics (WAD) |
| Supports web sites | Yes | No |
| Supports cloud services (web/worker roles) | Yes | Yes |
| Supports VM roles | Yes | Untested |
| Custom metrics | No, an API is planned | Yes |
| Custom actions | No | Yes |
| Cool-down period support | Yes | Yes\* |
| Timetable-based scaling | No, but planned | Yes |
| Composite rules | No, currently under consideration | Yes (expressions, nesting and aggregate functions supported) |
| Scale groups | No | |
| History of scaling decisions with reasons | Yes | |
| Application throttling | No | |
| Upgradeability | Automatic | Manual |
| Release | Preview | RTW |

\* Wasabi has two knobs: one enables cool-down periods after any scaling action, and the other optimizes costs around the hourly billing boundaries. Now that Windows Azure supports more granular billing, it’s recommended not to use Wasabi’s optimizing stabilizer.
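The reasoning behind that recommendation is simple arithmetic. The sketch below compares the instance-hours charged for one extra instance under hourly billing (any partial hour rounded up to a full hour) and under finer-grained billing. Per-minute granularity and the function names are assumptions made for illustration, not a statement of Windows Azure's published pricing rules.

```python
import math


def billed_hours_hourly(minutes_running: int) -> int:
    # Hourly billing: any partial hour is charged as a full hour.
    return math.ceil(minutes_running / 60)


def billed_hours_per_minute(minutes_running: int) -> float:
    # Assumed per-minute billing: pay only for the minutes actually used.
    return minutes_running / 60


if __name__ == "__main__":
    # An extra instance removed after a 15-minute burst vs. one kept for 55 minutes.
    for minutes in (15, 55):
        print(minutes, billed_hours_hourly(minutes),
              round(billed_hours_per_minute(minutes), 2))
```

Under hourly billing, an instance kept for 55 minutes costs the same as one kept for 15, so timing scale-down actions near the hourly boundary came for free. Under finer-grained billing, keeping the instance longer costs more, so holding it until the boundary no longer saves money.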

During the keynote, Scott Guthrie gave the example of Skype, one of the largest Internet services in the world. Like most apps, Skype sees fluctuations in load, which result in unused capacity during off-peak times. By moving to Windows Azure and using autoscaling, Skype will realize more than 40% in cost savings (compared with running its own data centers or running without autoscaling).

Other autoscaling case studies can be found here.

Regardless of whether you choose the new autoscaling feature (which you should!) or Wasabi, your application still needs to be designed for elasticity. For guidance, review most of the concepts in the Wasabi docs, the Developing Multi-tenant Applications for the Cloud guide (3rd edition), and the CQRS Journey guide.