The SQL Swiss Army Knife #6 - Evaluating compression gains

Download code here: view_CompressionGains.sql

Hello all,

Here is another one focusing on SQL scripts that may help DBAs, following the series "SQL Swiss Army Knife". This time we are evaluating table and index compression, specifically an approach on how to select the best compression method for a database object.

There is a great deal of good documentation and blog posts about compression, so I won’t get into any concepts. On a 10000ft view, this feature was introduced with SQL Server 2008 and enables data compression within a database (on heaps, indexes, indexed views and partitioned objects) thus reducing its size. Apart from this gain, as a expected but nice side-effect, I/O is also optimized, because compressed data takes fewer data pages to store, and reads from disk are ultimately “faster”.

This feature also takes additional CPU resources while compressing and decompressing data, so it is important to know the workload on the specific database/instance/host combo when deciding how and what to compress.

Bearing this in mind, it is highly recommended that you start by reading about table and index compression here and the related whitepaper here before moving on with any choice. The whitepaper I just mentioned is the basis for the choice algorithm I use in this script.

Also, there is a caveat with using this script, because table and index compression (and even the system SP sp_estimate_data_compression_savings that this script wraps around) is an Enterprise only feature, and outputs the savings that can occur when you enable a table or partition for row or page compression. Furthermore, this script is all the more trustworthy as instance uptime increases (or full business cycles go through it), because it uses sys.dm_db_index_operational_stats to assert read and write ratio. Keep in mind that it is meant to provide mere guidance on the best compression method for a given object, providing enough memory and CPU resources are available.

Note that this script will execute on the context of the current database. Also be aware that this may take awhile to execute on large objects, because if the IS locks taken by the sp_estimate_data_compression_savings cannot be honored, the SP will be blocked.

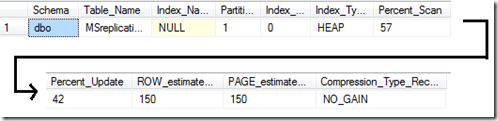

The output will resemble this:

There is a column [Percent_Update] which is the percentage of update operations on a specific table, index, or partition, relative to total operations on that object. The lower the percentage of Updates (that is, the table, index, or partition is infrequently updated), the better candidate it is for page compression.

Another column is [Percent_Scan] which is the percentage of scan operations on a table, index, or partition, relative to total operations on that object. The higher the value of Scan (that is, the table, index, or partition is mostly scanned), the better candidate it is for page compression.

And yet another column is [Compression_Type_Recommendation] that can have four possible outputs indicating where there is most gain, if any: ‘PAGE’, ‘ROW’, ‘NO_GAIN’ or ‘?’. When the output is ‘?’ this approach could not give a recommendation, so as a rule of thumb I would lean to ROW if the object suffers mainly UPDATES, or PAGE if mainly INSERTS, but this is where knowing your workload is essential. When the output is ‘NO_GAIN’ well, that means that according to sp_estimate_data_compression_savings no space gains will be attained when compressing, as in the above output example, where compressing would grow the affected object.

Hope you find it useful.

EDIT (03-12-2013): Fixed divide by zero error

EDIT (11/03/2016): Moved to GitHub

Until next time!

Disclaimer: I hope that the information on these pages is valuable to you. Your use of the information contained in these pages, however, is at your sole risk. All information on these pages is provided "as -is", without any warranty, whether express or implied, of its accuracy, completeness, fitness for a particular purpose, title or non-infringement, and none of the third-party products or information mentioned in the work are authored, recommended, supported or guaranteed by Ezequiel. Further, Ezequiel shall not be liable for any damages you may sustain by using this information, whether direct, indirect, special, incidental or consequential, even if it has been advised of the possibility of such damages.