Hyper-V: Host Configuration

Hello Folks,

Last week I wrote about the fact that we have been getting more and more questions regarding Hyper-V. Maybe it's the fact that we keep moving in the “leader” quadrant of the Gartner Magic Quadrant for x86 Server Virtualization Infrastructure. Or maybe it's just because IT professionals are finally realizing that Hyper-V is definitely worth a try.

Hyper-V is included with Windows Server 2012 R2, and it’s also available as a free download as Hyper-V Server 2012 R2. I highly encourage you to download the evaluation version and take it for a test drive.

You can also check out the Microsoft Virtual Academy modules on the subject. It’s a great way to learn at your own pace.

- Windows Server 2012 Jump Start: Preparing for the Datacenter Evolution

- Introduction to Hyper-V Jump Start

- Windows Server 2012 R2 Virtualization

- Server Virtualization with Windows Server Hyper-V and System Center

But for now, let's look at the host configuration options for Hyper-V.

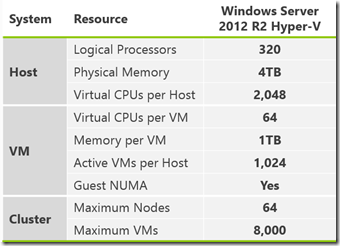

For the physical hosts, we now support:

- Up to 320 logical processors

- Up to 4 TB of physical memory per host

- Up to 1,024 virtual machines per host

When clustered together, Hyper-V 2012 R2 supports up to 64 physical nodes and 8,000 virtual machines per cluster. As for the virtual machines themselves, we now support up to 64 virtual processors and 1 TB of memory per VM, and we support in-guest NUMA.
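If you are sizing a lab, it can help to check your planned numbers against the maximums quoted above. Here is a minimal Python sketch of that check. The dictionary keys and the `over_limit` helper are names made up for this illustration, not part of any Hyper-V or VMM API; only the numeric limits come from this post.

```python
# Maximums quoted in this post for Hyper-V in Windows Server 2012 R2.
# The key names are hypothetical labels chosen for this sketch.
HOST_LIMITS = {"logical_processors": 320, "memory_tb": 4, "virtual_machines": 1024}
CLUSTER_LIMITS = {"nodes": 64, "virtual_machines": 8000}
VM_LIMITS = {"virtual_processors": 64, "memory_tb": 1}


def over_limit(planned, limits):
    """Return the names of planned values that exceed the given limits."""
    return [name for name, value in planned.items()
            if name in limits and value > limits[name]]


# Example: a VM planned with 96 virtual processors and 0.5 TB of memory.
planned_vm = {"virtual_processors": 96, "memory_tb": 0.5}
print(over_limit(planned_vm, VM_LIMITS))
```

An empty list means the plan fits within the quoted maximums. Anything returned is a setting to revisit before you build the host, cluster, or VM.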

So how can I deploy Hyper-V?

Well, pretty much in the same ways you can deploy other virtualization platforms, with some subtle differences. You can download the ISO file from the web, burn it to a DVD (or attach it to a virtual CD in an iLO-type approach), and boot the machine, installing in the way many are familiar with. The installation takes around 20 minutes end to end, depending on your hardware, and may be much faster on more modern hardware. Or, you could extract those same files from the ISO, place them on a bootable USB drive, and boot from there, installing Windows Server and Hyper-V onto the local disk.

Or, you could deploy Hyper-V over the network, which would be similar to the approach taken by VMware. From a Hyper-V perspective, this network deployment could be done with the core, inbox Windows Deployment Services, or enhanced with VMM. However, this last option requires a baseboard management controller (BMC) with a supported out-of-band management protocol. VMM supports the following out-of-band management protocols:

- Intelligent Platform Management Interface (IPMI) versions 1.5 or 2.0

- Data Center Management Interface (DCMI) version 1.0

- System Management Architecture for Server Hardware (SMASH) version 1.0 over WS-Management (WS-Man)

- Custom protocols such as Integrated Lights-Out (iLO).
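To make the decision above concrete, here is a small Python sketch that lists which deployment routes are open to a host, based on the BMC requirement just described. The function name, the option labels, and the lookup table layout are assumptions made for this example. Custom protocols such as iLO are deliberately left out of the table, because the post gives no version information for them.

```python
# Out-of-band protocols and versions listed above as supported by VMM.
# Custom protocols (for example iLO) are not modeled in this sketch.
SUPPORTED_OOB = {
    "IPMI": {"1.5", "2.0"},
    "DCMI": {"1.0"},
    "SMASH": {"1.0"},
}


def deployment_options(bmc_protocol=None, bmc_version=None):
    """List the deployment routes from this post that a host could use."""
    # These routes do not depend on a BMC.
    options = ["DVD or virtual CD", "bootable USB", "Windows Deployment Services"]
    # The VMM route needs a BMC speaking a supported protocol and version.
    if bmc_protocol is not None:
        versions = SUPPORTED_OOB.get(bmc_protocol.upper(), set())
        if bmc_version in versions:
            options.append("network deployment with VMM")
    return options


print(deployment_options("IPMI", "2.0"))
print(deployment_options())
```

A host without a BMC still has three routes available, which is why the USB option is a handy fallback for a small lab.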

For our lab, we deployed our Hyper-V servers using the USB boot option.

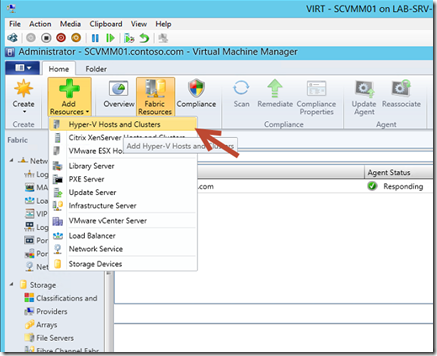

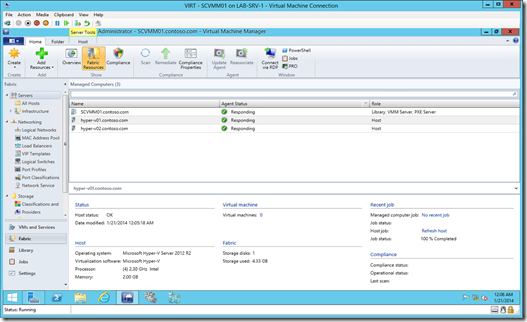

Virtual Machine Manager 2012 R2 provides complete, centralized hardware configuration for Hyper-V hosts. It allows us to configure local storage, networking, virtual switches, BMC settings, migration settings (such as the live migration network and the number of simultaneous migrations), and more. So we will add our two Hyper-V hosts to SCVMM so we can manage their configuration.

1- In SCVMM, in the Fabric section, click Add Resources, and select Hyper-V Hosts and Clusters.

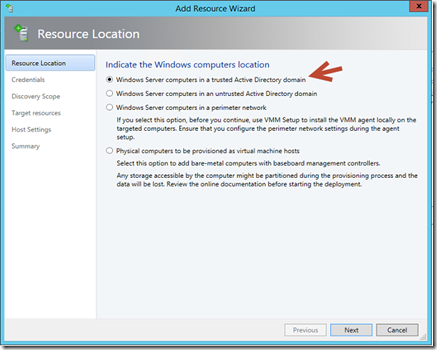

2- Since both our Hyper-V servers are domain members, we will pick Windows Server Computers in a trusted Active Directory domain.

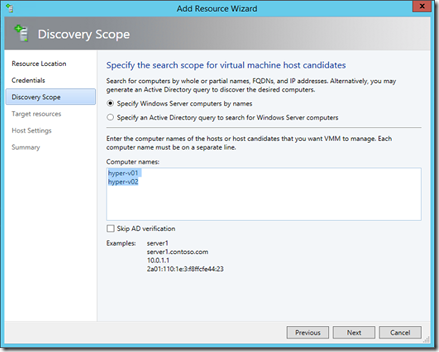

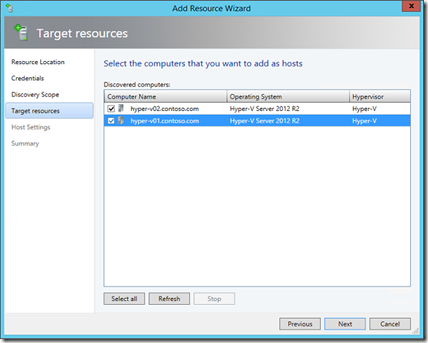

3- After selecting our “Run As Account”, we specify which hosts we want to include.

4- Confirm the selection, and click Next.

5- Click Next on the Host Settings page, and then click Finish. (Both servers might need to be rebooted in order to allow the SCVMM agents to be activated.)

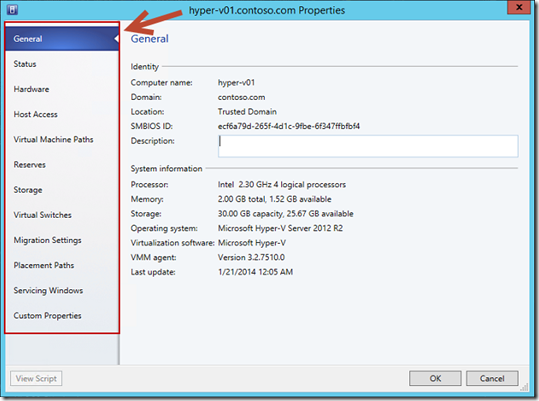

Now that we have deployed our hosts, what kind of configuration changes and controls can we apply? Well, let's have a look at some of the key configuration options. If we look at the properties of HYPER-V01, we can configure the general hardware settings for local storage, networks, and the baseboard management controller, along with CPU and memory settings.

In the storage section, the admin can control granular storage settings, such as adding an iSCSI or FC array LUN to the host, or an SMB share.

In the Virtual Switches tab, you’ll see a list of the virtual switches (like the ones we talked about earlier in the course) and their bindings to the underlying physical network cards. We can create new standard switches, or logical switches, which we’ll talk about later, from this interface.

The Migration Settings tab allows the administrator to define which network is used for live migration, the number of simultaneous live migrations, and the authentication protocol.

In our next Hyper-V post, we will cover setting up the storage.

Until then, get the evals and set up your lab.

We are just getting started!

Cheers!

Pierre Roman | Technology Evangelist
