Conor vs. Extended Events

I have been playing with the XEvent feature in SQL 2012 (RC0) and there are a few gotchas that I hit. I figured I’d post them up here so that others could learn from my experiences.

I was trying to do something pretty simple – get the % Processor Used computation from the performance counters. SQL Server exposes these through an XEvent, so I thought I’d get that data through the event.

1: CREATE EVENT SESSION test_session

2: ON SERVER

3: ADD EVENT sqlserver.perfobject_processor (WHERE instance_name = '_Total')

4: ADD TARGET package0.asynchronous_file_target( SET filename='c:\temp\file_name2.xel', metadatafile='c:\temp\file_name2.xem')

5: GO

6: alter event session test_session

7: on server

8: state = start

9: GO

10: -- do some things that consume CPU that you wish to measure

11: alter event session test_session

12: on server

13: state = stop

14: GO

15: drop event session test_session on server

16: GO

The key parts to note here are:

1. On line 3, I added a filter to only get the _Total counter from the event stream – otherwise you get more data for each NUMA node + processor independently. I have only aspirations for the basics, so I filtered out what I did not need to keep the event size smaller.

2. Events get captured only after you do the command on lines 6-8. The session is initially “paused”.

3. The eventing mechanism will create files that start with the file prefix (file_name2). In my simple examples, only one file was created to capture events.

4. there can be a delay getting the events to the file – if you are trying to read events in real-time (while the session is active), then be aware that the async target is really async ![]() .

.

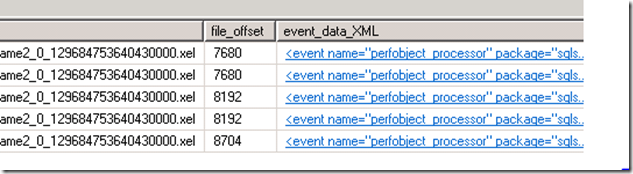

So I did this and captured some events. Then I tried to read the data which is where I got lost in the weed for a bit (and where I thought it would be good to write up what I learned for everyone). So here is what I first saw when I started trying to read the event stream:

1: SELECT *, CAST(event_data AS XML) AS 'event_data_XML'

2: FROM sys.fn_xe_file_target_read_file('c:\temp\file_name2*.xel', 'c:\temp\file_name2*.xem', NULL, NULL)

Well, things are stuck inside of some XML CLOBs that were fabricated over the file. Let’s see if we can crack those open.

1: <event name="perfobject_processor" package="sqlserver" timestamp="2011-12-16T02:16:08.271Z">

2: <data name="percent_c1_time">

3: <value>13621740000000</value>

4: </data>

5: <data name="percent_c2_time">

6: <value>0</value>

7: </data>

8: <data name="percent_c3_time">

9: <value>0</value>

10: </data>

11: <data name="percent_dpc_time">

12: <value>14304784695</value>

13: </data>

14: <data name="percent_idle_time">

15: <value>13725218712606</value>

16: </data>

17: <data name="percent_interrupt_time">

18: <value>2452374718</value>

19: </data>

20: <data name="percent_maximum_frequency">

21: <value>0</value>

22: </data>

23: <data name="percent_priority_time">

24: <value>13737850815579</value>

25: </data>

26: <data name="percent_privileged_time">

27: <value>131372654125</value>

28: </data>

29: <data name="percent_processor_time">

30: <value>13725218712606</value>

31: </data>

(more of the same – shorted for space).

We want the percent_processor_time field. Interestingly, it does not look like a percentage ![]() . So I thought I’d double-check the descriptions for the events:

. So I thought I’d double-check the descriptions for the events:

1: select description from sys.dm_xe_objects where name like '%processor%'

“Returns a set of counters associated with the Processor performance object. The event occurs once every 15 seconds for each processor in the system.”

This means we’ll have fewer events than the normal perf counter and they will be fired every 15seconds instead of every second – fine.

1: select description from sys.dm_xe_object_columns where object_name like '%processor%' and name = 'percent_processor_time'

“Shows the percentage of time that the processor is executing application or operating system processes other than Idle.”

Hrm. Well, this seems off. We are probably seeing the raw perf counter instead of the actual percentage. (Conor is off talking to the team that owns this to get the description fixed).

So, assuming it is the raw counter, how do we get something useful out of this?

After much Binging, I found a place where the computation is explained: Using Raw Perf Counter Performance Data

A few key tidbits:

1. PERF_100NSEC_TIMER_INV - The counter is 100 nanosecond ticks – great!

2. The key inputs the calculations are:

N1 = objInstance1.PercentProcessorTime

D1 = objInstance1.TimeStamp_Sys100NS

3. The formula is (1 - ((N2 - N1)/(D2-D1)))*100, meaning that we take the counter difference from two samples and then we divide by the difference in the timestamp counter (100ns granularity).

So now I can try to create the same computation in SQL. There were a few gotchas, however:

1. The datediff function returns an int (32 signed bits), and using datediff with nanosecond precision overflows. So I used microseconds and multiplied by 10 to get to 100ns ticks

2. Furthermore, the event data does not contain the raw timestamp value – it just has the timestamp in millisecond precision as a datetime2. (So that’s something I’ll talk to the team about)

3. We get a chance to use our new windowing functions in SQL 2012 to do the stream computation from one sample to the next. I used the lag function to get that to work.

Here’s the end result. I included a few of the component parts of the function as extra columns so that you can validate that it is working yourself ![]() :

:

-- this query mostly computes % Processor Time from the XEvent raw counter

select d2 as timestamp, N2-N1, datediff(mcs,D1,D2)*10, 1.0-((N2-N1)/(10.0*datediff(mcs,D1,D2))) as real_percent_processor_time from

(

select percent_processor_time as N2,

lag(percent_processor_time, 1) over(order by timestamp) as N1,

timestamp as D2,

lag(timestamp, 1) over(order by timestamp) as D1

FROM

(

SELECT

n.value('(@name)[1]', 'varchar(50)') AS event_name,

n.value('(@package)[1]', 'varchar(50)') AS package_name,

DATEADD(hh, DATEDIFF(hh, GETUTCDATE(), CURRENT_TIMESTAMP),

n.value('(@timestamp)[1]', 'datetime2')) AS [timestamp],

n.value('(data[@name="percent_processor_time"]/value)[1]', 'bigint') as percent_processor_time

FROM

(SELECT

CAST(event_data AS XML) AS event_data

FROM sys.fn_xe_file_target_read_file('c:\temp\file_name2*.xel', 'c:\temp\file_name2*.xem', NULL, NULL)

) as tab

CROSS APPLY event_data.nodes('event') as q(n)) as A

) as A

order by d2

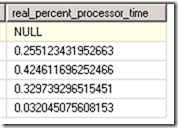

Sample output included here for your reference – there is no value for the first row since it doesn’t have a prior data point to run the formula.

While I’ll be working on the rough edges with the team, I hope this helps speed up your application development!

Happy Querying!

Conor Cunningham