Fill Factor and Data Compression

Another post by our excellent US PFE Susan Van Eyck goes though Fill Factor and Data Compression!

I got a pair of interesting questions from a colleague this week:

- If you’re using fill factor then enable data compression, is the fill factor still enforced?

- If you aren’t using fill factor and enable data compression does it create free space on the original pages?

The answer to #2 is “No” because to enable compression (row or page) you rebuild an index, and that involves writing the data out to a new set of data (or index) pages then dropping the original index. Note the page numbers in the illustration below (bonus points if you can explain the funky numbering in the original index). Since this index was rebuilt without a fill factor, each page was filled to capacity. Only the last page might have room for more data (represented here by the lighter colors):

As for question #1, I did a bit of research and found a helpful blog post from Sunil Agarwal (one of the SQL Server Program Managers) confirming that the 2 features are compatible. I wrote a demo script based on Sunil’s, and thought it would be interesting to share with you – along with a few notes about data compression.

To start, we’ll create a table in tempdb then fill it with a lot of repetitive data:

USE tempdb;

CREATE TABLE dbo.BogusData (

BogusDataId INT IDENTITY,

WideColumn CHAR(100) DEFAULT REPLICATE( 'A', 50 )

);

INSERT INTO dbo.BogusData ( WideColumn ) DEFAULT VALUES;

GO 10000

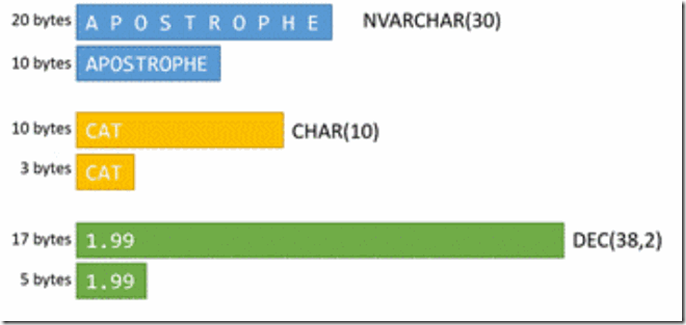

That CHAR(100) column is a good target for Row Compression since we’re using only half the reserved space with strings 50 characters in length. You can think of Row Compression as smooshing out leftover space from columns. Here are some examples of where it will be useful:

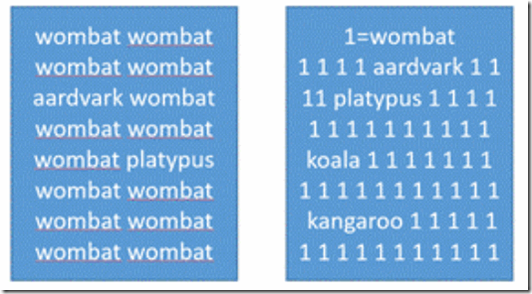

And all that repetitive data is a good target for Page Compression which stores 1 copy of a repeated column values in the page header then substitutes a smaller placeholder in the body of the page (dictionary compression). My index is bit wonky, and the compression process is a bit more involved, but you get the idea – a lot more wombats per page read.

The impact of data compression will depend greatly on both your data types and your data. For instance, a CHAR(10) column that always contains 10 characters won’t benefit from row-compression. Neither will a VARCHAR(n) column doesn’t have extra space to eliminate. A unique index on a column like SocialSecurityNumber won’t benefit from either row or page compression (assuming you’ve chosen the data type and width wisely). And repetitive data can only page-compressed if the repeated values occur on the same data page. Imagine an index on EmployeeId that includes LastName. If there are 2000 Smiths are scattered across 10,000 data pages, dictionary compression won’t be of much use. Onto our demonstration!

In the demos below we’ll start by adding a clustered index to our table, then we’ll look at the impact of adding a fill factor, row compression and finally page compression (which includes row compression). We’ll check the index’s size and space usage after each step (although the query to get those values is only printed once). Let’s see what happens to those values across our 4 test cases.

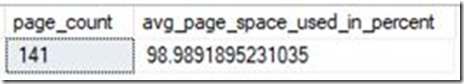

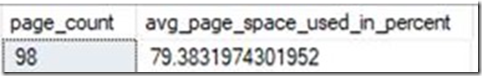

- Add a Clustered Index

CREATE CLUSTERED INDEX ci_BogusDataId ON dbo.BogusData ( BogusDataId );

SELECT page_count, avg_page_space_used_in_percent

FROM sys.dm_db_index_physical_stats( DB_ID(), OBJECT_ID( 'dbo.BogusData' ), NULL, NULL, 'DETAILED' )

WHERE index_level = 0;

Note that our data pages are packed full. This helps keep the cost of I/O down since we get more data rows per page read.

[Note: We’re using DETAILED mode in the query to return the avg_page_space_used_in_percent value, but this requires that all the index’s data pages be read. Be very cautious using this mode in a production environment. ]

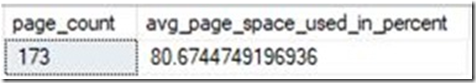

- Add a Fill Factor

ALTER INDEX ci_BogusDataId ON dbo.BogusData REBUILD WITH ( FILLFACTOR = 80 );

We’ve now got about 20% free space on each data page – good for delaying page splits, but we’ve also made the index 20% larger which will negatively impact queries involving index scans as there are now fewer row per page. Keep this trade-off in mind when implementing fill factors.

- Add Row Compression (the specified fill factor will persist through the rebuild)

ALTER INDEX ci_BogusDataId ON dbo.BogusData REBUILD WITH ( DATA_COMPRESSION = ROW );

This is a move in the right direction! We’ve still got free space for new data, and “smooshing” out the empty spaces has dropped the index size by more than 40%. We might have expected a 50% drop since we’re only using half of the column’s 100 character width, but some page space is now is being used to store information about the uncompressed state of the column.

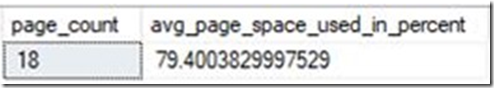

- Add Page Compression (includes Row Compression which will be applied first)

ALTER INDEX ci_BogusDataId ON dbo.BogusData REBUILD WITH ( DATA_COMPRESSION = PAGE );

Once again, the fill factor persists, and due to the very bogus, super-repetitive nature of our data the index is down to a mere 18 pages! Note that you’re unlikely to see such amazing gains with real-world data.

Remember to drop the demo table when you’re done:

DROP TABLE dbo.BogusData;

If you’re thinking about using either fill factor or data compression, here’s some additional reading that covers some of the factors you’ll want to take into consideration:

Specify Fill Factor for an Index Data Compression

And lastly, I’ve attached a script that has the full set of demo scripts to save you a little copy/paste effort!

20180404_FillFactorAndDataCompression

Happy Exploring!