Standards-Based Interoperability

There has been quite a bit of discussion lately in the blogosphere about various approaches to document format interoperability. It’s great to see all of the interest in this topic, and in this post I’d like to outline how we look at interoperability and standards on the Office team. Our approach is based on a few simple concepts:

- Interoperability is best enabled by a multi-pronged approach based on open standards, proactive maintenance of standards, transparency of implementation, and a collaborative approach to interoperability testing.

- Standards conformance is an important starting point, because when implementations deviate from the standard they erode its long-term value.

- Once implementers agree on the need for conformance to the standard, interoperability can be improved through supporting activities such as shared stewardship of the standard, community engagement, transparency, and collaborative testing.

It’s easy to get bogged down in the details when you start thinking through interoperability issues, so for this post I’m going to focus on a few simple diagrams that illustrate the basics of interoperability. (These diagrams were inspired by a recent blog post by Wouter Van Vugt.)

Interoperability without Standards

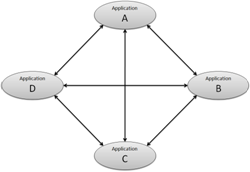

First, let’s consider how software interoperability works when it is not standards-based.

Consider the various ways that four applications can share data, as shown in the diagram to the right. There are six connections between these four applications, and each connection can be traversed in either direction, so there are 12 total types of interoperability involved. (For example, Application A can consume a data file produced by Application B, or vice versa.)

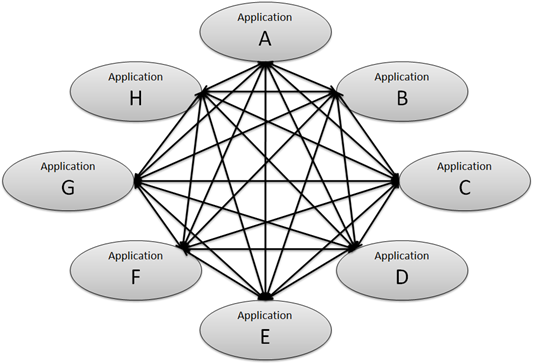

As the number of applications increases, this complexity grows rapidly. Double the number of applications to 8 total, and there will be 56 types of interoperability between them:
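To make the arithmetic explicit: with n applications there are n(n-1)/2 pairs, and because each pair can be traversed in either direction there are n(n-1) directed interoperability scenarios. The short Python sketch below reproduces the figures for 4 and 8 applications; the function name is purely illustrative.

```python
def interop_scenarios(n: int) -> tuple[int, int]:
    """Return (connections, directed scenarios) for n applications that
    exchange files directly with one another, with no common standard."""
    connections = n * (n - 1) // 2  # unordered pairs of applications
    directed = n * (n - 1)          # each pair can be traversed in both directions
    return connections, directed

for n in (4, 8):
    connections, directed = interop_scenarios(n)
    print(f"{n} applications: {connections} connections, {directed} scenarios")
```

For 4 applications this gives 6 connections and 12 scenarios; for 8 it gives 28 connections and 56 scenarios. The n(n-1) term is why the problem grows so quickly.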

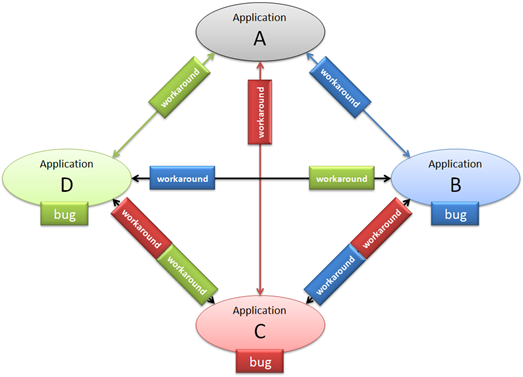

Let’s go back to the simple case of 4 applications that need to interoperate with one another, and take a look at another factor: software bugs. All complex software has bugs, and some bugs can present significant challenges to interoperability. Let’s consider the case in which 3 of the 4 applications have bugs that affect interoperability, as shown in the diagram to the right.

The bugs will need to be addressed when data moves between these applications. Some bugs can present unsolvable roadblocks to interoperability, but for purposes of this discussion let’s assume that every one of these bugs has a workaround. That is, application A can take into account the known bug in application B and either implement the same buggy behavior itself, or try to fix up the problem when working with files that it knows came from application B.

Here’s where those workarounds will need to be implemented:

Note the complexity of this diagram. There are 6 connections between these 4 applications, and every one of them has a different set of workarounds for bugs along the path. Furthermore, any given connection may have different issues when data moves in different directions, leading to 12 interoperability scenarios, every one of which presents unique challenges. And what happens if one of the implementers fixes one of their bugs in a new release? That effectively adds yet another node to the diagram, increasing the complexity of the overall problem.
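The workaround approach described above can be sketched in a few lines. This is a minimal illustration under stated assumptions, not how any real application is built: the dictionary-based document, the `producer` and `version` fields, and the opacity bug in Application B are all hypothetical. Keying the workarounds by both producer and release shows why a bug fix in a new version effectively adds another node, because the consumer must now distinguish files written before and after the fix.

```python
from typing import Callable

# Hypothetical in-memory representation of a document file.
Document = dict[str, object]
Fixup = Callable[[Document], Document]

def fix_b_opacity(doc: Document) -> Document:
    """Workaround for a hypothetical bug in Application B 1.0, which writes
    opacity as a 0-1 fraction where the format expects a 0-100 percentage."""
    fixed = dict(doc)
    if "opacity" in fixed:
        fixed["opacity"] = float(fixed["opacity"]) * 100
    return fixed

# Workarounds that Application A applies on load, keyed by (producer, version).
WORKAROUNDS: dict[tuple[str, str], list[Fixup]] = {
    ("Application B", "1.0"): [fix_b_opacity],
    # No entry for ("Application B", "2.0"): in this example that release fixed the bug.
}

def load_in_app_a(doc: Document) -> Document:
    """Apply any known workarounds for the application that produced doc."""
    key = (str(doc.get("producer", "")), str(doc.get("version", "")))
    for fixup in WORKAROUNDS.get(key, []):
        doc = fixup(doc)
    return doc

print(load_in_app_a({"producer": "Application B", "version": "1.0", "opacity": 0.5}))
print(load_in_app_a({"producer": "Application B", "version": "2.0", "opacity": 50.0}))
```

Every other application would need its own table like this one, covering every producer and release it cares about, which is exactly the bookkeeping the diagram illustrates.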

In the real world, interoperability is almost never achieved in this way. Standards-based interoperability is a much better approach for everyone involved, whether that standard is an open one such as ODF (IS26300) or Open XML (IS29500), or a de-facto standard set by one popular implementation.

Standards-Based Interoperability

In the world of de-facto standards, one vendor ends up becoming the “reference implementation” that everyone else works to interoperate with. In actual practice, this de-facto standard may or may not even be written down – engineers can often achieve a high degree of interoperability simply by observing the reference implementation and working to follow it.

De-facto standards often (but not always) get written down to become public standards eventually. One simple example of this is the “Edison base” standard for screw-in light bulbs and sockets, which started as a proprietary approach but has long since been standardized by the IEC. In fact this is a much more common way for standards to become successful than the “green field” approach in which the standard is written down first before there are any implementations.

Once a standard becomes open and public, the process for maintaining it and the way that implementers achieve interoperability with one another changes a little.

The core premise of open standards-based interoperability is this: each application implements the published standard as written, and this provides a baseline for delivering interoperability. Standards don’t address all interoperability challenges, but the existence of a standard addresses many of the issues involved, and the other issues can be addressed through standards maintenance, transparency of implementation details, and collaborative interoperability testing.

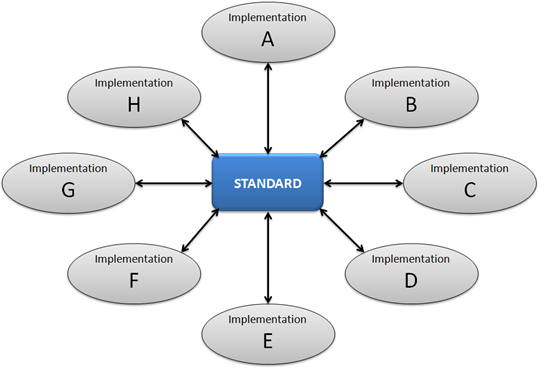

In the standards-based scenario, the standard itself is the central mechanism for enabling interoperability between implementations:

This diagram is much simpler than the earlier diagram that showed 56 types of interoperability among 8 implementations. The presence of the standard means that there are only 8 connections, and each connection only has to deal with the bugs in a single implementation.
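A rough way to compare the two topologies is to count the sources each application must be able to read correctly. This is a simplification that ignores how many bugs each path carries, and the function names are illustrative only: without a standard, every application reads files from each of the other n-1 applications; with a standard, each one reads a single format.

```python
def reading_paths_point_to_point(n: int) -> int:
    # Each application must read files written by each of the other n - 1.
    return n * (n - 1)

def reading_paths_via_standard(n: int) -> int:
    # Each application reads only the standard format.
    return n

for n in (4, 8, 16):
    print(f"n={n:2}: point-to-point={reading_paths_point_to_point(n):3}, "
          f"via standard={reading_paths_via_standard(n):2}")
```

For 8 applications this gives 56 versus 8, matching the two diagrams, and the gap widens as more implementations appear.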

How this all applies to Office 2007 SP2

Last summer I covered the guiding principles behind our work to support ODF in Office 2007 SP2. These principles were applied in a specific order, and I’d like to revisit the top two to explain how they support the view of interoperability that I’ve covered above.

Guiding Principle #1: Adhere to the ODF 1.1 Standard

In order to achieve the level of simplicity shown in the diagram above, the standard itself must be carefully written and implementers need to agree on the importance of adhering to the published version of the standard. That’s why we made “Adhere to the ODF 1.1 Standard” our #1 guiding principle. This is the starting point for enabling interoperability.

Recent independent tests have found that our implementation does in fact adhere to the ODF 1.1 standard, and I hope others will continue to conduct such tests and publish the results.

Guiding Principle #2: Be Predictable

The second guiding principle we followed in our ODF implementation was “Be Predictable.” I’ve described this concept in the past as “doing what an informed user would likely expect,” but I’d like to explain this concept in a little more detail here, because it’s a very important aspect of our approach to interoperability in general.

Being predictable is also known as the principle of least astonishment. The basic concept is that users don’t want to be surprised by inconsistencies and quirks in the software they use, and software designers should strive to minimize or eliminate any such surprises.

There are many ways that this concept comes into play when implementing a document format such as ODF or Open XML. One general category is mapping one set of options to a different set of options, and I used an example of this in the blog post mentioned above:

When OOXML is a superset of ODF, we usually map the OOXML-only constructs to a default ODF value. For example, ODF does not support OOXML’s doubleWave border style, so when we save as ODF we map that style to the default border style.

Our other option in this case would have been to turn the text box and the border into a picture. That would have made the border look nearly identical when the user opened the file again, but we felt that users would have been astonished (in a bad way) when they discovered that they could no longer edit the text after saving and reopening the file.
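The fallback rule in the quoted example amounts to a lookup table with a default. The sketch below is illustrative only: the table is a small hand-picked subset, not the actual mapping used in Office 2007 SP2, and treating a plain solid line as the “default border style” is an assumption.

```python
# Illustrative subset of OOXML border styles and ODF equivalents;
# NOT the actual mapping table used by Office.
OOXML_TO_ODF_BORDER = {
    "none": "none",
    "single": "solid",
    "double": "double",
    "dotted": "dotted",
    "dashed": "dashed",
}

# Assumption: the "default border style" is a plain solid line.
DEFAULT_ODF_BORDER = "solid"

def map_border_style(ooxml_style: str) -> str:
    """Map an OOXML border style to an ODF one, falling back to the default
    for OOXML-only styles such as doubleWave instead of rasterizing them."""
    return OOXML_TO_ODF_BORDER.get(ooxml_style, DEFAULT_ODF_BORDER)

print(map_border_style("double"))      # double
print(map_border_style("doubleWave"))  # solid (fallback)
```

Falling back to an ordinary style keeps the text box as editable text; converting it to a picture would preserve its appearance at the cost of the user’s ability to edit it.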

What about Bugs and Deviations?

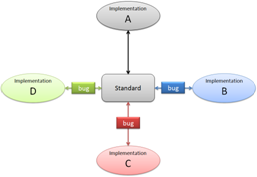

Of course, the existence of a published standard doesn’t prevent interoperability bugs from occurring. These bugs may include deviations from the requirements of the standard. In addition, they may include different interpretations of ambiguous sections of the standard.

The first step in addressing these sorts of real-world issues is transparency. It’s hard to work around bugs and deviations if you’re not sure what they are, or if you have to resort to guesswork and reverse engineering to locate them.

Our approach to the transparency issue has been to document the details of our implementation through published implementer notes. We’ve done that for our implementations of ODF 1.1 and ECMA-376, and going forward we’ll be doing the same for IS29500 and future versions of ODF when we support them.

Interoperability Testing

The final piece of the puzzle is hands-on testing, to identify areas where implementations need to be adjusted to enable reliable interoperability.

This is where the de-facto standard approach meets the public standard. If the written standard is unclear or allows for multiple approaches to something, but all of the leading implementations have already chosen one particular approach, then it is easy for a new entrant to the field to see how to be interoperable. If other implementers have already chosen divergent approaches, however, it is not so clear what to do. Standards maintainers can help a great deal in this situation by clarifying and improving the written standard, and new implementers may want to wait on implementing that particular feature of the standard until a common approach emerges.
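The decision rule described above can be expressed as a simple check over how existing implementations handle an ambiguous clause. In this minimal sketch the implementation names and interpretation labels are hypothetical placeholders; in practice such a survey could draw on published implementer notes and hands-on testing.

```python
from collections import Counter
from typing import Optional

def choose_interpretation(observed: dict[str, str]) -> Optional[str]:
    """Return the interpretation every surveyed implementation shares, or None
    if they diverge (or none were surveyed), meaning: hold off on the feature
    until the standard is clarified or a common approach emerges."""
    counts = Counter(observed.values())
    if len(counts) == 1:
        return next(iter(counts))
    return None

# Hypothetical survey of how existing implementations read one ambiguous clause.
print(choose_interpretation({"Impl 1": "A", "Impl 2": "A", "Impl 3": "A"}))  # A
print(choose_interpretation({"Impl 1": "A", "Impl 2": "B", "Impl 3": "A"}))  # None
```

Requiring unanimity is deliberately conservative; a real implementer might weigh market share or the maintainers’ stated direction instead.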

We did a great deal of interoperability testing for our ODF implementation before we released it, both internally and through community events such as the DII workshops. We’ve also worked one-on-one with other implementers, and going forward we’ll be participating in a variety of interoperability events. These are necessary steps in achieving the level of interoperability and predictability that customers expect these days.

In my next post, I’ll cover our testing strategy and methodology in more detail. What else would you like to know about how Office approaches document format interoperability?