Multiple ways to limit parallel task processing

I decided to write this post because, over the last few days, I have seen several different implementations of the following interesting exercise across a number of code reviews.

Exercise:

Suppose we have a list of items. We need to execute an asynchronous task for each of them with limited concurrency (aka max degree of parallelism), and we need to collect all the results.

Solution #1 using TPL Dataflow library

The NuGet package System.Threading.Tasks.Dataflow is designed for so-called dataflow asynchronous processing. Using its ActionBlock<TInput> together with the ExecutionDataflowBlockOptions.MaxDegreeOfParallelism property, it is possible to achieve the goal. You can find more information here: /en-us/dotnet/standard/parallel-programming/how-to-specify-the-degree-of-parallelism-in-a-dataflow-block but let's see how it could be solved.

Code

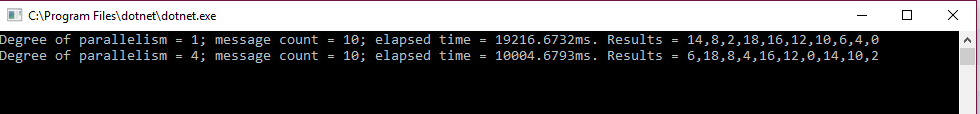
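
A minimal sketch of this approach could look like the following. It is only an illustration and may differ from the sample in the repository: `DataflowSample`, `RunAsync` and `ProcessAsync` are hypothetical names, and `ProcessAsync` is just a stand-in for the real asynchronous work. Because ActionBlock<TInput> produces no output, the sketch assumes the results are collected in a ConcurrentBag<T>.

```csharp
using System.Collections.Concurrent;
using System.Collections.Generic;
using System.Threading.Tasks;
using System.Threading.Tasks.Dataflow; // NuGet package: System.Threading.Tasks.Dataflow

public static class DataflowSample
{
    // Hypothetical stand-in for the real asynchronous work.
    private static async Task<int> ProcessAsync(int item)
    {
        await Task.Delay(100);
        return item * 2;
    }

    public static async Task<IReadOnlyCollection<int>> RunAsync(
        IEnumerable<int> items, int maxDegreeOfParallelism)
    {
        // ActionBlock has no output, so results go into a thread-safe bag.
        var results = new ConcurrentBag<int>();

        var block = new ActionBlock<int>(
            async item => results.Add(await ProcessAsync(item)),
            new ExecutionDataflowBlockOptions
            {
                MaxDegreeOfParallelism = maxDegreeOfParallelism
            });

        foreach (var item in items)
        {
            block.Post(item); // the block is unbounded by default, so Post does not reject items
        }

        // Signal that no more items will arrive, then wait for the block to drain.
        block.Complete();
        await block.Completion;

        return results;
    }
}
```

Note that the order of the collected results is not guaranteed to match the order of the input.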

Result

Solution #2 using SemaphoreSlim

Another solution is to limit the number of items being processed concurrently with a semaphore; it's a kind of throttling.

Code

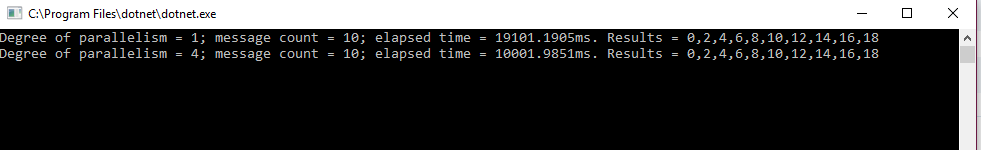
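
The semaphore idea can be sketched roughly as below. This is an illustration under assumptions, not necessarily the code from the repository: `SemaphoreSample`, `RunAsync` and `ProcessAsync` are hypothetical names, and `ProcessAsync` stands in for the real work.

```csharp
using System.Collections.Generic;
using System.Linq;
using System.Threading;
using System.Threading.Tasks;

public static class SemaphoreSample
{
    // Hypothetical stand-in for the real asynchronous work.
    private static async Task<int> ProcessAsync(int item)
    {
        await Task.Delay(100);
        return item * 2;
    }

    public static async Task<int[]> RunAsync(
        IEnumerable<int> items, int maxDegreeOfParallelism)
    {
        // The semaphore starts with as many free slots as the allowed concurrency.
        using (var semaphore = new SemaphoreSlim(maxDegreeOfParallelism))
        {
            var tasks = items.Select(async item =>
            {
                await semaphore.WaitAsync(); // wait for a free slot
                try
                {
                    return await ProcessAsync(item);
                }
                finally
                {
                    semaphore.Release(); // free the slot even if processing fails
                }
            }).ToList(); // materialize so that every task is started exactly once

            // Task.WhenAll returns the results in the order of the input tasks.
            return await Task.WhenAll(tasks);
        }
    }
}
```

One trade-off of this variant is that a task is created for every item up front; only the processing itself is throttled.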

Result

Solution #3 using Enumerable.Range and ConcurrentQueue

Another solution is based on the idea of running as many processing loops as the desired degree of parallelism. The loops take the items in a thread-safe way from a ConcurrentQueue.

Code

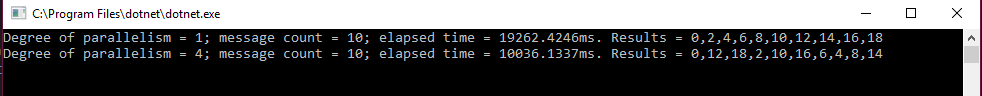
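
As a rough sketch of the worker-loop idea (an illustration that may differ from the repository sample; `QueueSample`, `RunAsync` and `ProcessAsync` are hypothetical names), each of the loops below keeps dequeuing items until the queue is empty:

```csharp
using System.Collections.Concurrent;
using System.Collections.Generic;
using System.Linq;
using System.Threading.Tasks;

public static class QueueSample
{
    // Hypothetical stand-in for the real asynchronous work.
    private static async Task<int> ProcessAsync(int item)
    {
        await Task.Delay(100);
        return item * 2;
    }

    public static async Task<IReadOnlyCollection<int>> RunAsync(
        IEnumerable<int> items, int maxDegreeOfParallelism)
    {
        var queue = new ConcurrentQueue<int>(items);
        var results = new ConcurrentBag<int>();

        // Start one processing loop per allowed degree of parallelism.
        var workers = Enumerable.Range(0, maxDegreeOfParallelism)
            .Select(async _ =>
            {
                // Each loop ends once the shared queue is empty.
                while (queue.TryDequeue(out var item))
                {
                    results.Add(await ProcessAsync(item));
                }
            })
            .ToList();

        await Task.WhenAll(workers);
        return results;
    }
}
```

Only as many tasks as the degree of parallelism are created here, regardless of the number of items. The sketch assumes the queue is fully populated before the loops start.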

Result

Solution #4 using Partitioner

The last solution uses System.Collections.Concurrent.Partitioner, which is designed to split the input into buckets (partitions) that are then processed in parallel.

Code

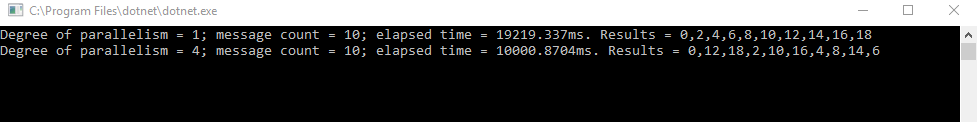
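
A minimal sketch of the partitioner approach might look like this. It is an illustration under assumptions rather than the exact repository code: `PartitionerSample`, `RunAsync` and `ProcessAsync` are hypothetical names, and results are assumed to be collected in a ConcurrentBag<T>.

```csharp
using System.Collections.Concurrent;
using System.Collections.Generic;
using System.Linq;
using System.Threading.Tasks;

public static class PartitionerSample
{
    // Hypothetical stand-in for the real asynchronous work.
    private static async Task<int> ProcessAsync(int item)
    {
        await Task.Delay(100);
        return item * 2;
    }

    public static async Task<IReadOnlyCollection<int>> RunAsync(
        IEnumerable<int> items, int maxDegreeOfParallelism)
    {
        var results = new ConcurrentBag<int>();

        // One partition (an IEnumerator<int>) per allowed degree of parallelism.
        var workers = Partitioner.Create(items)
            .GetPartitions(maxDegreeOfParallelism)
            .Select(partition => Task.Run(async () =>
            {
                using (partition)
                {
                    // Items within a single partition are processed one by one.
                    while (partition.MoveNext())
                    {
                        results.Add(await ProcessAsync(partition.Current));
                    }
                }
            }))
            .ToList();

        await Task.WhenAll(workers);
        return results;
    }
}
```

Each partition is consumed sequentially, so the number of partitions caps the number of items in flight.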

Result

That's all for now folks! All samples can be found here: https://github.com/kadukf/blog.msdn/blob/master/.NET/ParalellizedTasks