More on hard links

After I posted my original entry on how hard links work, a number of readers left comments requesting clarification. The original blog posting is below:

https://blogs.technet.com/b/joscon/archive/2011/01/06/how-hard-links-work.aspx

To his credit Joseph has been asking me about revisiting this topic for months.

I think part of the confusion about how hard links work revolves around the difference between what the Windows shell shows us and what is really happening in NTFS.

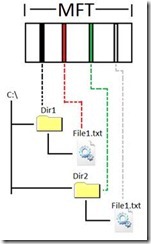

Here we have a couple of directories roughly displayed as the Windows shell would show it to us. The diagram gives the impression that the files exist inside their respective directories. In the following example there are two instances of ‘File1.txt’.

If we look under the hood we can see that each directory and each file has its own entry in the Master File Table (MFT).

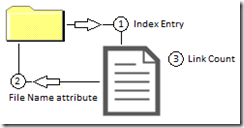

As you can see from the above diagram a file isn’t really ‘inside’ the directory. The directory just has a pointer to the location where the file exists in the MFT. Using the diagram from my old blog entry we can see the three part relationship between the file and the parent directory.

1. The directory has an index entry that tells us the MFT address for the child file.

2. The file has a file name attribute that tells us the file record number of the parent directory.

3. The file has a link count that tells us that it only has one parent directory.

If I were to dump out the metadata for a directory, it would only tell me the location in the MFT for the files that are related to the directory. No part of the file itself is actually IN the directory. If you were to look at the actual addresses in the MFT they might appear like this…

0025 – Dir1

005a – Dir2

100a – File1.txt

15ab – File1.txt

Dir1 would have an index entry that included a reference to 100a (File1.txt). And Dir2 would have an index entry that included a reference to 15ab (the second instance of File1.txt).
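To make the three-part relationship concrete, here is a minimal toy model in Python. It is only a sketch: the `FileRecord` class and `add_link` helper are names I made up for illustration, and the real on-disk file record looks nothing like a Python object. In particular, NTFS stores the link count as a field in the record, while this sketch simply derives it from the number of file name attributes.

```python
from dataclasses import dataclass, field


@dataclass
class FileRecord:
    """Toy stand-in for an MFT file record (hypothetical, not the on-disk layout)."""
    # File name attributes: one (parent record number, name) pair per link.
    file_names: list = field(default_factory=list)
    # Directory index entries (only used by directories): name -> child record number.
    index: dict = field(default_factory=dict)

    @property
    def link_count(self):
        # Assumption for the sketch: derive the count instead of storing it.
        return len(self.file_names)


# Record numbers are the illustrative addresses used in the text.
mft = {
    0x0025: FileRecord(),  # Dir1
    0x005A: FileRecord(),  # Dir2
    0x100A: FileRecord(),  # File1.txt (first instance)
    0x15AB: FileRecord(),  # File1.txt (second instance)
}


def add_link(mft, parent, name, child):
    """Record both sides of the relationship: index entry and file name attribute."""
    mft[parent].index[name] = child
    mft[child].file_names.append((parent, name))


add_link(mft, 0x0025, "File1.txt", 0x100A)
add_link(mft, 0x005A, "File1.txt", 0x15AB)
```

Each directory only holds a record number for its child, and each file points back at exactly one parent, so both files end up with a link count of one.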

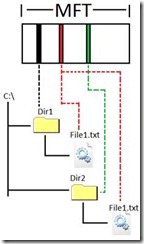

Now let’s look at a hard linked file. The shell part isn’t really going to appear differently.

But when we add in what NTFS is really doing you can start to see a difference.

Instead of having two copies of the same file, the index entries in both directories point to the same address in the MFT for the child file.

The three part relationship also changes. The file becomes aware that it is referenced by multiple directories.

1. Each directory has an index entry that tells us the MFT address for the child file.

2. The file has two file name attributes. One for each parent directory.

3. The link count is incremented to 2h.
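If you want to see this from user mode, the following Python sketch creates a hard link and checks the result. It uses a temporary directory with made-up `Dir1` and `Dir2` folders rather than anything under the Windows directory, and the `make_hard_link_demo` helper is just an illustrative name. `os.link`, `os.stat` and `os.path.samefile` are standard library calls; the link count they report corresponds to the count described above.

```python
import os
import tempfile


def make_hard_link_demo(root):
    """Create Dir1\\File1.txt and hard link it as Dir2\\File1.txt under root."""
    dir1 = os.path.join(root, "Dir1")
    dir2 = os.path.join(root, "Dir2")
    os.mkdir(dir1)
    os.mkdir(dir2)
    first = os.path.join(dir1, "File1.txt")
    second = os.path.join(dir2, "File1.txt")
    with open(first, "w") as f:
        f.write("hello")
    os.link(first, second)  # second name, same file record
    return first, second


with tempfile.TemporaryDirectory() as root:
    first, second = make_hard_link_demo(root)
    link_count = os.stat(first).st_nlink           # number of names for the file
    same_record = os.path.samefile(first, second)  # both paths resolve to one file
    # Write through one name, read through the other.
    with open(second, "a") as f:
        f.write(" world")
    with open(first) as f:
        contents = f.read()
```

Appending through the `Dir2` name and reading through the `Dir1` name gives the combined text, because there is only one file behind both names.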

And finally if we looked at the addresses in the MFT, they might look like this….

0025 – Dir1

005a – Dir2

100a – File1.txt

100a – File1.txt

Hopefully the new diagrams combined with the older ones will help you to properly visualize what NTFS is doing. To really get your head around it, it is essential to stop thinking about ‘the real copy of the file’ or ‘the file being IN the directory’.

Finally, when looking at the two link diagrams side-by-side…

…you might be asking yourself, “How is the hard link different than the normal link relationship?”

The answer is that it isn’t. Technically EVERY file is hard linked. We just reserve the term for talking about files that have more than one directory linked to them.
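A quick way to convince yourself of this is to watch the link count of an ordinary file. The sketch below is only an illustration with made-up file names in a temporary directory (both names live in the same directory here, which is also allowed). It creates a plain file, adds a second name, and then deletes the original name.

```python
import os
import tempfile

with tempfile.TemporaryDirectory() as root:
    original = os.path.join(root, "File1.txt")
    extra = os.path.join(root, "File1-link.txt")  # hypothetical second name

    with open(original, "w") as f:
        f.write("data")
    count_plain = os.stat(original).st_nlink   # an ordinary file already has one link

    os.link(original, extra)
    count_linked = os.stat(original).st_nlink  # now two names for one file

    os.remove(original)                        # removes a name, not the file
    count_after = os.stat(extra).st_nlink
    with open(extra) as f:
        survivor = f.read()
```

The file starts with one link, goes to two, and drops back to one when the first name is removed. The data is still there under the remaining name, which is why neither name is "the real copy".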

Now moving forward, let’s look at some real world information. Simple names like Dir1 and File1.txt are fine to start off with but we need to relate it to what’s in the Windows directory. We can do this with some easy substitutions.

Dir1 = c:\windows\system32

Dir2 = C:\Windows\winsxs\amd64_microsoft-windows-securestartup-service_31bf3856ad364e35_6.1.7600.16385_none_c09aa5b3bec88beb

File1.txt = bdesvc.dll

And I kept them color coded to keep it easier to follow.

I dumped out the metadata for the file bdesvc.dll. I’ve simplified it for readability but you can see that it has two file name attributes, one that lists a parent directory of 280b and one that lists a parent directory of 124d.

_FILE_NAME {

_MFT_SEGMENT_REFERENCE ParentDirectory {

ULONGLONG SegmentNumber : 0x000000000000280b

USHORT SequenceNumber : 0x0001

..... FileName : "bdesvc.dll"

_FILE_NAME {

_MFT_SEGMENT_REFERENCE ParentDirectory {

ULONGLONG SegmentNumber : 0x000000000000124d

USHORT SequenceNumber : 0x0001

..... FileName : "bdesvc.dll"

And of course the metadata also showed the higher ‘link count’, meaning that there are two links pointing to the file record.

USHORT ReferenceCount : 0x0002

I dumped out the metadata for both 280b and 124d and found that they were the two directories that I’d expected (system32 and amd64_microsoft-windows-securestartup-service_31bf3856ad364e35_6.1.7600.16385_none_c09aa5b3bec88beb).
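The parent directory reference in each file name attribute is made up of the two values shown in the dump: a segment number (the record's position in the MFT) and a sequence number. On disk they are packed into a single 64-bit value, with the segment number in the low 48 bits and the sequence number in the high 16 bits. Here is a small sketch of that packing; the function names are mine and are not part of any Windows API.

```python
SEGMENT_BITS = 48
SEGMENT_MASK = (1 << SEGMENT_BITS) - 1


def pack_reference(segment_number, sequence_number):
    """Combine a segment number and sequence number into a 64-bit file reference."""
    return (sequence_number << SEGMENT_BITS) | (segment_number & SEGMENT_MASK)


def unpack_reference(file_reference):
    """Split a 64-bit file reference into (segment number, sequence number)."""
    return file_reference & SEGMENT_MASK, file_reference >> SEGMENT_BITS


# The two parent directory references from the dump above.
parent_a = pack_reference(0x280B, 0x0001)
parent_b = pack_reference(0x124D, 0x0001)

segment_a, sequence_a = unpack_reference(parent_a)
segment_b, sequence_b = unpack_reference(parent_b)
```

Unpacking gives back 280b and 124d, which are the record numbers I used to look up the two parent directories.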

Joseph brought up an example of what would happen if a private hotfix were installed. Depending on how that was done it would sever the hardlink and put a new version of the file in the system32 directory. So we would end up with two copies of the file. The old one would still be under amd64_microsoft-windows-securestartup-service_31bf3856ad364e35_6.1.7600.16385_none_c09aa5b3bec88beb. And the new one would be in the system32 directory.

Later if you were to run ‘SFC /scannow’ Windows would remove the new copy and establish a new hard link using the file that was still stored under WinSxS.

When SFC runs it compares a checksum of the file against a copy of the checksum that Windows has squirreled away somewhere.

However if the one and only file were to become damaged, then SFC would fail with an error…

“Windows Resource Protection found corrupt files but was unable to fix some of them.

Details are included in the CBS.Log windir\Logs\CBS\CBS.log.”
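The logic can be sketched roughly as follows. To be clear, this is not how SFC is implemented. I am assuming a SHA-256 digest and a known-good digest passed in by the caller, and the names `file_digest` and `verify_and_repair` are invented for the example. It only illustrates the idea of comparing against a stored checksum, re-linking from the store copy, and giving up when the store copy is also bad.

```python
import hashlib
import os
import tempfile


def file_digest(path):
    """Return the SHA-256 hex digest of a file (assumed checksum for this sketch)."""
    h = hashlib.sha256()
    with open(path, "rb") as f:
        for chunk in iter(lambda: f.read(65536), b""):
            h.update(chunk)
    return h.hexdigest()


def verify_and_repair(target, store_copy, expected_digest):
    """Return 'ok', 'repaired', or 'unrepairable'."""
    if os.path.exists(target) and file_digest(target) == expected_digest:
        return "ok"
    if file_digest(store_copy) != expected_digest:
        return "unrepairable"       # nothing good left to link to
    if os.path.exists(target):
        os.remove(target)           # drop the mismatched copy
    os.link(store_copy, target)     # re-establish the hard link
    return "repaired"


with tempfile.TemporaryDirectory() as root:
    store_copy = os.path.join(root, "store.dll")   # stands in for the WinSxS copy
    target = os.path.join(root, "system32.dll")    # stands in for the system32 name
    with open(store_copy, "wb") as f:
        f.write(b"good bytes")
    expected = file_digest(store_copy)

    with open(target, "wb") as f:                  # simulated private hotfix: separate file
        f.write(b"hotfix bytes")
    first_result = verify_and_repair(target, store_copy, expected)
    relinked = os.path.samefile(target, store_copy)

    with open(store_copy, "wb") as f:              # damage the one and only file
        f.write(b"damaged")
    second_result = verify_and_repair(target, store_copy, expected)
```

The first call replaces the stray copy with a new hard link. After that both names refer to the same file, so damaging it through either name leaves nothing to repair from, and the second call reports that it cannot fix the file.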

The other main concern was how to view disk space. That’s actually the easy one.

See the pie chart? It’s correct.

Okay, I’ll explain it a bit more in-depth than that.

There are two ways to view how much free space you have. The first way is to use the pie chart. The information in the pie chart actually comes from a special metafile named $BITMAP. This file maintains a list of all the clusters of the volume and whether they are in use or not. When a file needs space, $BITMAP is queried to see what is free. When space is allocated, $BITMAP is updated to show that the allocated clusters are now in use. Keep in mind that $BITMAP doesn’t track what files own what clusters. It only tracks what clusters are in use. So when we draw the pie chart, we just query $BITMAP to find out how many clusters we have and how many are free. This is also why the pie chart is populated so quickly. We just have to read a single file to build the chart.
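Here is a toy version of that idea in Python: one bit per cluster, set when the cluster is in use. The `ClusterBitmap` class, the first-fit allocation and the 4 KB cluster size are all assumptions for the sake of the sketch. It is not the real $BITMAP format or the real NTFS allocator.

```python
class ClusterBitmap:
    """Toy cluster bitmap: one bit per cluster, 1 = in use (hypothetical model)."""

    def __init__(self, total_clusters):
        self.total_clusters = total_clusters
        self.bits = bytearray((total_clusters + 7) // 8)

    def is_used(self, cluster):
        return bool(self.bits[cluster // 8] & (1 << (cluster % 8)))

    def allocate(self, count):
        """Mark the first `count` free clusters as used and return their numbers."""
        free = [c for c in range(self.total_clusters) if not self.is_used(c)][:count]
        if len(free) < count:
            raise OSError("not enough free clusters")
        for c in free:
            self.bits[c // 8] |= 1 << (c % 8)
        return free

    def free_clusters(self):
        """Count free clusters without knowing which file owns which cluster."""
        used = sum(bin(byte).count("1") for byte in self.bits)
        return self.total_clusters - used


CLUSTER_SIZE = 4096  # assumed cluster size in bytes

bitmap = ClusterBitmap(total_clusters=1000)
bitmap.allocate(250)  # some file asked for 250 clusters
free_bytes = bitmap.free_clusters() * CLUSTER_SIZE
```

Notice that the free space total comes from counting bits. No directory is walked and no file sizes are added up, which is why this kind of answer is both fast and immune to the hard link double counting problem.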

The second way to get free space is what I refer to as “the wrong way”. That is to open a CMD prompt at the root directory and do a ‘dir /s’. This will list all the files on the volume that you have access to and add up the sizes at the end. This method is just plain wrong. A big part of why it is so wrong is that hard linked files will get counted twice, once for each directory that is linked to them. The other big reason is that DIR will only list files that you have access to. Files in the System Volume Information directory will not be included. That’s a problem because that’s where the VSS snapshots are stored. And the special metafiles that are hidden from the user are also not listed in the total. So the space used by your MFT will not be listed, your security file ($SECURE) will not be listed, and so on. There’s just too much to take into account to get a truly accurate total by adding files together.
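The double counting is easy to reproduce. The sketch below builds a small tree with one hard linked file in a temporary directory, then totals the sizes two ways: naively, the way adding up a recursive listing does, and with each file counted once by its volume and file ID. The function names are mine, and this is an illustration rather than a recommended way to measure disk usage.

```python
import os
import tempfile


def naive_total(root):
    """Add up the size of every name found, the way a recursive listing does."""
    total = 0
    for dirpath, _dirs, files in os.walk(root):
        for name in files:
            total += os.lstat(os.path.join(dirpath, name)).st_size
    return total


def deduplicated_total(root):
    """Add up sizes, counting each underlying file only once."""
    seen = set()
    total = 0
    for dirpath, _dirs, files in os.walk(root):
        for name in files:
            st = os.lstat(os.path.join(dirpath, name))
            key = (st.st_dev, st.st_ino)  # volume + file ID identify the file
            if key in seen:
                continue
            seen.add(key)
            total += st.st_size
    return total


with tempfile.TemporaryDirectory() as root:
    os.mkdir(os.path.join(root, "Dir1"))
    os.mkdir(os.path.join(root, "Dir2"))
    first = os.path.join(root, "Dir1", "File1.txt")
    with open(first, "wb") as f:
        f.write(b"x" * 1000)
    os.link(first, os.path.join(root, "Dir2", "File1.txt"))

    naive = naive_total(root)
    deduplicated = deduplicated_total(root)
```

The naive total reports 2000 bytes for a file that only occupies 1000. Even the deduplicated total is still not a free space calculation, because it cannot see the files you have no access to or the metafiles.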

I know it sounds like it should work but there are factors involved in storing your files that most people just don’t know about. As an example, Windows 2003 reserved about 12% of the volume for the MFT to have room to grow. So if you had a very large volume with just a few files, you might wonder where all your space was.

The takeaway from that is what I tell my customers and coworkers, “Trust the Pie Chart”.

I hope this has been helpful.

Robert Mitchell

High Availability

Enterprise Platform Support
