Image Segmentation Using MicrosoftML

In computer vision, the goal of image segmentation is to cluster pixels into salient image regions, i.e., regions corresponding to individual surfaces, objects, or natural parts of objects. Segmentation aims to simplify and/or change the representation of an image into something that is more meaningful and easier to analyze. More precisely, image segmentation is the process of assigning a label to every pixel in an image such that pixels with the same label share certain characteristics. The result of image segmentation is a set of segments that collectively cover the entire image, or a set of contours extracted from the image. Image segmentation can be used for object recognition, occlusion boundary estimation with motion or stereo systems, image compression, image editing, and image database look-up.

UCI provides a publicly available image segmentation data set whose instances were drawn randomly from a database of 7 outdoor images. Each instance is a 3x3 region (9 pixels) of an image, described by 19 attributes that characterize the region. In this article, we use this data to train a model with Microsoft R Server and the MicrosoftML package. The model can then be used to assign a label to every pixel in an image. We train the model with the neural network algorithm in MicrosoftML and test it on the separate UCI test set. We then show how adjusting the parameters of the neural network algorithm improves its performance.
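The following base R sketch makes the region attributes concrete. It computes three of the 19 UCI attributes (intensity-mean, rawred-mean, exred-mean) for a single 3x3 region. It assumes the region's red, green, and blue values are available as 3x3 matrices. The function name regionFeatures is hypothetical, and the formulas follow our reading of the UCI attribute descriptions, not the code used to build the data set.

```r
# Hypothetical helper: compute three of the 19 UCI attributes for one 3x3 region.
# R, G, B are 3x3 numeric matrices with the region's red, green and blue values.
# Formulas are our reading of the UCI attribute descriptions (an assumption).
regionFeatures <- function(R, G, B) {
  for (m in list(R, G, B)) {
    stopifnot(is.matrix(m), identical(dim(m), c(3L, 3L)))
  }
  c(
    intensity_mean = mean((R + G + B) / 3),  # average of (R + G + B) / 3
    rawred_mean    = mean(R),                # average red value
    exred_mean     = mean(2 * R - (G + B))   # average "excess red"
  )
}

# Example: a uniform region with R = 3, G = 1, B = 2 in every pixel
feats <- regionFeatures(matrix(3, 3, 3), matrix(1, 3, 3), matrix(2, 3, 3))
feats  # returns c(intensity_mean = 2, rawred_mean = 3, exred_mean = 3)
```

To label every pixel of a full image, the same kind of computation would be repeated over the 3x3 neighborhood of each pixel before scoring it with the trained model.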

Train/Validation/Test Data Sets

- The data sets used in this article can be downloaded from https://archive.ics.uci.edu/ml/datasets/Image+Segmentation

- The training data contains 210 data points and the test data contains 2,100 data points

- Both data sets contain 19 continuous features; their descriptions are available at https://archive.ics.uci.edu/ml/datasets/Image+Segmentation

- Class distribution:

  - Classes: brickface, sky, foliage, cement, window, path, grass

  - 30 data points per class in the training data

  - 300 data points per class in the test data

- We randomly take 50% of the test data as a validation set and use the other 50% as the new test set (1,050 data points each), so that the training, validation, and test sets do not overlap
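The random 50/50 split described above could be sketched in base R as follows. The helper splitHalf and the seed value are assumptions for illustration. The stand-in data frame only mimics the 2,100-row size of the UCI test set.

```r
# Hypothetical helper: randomly split a data frame into a validation half
# (used for tuning) and a test half (used for final evaluation).
# Note: set.seed() changes the global RNG state; the seed value is arbitrary.
splitHalf <- function(data, seed = 123) {
  set.seed(seed)
  idx <- sample(nrow(data), size = floor(nrow(data) / 2))
  list(
    validation = data[idx, , drop = FALSE],
    test       = data[-idx, , drop = FALSE]
  )
}

# Usage with a stand-in data frame of 2,100 rows (replace with the real test data)
toy <- data.frame(id = seq_len(2100))
parts <- splitHalf(toy)
c(nrow(parts$validation), nrow(parts$test))  # 1050 1050
```

This is a simple random split, as described above. Because it is not stratified, the two halves will usually not contain exactly 150 points per class.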

Train Model

MicrosoftML provides rxNeuralNet to train neural networks for regression and for binary and multi-class classification. Once the training and test data are ready, you can train a neural network model with Microsoft R Server and the MicrosoftML package. The training data has seven classes, so this is a multi-class classification problem. We therefore set the rxNeuralNet argument "type" to "multiClass"; its default value is "binary".

Sample R code for training a neural network model with the default settings

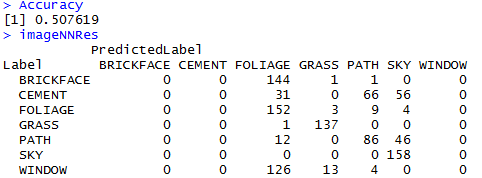
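The sketch below shows a possible text version of a default-settings training call. It assumes the training data is a data frame with the following layout:

- a factor column Class holding the region label;
- 19 other columns holding the features, with syntactically valid names.

buildFormula and trainDefaultNet are hypothetical helpers. The MicrosoftML call requires Microsoft R Server.

```r
# Hypothetical helper: build "Class ~ f1 + f2 + ..." from all non-label columns.
# Assumes feature column names are syntactically valid R names.
buildFormula <- function(data, label = "Class") {
  features <- setdiff(names(data), label)
  as.formula(paste(label, "~", paste(features, collapse = " + ")))
}

# Hypothetical helper: train a multi-class network; apart from `type`,
# every rxNeuralNet argument keeps its default value.
trainDefaultNet <- function(trainData, label = "Class") {
  if (!requireNamespace("MicrosoftML", quietly = TRUE)) {
    stop("MicrosoftML (Microsoft R Server) is not installed")
  }
  MicrosoftML::rxNeuralNet(
    formula = buildFormula(trainData, label),
    data    = trainData,
    type    = "multiClass"   # the default, "binary", only handles two classes
  )
}

# Usage (requires Microsoft R Server):
# model <- trainDefaultNet(trainData)
```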

Performance

Performance Tuning

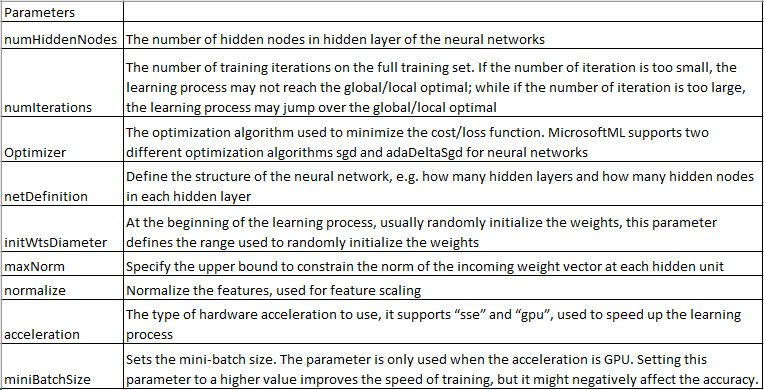

On the test set, the model trained in the previous section with the default settings does not perform well (51% overall accuracy). However, these results alone do not tell us which of the following to do:

- switch to another algorithm;
- keep working on the training data and features;
- tune the model parameters.

rxNeuralNet exposes several parameters for tuning model performance; the full list is in the MicrosoftML documentation. In this section, we use the validation set to tune the parameters and select the best model. We then evaluate that model on the same test data used in the previous section.

Sample R code for tuning the neural network model

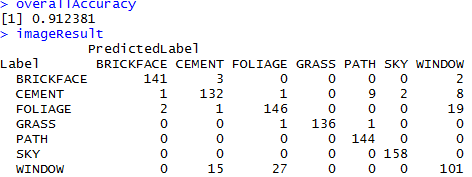
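The sketch below shows one way to organize a validation-set search over the three parameters tuned in this article (optimizer, initWtDiameter, numHiddenNodes). The following are assumptions:

- the grid values;
- the helper names gridSearch and scoreNet;
- the label column Class;
- that rxPredict returns a PredictedLabel column for a multi-class model.

Only gridSearch runs without Microsoft R Server.

```r
# Illustrative grid (assumed values, not the article's settings).
paramGrid <- expand.grid(
  numHiddenNodes = c(50, 100, 200),
  initWtDiameter = c(0.1, 0.5, 1),
  optimizer      = c("sgd", "adaDeltaSgd"),
  stringsAsFactors = FALSE
)

# Hypothetical generic grid search: scoreFn takes a one-row data frame of
# parameters and returns a validation accuracy; the best row is returned.
gridSearch <- function(grid, scoreFn) {
  scores <- vapply(seq_len(nrow(grid)),
                   function(i) scoreFn(grid[i, , drop = FALSE]),
                   numeric(1))
  list(best = grid[which.max(scores), , drop = FALSE], scores = scores)
}

# Hypothetical scorer: train on trainData, report accuracy on validData.
# Requires Microsoft R Server; `formula` is the model formula (label ~ features).
scoreNet <- function(params, trainData, validData, formula, label = "Class") {
  opt <- if (params$optimizer == "sgd") MicrosoftML::sgd() else MicrosoftML::adaDeltaSgd()
  model <- MicrosoftML::rxNeuralNet(formula, data = trainData, type = "multiClass",
                                    numHiddenNodes = params$numHiddenNodes,
                                    initWtDiameter = params$initWtDiameter,
                                    optimizer = opt)
  pred <- RevoScaleR::rxPredict(model, data = validData)
  # PredictedLabel is the assumed name of the predicted-class column
  mean(as.character(pred$PredictedLabel) == as.character(validData[[label]]))
}

# Usage (requires Microsoft R Server):
# result <- gridSearch(paramGrid, function(p) scoreNet(p, trainData, validData, f))
# result$best
```

Selecting parameters on the validation set and reporting accuracy only on the held-out test set keeps the final estimate from being biased by the tuning.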

Performance

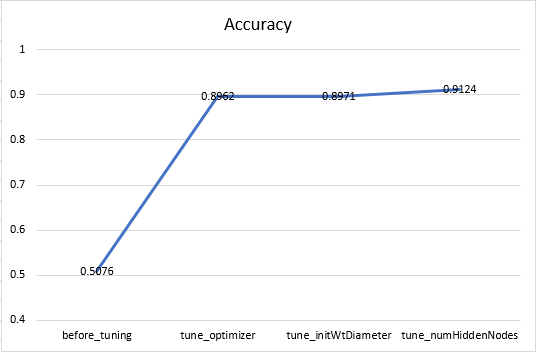

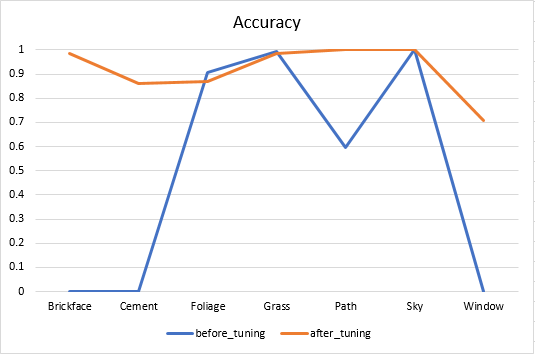

After tuning the parameters optimizer, initWtDiameter, and numHiddenNodes, overall accuracy on the same test set improved from 51% to 91%. The accuracy for the classes brickface, cement, and window was 0 before tuning. After tuning, it increased to 98.6%, 86.3%, and 70.6%, respectively. The figures above show the overall accuracy of the model after tuning different parameters, and the accuracy of each class before and after tuning.
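The overall and per-class accuracies quoted above can be computed from actual and predicted labels, as in this base R sketch. We assume "accuracy of a class" means the fraction of that true class predicted correctly (per-class recall). classAccuracy is a hypothetical helper, and the toy labels are made up for illustration.

```r
# Hypothetical helper: overall accuracy, per-class accuracy (per-class recall)
# and a confusion matrix from actual vs. predicted labels.
classAccuracy <- function(actual, predicted) {
  actual    <- as.character(actual)
  predicted <- as.character(predicted)
  correct   <- actual == predicted
  list(
    overall   = mean(correct),
    perClass  = tapply(correct, actual, mean),
    confusion = table(actual = actual, predicted = predicted)
  )
}

# Usage with toy labels
acc <- classAccuracy(c("sky", "sky", "grass", "cement"),
                     c("sky", "grass", "grass", "sky"))
acc$overall   # 0.5
acc$perClass  # cement 0, grass 1, sky 0.5
```

With MicrosoftML, actual would be the label column of the test set, and predicted would be the PredictedLabel column returned by rxPredict (an assumed column name).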

Summary

This example shows that, once the training data and features are fixed, parameter tuning is an important step for improving the performance of a machine learning model.

References

- https://en.wikipedia.org/wiki/Image_segmentation

- Linda G. Shapiro and George C. Stockman (2001): “Computer Vision”, pp 279-325, New Jersey, Prentice-Hall, ISBN 0-13-030796-3

- Barghout, Lauren, and Lawrence W. Lee. "Perceptual information processing system." Paravue Inc. U.S. Patent Application 10/618,543, filed July 11, 2003.

- https://archive.ics.uci.edu/ml/datasets/Image+Segmentation

- https://msdn.microsoft.com/en-us/microsoft-r/microsoftml/microsoftml

- https://msdn.microsoft.com/en-us/microsoft-r/rserver