New IDC Paper Focuses on Ability of Utilities to Handle New Torrents of Smart Grid Data

Utilities concerned about their IT systems’ limited potential for adjusting to the future Smart Grid data environment should read a new IDC Whitepaper, sponsored by Microsoft, which evaluates the likely needs and infrastructure solutions for operating in a transformed energy industry.

The paper, “Building Scale for the Utility Company’s Future,” is available on the front page of the utilities portion of Microsoft’s website, and it succinctly describes the data challenges facing utility companies:

To be prepared for future demands on the utility industry, utility companies will need to choose hardware and infrastructure applications that are fit to purpose and capable of:

· Processing large volumes of data (terabytes and possibly petabytes)

· Providing high data quality at various levels of precision

· Accommodating multiple types of data (transactional and time series, structured and unstructured) matched with the time period

· Maintaining high availability of data with successful failover without data loss

· Supporting multiple latency requirements (high, low, and medium)

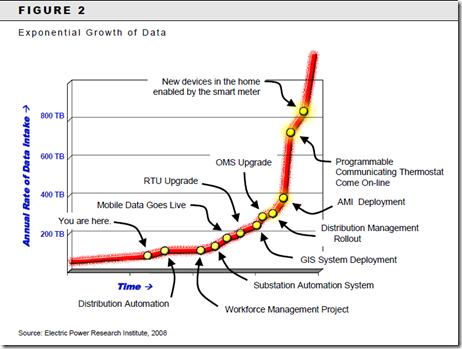

That’s the core of the issue – exponential data growth and handling -- and it’s intellectually understandable from IDC’s introduction. But looking at the challenge with the help of a graph really makes the issue really come to life.

The following Electric Power Research Institute graphic is on page 9 of the IDC paper and I’m sure you’ll degree it’s dramatic:

Thankfully, the paper isn’t intended to scare. It goes on to identify the concomitant data challenges, like quality, matching and security and then offers two case study examples of utilities that have tackled various data challenges in recent years:

AGL: Phoenix Rising

In July 2006, [AGL Energy Limited, Australia’s largest integrated renewable energy company] embarked on an application consolidation initiative — Project Phoenix — that would reduce the total cost of ownership and inefficiency of supporting 11 different customer information systems (CIS) and 100 other applications. The company wanted to take this opportunity not only to reduce costs but also to ensure that all customers are treated in the same way using a single set of processes. According to AGL, "The goal was a system that would enable AGL to house all its customers on a single integrated platform that could scale as new channels emerged and provide consistent information across the various business functions."

Entergy: Preparing the Changing Nuclear Workforce

Like many other owners of nuclear power generation, Entergy is faced with managing the impact of an aging nuclear workforce. According to the U.S. Department of Labor, 30% of the nation's nuclear engineers, 26% of its reactor operators, and 26% of its nuclear technicians are expected to retire by 2012. The challenge for the utility is to capture this experiential knowledge and transfer it to the incoming workforce to be able to access as much knowledge as possible needed to run the plants. Each nuclear facility is unique and has its own rich collection of documentation that needs to be easily accessible to staff.

Information management applications support the transfer of knowledge to the next generation of plant engineers and operators by ensuring that correct and accurate information is readily and easily accessible by plant workers. Since 1995, Entergy had been running eB Nuclear Suite from Enterprise Informatics for Entergy Regulated sites for managing storage/retrieval of plant information and records.

In 2006, Entergy made a decision to upgrade to the latest version of eB for Nuclear suite based on new enhancements and an updated architecture as a result of monitoring changing market conditions to identify newly emerging opportunities to further reduce cost and improve service.

Both case studies explore the role that SQL Server 2005 and SQL Server 2008 played in accomplishing AGL’s and Entergy’s business objectives. The paper offers a chart that explains various SQL Server 2008 features, including integration services, data warehousing, operational data store, analysis services, reporting services, geospatial data types, backup and compression, dynamic management views, and encryption.

It’s my hope you’ll take a look through this paper. It offers a very complete roadmap for those utilities considering their information technology infrastructure needs in this new energy era by identifying and addressing the ways that utilities can apply technology to the challenges they face. – Jon Arnold