How we moved microsoft.com to a p=quarantine DMARC record

In case you hadn’t noticed, Microsoft recently published a DMARC record that says p=quarantine:

_dmarc.microsoft.com. 3600 IN TXT "v=DMARC1; p=quarantine; pct=100; rua=mailto:d@rua.agari.com; ruf=mailto:d@ruf.agari.com; fo=1"

This means that when any sender transmits email into Microsoft’s corp mail servers, or to any other domain that receives email and checks DMARC, and the message is spoofed (it doesn’t pass SPF or DKIM, or it does pass one of those two but doesn’t align with the domain in the From: address), the message will be marked as spam.
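To make the "pass and align" rule concrete, here is a minimal sketch of the check in Python. It is not how any particular receiver implements it. The function names are made up for illustration, and `org_domain` naively keeps the last two labels of a name, whereas real receivers consult the Public Suffix List.

```python
from typing import Optional


def org_domain(domain: str) -> str:
    """Naive organizational domain: keep the last two labels (an assumption)."""
    labels = domain.lower().rstrip(".").split(".")
    return ".".join(labels[-2:])


def aligned(auth_domain: Optional[str], from_domain: str) -> bool:
    """Relaxed alignment: both names share the same organizational domain."""
    if not auth_domain:
        return False
    return org_domain(auth_domain) == org_domain(from_domain)


def dmarc_passes(from_domain: str,
                 spf_pass: bool, spf_domain: Optional[str],
                 dkim_pass: bool, dkim_domain: Optional[str]) -> bool:
    """DMARC passes if SPF or DKIM passes AND that result aligns with From:."""
    spf_ok = spf_pass and aligned(spf_domain, from_domain)
    dkim_ok = dkim_pass and aligned(dkim_domain, from_domain)
    return bool(spf_ok or dkim_ok)
```

For example, a message with From: microsoft.com that passes SPF for some unrelated bulk mailer's domain still fails, because the SPF domain does not align with the From: domain.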

So how did we do it?

Let me run you through the steps because it took a couple of years.

1. First, the domain MUST publish an SPF record

Microsoft's SPF record is the following:

microsoft.com. 3600 IN TXT "v=spf1 include:_spf-a.microsoft.com include:_spf-b.microsoft.com include:_spf-c.microsoft.com include:_spf-ssg-a.microsoft.com include:spf-a.hotmail.com ip4:147.243.128.24 ip4:147.243.128.26 ip4:147.243.1.153 ip4:147.243.1.47 ip4:147.243.1.48 -all"

It used to be over the 10 DNS-lookup limit, and it was soft fail ~all instead of hard fail -all.
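If you want to check your own record against the lookup limit, the counting can be sketched roughly like this. It assumes you have already fetched the relevant TXT records into a dictionary (the sample below uses made-up example.com records, not Microsoft's), and it counts the terms that trigger DNS queries under the SPF spec: include, a, mx, ptr, exists, and redirect.

```python
from typing import Dict, Optional, Set

# Hypothetical records for illustration only.
SAMPLE_RECORDS = {
    "example.com": "v=spf1 include:_spf-a.example.com "
                   "include:_spf-b.example.com mx ip4:192.0.2.10 -all",
    "_spf-a.example.com": "v=spf1 ip4:198.51.100.0/24 "
                          "include:_spf-c.example.com ~all",
    "_spf-b.example.com": "v=spf1 a:mail.example.com -all",
    "_spf-c.example.com": "v=spf1 ip4:203.0.113.0/24 -all",
}

LOOKUP_TERMS = ("a", "mx", "ptr", "exists", "include", "redirect")


def count_spf_lookups(domain: str, records: Dict[str, str],
                      seen: Optional[Set[str]] = None) -> int:
    """Count DNS-querying terms, following include/redirect recursively."""
    seen = set() if seen is None else seen
    if domain in seen:  # loop guard; a repeated target is expanded only once
        return 0
    seen.add(domain)
    total = 0
    for term in records.get(domain, "").split()[1:]:  # skip "v=spf1"
        term = term.lstrip("+-~?").lower()
        # Strip any ":domain", "=domain" or "/cidr" suffix to get the name.
        name = term.split(":", 1)[0].split("=", 1)[0].split("/", 1)[0]
        if name in LOOKUP_TERMS:
            total += 1
        if name in ("include", "redirect"):
            target = term.split(":" if name == "include" else "=", 1)[1]
            total += count_spf_lookups(target, records, seen)
    return total


lookups = count_spf_lookups("example.com", SAMPLE_RECORDS)
print(f"{lookups} of 10 allowed DNS lookups used")
```

The ip4 and all terms cost nothing, which is why listing IPs directly is one way to get back under the limit.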

2. Second, the domain MUST publish a DMARC record.

I recommend you send your DMARC reports to a 3rd party to avoid having to parse XML reports yourself. Options include Agari, Valimail, and DMARCIAN. Microsoft uses Agari (Agari pre-dated the other two options [1] at the time we published DMARC records for Microsoft).

3. Start looking at DMARC reports

Various 3rd parties then started sending all of the DMARC reports back to Agari. This is important because Agari's tools parse through the DMARC reports and make it possible to see who was and was not sending email in an SPF-compliant way.
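To give a sense of what those tools do under the hood, here is a rough sketch of pulling failing senders out of one aggregate report with Python's standard library. It is not Agari's implementation. The sample report is invented and heavily trimmed, and the sketch assumes the report has no XML namespace (some real reports do).

```python
import xml.etree.ElementTree as ET
from collections import Counter

# Invented, trimmed-down aggregate report for illustration only.
SAMPLE_REPORT = """<feedback>
  <record>
    <row>
      <source_ip>192.0.2.10</source_ip>
      <count>120</count>
      <policy_evaluated>
        <disposition>none</disposition><dkim>pass</dkim><spf>pass</spf>
      </policy_evaluated>
    </row>
    <identifiers><header_from>example.com</header_from></identifiers>
  </record>
  <record>
    <row>
      <source_ip>203.0.113.7</source_ip>
      <count>45</count>
      <policy_evaluated>
        <disposition>none</disposition><dkim>fail</dkim><spf>fail</spf>
      </policy_evaluated>
    </row>
    <identifiers><header_from>example.com</header_from></identifiers>
  </record>
</feedback>"""


def failing_sources(report_xml: str) -> Counter:
    """Sum message counts per source IP where both DKIM and SPF failed."""
    failures = Counter()
    for record in ET.fromstring(report_xml).iter("record"):
        row = record.find("row")
        evaluated = row.find("policy_evaluated")
        dkim_ok = evaluated.findtext("dkim") == "pass"
        spf_ok = evaluated.findtext("spf") == "pass"
        if not (dkim_ok or spf_ok):
            failures[row.findtext("source_ip")] += int(row.findtext("count"))
    return failures


for ip, count in failing_sources(SAMPLE_REPORT).most_common():
    print(ip, count)
```

Multiply this by thousands of reports per day from many receivers and you can see why I recommend letting a 3rd party do it.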

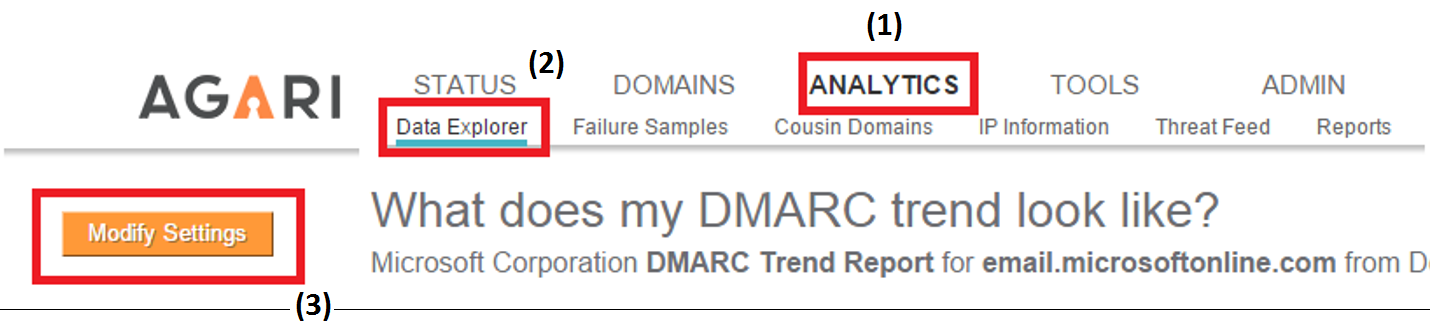

To do this, I would log in to the Agari portal and navigate to ANALYTICS > Data Explorer and then Modify Settings.

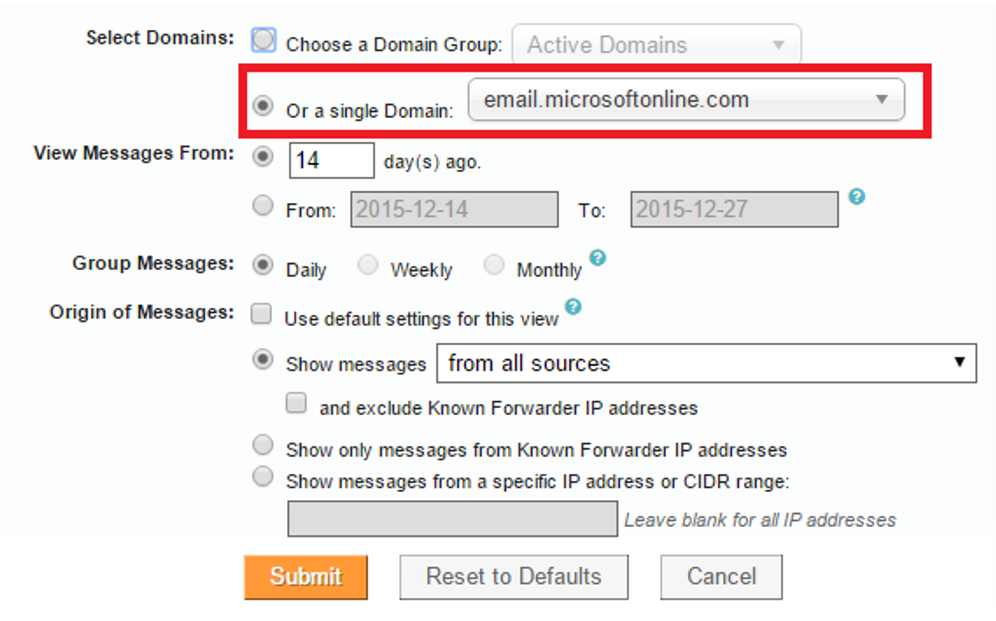

I would change the report settings to the single domain I wanted to look at (in this case, Microsoft). If I didn't change it, I would be looking at the entire set of Microsoft-protected domains.

In the above picture, it shows "email.microsoftonline.com" but I could select any domain I wanted.

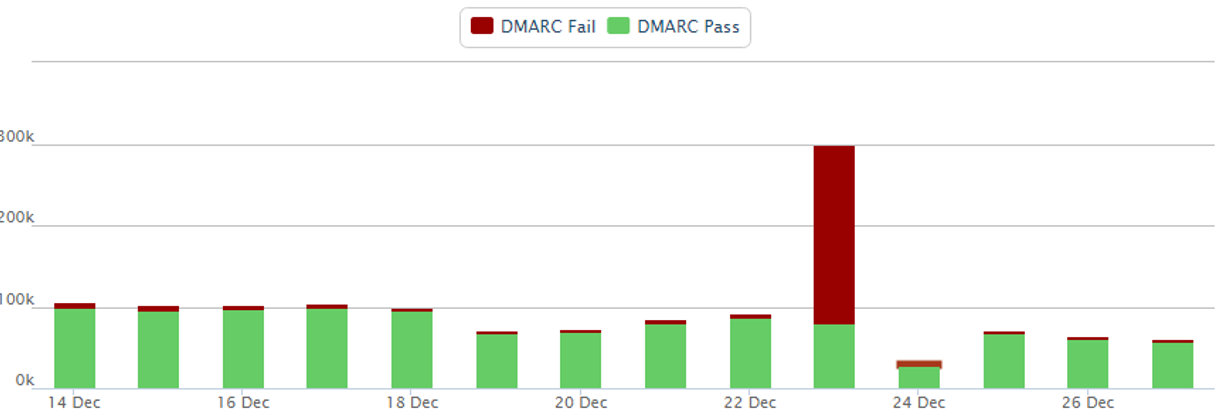

When selected, the DMARC trend would show up for that domain. Here's a screenshot from last December for email.microsoftonline.com. You can see that on Dec 23 there were a lot of messages failing DMARC. That doesn't mean that it was a large spam run; it could have been a large bulk email campaign from an unauthorized sender who was sending on Microsoft's behalf. Remember, at this point, email.microsoftonline.com only had a soft fail (or hard fail, I forget) in its SPF record, and a DMARC record of p=none, so nobody would have junked this email automatically.

But other than that, most messages were passing authentication which is a good sign. It is the red ones that needed investigation.

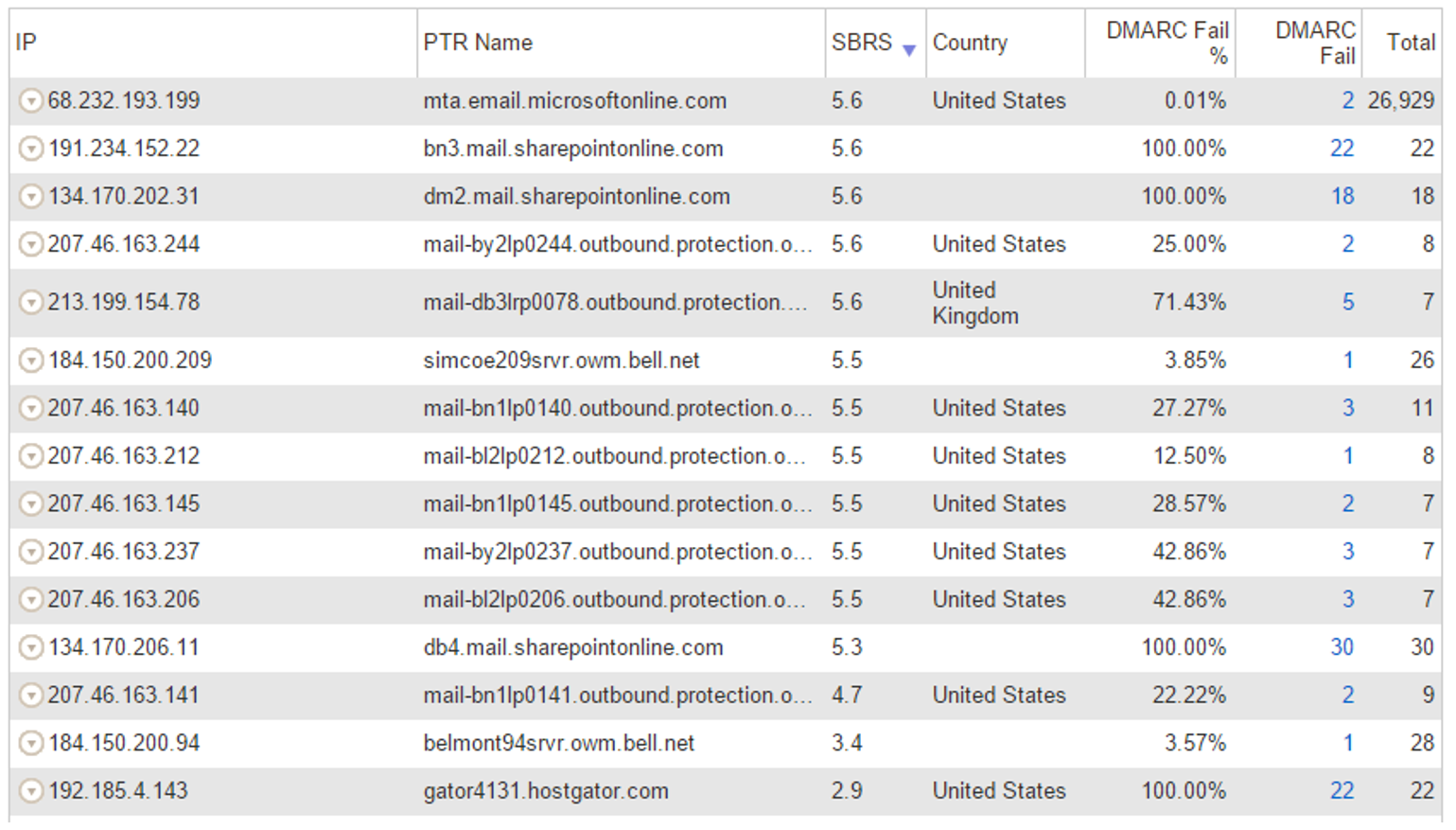

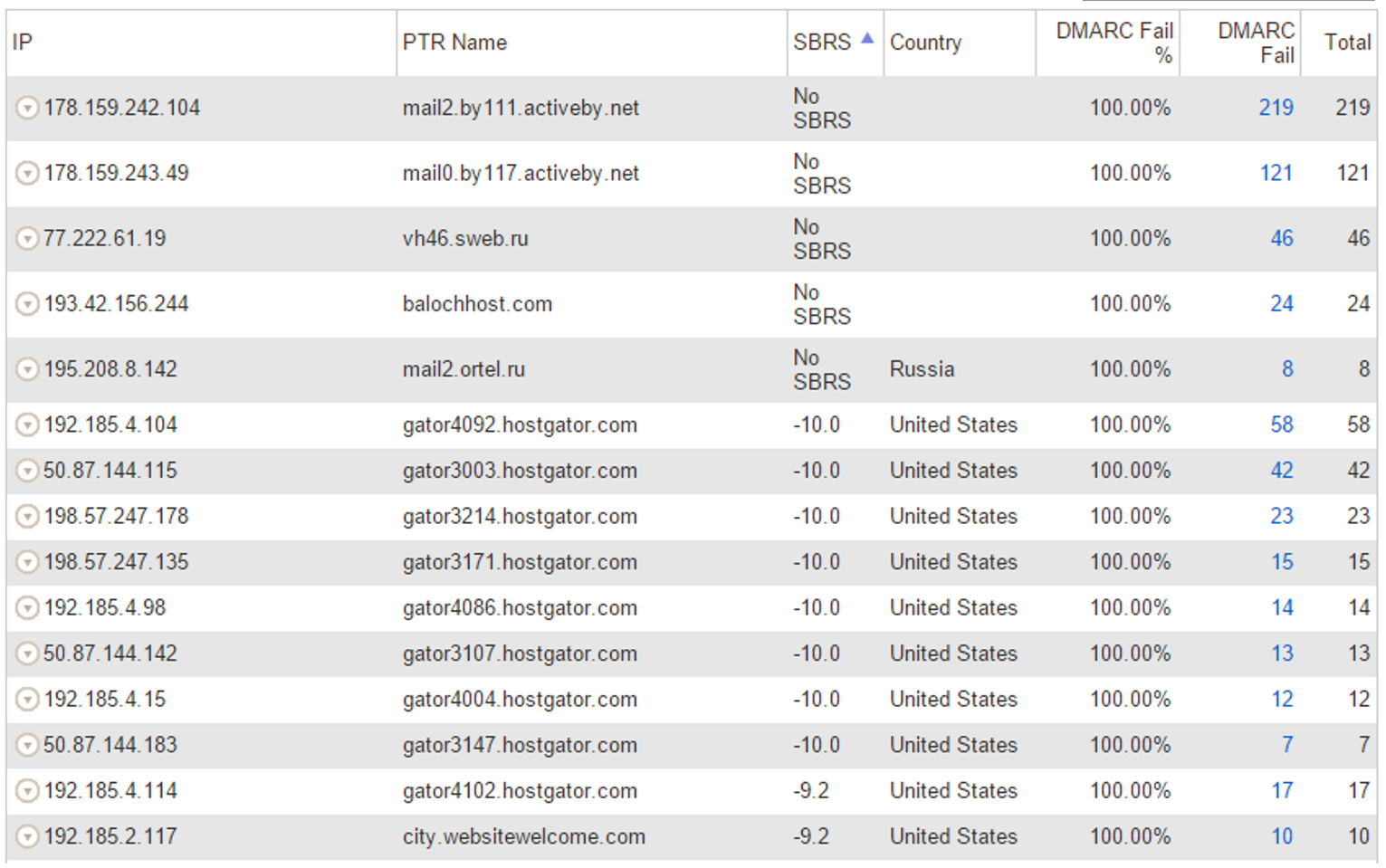

Agari inventories all sending IPs and grades them by SBRS - Senderbase Reputation Score, which is Cisco/Ironport's IP reputation. The higher the score, the better the reputation of the sending IP. In general, anything over 0.2 is probably a good IP or a forwarder. Anything less than zero is suspicious. Anything without an SBRS is probably suspicious but it depends on the PTR record.
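Those rules of thumb can be written as a small triage helper. This is only a sketch of my own heuristics, not anything Agari or Cisco publishes. The function name and labels are made up, and the band between 0 and 0.2 is labeled "unclear" because the rules above don't cover it.

```python
from typing import Optional


def triage_by_sbrs(sbrs: Optional[float]) -> str:
    """Rough first-pass label for a sending IP based on its SBRS."""
    if sbrs is None:
        # No score at all: lean suspicious, but look at the PTR record.
        return "probably suspicious (check PTR)"
    if sbrs > 0.2:
        return "probably good or a forwarder"
    if sbrs < 0:
        return "suspicious"
    return "unclear"  # assumption: 0 to 0.2 is not covered by the rules


for score in (3.5, -2.1, 0.1, None):
    print(score, "->", triage_by_sbrs(score))
```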

For the above IPs that were failing DMARC, sorted by highest to lowest SBRS:

a) *.outbound.protection.outlook.com is Office 365 forwarding to another service like Hotmail, Gmail, Yahoo, etc. As of Sept 2016, Office 365 modifies message content when forwarding email so this can break the DKIM signature. SPF will similarly break, and this is what breaks DMARC. However, you can see below that the number of messages is fairly small, only a handful per day.

b) *.sharepointonline.com is also on the list with a few more messages per day, but still not very high. This may be forwarding.

c) There are a handful of other IPs with good reputation failing DMARC. These are also likely forwarders. As long as the numbers are not too high, this is fine. It is only when these numbers are in the tens of hundreds that this will cause significant FPs.

Quickly glancing over the below, we don't see too many good IPs failing DMARC which is good.

If I sort by lowest-to-highest SBRS:

These IPs are sending reasonably high volumes of DMARC failures but all have terrible reputation. This is balanced against a small handful of good sending IPs (above). In general, email.microsoftonline.com passes DMARC and is mostly spoofed by bad sources except on Dec 23, 2015 above when it was spoofed in large amounts.

This domain is likely safe to move to a more aggressive DMARC record. We published p=quarantine for email.microsoftonline.com because it was fairly straightforward.

4. Go after the more complicated domains by breaking it down one by one

While email.microsoftonline.com wasn't too bad, microsoft.com was much more complicated.

a) There were at least 25 different teams that I could find in the good senders list, e.g., visualstudio [at] microsoft [dot] com. Some of them were sending from 3rd party bulk senders, some were sending from our internal SMTP team (which I discovered while doing this project - they are the ones that send MSN and Outlook.com marketing messages), and some were sending from random mail servers from some of the buildings on Microsoft's campus.

b) To discover these, I would click on the sending IP (sorted by SBRS) and try to look for a message with a DMARC forensic report. If the sending message looked legitimate, I took the localpart of the email address (e.g., visualstudio) and then looked it up in Microsoft's Global Address List (GAL). About 2/3 of the time, it resolved to a distribution list. I then had to go to another internal tool and look up the owner of that distribution list and contact them personally.

For the other 1/3, I had to do a lot of creative searching of the Global Address List. If I found a sending email address that failed DMARC and it had the alias healthvault [at] microsoft [dot] com and I couldn't find it in the GAL, I had to type around using auto-complete until I found something that looked similar. Sometimes I had to do it 3, 4, or 5 times. But I managed to track them all down.

When I did, I would get them to either send from a subdomain (e.g., email.microsoft.com) if they were sending from a 3rd party like SendGrid, use the internal SMTP solution, or send from a real mailbox within Microsoft IT's infrastructure. I sometimes had to cut a series of tickets requesting DNS updates to @microsoft.com, @email.microsoft.com, and a couple of other subdomains to ensure that they had the right 3rd party bulk mailers in the SPF record. I had to do this for 25 different teams.

If that sounds like a lot of work, that's because it was.

But, nobody pushed back on it. Whenever I contacted anyone, they would make the required changes. Sometimes within a day, sometimes within two weeks. But it got done.

When we figured we had enough senders covered, we published SPF hard fail in the SPF record for microsoft.com.

5. Wait for any additional false positive complaints and fix them as you find them

We knew that was probably going to be insufficient. Even though a lot of third parties send email as Microsoft to the outside world, probably just as many send it only into Microsoft, not to third parties, which meant they were being sent through Office 365. At the time, Office 365 didn't send DMARC reports (we still don't, as of this writing) and we also didn't have a good way to detect who was spoofing the domain. But because Microsoft published an SPF hard fail, these messages would frequently get marked as spam.

So, as we found one-off senders, we simply added them to local overrides within the Office 365 service. We either added them to IP Allow lists, or we added them to Exchange Transport Rules that skip filtering, or we jiggered around the SPF record to get them in if it made sense.

We did this for about a month but at no point did we revert the SPF hard fail. Once we reached that point, there was no going back.

Doing a proactive analysis didn't find all the potential false positives; it was only through publishing a more aggressive policy that we were able to find more legitimate senders.

6. Set up DKIM for your corporate traffic

We continued in this manner for about a year. Occasionally a 3rd party sender would ask us to set up DKIM, so I would assist by creating the necessary change requests for the DNS team to make the update. Along the way, I found that there were at least five different processes for updating DNS records for domains owned by Microsoft.

I wouldn't be surprised if it's the same at other large organizations.

But the day came when Office 365 released outbound DKIM signing. The very first customer I got this working for was Microsoft itself. I knew right then that this was the key to getting Microsoft to p=quarantine.

For you see, you should not go to p=quarantine without setting up both SPF and DKIM. If one fails, you can usually fall back on the other to rescue a message. I knew that a lot of Microsoft's corp traffic is forwarded so it had to have DKIM signatures attached. I knew that other third parties don't set up DKIM, but I also knew that a large chunk of them could. Believe me, if legitimate senders can't get their email delivered, they find a way to contact me to help. At that point, I would either get them into the SPF record or, more preferably, set up DKIM so they could sign on Microsoft's behalf.

7. Publish an even more aggressive policy for messages sent to your domain

At this point, we were ready to roll.

Within Microsoft's tenant settings in Office 365, we created an Exchange Transport Rule (ETR) - if the message failed DMARC, mark the message as spam. This was the same as publishing a DMARC record of p=quarantine internally, and p=none externally.

Before we did this, I pulled all the data for messages sending into Microsoft and failing DMARC, looking for good senders. This was much harder because I didn't have Agari's portal. We then added a bunch of good IPs into an ETR Allow list (sending IP + From: domain = microsoft.com) and went live with the rule.
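The logic of the rule plus its allow list is simple enough to sketch in Python. To be clear, this is not ETR syntax and not the actual rule we deployed. The function name is made up, and the allow-listed network is a documentation-range placeholder rather than a real Microsoft sender.

```python
from ipaddress import ip_address, ip_network

PROTECTED_DOMAIN = "microsoft.com"
# Hypothetical known-good senders (placeholder documentation range).
ALLOWED_NETWORKS = [ip_network("192.0.2.0/24")]


def inbound_verdict(sending_ip: str, from_domain: str,
                    dmarc_pass: bool) -> str:
    """Mark DMARC failures as spam unless IP + From: domain is allow-listed."""
    if dmarc_pass:
        return "deliver"
    ip_allowed = any(ip_address(sending_ip) in net
                     for net in ALLOWED_NETWORKS)
    if ip_allowed and from_domain.lower() == PROTECTED_DOMAIN:
        return "deliver"  # local override for a known-good sender
    return "spam"


print(inbound_verdict("192.0.2.25", "microsoft.com", dmarc_pass=False))
print(inbound_verdict("203.0.113.9", "microsoft.com", dmarc_pass=False))
```

Pairing the IP with the From: domain matters: allowing the IP alone would let that sender spoof any domain it liked.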

We immediately started seeing false positives all over the place. But we didn't roll back the rule, we just added them into the local overrides. This lasted for about a month and then it stabilized. Yes, people didn't like that legitimate messages were going to junk; but we explained that we were clamping down on spoofing and phishing. When we added the local overrides, the problem went away.

8. Publish a stronger DMARC policy and roll it up slowly, fixing false positives as you find them

We waited several months to ensure that nothing else would break. The occasional good sender would ask to be allowed to send. Microsoft's SPF record is full so it's not easy to add new senders; we try to add only senders from infrastructure that we control (e.g., our own data centers, or sending IPs in Azure that are locked to Microsoft).

We then decided to publish a DMARC record of p=quarantine at 1%. I knew that it wouldn't affect inbound traffic to Microsoft Corp because we'd had the equivalent in place for a few months. I wasn't sure what would happen for external email.

We published it and... almost nothing happened.

I may be misremembering, but the only incident I can remember (or maybe it's the only big incident) is that a couple of mailing lists were being sent to Gmail, and they were being junked. I'm not sure if all messages were being junked, or only 1%, but it sure felt like it was all of them.

Fortunately, Gmail's system learns to override DMARC failures if you rescue them enough. The problem seemed to resolve itself eventually.

We then moved to 5%, then 10%, then 25%, then 60%. The whole time we waited for false positive complaints, but almost none came. We finally published p=quarantine at 100%. Nothing happened; I haven't seen any major complaints since we did that. I think it's because we cleaned up so much ahead of time and were able to predict in advance what would happen. And once you reach that harder security stance, it's rare to flip it back the other way. These days, at least with regards to email security, the direction only moves forward.
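For reference, here is a sketch of what the ramp looks like as records, plus how a receiver is supposed to treat pct under the DMARC spec: the policy is applied to roughly that percentage of failing messages, and the rest get the next-weaker policy. The helper names are made up, and real receivers are free to sample differently (or, like Gmail, to layer their own learning on top).

```python
import random

RAMP = [1, 5, 10, 25, 60, 100]  # the steps we went through

# Next-weaker policy for failing messages that fall outside the sample.
FALLBACK = {"reject": "quarantine", "quarantine": "none", "none": "none"}


def dmarc_record(policy: str, pct: int, rua: str) -> str:
    """Build the TXT value for one step of the ramp."""
    if not 0 <= pct <= 100:
        raise ValueError("pct must be between 0 and 100")
    return f"v=DMARC1; p={policy}; pct={pct}; rua=mailto:{rua}"


def applied_policy(policy: str, pct: int, rng: random.Random) -> str:
    """Policy a receiver would apply to one failing message under pct."""
    if rng.random() * 100 < pct:
        return policy
    return FALLBACK[policy]


for step in RAMP:
    print(dmarc_record("quarantine", step, "d@rua.agari.com"))
```

So at pct=1, about one failing message in a hundred should be quarantined and the rest treated as p=none, which is why I was surprised when it felt like every mailing list message to Gmail was being junked.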

Can I summarize this quickly?

Hmm, maybe.

- If you're going through the process of tightening your email authentication records, you don't get that much pushback as long as you take a great deal of care ahead of time to avoid problems down the road. If you do that, you will build a lot of trust. This is even more true if you have a plan, publish it, and execute on it.

- The number of messages that you can prevent being spoofed doesn't move the needle that much when you're trying to justify to your superiors why you should do that work. Hundreds of messages per day has about the same psychological impact as millions per day. However, blocking several hundred or thousands of legitimate emails per day is really, really bad. That undermines your effort, so avoid that at all costs.

- It takes a long time to do the work. It also requires a lot of analysis, so make sure you have the right tools.

- You're going to get false positives no matter what. Be prepared to fix them.

- Once you go strict, don't go back (as long as you've done #1-3). Just fix the problems when you find them.

So that's how we published p=quarantine for Microsoft.com. It took a while, but now it's complete. Hopefully others will find this helpful.

[1] Sometimes people ask me which service they should use. I respond by saying that DMARCIAN has a lot of do-it-yourself tools that are good for small and medium sized organizations. Agari is geared towards larger organizations but has since branched out into more products besides DMARC reports. Valimail does DMARC reports but they help you semi-automate the procedure so you can get to p=quarantine/reject faster than if you do it yourself.