What Azure Machine Learning Algorithm Should You Use?

Azure Machine Learning Studio comes with a large number of machine learning algorithms that you can use to solve predictive analytics problems.

First, the choice depends on the answers to the following questions:

1. What are the size, quality, and nature of the data?

2. What do you want to do with the answer?

3. How was the math of the algorithm translated into instructions for the computer you are using?

4. How much time do you have?

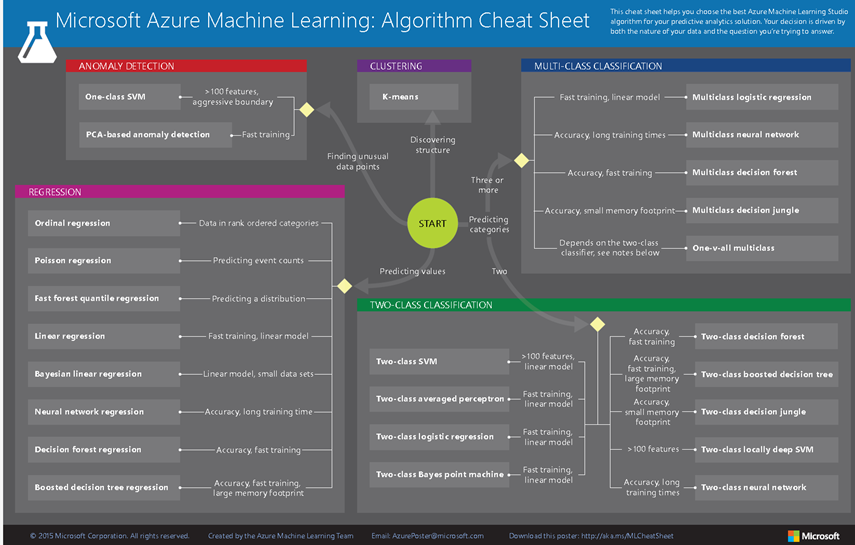

The accompanying infographic demonstrates how the four types of machine learning algorithms (regression, anomaly detection, clustering, and classification) can be used to answer your machine learning questions.

Here are four tips to help you choose:

Tip 1. The Machine Learning Algorithm Cheat Sheet

The Microsoft Azure Machine Learning Algorithm Cheat Sheet helps you choose the right machine learning algorithm for your predictive analytics solutions from the Microsoft Azure Machine Learning library of algorithms. To download the cheat sheet and follow along with this article, go to Machine learning algorithm cheat sheet for Microsoft Azure Machine Learning Studio. This cheat sheet is perfect for students: it's aimed at someone with undergraduate-level machine learning knowledge who is trying to choose an algorithm to start with in Azure Machine Learning Studio. The cheat sheet therefore makes some generalizations and oversimplifications, but it will point you in a safe direction. It also means that there are lots of algorithms not listed here. As Azure Machine Learning grows to encompass a more complete set of available methods, the cheat sheet will be updated to include these.

Tip 2. How to use the cheat sheet

Read the path and algorithm labels on the chart as "For <path label> use <algorithm>." For example, "For speed use two-class logistic regression." Sometimes more than one branch will apply. Sometimes none of them will be a perfect fit. They're intended to be rule-of-thumb recommendations, so don't worry about them being exact. Several data scientists I talked with said that the only sure way to find the very best algorithm is to try all of them. Here's an example from the Cortana Intelligence Gallery of an experiment that tries several algorithms against the same data and compares the results: Compare Multi-class Classifiers: Letter recognition.
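The "try several and compare" idea can be sketched outside Studio in a few lines of plain Python. This is only an illustration: the three toy learners, the data values, and the names `compare` and `LEARNERS` are assumptions made for the example, not Azure Machine Learning modules.

```python
from collections import Counter

# Toy labelled data: (feature vector, label). Values are made up for illustration.
TRAIN = [((1.0, 1.2), "a"), ((0.8, 1.0), "a"), ((1.1, 0.9), "a"),
         ((3.0, 3.2), "b"), ((3.1, 2.9), "b"), ((2.9, 3.0), "b")]
TEST = [((1.0, 1.0), "a"), ((3.0, 3.0), "b"), ((0.9, 1.1), "a"), ((3.2, 3.1), "b")]


def sq_dist(p, q):
    """Squared Euclidean distance between two feature vectors."""
    return sum((x - y) ** 2 for x, y in zip(p, q))


def fit_majority(train):
    """Baseline: always predict the most common training label."""
    label = Counter(lbl for _, lbl in train).most_common(1)[0][0]
    return lambda x: label


def fit_nearest_centroid(train):
    """Predict the label whose mean feature vector is closest."""
    sums, counts = {}, Counter()
    for x, lbl in train:
        counts[lbl] += 1
        acc = sums.setdefault(lbl, [0.0] * len(x))
        for i, v in enumerate(x):
            acc[i] += v
    centroids = {lbl: tuple(v / counts[lbl] for v in acc) for lbl, acc in sums.items()}
    return lambda x: min(centroids, key=lambda lbl: sq_dist(x, centroids[lbl]))


def fit_nearest_neighbour(train):
    """Predict the label of the single closest training example."""
    return lambda x: min(train, key=lambda ex: sq_dist(x, ex[0]))[1]


def accuracy(model, test):
    """Fraction of test examples the model labels correctly."""
    return sum(model(x) == lbl for x, lbl in test) / len(test)


def compare(learners, train, test):
    """Train every learner on the same data and score each on the same test set."""
    return {name: accuracy(fit(train), test) for name, fit in learners.items()}


LEARNERS = {
    "majority class": fit_majority,
    "nearest centroid": fit_nearest_centroid,
    "nearest neighbour": fit_nearest_neighbour,
}

results = compare(LEARNERS, TRAIN, TEST)
for name, score in results.items():
    print(f"{name}: {score:.2f}")
```

The point of the design is that every candidate sees exactly the same training and test data, so the scores are directly comparable.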

Tip: To download and print a diagram that gives an overview of the capabilities of Machine Learning Studio, see Overview diagram of Azure Machine Learning Studio capabilities.

Tip 3. Understanding Supervised vs Unsupervised vs Reinforcement learning algorithms

Supervised learning algorithms make predictions based on a set of examples. For instance, historical stock prices can be used to hazard guesses at future prices. Each example used for training is labelled with the value of interest—in this case the stock price. A supervised learning algorithm looks for patterns in those value labels. It can use any information that might be relevant—the day of the week, the season, the company's financial data, the type of industry, the presence of disruptive geopolitical events—and each algorithm looks for different types of patterns. After the algorithm has found the best pattern it can, it uses that pattern to make predictions for unlabelled testing data—tomorrow's prices.

This is a popular and useful type of machine learning. With one exception, all of the modules in Azure Machine Learning are supervised learning algorithms. There are several specific types of supervised learning that are represented within Azure Machine Learning: classification, regression, and anomaly detection.

Classification. When the data are being used to predict a category, supervised learning is also called classification. This is the case when labelling an image as a picture of either a 'cat' or a 'dog'. When there are only two choices, this is called two-class or binomial classification. When there are more categories, as when predicting the winner of the NCAA March Madness tournament, this problem is known as multi-class classification.

Regression. When a value is being predicted, as with stock prices, supervised learning is called regression.
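As a rough illustration of regression, the sketch below fits a straight line to a handful of labelled examples with ordinary least squares and then predicts a value for an unseen input. The numbers are invented, and `fit_line` and `predict` are hypothetical helper names, not Azure Machine Learning functions.

```python
def fit_line(xs, ys):
    """Ordinary least squares for one feature: returns (slope, intercept)."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    return slope, intercept


def predict(model, x):
    """Apply a fitted (slope, intercept) pair to a new input."""
    slope, intercept = model
    return slope * x + intercept


# Labelled training examples: day number -> price (made-up values).
days = [1, 2, 3, 4, 5]
prices = [10.0, 12.0, 14.0, 16.0, 18.0]

model = fit_line(days, prices)
tomorrow = predict(model, 6)  # a numeric value, not a category
print(model, tomorrow)
```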

Anomaly detection. Sometimes the goal is to identify data points that are simply unusual. In fraud detection, for example, any highly unusual credit card spending patterns are suspect. The possible variations are so numerous and the training examples so few that it's not feasible to learn what fraudulent activity looks like. The approach that anomaly detection takes is to simply learn what normal activity looks like (using a history of non-fraudulent transactions) and identify anything that is significantly different.
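A minimal sketch of that "learn normal, flag the rest" approach, assuming a single numeric feature (transaction amount) and a simple three-standard-deviation rule. Both the rule and the names `fit_normal_profile` and `is_anomaly` are illustrative assumptions; the anomaly detection modules in Azure Machine Learning use different methods.

```python
from statistics import mean, stdev


def fit_normal_profile(history):
    """Summarise normal behaviour as the mean and standard deviation of past amounts."""
    return mean(history), stdev(history)


def is_anomaly(amount, profile, threshold=3.0):
    """Flag an amount that lies more than `threshold` standard deviations from the mean."""
    mu, sigma = profile
    return abs(amount - mu) > threshold * sigma


# History of non-fraudulent transaction amounts (made-up values).
normal_history = [20.0, 25.0, 22.0, 30.0, 27.0, 24.0, 26.0]
profile = fit_normal_profile(normal_history)

print(is_anomaly(28.0, profile))   # a typical amount
print(is_anomaly(500.0, profile))  # far outside the learned range
```

Note that no fraudulent examples are needed for training; only the normal history is used.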

In unsupervised learning, data points have no labels associated with them. Instead, the goal of an unsupervised learning algorithm is to organize the data in some way or to describe its structure. This can mean grouping it into clusters or finding different ways of looking at complex data so that it appears simpler or more organized.
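Grouping unlabelled points into clusters can be sketched with a bare-bones, one-dimensional version of k-means. The data, the starting centroids, and the function name `kmeans_1d` are assumptions for illustration; this is not the K-means module in Studio.

```python
def kmeans_1d(points, centroids, iterations=10):
    """Alternate between assigning points to the nearest centroid and re-averaging."""
    centroids = list(centroids)
    clusters = []
    for _ in range(iterations):
        # Assignment step: each point joins the cluster of its nearest centroid.
        clusters = [[] for _ in centroids]
        for p in points:
            nearest = min(range(len(centroids)), key=lambda i: abs(p - centroids[i]))
            clusters[nearest].append(p)
        # Update step: move each centroid to the mean of its cluster (keep it if empty).
        centroids = [sum(c) / len(c) if c else centroids[i]
                     for i, c in enumerate(clusters)]
    return centroids, clusters


# Unlabelled data with two visible groups (made-up values).
points = [1.0, 1.5, 2.0, 9.0, 9.5, 10.0]
centroids, clusters = kmeans_1d(points, centroids=[0.0, 5.0])
print(centroids, clusters)
```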

In reinforcement learning, the algorithm gets to choose an action in response to each data point. The learning algorithm also receives a reward signal a short time later, indicating how good the decision was. Based on this, the algorithm modifies its strategy in order to achieve the highest reward. Currently there are no reinforcement learning algorithm modules in Azure Machine Learning. Reinforcement learning is common in robotics, where the set of sensor readings at one point in time is a data point, and the algorithm must choose the robot's next action. It is also a natural fit for Internet of Things applications.
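The choose-an-action, receive-a-reward, adjust-the-strategy loop can be sketched with a deliberately simplified agent. The assumptions are significant: there is no state or sensor reading, rewards are fixed per action, and exploration comes from optimistic initial estimates instead of a full reinforcement learning method. The names `run_agent` and `reward_for` are hypothetical.

```python
def run_agent(reward_fn, actions, steps=20, optimistic_start=10.0):
    """Greedy agent: pick the action with the best estimate, then update it from the reward."""
    estimates = {a: optimistic_start for a in actions}  # optimistic values force a first try
    counts = {a: 0 for a in actions}
    total_reward = 0.0
    for _ in range(steps):
        action = max(actions, key=lambda a: estimates[a])
        reward = reward_fn(action)  # the reward signal arrives after acting
        counts[action] += 1
        # Incremental average of the rewards observed for this action.
        estimates[action] += (reward - estimates[action]) / counts[action]
        total_reward += reward
    return estimates, counts, total_reward


def reward_for(action):
    """Hypothetical environment: 'right' is the better action."""
    return {"left": 0.2, "right": 1.0}[action]


estimates, counts, total_reward = run_agent(reward_for, ["left", "right"])
print(counts, total_reward)
```

After trying each action once, the agent settles on the one with the higher observed reward.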

Tip 4. Considerations when choosing an algorithm

Getting the most accurate answer possible isn't always necessary. Sometimes an approximation is adequate, depending on what you want to use it for. If that's the case, you may be able to cut your processing time dramatically by sticking with more approximate methods. Another advantage of more approximate methods is that they naturally tend to avoid overfitting.

The number of minutes or hours necessary to train a model varies a great deal between algorithms. Training time is often closely tied to accuracy—one typically accompanies the other. In addition, some algorithms are more sensitive to the number of data points than others. When time is limited it can drive the choice of algorithm, especially when the data set is large.

Lots of machine learning algorithms make use of linearity. Linear classification algorithms assume that classes can be separated by a straight line (or its higher-dimensional analog). These include logistic regression and support vector machines (as implemented in Azure Machine Learning). Linear regression algorithms assume that data trends follow a straight line. These assumptions aren't bad for some problems, but on others they bring accuracy down. Despite their dangers, linear algorithms are very popular as a first line of attack. They tend to be algorithmically simple and fast to train.
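To see how the linearity assumption can help or hurt, the sketch below trains a simple perceptron (a linear classifier) on two tiny data sets: logical AND, which a straight line can separate, and XOR, which no straight line can. The perceptron here is a generic textbook version with hypothetical function names, not the averaged perceptron module in Azure Machine Learning.

```python
def train_perceptron(samples, epochs=50, learning_rate=1.0):
    """Classic perceptron rule for two features; stops early once an epoch has no errors."""
    w = [0.0, 0.0]
    b = 0.0
    for _ in range(epochs):
        errors = 0
        for (x1, x2), target in samples:
            predicted = 1 if w[0] * x1 + w[1] * x2 + b > 0 else 0
            update = learning_rate * (target - predicted)
            if update != 0:
                w[0] += update * x1
                w[1] += update * x2
                b += update
                errors += 1
        if errors == 0:
            break
    return w, b


def accuracy(samples, w, b):
    """Fraction of samples on the correct side of the learned line."""
    hits = sum((1 if w[0] * x1 + w[1] * x2 + b > 0 else 0) == target
               for (x1, x2), target in samples)
    return hits / len(samples)


AND_DATA = [((0, 0), 0), ((0, 1), 0), ((1, 0), 0), ((1, 1), 1)]  # linearly separable
XOR_DATA = [((0, 0), 0), ((0, 1), 1), ((1, 0), 1), ((1, 1), 0)]  # not linearly separable

and_accuracy = accuracy(AND_DATA, *train_perceptron(AND_DATA))
xor_accuracy = accuracy(XOR_DATA, *train_perceptron(XOR_DATA))
print(and_accuracy, xor_accuracy)
```

The linear model fits AND perfectly but can never classify all four XOR points correctly, which is the accuracy penalty the paragraph above warns about.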

Parameters are the knobs a data scientist gets to turn when setting up an algorithm. They are numbers that affect the algorithm's behaviour, such as error tolerance or number of iterations, or options between variants of how the algorithm behaves. The training time and accuracy of the algorithm can sometimes be quite sensitive to getting just the right settings. Typically, algorithms with large numbers of parameters require the most trial and error to find a good combination.

Alternatively, there is a parameter sweeping module block in Azure Machine Learning that automatically tries all parameter combinations at whatever granularity you choose. While this is a great way to make sure you've spanned the parameter space, the time required to train a model increases exponentially with the number of parameters. The upside is that having many parameters typically indicates that an algorithm has greater flexibility. It can often achieve very good accuracy, provided you can find the right combination of parameter settings.

For certain types of data, the number of features can be very large compared to the number of data points. This is often the case with genetics or textual data. The large number of features can bog down some learning algorithms, making training time unfeasibly long. Support Vector Machines are particularly well suited to this case (see below).
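To make the cost of an exhaustive parameter sweep concrete, the sketch below enumerates every combination in a small grid and picks the best one. The parameter names, candidate values, and the stand-in `score` function are all hypothetical; in practice the score would come from training and evaluating a model, and this is not the Studio sweep module itself.

```python
from itertools import product


def grid(param_values):
    """Yield every combination of the candidate values as a dict."""
    names = list(param_values)
    for combo in product(*(param_values[name] for name in names)):
        yield dict(zip(names, combo))


def sweep(param_values, score_fn):
    """Return the combination with the highest score."""
    return max(grid(param_values), key=score_fn)


# Three hypothetical parameters with three candidate values each: 3 ** 3 = 27 runs.
SPACE = {
    "learning_rate": [0.01, 0.1, 1.0],
    "iterations": [10, 100, 1000],
    "tolerance": [1e-2, 1e-3, 1e-4],
}


def score(params):
    """Stand-in for 'train a model and measure accuracy' (illustrative only)."""
    return -(abs(params["learning_rate"] - 0.1)
             + abs(params["iterations"] - 100) / 100
             + abs(params["tolerance"] - 1e-3))


n_runs = len(list(grid(SPACE)))
best = sweep(SPACE, score)
print(n_runs, best)
```

Adding a fourth parameter with three values would triple the number of runs to 81, which is the exponential growth described above.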

Some learning algorithms make particular assumptions about the structure of the data or the desired results. If you can find one that fits your needs, it can give you more useful results, more accurate predictions, or faster training times.

| Algorithm | Accuracy | Training time | Linearity | Parameters | Notes |
|---|---|---|---|---|---|
| Two-class classification | | | | | |
| logistic regression | | ● | ● | 5 | |
| decision forest | ● | ○ | | 6 | |
| decision jungle | ● | ○ | | 6 | Low memory footprint |
| boosted decision tree | ● | ○ | | 6 | Large memory footprint |
| neural network | ● | | | 9 | Additional customization is possible |
| averaged perceptron | ○ | ○ | ● | 4 | |
| support vector machine | | ○ | ● | 5 | Good for large feature sets |
| locally deep support vector machine | ○ | | | 8 | Good for large feature sets |
| Bayes’ point machine | | ○ | ● | 3 | |
| Multi-class classification | | | | | |
| logistic regression | | ● | ● | 5 | |
| decision forest | ● | ○ | | 6 | |
| decision jungle | ● | ○ | | 6 | Low memory footprint |
| neural network | ● | | | 9 | Additional customization is possible |
| one-v-all | - | - | - | - | See properties of the two-class method selected |
| Regression | | | | | |
| linear | | ● | ● | 4 | |
| Bayesian linear | | ○ | ● | 2 | |
| decision forest | ● | ○ | | 6 | |
| boosted decision tree | ● | ○ | | 5 | Large memory footprint |
| fast forest quantile | ● | ○ | | 9 | Distributions rather than point predictions |
| neural network | ● | | | 9 | Additional customization is possible |
| Poisson | | | ● | 5 | Technically log-linear. For predicting counts |
| ordinal | | | | 0 | For predicting rank-ordering |
| Anomaly detection | | | | | |
| support vector machine | ○ | ○ | | 2 | Especially good for large feature sets |
| PCA-based anomaly detection | | ○ | ● | 3 | |
| K-means | | ○ | ● | 4 | A clustering algorithm |

Algorithm properties:

● - shows excellent accuracy, fast training times, and the use of linearity

○ - shows good accuracy and moderate training times

Additional Resources

Machine learning basics with algorithm examples https://azure.microsoft.com/en-us/documentation/articles/machine-learning-basics-infographic-with-algorithm-examples/

For a deeper discussion of the different types of machine learning algorithms, how they're used, and how to choose the right one for your solution, see How to choose algorithms for Microsoft Azure Machine Learning.

For a list by category of all the machine learning algorithms available in Machine Learning Studio, see Initialize Model in the Machine Learning Studio Algorithm and Module Help.

For a complete list of algorithms and modules in Machine Learning Studio, see A-Z list of Machine Learning Studio modules in Machine Learning Studio Algorithm and Module Help.