The Future of Data Science – TechNet Catch up with Quentin Clark

Surveillance systems, campaign trackers, sales tools, supply chain monitoring, manufacturing lines: it’s hard to think of an area of a modern business that isn’t churning out reams of data. This abundance of information presents companies with one of the biggest challenges, and opportunities, of the digital age to date: how on earth do we go about making sense of all this information?

In turn, this creates a plethora of new considerations for IT Departments, and particularly those individuals whose working lives revolve around data science. Where does the responsibility for analysing all this new information lie? How can IT provide the right services to facilitate the business? Who is responsible for developing an inquisitive data-driven culture?

These are among the questions we put to Quentin Clark, leader of the Data Platform group at Microsoft, when we caught up with him after his keynote at the ‘Accelerate Your Insights’ event in London earlier this year. With SQLBits having taken place in Telford last week, we thought there was no better opportunity to share Quentin’s thoughts on the future of data and further whet your appetite for things to come.

Hi Quentin. Could you briefly explain what your role is within Microsoft and your current focus?

I lead the product group for Data Platform at Microsoft. That’s everything from SQL Server to all of the BI within Excel, Office 365 and Azure HDInsight. So, everything end to end across the Data Platform.

What’s your favourite Microsoft product at the moment?

Outside my own product space, the one I’m getting the most use out of is actually Surface. I have a Surface Pro 2; I travel with it, I use it at home, and I have three of them in total. The mix of battery life and usability makes it a great product.

The ‘Data Culture’ philosophy that Satya (Nadella) has spoken about numerous times is a large part of Microsoft’s vision. In layman’s terms, what’s meant by that phrase ‘Data Culture’?

Data Culture is the notion that every aspect of a business, from culture to governance, is going to undergo a transformation as data changes our ability to build better products, engage better with customers and operate more efficiently. You only get there by everyone embracing a Data Culture. The transformation isn’t going to happen just by relying on the pre-existing IT function to do additional reporting, BI and so on. Really, everybody is going to have to think about and embrace data as part of their jobs.

What key challenges will the business users who don’t currently use data on a day-to-day basis face in changing their mind-sets?

We think about this a lot, as you can imagine.

One of the challenges is going to be what I refer to as accessibility, by which I mean how reachable the technology is. This underpins features like Q&A in Power BI, which changes what it takes to get value from BI visualisations of data: instead of requiring that you understand things like the database schema and how to lay out charts, you can simply ask a question. Q&A has changed the addressable market of BI in one fell swoop, because we provide a natural user interface that lets people explore structured data as simply as they can explore data from the web using search engines. It wasn’t until that kind of accessibility arrived in the consumer space with search engines that the internet really took off.

We think the same thing could be true with regards to the Data Culture. Where you provide the tools, people are inherently going to want to be better informed, it’s just human nature. So the challenge is on us as technologists to provide them with tools that can let them satisfy their curiosity, in a way that is easily attainable by everybody.

Do you think that making data more attainable for everyone is something that Data Scientists should be concerned about?

No, not at all.

A bit of a history lesson from a long time ago: when SQL Server 7.0 came out, which was very easy to use thanks to auto-tuning, did we put DBAs out of business forever? Hardly! In fact, their numbers grew tremendously, and I think we’ll find the same virtuous cycle exists here. The more people are able to reach for and get value from data, and the more that data becomes part of how a business operates, the more interest there’s going to be from the business in taking that to the next level.

The difference lies between what your ‘lay person’ could do and what data experts offer. Whilst the former can surface information with Q&A, find the data that’s of value and then operationalise that to help them on a day-to-day basis, deeper expertise can then support their model with more data, change it to be more accurate and drive better insights. That’s a virtuous cycle - the more data sets, views, models and analytics that become useful, the more people will reach out for data and the greater the demand for deeper sophistication, accuracy and completeness of the models there will be. The latter will be more of a data science activity, so I think the one will end up fuelling the other.

Where does the responsibility lie then for driving that data culture, is it something that the business has to pull through, or do IT and Data Scientists have to push it out to the business?

|

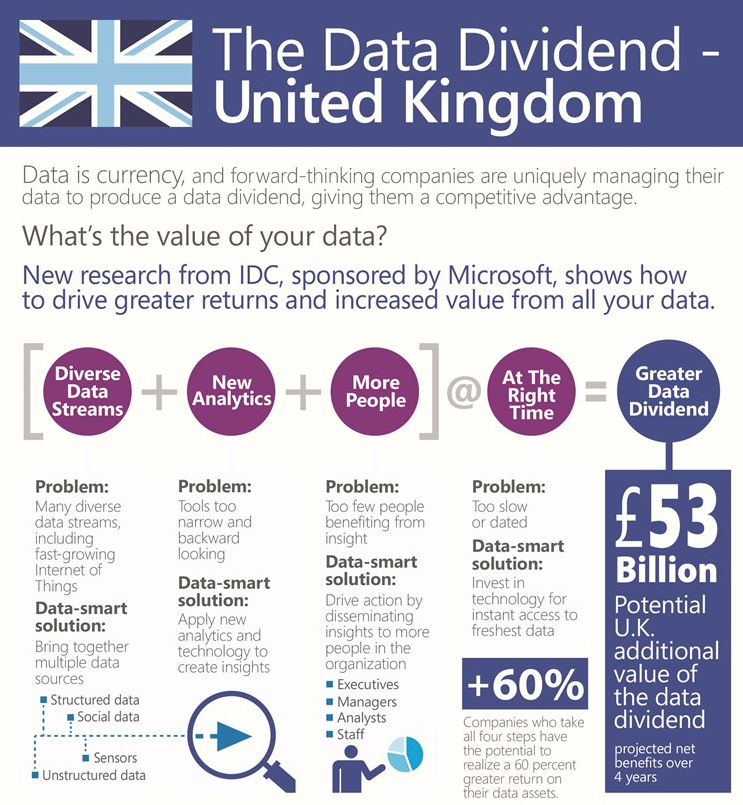

Are you fully utilising your Data? - Full Infographic |

What we’re seeing more and more is that it’s the business owners who are engaging us in dialogues to try and figure out how to gain better insights and drive that data culture. Whether it’s CMOs or the core functions that aren’t directly aligned with business activity like HR and Marketing Departments, we’re seeing all sorts of different functions outside of IT engaging in dialogues with us. They believe that their business has valuable data, and they’re looking for the tools to let them carry out analytics and present visualisations and insights to their peers within the business, with the ultimate goal of getting value out of that data and benefiting from the Data Dividend: the return on ambient data.

I have never spent more time in my career with non-IT folks than I have in the last year or so. I’ve been around and leading parts of the Data Platform business for the last seven years or so, and most of that time was spent with implementers, IT, DBAs, these kinds of folks. Over the last year the conversations I’m in have shifted tremendously, not because of anything we’ve done but because they’re coming to us. Data Culture is about everybody; people will find their way to new behaviours as they see opportunity and embrace challenges, more so than when they’re told to do something.

It’s really interesting to be part of the industry right now, where this understanding that there is a new value out there is taking hold and more and more people are becoming curious.

So, we have a demand in the business for data and insights. In the world of Hybrid Cloud environments, which we’re talking to a lot of IT Professionals and Enterprises about, what’s the ideal method of IT Departments enabling the business to carry out Big Data Analytics? Is it an on-premises solution? Is it in the Cloud? Is it a mixture of both?

From a Microsoft perspective, the main thing we have the opportunity to do is allow customers to see the cloud as just a part of their own platform.

Instead of us saying “it’s a cloud only world”, like some of our competitors, or “the real business stuff only happens on-premises”, like some of our traditional on-premises competitors, we approach our customers differently. We take the point of view that the world’s evolving, we really need and will benefit from a continuum of capability between what’s on-premises and what’s in the cloud. So there is no right way as such, the right way is to embrace all the data that can be valuable to you.

You can curate an on-premises data source using a product like the Analytics Platform System (APS), the evolution of Parallel Data Warehouse, where you can put both Hadoop and relational information in at scale and produce data that you’ll want to enrol with an analytics function. You can certainly use on-premises tools such as Excel and then enrol that data into solutions you’ve built as part of Office 365 to really broaden the reach of the data.

That’s an example of leveraging on-premises data, but scaling the exposure of it to more people by using what’s in Office 365. But the reverse is true as well, we have customers who are running large scale HDInsight clusters in Azure producing datasets that are reimported into on-premises systems that they’re using as part of their analysis. So we’re really letting customers embrace the data where it is.

One of the things we think about a lot is figuring out where all your data is, and whether you will embrace it for what it is. If it’s born in the cloud, let it be there. If it’s born on-premises, let it be there. Just integrate the solutions in a way where ultimately you’re looking at how widely you can expose the information to the people who would benefit from it. The reach of the solutions is key; it’s one of the reasons we’re making the bet on Office 365, because there’s no need to retrain to use it. Just sign in, provision a BI site, add people to it and upload solutions. Suddenly you’re able to build and run the solution on a Windows 8 tablet, view it from any HTML5 browser and use Q&A over it. It all just lights up without any IT deployment.

So we really encourage customers to think about where all the relevant data is and what it is. Whether it’s data they have or data from across the world, benefit from it and be successful.

That’s quite an agile scenario, isn’t it? Just click and the information’s there, through Office 365 for example. In many ways that reflects businesses becoming increasingly agile. How can IT also become more agile in enabling the wider business to be that reactive?

It’s interesting because we’re back to this cloud continuum point really, I think that customers who have embraced the cloud as an extension of their own platform are able to burst capability there. One of the scenarios that we support in APS is the ability to export partitions and data out of the appliance and move them into Azure. From there they can be processed with HDInsight, and so they get this great agility where they’re able to expand the overall dataset under management without having to expand their on-premises footprint cost, just by using the cloud as part of their platform.

We have other customers who burst compute capacity for applications or solutions when they just need more scale on the application tier. They leverage what we’re able to give them in Azure as a turnkey way to get there. The role cloud computing’s going to take in shaping the IT agenda going forward is in fact not just about cost, it’s not even just about new capability. One of the big promises of cloud computing really is this notion of agility.

You’re suddenly able to respond to opportunities in a way that’s much more dynamic because you’re taking advantage of a large cloud provider. We can provide huge scale to you at a moment’s notice, whereas a project to deploy a thousand servers is, for your typical business, well outside the range of possibility! Even for the Fortune 50, that’s a big project. Considerations like: Do we have the datacentre space? Does the datacentre have enough cooling and power to support a thousand servers? Where do they come from? What’s the supply chain for them? How soon can they get here? These all become a thing of the past.

Something that you mentioned which I think is a really interesting case of how Microsoft as a company is changing is Hadoop, Microsoft’s support of Hadoop as an open source project and how we’re becoming more and more open as a company. What’s the thinking behind the decision to support open source projects like that?

I think Hadoop is a really good example, because the decision for us to support it was relatively straightforward. The fact that it’s open source is interesting but not the heart of the story. The heart of the story really is that a standard was emerging, as strong a standard as the one that emerged around relational data 25-30 years ago, called SQL. Whether it’s us or our competitors building the relational database, we all support some variant of a spec called SQL-92, and so that lingua franca for talking to a relational system was set. Just as it would have been strange in the early ’90s to abandon this and create a relational database that knew nothing about SQL, it would be strange today to build a solution for processing and querying large sets of semi-structured data that wasn’t Hadoop. A standard had been set, and we have to enable our customers to get the best out of that standard. The decision to build HDInsight, the Hadoop service in Azure, was simply a matter of supporting a standard that we knew our customers would need.

What we try to do with that service of course is ensure that it meets the expectations that our customers have around what an Enterprise solution should be. Whether it’s the security integration, the availability of the service, or other elements, we want to make sure our customers have the right experience.

In the vein of interoperability and sharing, data sharing was a topic that came up quite frequently during your talk in London. How can we as the wider IT industry really encourage the sharing of data between businesses to enable the mutual gains?

That’s a great question. I think we’re at the very edge of that continent, so to speak, very much in uncharted territory. There are companies whose entire business is about data; they curate and sell that data, and we as an industry need to find a way to scale the value of those data sets. But when you look at companies that aren’t natural data providers, yet whose business produces a data set that is of value outside that business, what exists today is mainly just a collection of bespoke relationships.

There’s a bank I know of that has a relationship with a shipping company, because shipping telemetry is an interesting signal of loan veracity. For smaller business retail loans, it just turns out that how many packages are coming and going is an interesting signal as to whether or not the loan’s going to be any good in the future. That’s a very bespoke relationship.

I’ve talked with manufacturing companies who’ve said things like “we don’t do a good enough job with the telemetry that comes off of our manufacturing systems to hold the equipment makers of those systems accountable for the reliability and maintenance schedules. If we collaborated with our competitors, who are also all using the same equipment classes, and pooled that data, we’d have a lot of insight and data to help us in the discussions with these manufacturing machine makers”.

There are all these pockets where there’s desire but there’s not yet this forum. We are working on a programme to make Azure that forum, we want to help the world embrace the notion that there’s other data available that would be valuable to help them understand their business better, drive efficiency, improve customer experience and create new business using the diversity of data that’s key to the Data Culture. More than that, we also want to help businesses consider what data they have that they’re not necessarily utilising today, but might be of incredible value outside their core operation. I think that as an industry, we’re barely at the very beginning of that conversation.

Might this become a bit of a virtuous circle for Microsoft, both providing the platform and getting people sharing data?

Yeah, getting people talking and providing what people sometimes refer to as the ‘data bazaar’, a place where people can explore and find each other’s goods, so to speak. I think there will be tremendous value in this over time, and it’s going to become more and more important in conversations around this whole Big Data meme over the next several years.

Building a Data Culture within your organisation encapsulates a plethora of elements - Full Infographic

The flip side of all that sharing and openness is governance and control, which might be something that’s lingering at the back of the IT Department’s mind. If we have all these various parts of the business going off using these BI solutions and building whatever they want with them, how do we rein that in, protect our business’s intellectual property and manage the proliferation of data around the business? How can IT approach that potential concern?

It’s interesting, I think we already have one proof of success on this, which is the managed self-service BI meme.

A few releases back in the Office/SharePoint/SQL Server/BI/Excel circle of work, we introduced a concept called managed self-service, where the goal was to empower people to go and build solutions on their own - use Power Pivot, build a model, build your own visualisations and all of that kind of good stuff - and then use SharePoint as the mechanism where all these things are stored, carried and managed. In doing so, IT administrators are able to use the SharePoint facilities to see which data models are in use and what data sources they are talking to, sometimes referred to as BI on BI. This gives us some sense of what’s going on out there and lets us take control of the things we need to take control of.

Important to that strategy was compatibility of the underlying model, so that as users created things with Excel, the professional analytics developer using the SQL Server BI tooling could import that model directly from Excel. They could then modify it and start to operationalise it, whether for scale, for security or to enrich it: make it deeper, more accurate and more comprehensive.

We’re investing in the same sort of dynamics today as we look at Office 365 and Azure. One of the features in Office 365 that’s not all that well understood or known right now is this thing called the catalogue. Using tools like PowerQuery in Excel, you can find data that’s being curated from the public world, like government data sets and data coming out of commercial systems. We have a handful of partnerships with commercial data providers whose data we offer through the Azure Marketplace, accessible through Office 365 and discoverable through the likes of PowerQuery, but perhaps more important is the Enterprise catalogue.

Every Office 365 tenant ends up getting this catalogue and one person can create a model and publish it, which means it’s now discoverable by everybody else. So we’re trying to encourage not just that self-service for an individual, but find ways for people to build on each other’s work. By building all this tooling into Office 365, we can also offer IT administrators the ability to see everything, see what’s being searched for. When you look at those tools and you look at what’s being searched for in PowerQuery, you can just see right there what people are interested in. From there you can establish whether you do or don’t have models that are sanctioned, driven and governed by IT. If you don’t, then one should probably be created seeing as this is what people are looking for. This can then be the top result when people search for it, and the model that people base additional derivative work on. In this way we’re giving IT an opportunity to get ahead of where users are, along with the controls and insight over how it’s being used through Office 365.

Finally, in one word what is it that really sets Microsoft’s whole data platform apart from the crowd?

I guess if I had to pick one thing, it would be ‘complete’. That would be the word I would use. We don’t just have In-Memory for analytics, we have In-Memory for transaction processing, data warehousing, analytics and personal BI. We don’t just have an offering in the cloud, we have offerings that are on-prem and then the cloud can provide a continuum across them. We don’t just have a BI visualisation tool or a Hadoop distribution, we in fact have the relational side, the non-relational side, the data processing, analytics and scale in the cloud and the BI tooling.

The reality is that getting that Data Culture really takes a complete platform. You have to have data, and diverse sets of data, with a lot of heterogeneity, scale and the ability to process and query that data. You need the analytics side to shape, refine and select, and to interoperate with traditional analytics and machine learning analysis. Plus you need the ability for humans to absorb this, visualise it and extract the insights, extract the value. So you really need that whole loop, which doesn’t come from any one of those parts in isolation. I’d say the thing that’s differentiating us right now is that we are the first to really set out to build that truly complete package, and at this point we have the pieces put together.

How are you engendering a Data Culture within your organisation? Are you seeing increasing demand for deeper analytics and insight? Let us know in the comments, or join the conversation on Twitter @TechNetUK.