Understanding High-End Video Performance Issues with Hyper-V

A while ago I wrote a relatively short blog post highlighting the fact that there are performance issues with Hyper-V when used with a high-end graphics adapter. Since then I have been inundated with people asking questions and trying to get their heads around this issue. Today I would like to take the chance to drill in on this:

What is the cause of the problem?

Okay – let’s grab the pertinent text from the original KB article:

This issue occurs when a device driver or other kernel mode component makes frequent memory allocations by using the PAGE_WRITECOMBINE protection flag set while the hypervisor is running. When the kernel memory manager allocates memory by using the WRITECOMBINE attribute, the kernel memory manager must flush the Translation Lookaside Buffer (TLB) and the cache for the specific page. However, when the Hyper-V role is enabled, the TLB is virtualized by the hypervisor. Therefore, every TLB flush sends an intercept into the hypervisor. This intercept instructs the hypervisor to flush the virtual TLB. This is an expensive operation that introduces a fixed overhead cost to virtualization. Usually, this is an infrequent event in supported virtualization scenarios. However, some video graphics drivers may cause this operation to occur very frequently during certain operations. This significantly magnifies the overhead in the hypervisor.

Usually when I talk to people about this – their eyes start to glaze over – so let’s dig in a little. With the help of Wikipedia we can get some definitions:

Write-combining (https://en.wikipedia.org/wiki/Write-combining):

Write combining (WC) is a computer bus technique for allowing data to be combined and temporarily stored in a buffer -- the write combine buffer (WCB) -- to be released together later in burst mode instead of writing (immediately) as single bits or small chunks.

Write combining cannot be used for general memory access (data or code regions) due to the 'weak ordering'. Write-combining does not guarantee that the combination of writes and reads is done in the correct order. For example, a Write/Read/Write combination to a specific address would lead to the write combining order of Read/Write/Write which can lead to obtaining wrong values with the first read (which potentially relies on the write before).

In order to avoid the problem of read/write order described above, the write buffer can be treated as a fully-associative cache and added into the memory hierarchy of the device in which it is implemented. Adding complexity slows down the memory hierarchy so this technique is often only used for memory which does not need 'strong ordering' (always correct) like the frame buffers of video cards.

In summary, write-combining is a method of accessing memory that is typically only used by video cards.
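To make the "combine and release in a burst" idea a little more concrete, here is a toy model in Python. This is only a sketch to illustrate the concept: the class name, the capacity of four writes, and the addresses are all made up for the example, and real write-combine buffers live in the CPU and work on cache-line-sized chunks rather than Python dictionaries.

```python
class WriteCombineBuffer:
    """Toy model: collects small writes and releases them together as one burst."""

    def __init__(self, capacity=4):
        self.capacity = capacity   # hypothetical buffer size, counted in pending writes
        self.pending = {}          # address -> latest value that has not been released yet
        self.bursts = []           # each burst is a list of (address, value) pairs

    def write(self, address, value):
        # A later write to the same address simply replaces the pending one.
        self.pending[address] = value
        if len(self.pending) >= self.capacity:
            self.flush()

    def flush(self):
        # Release everything gathered so far in a single burst.
        if self.pending:
            self.bursts.append(sorted(self.pending.items()))
            self.pending = {}


wcb = WriteCombineBuffer(capacity=4)
for offset in range(8):
    wcb.write(0x1000 + offset, offset)

print(len(wcb.bursts))  # the 8 individual writes were released as 2 bursts
```

Note that nothing in this model promises that a read issued between two writes will see the first write, which is the "weak ordering" caveat from the definition above.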

Translation Lookaside Buffer (TLB) (https://en.wikipedia.org/wiki/Translation_Lookaside_Buffer):

A Translation lookaside buffer (TLB) is a CPU cache that memory management hardware uses to improve virtual address translation speed. It was the first cache introduced in processors. All current desktop and server processors (such as x86) use a TLB. A TLB has a fixed number of slots that contain page table entries, which map virtual addresses to physical addresses. It is typically a content-addressable memory (CAM), in which the search key is the virtual address and the search result is a physical address. If the requested address is present in the TLB, the CAM search yields a match quickly, after which the physical address can be used to access memory. This is called a TLB hit. If the requested address is not in the TLB, the translation proceeds by looking up the page table in a process called a page walk. The page walk is a high latency process, as it involves reading the contents of multiple memory locations and using them to compute the physical address. Furthermore, the page walk takes significantly longer if the translation tables are swapped out into secondary storage, which a few systems allow. After the physical address is determined, the virtual address to physical address mapping and the protection bits are entered in the TLB.

So the TLB is a CPU cache that helps with translation between virtual addresses and physical addresses. Note that these virtual address spaces have nothing to do with virtual machines – but are used to allow multiple applications on an operating system to be isolated from each other.
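If it helps, here is a minimal Python sketch of the hit / miss / flush behavior described above. Everything in it is an assumption for illustration: the two-slot size, the least-recently-used replacement, and the single dictionary standing in for the page table (a real page walk reads several levels of tables in memory, which is why a miss is so much slower than a hit).

```python
from collections import OrderedDict

PAGE_SIZE = 4096  # bytes per page, assumed for this example


class TinyTLB:
    """Toy TLB: a small cache of virtual page -> physical frame mappings."""

    def __init__(self, page_table, slots=2):
        self.page_table = page_table   # virtual page number -> physical frame number
        self.slots = slots             # fixed number of TLB entries
        self.entries = OrderedDict()   # cached translations, oldest first
        self.hits = 0
        self.misses = 0

    def translate(self, virtual_address):
        page, offset = divmod(virtual_address, PAGE_SIZE)
        if page in self.entries:
            self.hits += 1
            self.entries.move_to_end(page)        # mark as most recently used
        else:
            self.misses += 1
            frame = self.page_table[page]         # stands in for the slow page walk
            if len(self.entries) >= self.slots:
                self.entries.popitem(last=False)  # evict the least recently used entry
            self.entries[page] = frame
        return self.entries[page] * PAGE_SIZE + offset

    def flush(self):
        # After a flush every translation has to be looked up again.
        self.entries.clear()


tlb = TinyTLB({0: 7, 1: 3})
tlb.translate(0x0010)   # miss: first touch of page 0
tlb.translate(0x0020)   # hit: page 0 is now cached
tlb.flush()
tlb.translate(0x0030)   # miss again, because the flush emptied the cache
print(tlb.hits, tlb.misses)  # 1 2
```

The thing to notice is the last lookup: a flush throws away work that then has to be redone, so doing it very often gets expensive.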

Summarizing all of this – video card drivers tend to use memory access methods that force Hyper-V to flush the TLB (the CPU cache for page table mappings) very frequently. This is an expensive thing to do in Hyper-V at the best of times. In fact – the above TLB article on Wikipedia even has a section on the problems of virtualization and the TLB.

Now that we have the ground rules in place – let’s head on to some of the other questions.

How could you possibly ship Hyper-V with this issue? Did you not test this product?

To answer the second question first – I actually was the first person (in the world) to hit this issue. Early on in development I tried to use Hyper-V as my desktop OS on my home system with a GeForce 8800 video card. Everything seemed to work okay (though some things were oddly sluggish) until I tried to play Age of Empires III. I had never played this game before, and the first time I tried to play it was on top of Hyper-V. In short, it sucked. Unfortunately I spent most of the weekend trying to tweak my rig and looking for patches to Age of Empires III before I thought to try disabling Hyper-V.

As soon as I realized what was happening I filed a bug and the issue was investigated.

When the issue was determined to be a specific result of the combination of the Hyper-V hypervisor and the Nvidia driver – we decided to leave things as they were for a couple of reasons:

- Windows Server does not include any video drivers other than the SVGA driver by default

- Windows Server will not install a high-end video driver automatically at any stage – you need to manually install the Windows 7 drivers

Also, Hyper-V was being developed solely for server virtualization and:

- We have always recommended that nothing be run in the management operating system, other than basic management tools

- No server workload that we tested generated anywhere near the rate of TLB flushing that these video drivers cause

Finally, this is a really hard issue to address. In fact, there are no hypervisor-based virtualization platforms that address this issue today – and while there are several under development I suspect that they will either have specific hardware requirements (I will get to this later) or will have simplifications / limitations to help them mitigate this issue (like only having one virtual machine).

Why does this affect Hyper-V and not Virtual PC?

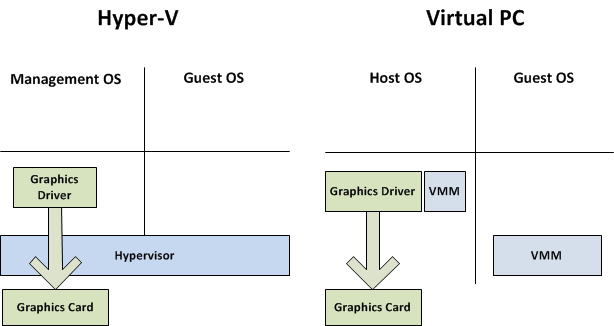

Here we are seeing the difference between a hypervisor and a host VMM type solution. With a hypervisor base platform (like Hyper-V) everything runs on top of the hypervisor – even the management operating system. Where as with a hosted VMM platform (like Virtual PC) the host operating system still has direct access to the hardware. To explain this better – here is a diagram:

Hopefully you can see the difference here. It should also be noted that all desktop virtualization products available today use an architecture similar to that of Virtual PC.

How do I know if this is affecting my computer?

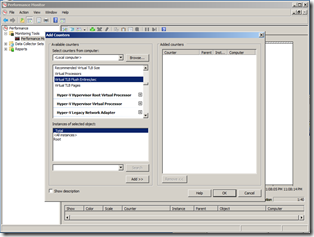

To check if this is affecting your system, open Performance Monitor (you can do this by running “perfmon” from the Start menu). Select the Performance Monitor node and click on the plus symbol to add a new counter. Then find the Hyper-V Hypervisor Root Partition entry, expand it, select Virtual TLB Flush Entries/sec and add the Root counter. This will allow you to keep an eye on the rate of TLB flushing in the management operating system:

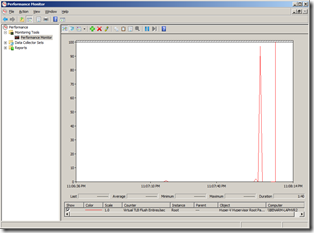

So what do you look for now? On my system – the only time I see a significant rate of TLB flushing (>10) is when I start a virtual machine. A system that has this problem will either generate a continuous rate of TLB flushing above 100 or will generate spikes in the thousands.
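If you prefer to jot down a handful of readings from the counter and check them against these rules of thumb, something like the following Python sketch would do it. The function name and the labels are made up for this example, and I am reading "spikes in the thousands" as any sample of 1000 or more. The readings are typed in by hand; the script does not talk to Performance Monitor.

```python
def classify_flush_rate(samples, sustained=100, spike=1000):
    """Apply the rules of thumb above to a list of flushes/sec readings."""
    if not samples:
        raise ValueError("need at least one sample")
    if all(rate > sustained for rate in samples):
        return "affected: continuous flushing"
    if any(rate >= spike for rate in samples):
        return "affected: spikes"
    return "probably fine"


# A brief bump above 10 (for example while starting a virtual machine) is expected.
print(classify_flush_rate([2, 0, 5, 30, 3]))          # probably fine
print(classify_flush_rate([150, 220, 180, 140]))      # affected: continuous flushing
print(classify_flush_rate([4, 2500, 3, 1, 4100, 2]))  # affected: spikes
```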

What can I do to stop this / work around it?

There are a couple of options here:

Use the default video driver (SVGA).

Yes, I know it is not sexy or fun – but if you are planning to just use Hyper-V as a server virtualization platform this is your easiest and simplest option. It is the way we intended Hyper-V to be used, and it will always give the best performance.

Tone down the use of 3D graphics.

Some video cards (like the Nvidia Quadro FX 1700M) seem to work fine as long as Aero is not enabled and no 3D applications are running. If I enable Aero I start to see a fairly frequent rate of spikes in my TLB flush count (which causes annoying lurches in the window animation). Running a 3D game (like Halo 2) is just terrible.

This means that for those of you who do not want the high-end driver for 3D graphics, but instead need it for multi-monitor support or for the ability to connect a projector to your laptop (like me) this may work.

Choose your video card carefully.

As a general rule of thumb – the less capable the video card, the less likely this is to be an issue. My previous laptop had an integrated graphics controller – which was terrible for gaming – but worked great for Hyper-V. When I wanted to get my new laptop and found that there was no Intel option – I tracked down a coworker with a similar graphics card in their laptop and tried out Hyper-V on it before going ahead and buying it.

Get a system with Second Level Address Translation (SLAT).

SLAT is a technology that goes by different names depending on whether you get Intel (where it is called “Extended Page Tables” (EPT)) or AMD (where it is called “Nested Page Tables” (NPT) or “Rapid Virtualization Indexing” (RVI)). These technologies are an extension to the traditional TLB that allow us to use the hardware to handle multiple TLBs – one for each virtual machine. We added support for this hardware in Windows Server 2008 R2. If you run Windows Server 2008 R2 on a system with SLAT capabilities – you will not have any problems running 3D graphics at all.

Intel started shipping this technology in the Nehalem (or Core i7) processor line. AMD has been shipping this for a while now – ever since generation 3 of the AMD Quad-core family. Unfortunately neither has shipped this technology in their laptop processors yet – though Intel has indicated that they are planning to soon.
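For those who want a picture of what "second level" means: with SLAT the hardware performs two translations, first from a guest virtual address to a guest physical address (using the guest's own page tables), then from that guest physical address to a real physical address (using a second set of tables managed by the hypervisor). Here is a very simplified Python sketch of that idea. The function names and page numbers are invented for the example, and real page tables are multi-level structures walked by the CPU, not dictionaries.

```python
PAGE_SIZE = 4096  # bytes per page, assumed for this example


def lookup(table, address):
    """Translate one address through a single (flattened) page table."""
    page, offset = divmod(address, PAGE_SIZE)
    return table[page] * PAGE_SIZE + offset


def translate_with_slat(guest_table, second_level_table, guest_virtual):
    # Stage 1: the guest's own page table, guest virtual -> guest physical.
    guest_physical = lookup(guest_table, guest_virtual)
    # Stage 2: the hypervisor's table, guest physical -> host physical.
    return lookup(second_level_table, guest_physical)


guest_table = {0: 2}          # guest virtual page 0 -> guest physical page 2
second_level_table = {2: 9}   # guest physical page 2 -> host physical page 9
print(hex(translate_with_slat(guest_table, second_level_table, 0x0123)))  # 0x9123
```

Because both stages are handled by the hardware, the hypervisor no longer has to maintain a virtual TLB in software and intercept every flush.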

Hopefully this has answered all of your questions satisfactorily. If you have any further questions – please feel free to ask away. I would also encourage you, if you have a video card that appears to work well with Hyper-V and 3D graphics, to post the details in the comments so that others can benefit from your good fortune!

Cheers,

Ben