The only thing constant is change – Part 1

Hello, it’s Kevin Hill here. I want to provide some insight into where we are heading with the WER datasets for partners.

We surveyed users of WER data on https://winqual.microsoft.com to get feedback on the direction of features. Two themes surfaced quickly:

- “Reduce noise” – we received a lot of feedback about filtering out low-priority “events”, as these end up being time-wasters.

- “More automation & notifications” – don’t make me always come to the website to get information; push it to me.

We have made feature investments to help make progress in these areas:

“Reduce noise”

In the past, the user mode datasets showed “event” (a.k.a. bucket) based information. We are moving this dataset to be based on failures, which are the result of the !Analyze debugging extension. Below I scratch the surface of the difference between these two datasets, but watching the Channel 9 video really helps to understand the logic that goes into analyzing failures.

Buckets classify issues by: Application Name, Application Version, Application Build Date, Module Name, Module Version, Module Build Date, OS Exception Code, and Module Code Offset. On the surface this looks like a very precise way to classify issues. However, it can be misleading at times, since we generate the bucket on the Windows OS client without performing any symbol analysis on the memory dump. The module picked by the Windows Error Reporting client is simply the module at the top of the stack. We have found that when issues are investigated as raw buckets, many investigations end at a faulting module that is different from the one in the original bucket determination. Ryan Kivett (a developer lead from the Windows Reliability team) has a great blog post titled “WER History 101”.

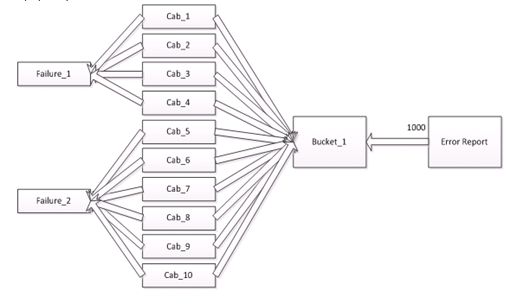
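To make the bucket idea concrete, here is a minimal Python sketch of a bucket key built from the eight fields listed above. The class name, field names, and sample values are all illustrative assumptions; this is not the actual format used by the WER client.

```python
from collections import Counter
from typing import NamedTuple


class BucketKey(NamedTuple):
    # Hypothetical field names mirroring the eight bucket parameters.
    app_name: str
    app_version: str
    app_build_date: str
    module_name: str
    module_version: str
    module_build_date: str
    exception_code: str
    module_offset: str


def count_by_bucket(reports):
    """Count crash reports per bucket; identical keys land in the same bucket."""
    return Counter(BucketKey(**report) for report in reports)


# Made-up sample report; no symbol analysis is involved at this stage.
sample = dict(
    app_name="contoso.exe", app_version="1.0.0.0", app_build_date="2010-01-01",
    module_name="contoso.dll", module_version="1.0.0.0", module_build_date="2010-01-01",
    exception_code="c0000005", module_offset="0x1a2b",
)
reports = [sample, dict(sample), dict(sample, module_offset="0x9999")]
counts = count_by_bucket(reports)
```

Two of the three sample reports share every field and fall into one bucket, while the third differs only in its code offset and gets a bucket of its own. Nothing in the key says which module is really at fault.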

Failures, however, are the result of processing memory dumps collected from buckets in order to assign blame more precisely to the appropriate module. I have included the graphic below to show how a bucket can result in multiple different failures. While this is a typical scenario once private symbol analysis has been applied, there can also be cases where multiple buckets get aggregated into a single !Unknown symbol. If you download cabs and run !Analyze with your own private symbol paths, you will break down the !Unknown failures into a very precise set of issues.

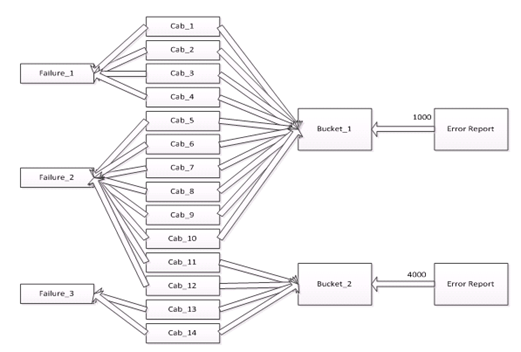
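The relationship between buckets and failures can be sketched as a many-to-many mapping. The Python below is only an illustration: the bucket ids and failure symbols are invented, and the list of pairs stands in for per-dump analysis results rather than calling !Analyze itself.

```python
from collections import defaultdict

# Hypothetical (bucket_id, failure) pairs, one per analyzed memory dump.
analyzed_dumps = [
    ("bucket_1", "contoso.dll!ParseHeader"),
    ("bucket_1", "fabrikam.dll!OnPaint"),
    ("bucket_1", "contoso.dll!ParseHeader"),
    ("bucket_2", "!Unknown"),
    ("bucket_3", "!Unknown"),
]


def failures_per_bucket(pairs):
    """Map each bucket to the set of distinct failures found in its dumps."""
    grouped = defaultdict(set)
    for bucket, failure in pairs:
        grouped[bucket].add(failure)
    return dict(grouped)


def buckets_per_failure(pairs):
    """Map each failure to the set of buckets that contributed dumps to it."""
    grouped = defaultdict(set)
    for bucket, failure in pairs:
        grouped[failure].add(bucket)
    return dict(grouped)


by_bucket = failures_per_bucket(analyzed_dumps)
by_failure = buckets_per_failure(analyzed_dumps)
```

In this made-up data, `bucket_1` splits into two failures, while `bucket_2` and `bucket_3` both collapse into the same `!Unknown` entry until better symbols are available.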

Here is another example considering multiple buckets:

Since we perform automated analysis of issues using private symbols, we are able to filter out issues for which we know we will likely be able to provide a fix. That way you receive fewer issues whose investigation ends up pointing to modules that don’t belong to you. This is one of the ways we are reducing the noise in the dataset. This doesn’t mean that there will be fewer issues, but the issues we share are more likely to be actionable for your software.
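The actual filtering rules are not spelled out here, so the following Python is just one possible reading of the idea: keep only the failures whose blamed module is on a list of modules you own. The function name, the `module!symbol` string format, and the sample data are assumptions made for illustration.

```python
def actionable_failures(failures, owned_modules):
    """Keep failures whose blamed module (text before '!') is one you own."""
    owned = {module.lower() for module in owned_modules}
    return [
        failure for failure in failures
        if failure.split("!", 1)[0].lower() in owned
    ]


# Made-up failure symbols; only contoso.dll is assumed to belong to the partner.
all_failures = [
    "contoso.dll!ParseHeader",
    "fabrikam.dll!OnPaint",
    "Contoso.dll!Save",
    "!Unknown",
]
kept = actionable_failures(all_failures, ["contoso.dll"])
```

The comparison is case-insensitive because module names on Windows are not case-sensitive. The number of failures in the full list is unchanged; the filter only narrows what is surfaced to the module owner.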

The video below contains a detailed explanation of the logic behind !Analyze and what goes into the failure dataset.

“More automation & notifications”

We are providing email updates for all the new reports that will be published from the new user mode and kernel mode failure-based datasets.

Stay tuned for more updates on planned changes. Part 2 will provide more information…