Upgrading Operations Manager 2012 to SP1: The CDM Monitoring and Management Experience

Introduction

Hi, I’m Dave Hatton, and welcome to my first System Center - Operations Manager (OM) technical blog. I’m a Senior Systems Engineer on the Cloud and Datacenter Management (CDM) Monitoring and Management (M&M) team, and my primary focus has been supporting our OM infrastructure.

The CDM M&M team runs what some have referred to as an “Extremely Large” OM environment, with 6,200+ agents reporting to a single management group. Our partners include some of Microsoft’s largest externally facing sites and services, including Microsoft.com, Windows Update, and MSDN/TechNet, to name a few.

Today’s main goal is to share some best practices, gotchas, and the overall upgrade approach we took with our production environment.

Why SP1?

In addition to the usual fixes, we were especially interested in lighting up Global Service Monitoring (GSM) and Application Performance Monitoring (APM) for our partners. For more information on those SP1 features, see my teammate Charlie’s blog here.

CDM M&M Production Environment Details

· Single Management Group

· 6,200+ agents

· 3 - Resource Pool Management servers

· 14 - 1st tier gateways

· 6 - 2nd tier gateways

· 4 - 3rd tier gateways

· 2 - Standalone Management servers with ACS

· 2 - Standalone Management servers with OM Web Console

· 1 - SQL Server housing the OperationsManager database (1 failover)

· 1 - SQL Server housing the OperationsManagerDW, SSRS, OM Reporting (1 failover)

Recommended Prerequisite Tasks

Verify that the System Center folders on the core infrastructure systems (Management Servers, Gateways) aren’t open by users/external processes (Health Service State folder, etc…)

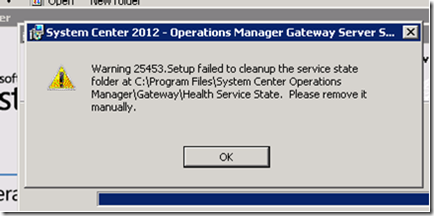

· If so, you will experience the following 25453 warning during the upgrade:

· If you encounter this warning, you will need to:

o Click ok to complete the upgrade

o Stop the Health Service (System Center Management)

o Rename the Health Service State folder

o Start the Health Service

IMPORTANT!!!

During the 1st management server upgrade, the OperationsManager and OperationsManagerDW databases are updated as well.

· DO NOT proceed with additional management server upgrades until the initial upgrade has completed.

o If additional management server upgrades are started prematurely, the SQL changes may not be recognized and will be applied again.

· DO manually execute ETL cleanup. An ETL grooming script runs at the beginning of the 1st upgrade, and it can take an extended period of time, especially in large OM environments.

o In our pre-production environment with 5,000 agents, the upgrade timed out and rolled back after approx. 30 minutes. The error found in the setup log was “System.Data.SqlClient.SqlException, Exception Error Code: 0x80131904, Exception.Message: Timeout expired.”

o Manual execution of the grooming script took 50 min. and we went from 76 million to 300k rows in the EntityTransactionLog table.
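To put those numbers in perspective, here is a quick back-of-the-envelope calculation. It is only a sketch: the row counts are the rounded figures quoted above, and the 100,000-row batch size is taken from the grooming script shown later in this post, so treat the results as approximate.

```python
# Rounded figures quoted in the post; real counts will differ slightly.
rows_before = 76_000_000     # EntityTransactionLog rows before grooming
rows_after = 300_000         # rows left after grooming
batch_size = 100_000         # rows deleted per loop iteration in the script
elapsed_minutes = 50         # manual grooming run time

rows_groomed = rows_before - rows_after
batches = -(-rows_groomed // batch_size)           # ceiling division
rows_per_minute = rows_groomed / elapsed_minutes

print(f"rows groomed:    {rows_groomed:,}")
print(f"delete batches:  {batches}")
print(f"rows per minute: {rows_per_minute:,.0f}")
```

That is several hundred delete batches, which helps explain why the cleanup did not fit inside the setup timeout we hit in pre-production.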

To avoid excessive upgrade times and/or an undesired rollback caused by the cleanup process timing out, follow the steps below.

1. Back up the OperationsManager and OperationsManagerDW databases. (Hey, you were going to do it anyway, right? ☺)

2. Execute the following code in the OperationsManager database and allow it to finish before you begin your first upgrade.

    -- (c) Copyright 2004-2006 Microsoft Corporation, All Rights Reserved --
    -- Proprietary and confidential to Microsoft Corporation --
    -- File: CatchupETLGrooming.sql --
    -- Contents: A bug in the ETL grooming code could have left the customer --
    -- database with a large amount of ETL rows to groom. This script will groom --
    -- the ETL entries in a loop 100K rows at a time to avoid filling up the --
    -- transaction log --
    ---------------------------------------------------------------------------------

    DECLARE @RowCount int = 1;
    DECLARE @BatchSize int = 100000;
    DECLARE @SubscriptionWatermark bigint = 0;
    DECLARE @LastErr int;

    -- Delete rows from the EntityTransactionLog. We delete the rows with TransactionLogId that aren't being
    -- used anymore by the EntityChangeLog table and by the RelatedEntityChangeLog table.
    SELECT @SubscriptionWatermark = dbo.fn_GetEntityChangeLogGroomingWatermark();

    WHILE(@RowCount > 0)
    BEGIN
        DELETE TOP(@BatchSize) ETL
        FROM EntityTransactionLog ETL
        WHERE NOT EXISTS (SELECT 1 FROM EntityChangeLog ECL WHERE ECL.EntityTransactionLogId = ETL.EntityTransactionLogId)
            AND NOT EXISTS (SELECT 1 FROM RelatedEntityChangeLog RECL WHERE RECL.EntityTransactionLogId = ETL.EntityTransactionLogId)
            AND ETL.EntityTransactionLogId < @SubscriptionWatermark;

        SELECT @LastErr = @@ERROR, @RowCount = @@ROWCOUNT;
    END

3. The time this takes to complete is the time you saved on your SP1 update!
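If you want a feel for how the batched-delete loop in step 2 behaves before running it against production, the same pattern can be tried on a throwaway in-memory SQLite database. This is only an illustrative sketch: the table names (transaction_log, change_log) and the groom_in_batches helper are made up for the demo and are not the OM schema, and SQLite stands in for SQL Server.

```python
import sqlite3


def groom_in_batches(conn, watermark, batch_size):
    """Delete unreferenced rows below the watermark, batch_size rows at a time.

    Returns the number of batches that deleted at least one row.
    """
    batches = 0
    while True:
        cur = conn.execute(
            """
            DELETE FROM transaction_log
            WHERE id IN (
                SELECT t.id
                FROM transaction_log AS t
                WHERE t.id < ?
                  AND NOT EXISTS (
                      SELECT 1 FROM change_log AS c
                      WHERE c.transaction_log_id = t.id
                  )
                LIMIT ?
            )
            """,
            (watermark, batch_size),
        )
        conn.commit()  # commit per batch so each transaction stays small
        if cur.rowcount == 0:
            break      # nothing left to groom
        batches += 1
    return batches


# Hypothetical demo data: 1,000 log rows, the first 10 still referenced.
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE transaction_log (id INTEGER PRIMARY KEY)")
conn.execute("CREATE TABLE change_log (transaction_log_id INTEGER)")
conn.executemany("INSERT INTO transaction_log VALUES (?)",
                 [(i,) for i in range(1, 1001)])
conn.executemany("INSERT INTO change_log VALUES (?)",
                 [(i,) for i in range(1, 11)])
conn.commit()

batches = groom_in_batches(conn, watermark=901, batch_size=100)
remaining = conn.execute("SELECT COUNT(*) FROM transaction_log").fetchone()[0]
still_referenced = conn.execute(
    "SELECT COUNT(*) FROM transaction_log WHERE id <= 10").fetchone()[0]
print(batches, remaining, still_referenced)
```

The point of the design is the same as in the script above: each iteration removes a bounded number of rows and commits, so no single transaction grows large, and the loop ends when a pass deletes nothing.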

UPGRADE!!!

Well, a couple of notes worth mentioning first…

· To play it safe, we upgraded our additional management servers one at a time. This cut down on database churn and ensured proper load balancing and failover for our gateways.

· We upgraded our Gateways in order of tier level and Primary/Secondary functionality (see overview below).

· Check your web console after the upgrade! If you encounter a “Server Error in ‘/OperationsManager’ Application.” web.config error, you will need to re-register ASP.NET 4.0.

1. Re-register ASP.NET 4.0 with Internet Information Services (IIS) by running the following command:

%WINDIR%\Microsoft.NET\Framework64\v4.0.30319\aspnet_regiis.exe -r

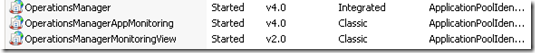

2. Change the IIS application pool “OperationsManagerMonitoringView” back from ASP.NET 4.0 to ASP.NET 2.0.

a. Re-registering switches this pool to ASP.NET 4.0, so you need to change it back. We encountered a “500 – Internal Server Error” when drilling into the web console alert view.

b. The OperationsManager and OperationsManagerAppMonitoring application pools should be ASP.NET 4.0.

3. Run iisreset and you should be good to go!

CDM M&M Production Infrastructure SP1 Upgrade Step Overview

| Component/Step in order of deployment | Duration in minutes | Notes |
| --- | --- | --- |
| Initial Configuration | 6 | 1st install |
| OperationsManager Database | 22 | 1st install |
| 1st Management Server (RMS Emulator) | 9 | 1st install/Resource Pool |
| Warehouse | 5 | 1st install |
| Ops Console/Final Config | 3 | 1st install |
| 2nd Management Server | 9 | Resource Pool |
| 3rd Management Server | 9 | Resource Pool |
| Standalone Management Server/ACS | 14 | |
| Standalone Management Server/Web Console | 15 | 3 min. to re-register ASP.NET 4.0 |
| 1st Tier Primary Gateways | 8 | Parallel upgrade |
| 1st Tier Failover Gateways | 8 | Parallel upgrade |
| 2nd Tier Primary Gateways | 8 | Parallel upgrade |
| 2nd Tier Failover Gateways | 8 | Parallel upgrade |
| 3rd Tier Primary Gateways | 8 | Parallel upgrade |
| 3rd Tier Failover Gateways | 8 | Parallel upgrade |
| OM Reporting | 7 | |
| Approx. Total Upgrade Time (2.5 hours) | 147 | |
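If you want to adapt this overview for your own planning, the total is simply the sum of the step durations. Here is a small sketch that re-adds the numbers from the overview above; the variable names are mine, and it assumes the steps ran back to back as listed.

```python
# (step, minutes) pairs copied from the upgrade step overview
steps = [
    ("Initial Configuration", 6),
    ("OperationsManager Database", 22),
    ("1st Management Server (RMS Emulator)", 9),
    ("Warehouse", 5),
    ("Ops Console/Final Config", 3),
    ("2nd Management Server", 9),
    ("3rd Management Server", 9),
    ("Standalone Management Server/ACS", 14),
    ("Standalone Management Server/Web Console", 15),
    ("1st Tier Primary Gateways", 8),
    ("1st Tier Failover Gateways", 8),
    ("2nd Tier Primary Gateways", 8),
    ("2nd Tier Failover Gateways", 8),
    ("3rd Tier Primary Gateways", 8),
    ("3rd Tier Failover Gateways", 8),
    ("OM Reporting", 7),
]

total_minutes = sum(minutes for _, minutes in steps)
hours, minutes = divmod(total_minutes, 60)
gateway_minutes = sum(m for name, m in steps if "Gateways" in name)

print(f"total: {total_minutes} min ({hours} h {minutes} min)")
print(f"gateway tiers: {gateway_minutes} min")
```

Swap in your own durations to estimate the maintenance window you will need.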

So there you have it!

Although we had “all hands on deck” for a 3-hour upgrade during an off-hour window, the actual upgrade only took 2½ hours, and stress levels remained low.

Overall, we were extremely satisfied with the upgrade process, and experienced only a minor alerting delay of under 8 minutes during the initial Operations Manager database upgrade. (Yes, this was awesome!) All other components failed over as expected, and no loss of data was experienced.

Upgrading to enable key new features, with minimal impact, in a high-volume, highly visible OM environment? I’ll take it!