OAuth machine-to-machine (M2M) authentication

OAuth machine-to-machine (M2M) authentication uses the credentials of an automated entity (in this case, an Azure Databricks managed service principal or a Microsoft Entra ID (formerly Azure Active Directory) managed service principal) to authenticate the target entity.

After Azure Databricks successfully authenticates the target service principal through the OAuth M2M authentication request, an Azure Databricks OAuth token is given to the participating tool or SDK to perform token-based authentication from that time forward on the service principal’s behalf. The Azure Databricks OAuth token has a lifespan of one hour, following which the tool or SDK involved will make an automatic background attempt to obtain a new token that is also valid for one hour.
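The one-hour lifespan and background renewal described above can be pictured with a minimal sketch. This is not how any Azure Databricks tool or SDK is actually implemented: `TokenCache` and the `fetch_token` callable are hypothetical names introduced only for illustration, and the clock is injected so that the example runs without network access.

```python
import time
from typing import Callable, Optional

# Lifetime of an Azure Databricks OAuth token, as stated in the text above.
TOKEN_LIFETIME_SECONDS = 3600


class TokenCache:
    """Hypothetical helper: caches a token and replaces it once it has expired."""

    def __init__(self, fetch_token: Callable[[], str],
                 clock: Callable[[], float] = time.time) -> None:
        self._fetch_token = fetch_token  # assumed to return a fresh token string
        self._clock = clock
        self._token: Optional[str] = None
        self._expires_at = 0.0

    def get(self) -> str:
        """Return the cached token, fetching a new one if none is valid."""
        now = self._clock()
        if self._token is None or now >= self._expires_at:
            self._token = self._fetch_token()
            self._expires_at = now + TOKEN_LIFETIME_SECONDS
        return self._token
```

A caller would invoke `get()` before each request, so a new token is obtained only when the previous one has reached the end of its hour.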

To begin configuring OAuth M2M authentication, do the following:

Note

You must be an Azure Databricks account admin to manage Azure Databricks OAuth credentials for service principals.

Step 1: Create a Microsoft Entra ID service principal in your Azure account

Complete this step if you want to link a Microsoft Entra ID service principal to your Azure Databricks account, workspace, or both. Otherwise, skip ahead to Step 2.

Sign in to the Azure portal.

Note

The portal to use is different depending on whether your Microsoft Entra ID (formerly Azure Active Directory) application runs in the Azure public cloud or in a national or sovereign cloud. For more information, see National clouds.

If you have access to multiple tenants, subscriptions, or directories, click the Directories + subscriptions (directory with filter) icon in the top menu to switch to the directory in which you want to provision the service principal.

In Search resources, services, and docs, search for and select Microsoft Entra ID.

Click + Add and select App registration.

For Name, enter a name for the application.

In the Supported account types section, select Accounts in this organizational directory only (Single tenant).

Click Register.

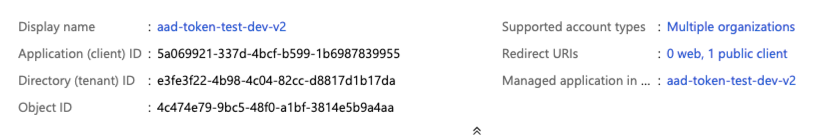

On the application’s Overview page, in the Essentials section, copy the following values:

- Application (client) ID

- Directory (tenant) ID

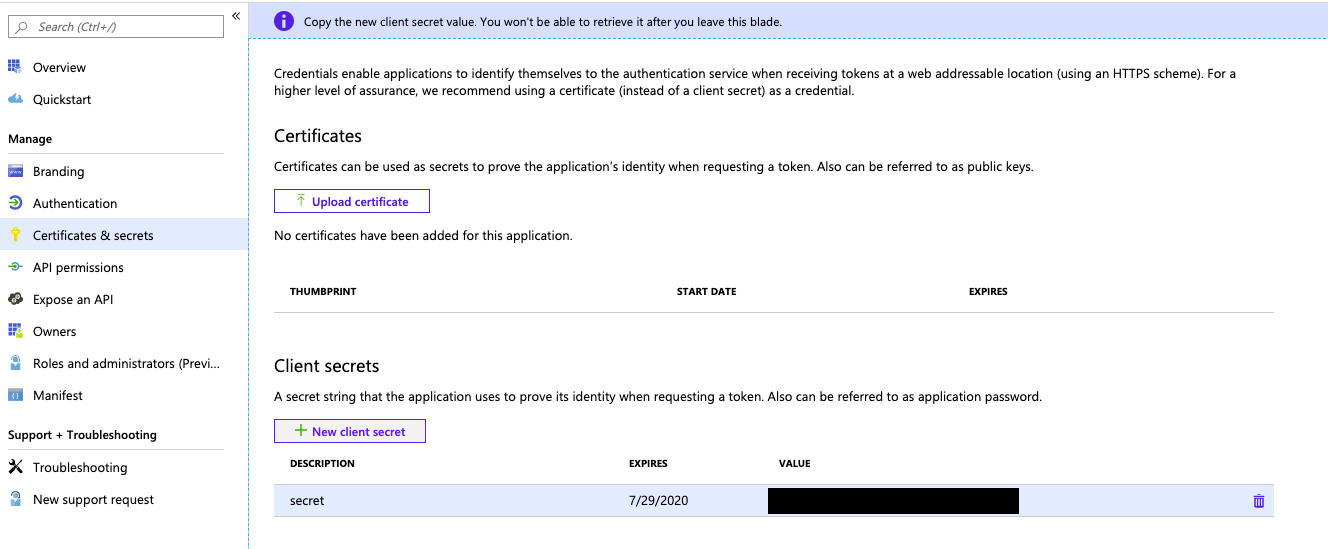

To generate a client secret, within Manage, click Certificates & secrets.

Note

You use this client secret to generate Microsoft Entra ID tokens for authenticating Microsoft Entra ID service principals with Azure Databricks. To determine whether an Azure Databricks tool or SDK can use Microsoft Entra ID tokens, see the tool’s or SDK’s documentation.

On the Client secrets tab, click New client secret.

In the Add a client secret pane, for Description, enter a description for the client secret.

For Expires, select an expiry time period for the client secret, and then click Add.

Copy and store the client secret’s Value in a secure place, as this client secret is the password for your application.

Step 2: Add a service principal to your Azure Databricks account

This step works only if your target Azure Databricks workspace is enabled for identity federation. If your workspace is not enabled for identity federation, skip ahead to Step 3.

In your Azure Databricks workspace, click your username in the top bar and click Manage account.

Alternatively, go directly to your Azure Databricks account console, at https://accounts.azuredatabricks.net.

Sign in to your Azure Databricks account, if prompted.

On the sidebar, click User management.

Click the Service principals tab.

Click Add service principal.

Under Management, choose Databricks managed or Microsoft Entra ID managed.

If you chose Microsoft Entra ID managed, under Microsoft Entra application ID, paste the application (client) ID value from Step 1.

Enter a Name for the service principal.

Click Add.

(Optional) Assign account-level permissions to the service principal:

- On the Service principals tab, click the name of your service principal.

- On the Roles tab, toggle to enable or disable each target role that you want this service principal to have.

- On the Permissions tab, grant access to any Azure Databricks users, service principals, and account group roles that you want to manage and use this service principal. See Manage roles on a service principal.

Step 3: Add the service principal to your Azure Databricks workspace

If your workspace is enabled for identity federation:

- In your Azure Databricks workspace, click your username in the top bar and click Settings.

- Click on the Identity and access tab.

- Next to Service principals, click Manage.

- Click Add service principal.

- Select your service principal from Step 2 and click Add.

Skip ahead to Step 4.

If your workspace is not enabled for identity federation:

- In your Azure Databricks workspace, click your username in the top bar and click Settings.

- Click on the Identity and access tab.

- Next to Service principals, click Manage.

- Click Add service principal.

- Click Add new.

- Under Management, choose Databricks managed or Microsoft Entra ID managed.

- If you chose Microsoft Entra ID managed, under Microsoft Entra application ID, paste the application (client) ID value from Step 1.

- Enter a Display Name for the new service principal and click Add.

Step 4: Assign workspace-level permissions to the service principal

- If the admin console for your workspace is not already open, click your username in the top bar and click Settings.

- Click on the Identity and access tab.

- Next to Service principals, click Manage.

- Click the name of your service principal to open its settings page.

- On the Configurations tab, check the box next to each entitlement that you want your service principal to have for this workspace, and then click Update.

- On the Permissions tab, grant access to any Azure Databricks users, service principals, and groups that you want to manage and use this service principal. See Manage roles on a service principal.

Step 5: Create an Azure Databricks OAuth secret for the service principal

Before you can use OAuth to authenticate to Azure Databricks, you must first create an OAuth secret, which can be used to generate OAuth access tokens. A service principal can have up to five OAuth secrets. To create an OAuth secret for a service principal by using the account console:

- Sign in to the Azure Databricks account console, at https://accounts.azuredatabricks.net.

- Sign in to your Azure Databricks account, if prompted.

- On the sidebar, click User management.

- Click the Service principals tab.

- Click the name of the service principal.

- In the Principal information tab’s OAuth secrets section, click Generate secret.

- In the Generate secret dialog, copy and store the Secret value in a secure place, as this OAuth secret is the password for the service principal.

- Click Done.

Note

To enable the service principal to use clusters or SQL warehouses, you must give the service principal access to them. See Compute permissions or Manage a SQL warehouse.

Finish configuring OAuth M2M authentication

To finish configuring OAuth M2M authentication, you must set the following associated environment variables, .databrickscfg fields, Terraform fields, or Config fields:

- The Azure Databricks host, specified as https://accounts.azuredatabricks.net for account operations or the target per-workspace URL, for example https://adb-1234567890123456.7.azuredatabricks.net, for workspace operations.

- The Azure Databricks account ID, for Azure Databricks account operations.

- The service principal client ID.

- The service principal secret.

To perform OAuth M2M authentication, integrate the following within your code, based on the participating tool or SDK:

Environment

To use environment variables for a specific Azure Databricks authentication type with a tool or SDK, see Supported authentication types by Azure Databricks tool or SDK or the tool’s or SDK’s documentation. See also Environment variables and fields for client unified authentication and the Default order of evaluation for client unified authentication methods and credentials.

For account-level operations, set the following environment variables:

- DATABRICKS_HOST, set to the Azure Databricks account console URL, https://accounts.azuredatabricks.net.

- DATABRICKS_ACCOUNT_ID

- DATABRICKS_CLIENT_ID

- DATABRICKS_CLIENT_SECRET

For workspace-level operations, set the following environment variables:

- DATABRICKS_HOST, set to the Azure Databricks per-workspace URL, for example https://adb-1234567890123456.7.azuredatabricks.net.

- DATABRICKS_CLIENT_ID

- DATABRICKS_CLIENT_SECRET
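As a quick sanity check before running a tool or SDK, the variables listed above can be verified with a short script. The following is a minimal sketch: the function name `missing_variables` and the `"account"`/`"workspace"` level strings are conventions of this example only, not part of any Databricks tool.

```python
import os
from typing import List, Mapping

# Variable names taken from the lists above.
ACCOUNT_VARS = ["DATABRICKS_HOST", "DATABRICKS_ACCOUNT_ID",
                "DATABRICKS_CLIENT_ID", "DATABRICKS_CLIENT_SECRET"]
WORKSPACE_VARS = ["DATABRICKS_HOST", "DATABRICKS_CLIENT_ID",
                  "DATABRICKS_CLIENT_SECRET"]


def missing_variables(level: str,
                      env: Mapping[str, str] = os.environ) -> List[str]:
    """Return the required variables that are unset or empty for `level`.

    `level` is "account" or "workspace" (a convention of this sketch only).
    """
    if level == "account":
        required = ACCOUNT_VARS
    elif level == "workspace":
        required = WORKSPACE_VARS
    else:
        raise ValueError(f"unknown level: {level!r}")
    return [name for name in required if not env.get(name)]
```

For example, `missing_variables("account")` returns an empty list only when all four account-level variables are set in the current environment.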

Profile

Create or identify an Azure Databricks configuration profile with the following fields in your .databrickscfg file. If you create the profile, replace the placeholders with the appropriate values. To use the profile with a tool or SDK, see Supported authentication types by Azure Databricks tool or SDK or the tool’s or SDK’s documentation. See also Environment variables and fields for client unified authentication and the Default order of evaluation for client unified authentication methods and credentials.

For account-level operations, set the following values in your .databrickscfg file. In this case, the Azure Databricks account console URL is https://accounts.azuredatabricks.net:

    [<some-unique-configuration-profile-name>]
    host          = <account-console-url>
    account_id    = <account-id>
    client_id     = <service-principal-client-id>
    client_secret = <service-principal-secret>

For workspace-level operations, set the following values in your .databrickscfg file. In this case, the host is the Azure Databricks per-workspace URL, for example https://adb-1234567890123456.7.azuredatabricks.net:

    [<some-unique-configuration-profile-name>]
    host          = <workspace-url>
    client_id     = <service-principal-client-id>
    client_secret = <service-principal-secret>
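Because a .databrickscfg file uses an INI-style layout, a profile like the ones above can be inspected with Python's standard `configparser` module. This is a minimal sketch for checking a profile by hand, not how Databricks tools load profiles; the profile name and values in `SAMPLE_CFG` are made up, and `read_profile` is a hypothetical helper.

```python
import configparser
from typing import Dict

# Made-up example content in the same shape as a workspace-level profile.
SAMPLE_CFG = """\
[my-workspace-profile]
host = https://adb-1234567890123456.7.azuredatabricks.net
client_id = example-client-id
client_secret = example-secret
"""


def read_profile(cfg_text: str, profile: str) -> Dict[str, str]:
    """Return the fields of one named profile as a plain dictionary."""
    # Interpolation is disabled so that a '%' inside a secret is read literally.
    parser = configparser.ConfigParser(interpolation=None)
    parser.read_string(cfg_text)
    if not parser.has_section(profile):
        raise KeyError(f"profile not found: {profile}")
    return dict(parser.items(profile))
```

To check a real file, the text could be read from the file's location on disk and passed to `read_profile` together with the profile name.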

CLI

For the Databricks CLI, do one of the following:

- Set the environment variables as specified in this article’s “Environment” section.

- Set the values in your .databrickscfg file as specified in this article’s “Profile” section.

Environment variables always take precedence over values in your .databrickscfg file.
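The precedence rule stated above for the Databricks CLI can be sketched as a simple lookup. This is an illustration only, not the CLI's actual resolution code: `resolve_setting` is a hypothetical helper, and the mapping from a profile field to `DATABRICKS_` plus the upper-cased field name is assumed here only for the four fields named in this article (`host`, `account_id`, `client_id`, `client_secret`).

```python
from typing import Mapping, Optional


def resolve_setting(name: str,
                    env: Mapping[str, str],
                    profile: Mapping[str, str]) -> Optional[str]:
    """Resolve one setting, letting an environment variable win over the profile."""
    env_value = env.get("DATABRICKS_" + name.upper())
    if env_value:
        return env_value  # environment variable takes precedence
    return profile.get(name)  # fall back to the .databrickscfg value, if any
```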

See also OAuth machine-to-machine (M2M) authentication.

Connect

Note

OAuth M2M authentication is supported on the following Databricks Connect versions:

- For Python, Databricks Connect for Databricks Runtime 14.0 and above.

- For Scala, Databricks Connect for Databricks Runtime 13.3 LTS and above. The Databricks SDK for Java that is included with Databricks Connect for Databricks Runtime 13.3 LTS and above must be upgraded to Databricks SDK for Java 0.17.0 or above.

For Databricks Connect, you can do one of the following:

- Set the values in your .databrickscfg file for Azure Databricks workspace-level operations as specified in this article’s “Profile” section. Also set the cluster_id field in your profile to the ID of your target cluster.

- Set the environment variables for Azure Databricks workspace-level operations as specified in this article’s “Environment” section. Also set the DATABRICKS_CLUSTER_ID environment variable to the ID of your target cluster.

Values in your .databrickscfg file always take precedence over environment variables.

To initialize the Databricks Connect client with these environment variables or values in your .databrickscfg file, see one of the following:

- For Python, see Configure connection properties for Python.

- For Scala, see Configure connection properties for Scala.

VS Code

For the Databricks extension for Visual Studio Code, do the following:

- Set the values in your .databrickscfg file for Azure Databricks workspace-level operations as specified in this article’s “Profile” section.

- In the Configuration pane of the Databricks extension for Visual Studio Code, click Configure Databricks.

- In the Command Palette, for Databricks Host, enter your per-workspace URL, for example https://adb-1234567890123456.7.azuredatabricks.net, and then press Enter.

- In the Command Palette, select your target profile’s name in the list for your URL.

For more details, see Authentication setup for the Databricks extension for VS Code.

Terraform

For account-level operations, for default authentication:

    provider "databricks" {
      alias = "accounts"
    }

For direct configuration, replace the retrieve placeholders with your own implementation to retrieve the values from the console or some other configuration store, such as HashiCorp Vault (see also Vault Provider). In this case, the Azure Databricks account console URL is https://accounts.azuredatabricks.net:

    provider "databricks" {
      alias         = "accounts"
      host          = <retrieve-account-console-url>
      account_id    = <retrieve-account-id>
      client_id     = <retrieve-client-id>
      client_secret = <retrieve-client-secret>
    }

For workspace-level operations, for default authentication:

    provider "databricks" {
      alias = "workspace"
    }

For direct configuration, replace the retrieve placeholders with your own implementation to retrieve the values from the console or some other configuration store, such as HashiCorp Vault (see also Vault Provider). In this case, the host is the Azure Databricks per-workspace URL, for example https://adb-1234567890123456.7.azuredatabricks.net:

    provider "databricks" {
      alias         = "workspace"
      host          = <retrieve-workspace-url>
      client_id     = <retrieve-client-id>
      client_secret = <retrieve-client-secret>
    }

For more information about authenticating with the Databricks Terraform provider, see Authentication.

Python

For account-level operations, use the following for default authentication:

    from databricks.sdk import AccountClient

    a = AccountClient()
    # ...

For direct configuration, use the following, replacing the retrieve placeholders with your own implementation to retrieve the values from the console or some other configuration store, such as Azure KeyVault. In this case, the Azure Databricks account console URL is https://accounts.azuredatabricks.net:

    from databricks.sdk import AccountClient

    a = AccountClient(
        host=retrieve_account_console_url(),
        account_id=retrieve_account_id(),
        client_id=retrieve_client_id(),
        client_secret=retrieve_client_secret(),
    )
    # ...

For workspace-level operations, use the following for default authentication:

    from databricks.sdk import WorkspaceClient

    w = WorkspaceClient()
    # ...

For direct configuration, replace the retrieve placeholders with your own implementation to retrieve the values from the console or some other configuration store, such as Azure KeyVault. In this case, the host is the Azure Databricks per-workspace URL, for example https://adb-1234567890123456.7.azuredatabricks.net:

    from databricks.sdk import WorkspaceClient

    w = WorkspaceClient(
        host=retrieve_workspace_url(),
        client_id=retrieve_client_id(),
        client_secret=retrieve_client_secret(),
    )
    # ...
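The `retrieve_*` placeholders in the direct-configuration examples above are left for you to implement. One possible shape is sketched below, assuming (purely for illustration) that the values live in the environment variables named in this article's “Environment” section. In practice you would more likely replace the body of `_require`, a hypothetical helper, with a lookup against your own secret store.

```python
import os
from typing import Mapping


def _require(name: str, env: Mapping[str, str]) -> str:
    """Return a non-empty value for `name`, or fail with a clear message."""
    value = env.get(name, "")
    if not value:
        raise RuntimeError(f"{name} is not set")
    return value


def retrieve_workspace_url(env: Mapping[str, str] = os.environ) -> str:
    return _require("DATABRICKS_HOST", env)


def retrieve_client_id(env: Mapping[str, str] = os.environ) -> str:
    return _require("DATABRICKS_CLIENT_ID", env)


def retrieve_client_secret(env: Mapping[str, str] = os.environ) -> str:
    return _require("DATABRICKS_CLIENT_SECRET", env)
```

Failing early with the name of the missing value tends to be easier to diagnose than an authentication error raised later by the client.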

For more information about authenticating with Databricks tools and SDKs that use Python and implement Databricks client unified authentication, see:

- Set up the Databricks Connect client for Python

- Authenticate the Databricks SDK for Python with your Azure Databricks account or workspace

Note

The Databricks extension for Visual Studio Code uses Python but has not yet implemented OAuth M2M authentication.

Java

For workspace-level operations, for default authentication:

    import com.databricks.sdk.WorkspaceClient;
    // ...
    WorkspaceClient w = new WorkspaceClient();
    // ...

For direct configuration, replace the retrieve placeholders with your own implementation to retrieve the values from the console or some other configuration store, such as Azure KeyVault. In this case, the host is the Azure Databricks per-workspace URL, for example https://adb-1234567890123456.7.azuredatabricks.net:

    import com.databricks.sdk.WorkspaceClient;
    import com.databricks.sdk.core.DatabricksConfig;
    // ...
    DatabricksConfig cfg = new DatabricksConfig()
      .setHost(retrieveWorkspaceUrl())
      .setClientId(retrieveClientId())
      .setClientSecret(retrieveClientSecret());
    WorkspaceClient w = new WorkspaceClient(cfg);
    // ...

For more information about authenticating with Databricks tools and SDKs that use Java and implement Databricks client unified authentication, see:

- Set up the Databricks Connect client for Scala (the Databricks Connect client for Scala uses the included Databricks SDK for Java for authentication)

- Authenticate the Databricks SDK for Java with your Azure Databricks account or workspace

Go

For account-level operations, for default authentication:

    import (
      "github.com/databricks/databricks-sdk-go"
    )
    // ...
    a := databricks.Must(databricks.NewAccountClient())
    // ...

For direct configuration, replace the retrieve placeholders with your own implementation to retrieve the values from the console or some other configuration store, such as Azure KeyVault. In this case, the Azure Databricks account console URL is https://accounts.azuredatabricks.net:

    import (
      "github.com/databricks/databricks-sdk-go"
    )
    // ...
    a := databricks.Must(databricks.NewAccountClient(&databricks.Config{
      Host:         retrieveAccountConsoleUrl(),
      AccountID:    retrieveAccountId(),
      ClientID:     retrieveClientId(),
      ClientSecret: retrieveClientSecret(),
    }))
    // ...

For workspace-level operations, for default authentication:

    import (
      "github.com/databricks/databricks-sdk-go"
    )
    // ...
    w := databricks.Must(databricks.NewWorkspaceClient())
    // ...

For direct configuration, replace the retrieve placeholders with your own implementation to retrieve the values from the console or some other configuration store, such as Azure KeyVault. In this case, the host is the Azure Databricks per-workspace URL, for example https://adb-1234567890123456.7.azuredatabricks.net:

    import (
      "github.com/databricks/databricks-sdk-go"
    )
    // ...
    w := databricks.Must(databricks.NewWorkspaceClient(&databricks.Config{
      Host:         retrieveWorkspaceUrl(),
      ClientID:     retrieveClientId(),
      ClientSecret: retrieveClientSecret(),
    }))
    // ...

For more information about authenticating with Databricks tools and SDKs that use Go and that implement Databricks client unified authentication, see Authenticate the Databricks SDK for Go with your Azure Databricks account or workspace.

Manually generate and use access tokens for OAuth machine-to-machine (M2M) authentication

Azure Databricks tools and SDKs that implement the Databricks client unified authentication standard will automatically generate, refresh, and use Azure Databricks OAuth access tokens on your behalf as needed for OAuth M2M authentication.

If for some reason you must manually generate, refresh, or use Azure Databricks OAuth access tokens for OAuth M2M authentication, follow the instructions in this section.

Step 1: Create a service principal and an OAuth secret

If you do not already have an Azure Databricks managed service principal or Microsoft Entra ID managed service principal and its corresponding Azure Databricks OAuth secret, create them by completing Steps 1-5 at the beginning of this article.

Step 2: Manually generate an access token

You can use the Azure Databricks managed service principal’s or Microsoft Entra ID managed service principal’s client ID and the Azure Databricks OAuth secret to request an Azure Databricks OAuth access token to authenticate to both account-level REST APIs and workspace-level REST APIs. The token will expire in one hour. You must request a new Azure Databricks OAuth access token after the expiration. The scope of the OAuth access token depends on the level that you create the token from. You can create a token at either the account level or the workspace level, as follows:

- To call account-level and workspace-level REST APIs within accounts and workspaces that the Azure Databricks managed service principal or Microsoft Entra ID managed service principal has access to, manually generate an access token at the account level.

- To call REST APIs within only one workspace that the Azure Databricks managed service principal or Microsoft Entra ID managed service principal has access to, you can manually generate an access token at the workspace level for only that workspace.
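The difference between the two token levels described above can be summarized as a small decision function. This is a simplified model for illustration, not an Azure Databricks API: the function name, the `"account"`/`"workspace"` strings, and the workspace identifiers are all hypothetical, and the sketch ignores the permissions that the service principal must separately hold for a given API.

```python
from typing import Collection, Optional


def token_covers_request(token_level: str,
                         api_level: str,
                         assigned_workspaces: Collection[str],
                         token_workspace: Optional[str] = None,
                         api_workspace: Optional[str] = None) -> bool:
    """Return True if a token of the given level is in scope for the request."""
    if token_level == "account":
        if api_level == "account":
            return True
        # Workspace APIs are covered only in workspaces the principal is assigned to.
        return api_workspace in assigned_workspaces
    if token_level == "workspace":
        # A workspace-level token is limited to the workspace that issued it.
        return (api_level == "workspace"
                and token_workspace is not None
                and api_workspace == token_workspace)
    raise ValueError(f"unknown token level: {token_level!r}")
```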

Manually generate an account-level access token

An Azure Databricks OAuth access token created from the account level can be used against Databricks REST APIs in the account and in any workspaces the Azure Databricks managed service principal or Microsoft Entra ID managed service principal has been assigned to.

As an account admin, log in to the account console.

Click the down arrow next to your username in the upper right corner.

Copy your Account ID.

Construct the token endpoint URL by replacing <my-account-id> in the following URL with the account ID that you copied.

    https://accounts.azuredatabricks.net/oidc/accounts/<my-account-id>/v1/token

Use a client such as curl to request an Azure Databricks OAuth access token with the token endpoint URL, the client ID (which is also known as the application ID) of the Azure Databricks managed service principal or Microsoft Entra ID managed service principal, and the Azure Databricks OAuth secret that you created for the Azure Databricks managed service principal or Microsoft Entra ID managed service principal. The all-apis scope requests an Azure Databricks OAuth access token that can be used to access all Databricks REST APIs that the Azure Databricks managed service principal or Microsoft Entra ID managed service principal has been granted access to.

- Replace <token-endpoint-URL> with the token endpoint URL from above.

- Replace <client-id> with the Azure Databricks managed service principal’s or Microsoft Entra ID managed service principal’s client ID, which is also known as an application ID.

- Replace <client-secret> with the Azure Databricks OAuth secret that you created for the Azure Databricks managed service principal or Microsoft Entra ID managed service principal.

    export CLIENT_ID=<client-id>
    export CLIENT_SECRET=<client-secret>

    curl --request POST \
      --url <token-endpoint-URL> \
      --user "$CLIENT_ID:$CLIENT_SECRET" \
      --data 'grant_type=client_credentials&scope=all-apis'

This generates a response similar to:

    {
      "access_token": "eyJraWQiOiJkYTA4ZTVjZ…",
      "scope": "all-apis",
      "token_type": "Bearer",
      "expires_in": 3600
    }

Copy the access_token from the response.

The Azure Databricks OAuth access token will expire in one hour. You must manually generate a new Azure Databricks OAuth access token after the expiration.
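If you script this step instead of copying the token by hand, the response can be parsed to get both the token and the moment it stops being valid. The following is a minimal sketch that assumes a response with the fields shown above; `parse_token_response` is a hypothetical helper, and the caller supplies the time at which the response was received.

```python
import json
from typing import Tuple


def parse_token_response(body: str, received_at: float) -> Tuple[str, float]:
    """Return (access_token, expiry_time) from a token endpoint response body.

    `received_at` and the returned expiry are seconds since the epoch.
    """
    payload = json.loads(body)
    if payload.get("token_type") != "Bearer":
        raise ValueError("unexpected token_type in response")
    expires_at = received_at + float(payload["expires_in"])
    return payload["access_token"], expires_at
```

Comparing the current time against the returned expiry tells a script when it must request a new token.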

Skip ahead to Step 3: Call a Databricks REST API.

Manually generate a workspace-level access token

An Azure Databricks OAuth access token created from the workspace level can only access REST APIs in that workspace, even if the Azure Databricks managed service principal or Microsoft Entra ID managed service principal is an account admin or is a member of other workspaces.

Construct the token endpoint URL by replacing https://<databricks-instance> with the workspace URL of your Azure Databricks deployment:

    https://<databricks-instance>/oidc/v1/token

Use a client such as curl to request an Azure Databricks OAuth access token with the token endpoint URL, the client ID (which is also known as the application ID) of the Azure Databricks managed service principal or Microsoft Entra ID managed service principal, and the Azure Databricks OAuth secret that you created for the Azure Databricks managed service principal or Microsoft Entra ID managed service principal. The all-apis scope requests an Azure Databricks OAuth access token that can be used to access all Databricks REST APIs that the Azure Databricks managed service principal or Microsoft Entra ID managed service principal has been granted access to within the workspace that you are requesting the token from.

- Replace <token-endpoint-URL> with the token endpoint URL from above.

- Replace <client-id> with the Azure Databricks managed service principal’s or Microsoft Entra ID managed service principal’s client ID, which is also known as an application ID.

- Replace <client-secret> with the Azure Databricks OAuth secret that you created for the Azure Databricks managed service principal or Microsoft Entra ID managed service principal.

    export CLIENT_ID=<client-id>
    export CLIENT_SECRET=<client-secret>

    curl --request POST \
      --url <token-endpoint-URL> \
      --user "$CLIENT_ID:$CLIENT_SECRET" \
      --data 'grant_type=client_credentials&scope=all-apis'

This generates a response similar to:

    {
      "access_token": "eyJraWQiOiJkYTA4ZTVjZ…",
      "scope": "all-apis",
      "token_type": "Bearer",
      "expires_in": 3600
    }

Copy the access_token from the response.

The Azure Databricks OAuth access token will expire in one hour. You must manually generate a new Azure Databricks OAuth access token after the expiration.
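For clients other than curl, the same token request can be assembled with Python's standard library. The sketch below builds the account-level and workspace-level token endpoint URLs from the patterns given in this section and prepares, without sending, a POST request equivalent to the curl command: HTTP Basic authentication with the client ID and OAuth secret, and a form-encoded body. The function names are hypothetical.

```python
import base64
import urllib.parse
import urllib.request

ACCOUNT_CONSOLE_URL = "https://accounts.azuredatabricks.net"


def account_token_endpoint(account_id: str) -> str:
    """Token endpoint for account-level tokens."""
    return f"{ACCOUNT_CONSOLE_URL}/oidc/accounts/{account_id}/v1/token"


def workspace_token_endpoint(workspace_url: str) -> str:
    """Token endpoint for workspace-level tokens; expects a full https:// URL."""
    return f"{workspace_url.rstrip('/')}/oidc/v1/token"


def build_token_request(token_endpoint_url: str, client_id: str,
                        client_secret: str) -> urllib.request.Request:
    """Prepare (but do not send) the client-credentials token request."""
    credentials = f"{client_id}:{client_secret}".encode("utf-8")
    basic = base64.b64encode(credentials).decode("ascii")
    body = urllib.parse.urlencode(
        {"grant_type": "client_credentials", "scope": "all-apis"}
    ).encode("ascii")
    return urllib.request.Request(
        token_endpoint_url,
        data=body,
        headers={
            "Authorization": f"Basic {basic}",
            "Content-Type": "application/x-www-form-urlencoded",
        },
        method="POST",
    )
```

Passing the prepared request to `urllib.request.urlopen` would send it; the response body would then have the JSON shape shown earlier in this section.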

Step 3: Call a Databricks REST API

You can now use an Azure Databricks OAuth access token to authenticate to Azure Databricks account-level REST APIs and workspace-level REST APIs. The Azure Databricks managed service principal or Microsoft Entra ID managed service principal must be an account admin to call account-level REST APIs.

You can include the token in the header using Bearer authentication. You can use this approach with curl or any client that you build.

Example account-level REST API request

This example uses Bearer authentication to get a list of all workspaces associated with an account.

- Replace <oauth-access-token> with the Azure Databricks OAuth access token for the Azure Databricks managed service principal or Microsoft Entra ID managed service principal.

- Replace <account-id> with your account ID.

    export OAUTH_TOKEN=<oauth-access-token>

    curl --request GET --header "Authorization: Bearer $OAUTH_TOKEN" \
      'https://accounts.azuredatabricks.net/api/2.0/accounts/<account-id>/workspaces'

Example workspace-level REST API request

This example uses Bearer authentication to list all available clusters in the specified workspace.

- Replace <oauth-access-token> with the Azure Databricks OAuth access token for the Azure Databricks managed service principal or Microsoft Entra ID managed service principal.

- Replace <workspace-URL> with your base workspace URL, which has a form similar to adb-1111111111111111.1.azuredatabricks.net.

    export OAUTH_TOKEN=<oauth-access-token>

    curl --request GET --header "Authorization: Bearer $OAUTH_TOKEN" \
      'https://<workspace-URL>/api/2.0/clusters/list'
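The two example requests in this step can also be prepared from code that you build yourself. The following is a minimal sketch using Python's standard library; it constructs the same URLs as the curl examples and attaches the token as a Bearer header, without sending anything. The helper names are hypothetical.

```python
import urllib.request


def list_workspaces_url(account_id: str) -> str:
    """Account-level endpoint used in the account-level example."""
    return f"https://accounts.azuredatabricks.net/api/2.0/accounts/{account_id}/workspaces"


def list_clusters_url(workspace_host: str) -> str:
    """Workspace-level endpoint; `workspace_host` has no https:// prefix."""
    return f"https://{workspace_host}/api/2.0/clusters/list"


def build_api_request(url: str, oauth_token: str) -> urllib.request.Request:
    """Prepare (but do not send) a GET request that uses Bearer authentication."""
    return urllib.request.Request(
        url,
        headers={"Authorization": f"Bearer {oauth_token}"},
        method="GET",
    )
```

Passing the result to `urllib.request.urlopen` would send the request and return the JSON response from the REST API.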
