What is Azure Load Balancer?

Load balancing refers to efficiently distributing incoming network traffic across a group of backend servers or resources.

Azure Load Balancer operates at layer 4 of the Open Systems Interconnection (OSI) model. It's the single point of contact for clients. The load balancer distributes inbound flows that arrive at its front end to backend pool instances, according to the configured load-balancing rules and health probes. The backend pool instances can be Azure Virtual Machines or instances in a Virtual Machine Scale Set.
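As a rough illustration of how inbound flows can be mapped to backend pool instances, the following sketch hashes a flow's five-tuple (source IP, source port, destination IP, destination port, protocol) and picks among the instances that a health probe currently reports as healthy. Azure Load Balancer's default distribution mode is a five-tuple hash, but the `Flow` type, the `pick_backend` function, the instance names, and the choice of SHA-256 are all hypothetical and used for illustration only. This isn't the service's actual implementation.

```python
import hashlib
from dataclasses import dataclass


@dataclass(frozen=True)
class Flow:
    """Five-tuple identifying a flow (hypothetical type for illustration)."""
    src_ip: str
    src_port: int
    dst_ip: str
    dst_port: int
    protocol: str  # "TCP" or "UDP"


def pick_backend(flow: Flow, backends: list[str], healthy: set[str]) -> str:
    """Map a flow to one healthy backend by hashing its five-tuple."""
    # Only instances that pass the health probe are eligible for new flows.
    candidates = [b for b in backends if b in healthy]
    if not candidates:
        raise RuntimeError("no healthy backend instances available")

    # The same five-tuple always produces the same hash, so packets that
    # belong to one flow keep landing on the same instance while the set
    # of healthy instances is unchanged.
    key = f"{flow.src_ip}:{flow.src_port}-{flow.dst_ip}:{flow.dst_port}-{flow.protocol}"
    digest = hashlib.sha256(key.encode()).digest()
    index = int.from_bytes(digest[:8], "big") % len(candidates)
    return candidates[index]


if __name__ == "__main__":
    flow = Flow("203.0.113.7", 50123, "20.0.0.4", 443, "TCP")
    print(pick_backend(flow, ["vm-0", "vm-1", "vm-2"], {"vm-0", "vm-2"}))
```

In this sketch, an instance that fails its health probe simply drops out of the candidate list, so new flows are spread over the remaining instances.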

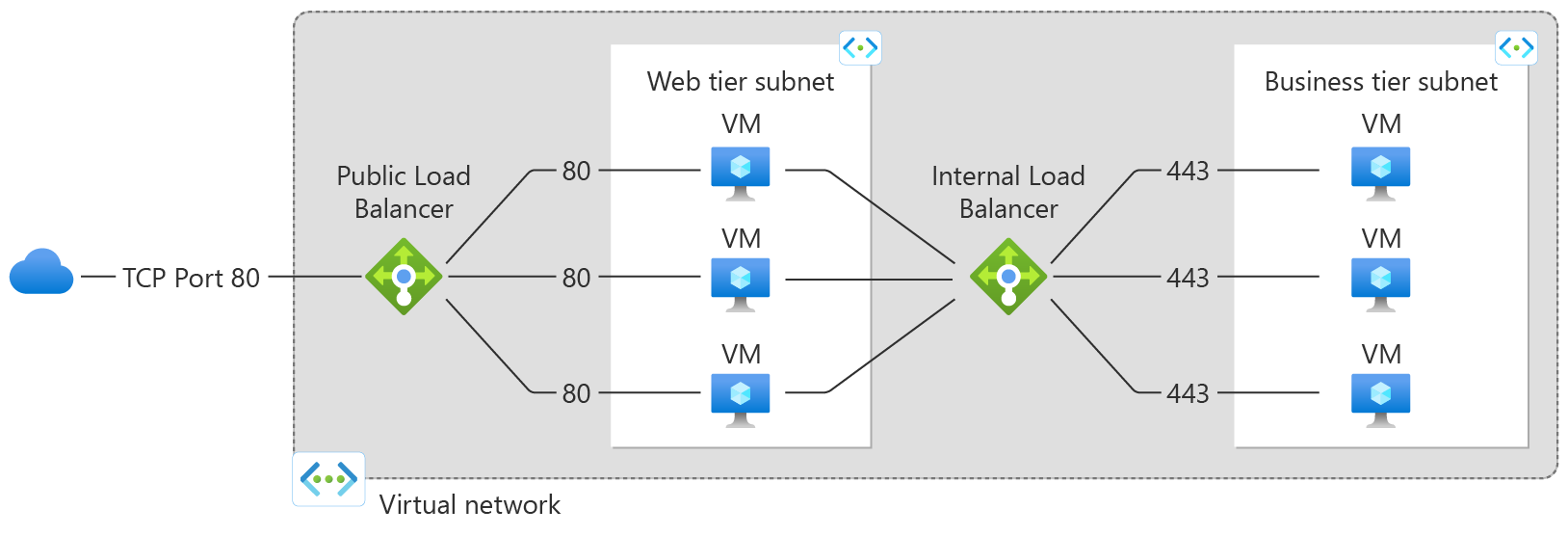

A public load balancer can provide outbound connections for virtual machines (VMs) inside your virtual network. These connections are accomplished by translating the VMs' private IP addresses to public IP addresses. Public load balancers are used to load balance internet traffic to your VMs.
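The address translation described above is commonly called source network address translation (SNAT). The following toy model shows the idea: each private IP address and port pair that opens an outbound connection is assigned a port on a shared public IP address, and replies are mapped back by that port. The `SnatTable` class, its method names, the IP addresses, and the small port range are assumptions made for this sketch. They don't reflect how Azure allocates ports.

```python
class SnatTable:
    """Toy SNAT table: maps private (ip, port) pairs to ports on one public IP."""

    def __init__(self, public_ip: str, first_port: int = 1024, last_port: int = 1031):
        self.public_ip = public_ip
        # Pool of public ports that are still unassigned (arbitrary range).
        self._free_ports = list(range(first_port, last_port + 1))
        self._outbound: dict[tuple[str, int], int] = {}
        self._inbound: dict[int, tuple[str, int]] = {}

    def translate_outbound(self, private_ip: str, private_port: int) -> tuple[str, int]:
        """Return the public (ip, port) used for this private endpoint."""
        key = (private_ip, private_port)
        if key not in self._outbound:
            if not self._free_ports:
                raise RuntimeError("no free public ports left")
            port = self._free_ports.pop(0)
            self._outbound[key] = port
            self._inbound[port] = key
        return self.public_ip, self._outbound[key]

    def translate_reply(self, public_port: int) -> tuple[str, int]:
        """Map a reply arriving on a public port back to the private endpoint."""
        return self._inbound[public_port]


if __name__ == "__main__":
    table = SnatTable("52.0.0.10")
    print(table.translate_outbound("10.0.0.4", 40000))
    print(table.translate_reply(1024))
```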

An internal (or private) load balancer is used where private IPs are needed at the frontend only. Internal load balancers are used to load balance traffic inside a virtual network. A load balancer frontend can be accessed from an on-premises network in a hybrid scenario.

Figure: Balancing multi-tier applications by using both public and internal Load Balancer

For more information on the individual load balancer components, see Azure Load Balancer components.

Why use Azure Load Balancer?

With Azure Load Balancer, you can scale your applications and create highly available services. Load balancer supports both inbound and outbound scenarios. Load balancer provides low latency and high throughput, and scales up to millions of flows for all TCP and UDP applications.

Key scenarios that you can accomplish using Azure Standard Load Balancer include:

Load balance internal and external traffic to Azure virtual machines.

Use pass-through load balancing, which results in ultra-low latency.

Increase availability by distributing resources within and across zones.

Configure outbound connectivity for Azure virtual machines.

Use health probes to monitor load-balanced resources.

Employ port forwarding to access virtual machines in a virtual network by public IP address and port.

Enable support for load-balancing of IPv6.

Standard load balancer provides multi-dimensional metrics through Azure Monitor. These metrics can be filtered, grouped, and broken out for a given dimension. They provide current and historic insights into performance and health of your service. Insights for Azure Load Balancer offers a preconfigured dashboard with useful visualizations for these metrics. Resource Health is also supported. Review Standard load balancer diagnostics for more details.

Load balance services on multiple ports, multiple IP addresses, or both.

Move internal and external load balancer resources across Azure regions.

Load balance TCP and UDP flows on all ports simultaneously using HA ports.

Chain Standard Load Balancer and Gateway Load Balancer.

Secure by default

Standard load balancer is built on the zero trust network security model.

Standard Load Balancer is secure by default and part of your virtual network. The virtual network is a private and isolated network.

Standard load balancers and standard public IP addresses are closed to inbound connections unless opened by Network Security Groups. NSGs are used to explicitly permit allowed traffic. If you don't have an NSG on a subnet or NIC of your virtual machine resource, traffic isn't allowed to reach this resource. To learn about NSGs and how to apply them to your scenario, see Network Security Groups.
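The closed-by-default behavior can be pictured as a rule check in which traffic is dropped unless a rule explicitly allows it. The sketch below is a simplified model under stated assumptions: the `Rule` type and the `is_inbound_allowed` function are hypothetical, and rules match only on protocol and destination port. Real NSG rules are evaluated in priority order (lowest number first), as modeled here, but they also match on source, destination, and port ranges, and an NSG includes default rules that this sketch omits.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Rule:
    """Simplified security rule (hypothetical type for illustration)."""
    priority: int   # lower number is evaluated first
    protocol: str   # "TCP", "UDP", or "*" for any protocol
    port: int       # destination port
    allow: bool     # True to allow, False to deny


def is_inbound_allowed(rules: Optional[list[Rule]], protocol: str, port: int) -> bool:
    """Return True only if a matching rule explicitly allows the traffic."""
    # No NSG (or an empty rule list) means nothing has been opened.
    if not rules:
        return False
    # The first matching rule in priority order decides the outcome.
    for rule in sorted(rules, key=lambda r: r.priority):
        if rule.protocol in ("*", protocol) and rule.port == port:
            return rule.allow
    # No rule matched: traffic stays blocked.
    return False


if __name__ == "__main__":
    rules = [Rule(100, "TCP", 443, True)]
    print(is_inbound_allowed(rules, "TCP", 443))
    print(is_inbound_allowed(None, "TCP", 443))
```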

Basic load balancer is open to the internet by default.

Load balancer doesn't store customer data.

Pricing and SLA

For standard load balancer pricing information, see Load balancer pricing. Basic load balancer is offered at no charge. See SLA for load balancer. Basic load balancer has no SLA.

What's new?

Subscribe to the RSS feed and view the latest Azure Load Balancer feature updates on the Azure Updates page.

Next steps

See Create a public standard load balancer to get started with using a load balancer.

For more information on Azure Load Balancer limitations and components, see Azure Load Balancer components and Azure Load Balancer concepts.
