Create a standalone cluster running on Windows Server

You can use Azure Service Fabric to create Service Fabric clusters on any virtual machines or computers running Windows Server. This means you can deploy and run Service Fabric applications in any environment that contains a set of interconnected Windows Server computers, be it on premises or with any cloud provider. Service Fabric provides a setup package to create Service Fabric clusters called the standalone Windows Server package. Traditional Service Fabric clusters on Azure are available as a managed service, while standalone Service Fabric clusters are self-service. For more on the differences, see Comparing Azure and standalone Service Fabric clusters.

This article walks you through the steps for creating a Service Fabric standalone cluster.

Note

This standalone Windows Server package is commercially available at no cost and may be used for production deployments. This package may contain new Service Fabric features that are in "Preview". Scroll down to "Preview features included in this package." section for the list of the preview features. You can download a copy of the EULA now.

Get support for the Service Fabric for Windows Server package

- Ask the community about the Service Fabric standalone package for Windows Server in the Microsoft Q&A question page for Azure Service Fabric.

- Open a ticket for Professional Support for Service Fabric.

- You can also get support for this package as a part of Microsoft Premier Support.

- For more details, please see Azure Service Fabric support options.

- To collect logs for support purposes, run the Service Fabric Standalone Log collector.

Download the Service Fabric for Windows Server package

To create the cluster, use the Service Fabric for Windows Server package (Windows Server 2012 R2 and newer) found here:

Download Link - Service Fabric Standalone Package - Windows Server

Find details on contents of the package here.

The Service Fabric runtime package is automatically downloaded at time of cluster creation. If deploying from a machine not connected to the internet, please download the runtime package out of band from here:

Download Link - Service Fabric Runtime - Windows Server

Find Standalone Cluster Configuration samples at:

Standalone Cluster Configuration Samples

Create the cluster

Several sample cluster configuration files are installed with the setup package. ClusterConfig.Unsecure.DevCluster.json is the simplest cluster configuration: an unsecure, three-node cluster running on a single computer. Other config files describe single or multi-machine clusters secured with X.509 certificates or Windows security. You don't need to modify any of the default config settings for this tutorial, but look through the config file and get familiar with the settings. The nodes section describes the three nodes in the cluster: name, IP address, node type, fault domain, and upgrade domain. The properties section defines the security, reliability level, diagnostics collection, and types of nodes for the cluster.

The cluster created in this article is unsecure. Anyone can connect anonymously and perform management operations, so production clusters should always be secured using X.509 certificates or Windows security. Security is only configured at cluster creation time and it is not possible to enable security after the cluster is created. Update the config file enable certificate security or Windows security. Read Secure a cluster to learn more about Service Fabric cluster security.

Step 1: Create the cluster

Scenario A: Create an unsecured local development cluster

Service Fabric can be deployed to a one-machine development cluster by using the ClusterConfig.Unsecure.DevCluster.json file included in Samples.

Unpack the standalone package to your machine, copy the sample config file to the local machine, then run the CreateServiceFabricCluster.ps1 script through an administrator PowerShell session, from the standalone package folder.

.\CreateServiceFabricCluster.ps1 -ClusterConfigFilePath .\ClusterConfig.Unsecure.DevCluster.json -AcceptEULA

See the Environment Setup section at Plan and prepare your cluster deployment for troubleshooting details.

If you're finished running development scenarios, you can remove the Service Fabric cluster from the machine by referring to steps in section "Remove a cluster".

Scenario B: Create a multi-machine cluster

After you have gone through the planning and preparation steps detailed at Plan and prepare your cluster deployment, you are ready to create your production cluster using your cluster configuration file.

The cluster administrator deploying and configuring the cluster must have administrator privileges on the computer. You cannot install Service Fabric on a domain controller.

The TestConfiguration.ps1 script in the standalone package is used as a best practices analyzer to validate whether a cluster can be deployed on a given environment. Deployment preparation lists the pre-requisites and environment requirements. Run the script to verify if you can create the development cluster:

.\TestConfiguration.ps1 -ClusterConfigFilePath .\ClusterConfig.jsonYou should see output similar to the following. If the bottom field "Passed" is returned as "True", sanity checks have passed and the cluster looks to be deployable based on the input configuration.

Trace folder already exists. Traces will be written to existing trace folder: C:\temp\Microsoft.Azure.ServiceFabric.WindowsServer\DeploymentTraces Running Best Practices Analyzer... Best Practices Analyzer completed successfully. LocalAdminPrivilege : True IsJsonValid : True IsCabValid : True RequiredPortsOpen : True RemoteRegistryAvailable : True FirewallAvailable : True RpcCheckPassed : True NoConflictingInstallations : True FabricInstallable : True Passed : TrueCreate the cluster: Run the CreateServiceFabricCluster.ps1 script to deploy the Service Fabric cluster across each machine in the configuration.

.\CreateServiceFabricCluster.ps1 -ClusterConfigFilePath .\ClusterConfig.json -AcceptEULA

Note

Deployment traces are written to the VM/machine on which you ran the CreateServiceFabricCluster.ps1 PowerShell script. These can be found in the subfolder DeploymentTraces, based in the directory from which the script was run. To see if Service Fabric was deployed correctly to a machine, find the installed files in the FabricDataRoot directory, as detailed in the cluster configuration file FabricSettings section (by default c:\ProgramData\SF). As well, FabricHost.exe and Fabric.exe processes can be seen running in Task Manager.

Scenario C: Create an offline (internet-disconnected) cluster

The Service Fabric runtime package is automatically downloaded at cluster creation. When deploying a cluster to machines not connected to the internet, you will need to download the Service Fabric runtime package separately, and provide the path to it at cluster creation.

The runtime package can be downloaded separately, from another machine connected to the internet, at Download Link - Service Fabric Runtime - Windows Server. Copy the runtime package to where you are deploying the offline cluster from, and create the cluster by running CreateServiceFabricCluster.ps1 with the -FabricRuntimePackagePath parameter included, as shown in this example:

.\CreateServiceFabricCluster.ps1 -ClusterConfigFilePath .\ClusterConfig.json -FabricRuntimePackagePath .\MicrosoftAzureServiceFabric.cab

.\ClusterConfig.json and .\MicrosoftAzureServiceFabric.cab are the paths to the cluster configuration and the runtime .cab file respectively.

Step 2: Connect to the cluster

Connect to the cluster to verify the cluster is running and available. The ServiceFabric PowerShell module is installed with the runtime. You can connect to the cluster from one of the cluster nodes or from a remote computer with the Service Fabric runtime. The Connect-ServiceFabricCluster cmdlet establishes a connection to the cluster.

To connect to an unsecure cluster, run the following PowerShell command:

Connect-ServiceFabricCluster -ConnectionEndpoint <*IPAddressofaMachine*>:<Client connection end point port>

For example:

Connect-ServiceFabricCluster -ConnectionEndpoint 192.13.123.234:19000

See Connect to a secure cluster for other examples of connecting to a cluster. After connecting to the cluster, use the Get-ServiceFabricNode cmdlet to display a list of nodes in the cluster and status information for each node. HealthState should be OK for each node.

PS C:\temp\Microsoft.Azure.ServiceFabric.WindowsServer> Get-ServiceFabricNode |Format-Table

NodeDeactivationInfo NodeName IpAddressOrFQDN NodeType CodeVersion ConfigVersion NodeStatus NodeUpTime NodeDownTime HealthState

-------------------- -------- --------------- -------- ----------- ------------- ---------- ---------- ------------ -----------

vm2 localhost NodeType2 5.6.220.9494 0 Up 00:03:38 00:00:00 OK

vm1 localhost NodeType1 5.6.220.9494 0 Up 00:03:38 00:00:00 OK

vm0 localhost NodeType0 5.6.220.9494 0 Up 00:02:43 00:00:00 OK

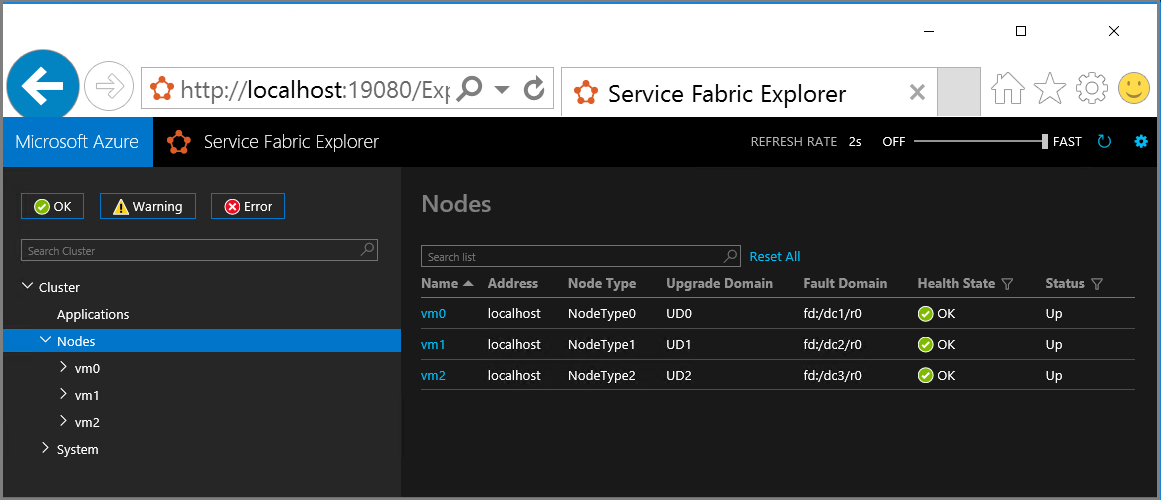

Step 3: Visualize the cluster using Service Fabric explorer

Service Fabric Explorer is a good tool for visualizing your cluster and managing applications. Service Fabric Explorer is a service that runs in the cluster, which you access using a browser by navigating to http://localhost:19080/Explorer.

The cluster dashboard provides an overview of your cluster, including a summary of application and node health. The node view shows the physical layout of the cluster. For a given node, you can inspect which applications have code deployed on that node.

Add and remove nodes

You can add or remove nodes to your standalone Service Fabric cluster as your business needs change. See Add or Remove nodes to a Service Fabric standalone cluster for detailed steps.

Remove a cluster

To remove a cluster, run the RemoveServiceFabricCluster.ps1 PowerShell script from the package folder and pass in the path to the JSON configuration file. You can optionally specify a location for the log of the deletion.

This script can be run on any machine that has administrator access to all the machines that are listed as nodes in the cluster configuration file. The machine that this script is run on does not have to be part of the cluster.

# Removes Service Fabric from each machine in the configuration

.\RemoveServiceFabricCluster.ps1 -ClusterConfigFilePath .\ClusterConfig.json -Force

# Removes Service Fabric from the current machine

.\CleanFabric.ps1

Telemetry data collected and how to opt out of it

As a default, the product collects telemetry on the Service Fabric usage to improve the product. If it is not reachable, the setup fails unless you opt out of telemetry.

- It is a best-effort upload and has no impact on the cluster functionality. The telemetry is only sent from the node that runs the failover manager primary. No other nodes send out telemetry.

- The telemetry consists of the following:

- Number of services

- Number of ServiceTypes

- Number of Applications

- Number of ApplicationUpgrades

- Number of FailoverUnits

- Number of InBuildFailoverUnits

- Number of UnhealthyFailoverUnits

- Number of Replicas

- Number of InBuildReplicas

- Number of StandByReplicas

- Number of OfflineReplicas

- CommonQueueLength

- QueryQueueLength

- FailoverUnitQueueLength

- CommitQueueLength

- Number of Nodes

- IsContextComplete: True/False

- ClusterId: This is a GUID randomly generated for each cluster

- ServiceFabricVersion

- IP address of the virtual machine or machine from which the telemetry is uploaded

To disable telemetry, add the following to properties in your cluster config: enableTelemetry: false.

Preview features included in this package

None.

Note

Starting with the new GA version of the standalone cluster for Windows Server (version 5.3.204.x), you can upgrade your cluster to future releases, manually or automatically. Refer to Upgrade a standalone Service Fabric cluster version document for details.

Next steps

- Deploy and remove applications using PowerShell

- Configuration settings for standalone Windows cluster

- Add or remove nodes to a standalone Service Fabric cluster

- Upgrade a standalone Service Fabric cluster version

- Create a standalone Service Fabric cluster with Azure VMs running Windows

- Secure a standalone cluster on Windows using Windows security

- Secure a standalone cluster on Windows using X509 certificates

Feedback

Coming soon: Throughout 2024 we will be phasing out GitHub Issues as the feedback mechanism for content and replacing it with a new feedback system. For more information see: https://aka.ms/ContentUserFeedback.

Submit and view feedback for