Tutorial: Machine learning model scoring wizard for dedicated SQL pools

Learn how to easily enrich your data in dedicated SQL pools with predictive machine learning models. The models that your data scientists create are now easily accessible to data professionals for predictive analytics. A data professional in Azure Synapse Analytics can simply select a model from the Azure Machine Learning model registry for deployment in Azure Synapse SQL pools and launch predictions to enrich the data.

In this tutorial, you'll learn how to:

- Train a predictive machine learning model and register the model in the Azure Machine Learning model registry.

- Use the SQL scoring wizard to launch predictions in a dedicated SQL pool.

If you don't have an Azure subscription, create a free account before you begin.

Prerequisites

- Azure Synapse Analytics workspace with an Azure Data Lake Storage Gen2 storage account configured as the default storage. You need to be the Storage Blob Data Contributor of the Data Lake Storage Gen2 file system that you work with.

- Dedicated SQL pool in your Azure Synapse Analytics workspace. For details, see Create a dedicated SQL pool.

- Azure Machine Learning linked service in your Azure Synapse Analytics workspace. For details, see Create an Azure Machine Learning linked service in Azure Synapse.

Sign in to the Azure portal

Sign in to the Azure portal.

Train a model in Azure Machine Learning

Before you begin, verify that your version of sklearn is 0.20.3.

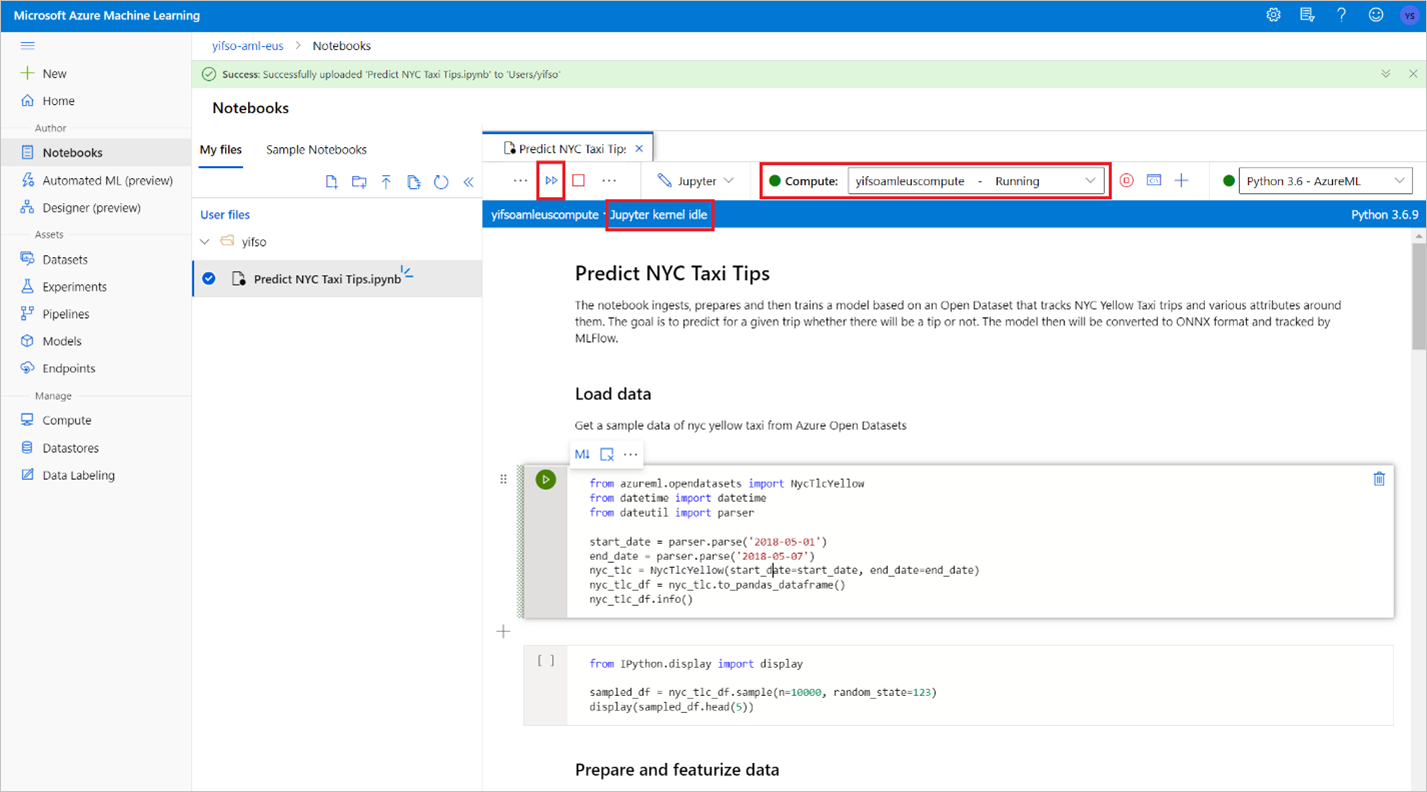

Before you run all cells in the notebook, check that the compute instance is running.

Go to your Azure Machine Learning workspace.

Download Predict NYC Taxi Tips.ipynb.

Open the Azure Machine Learning workspace in Azure Machine Learning Studio.

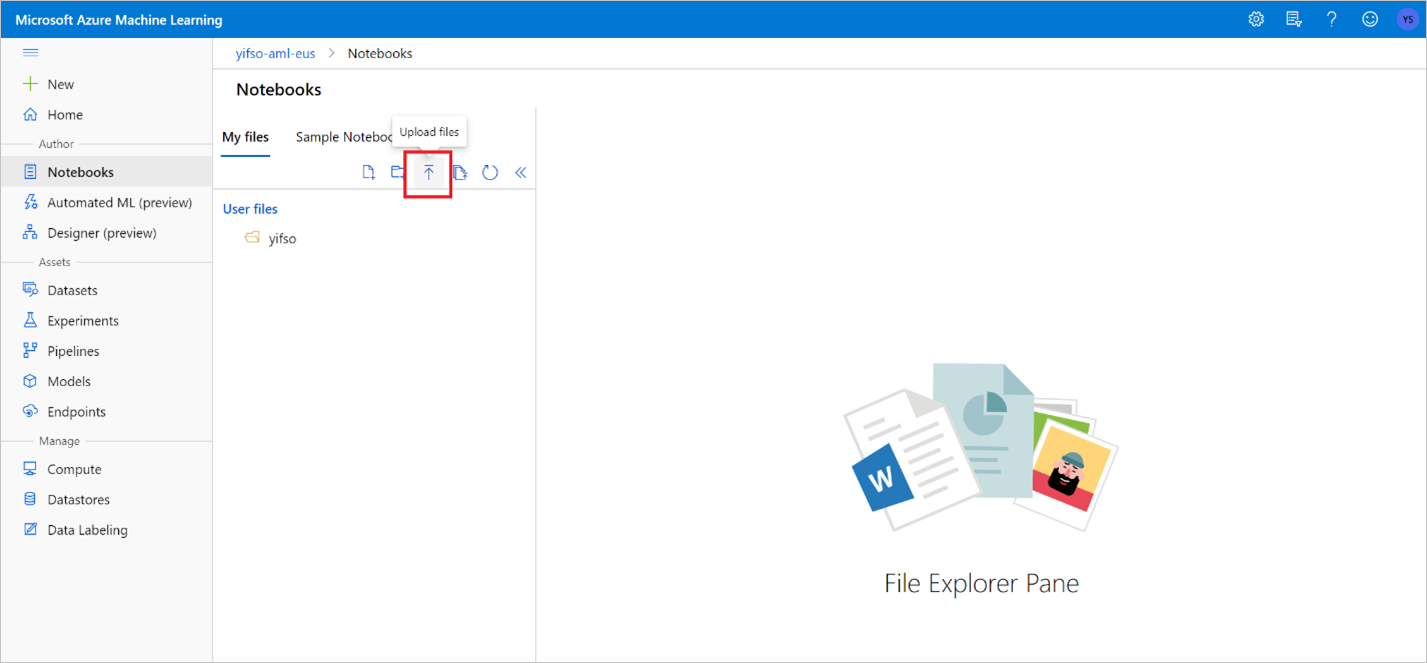

Go to Notebooks > Upload files. Then select the Predict NYC Taxi Tips.ipynb file that you downloaded and upload it.

After the notebook is uploaded and opened, select Run all cells.

One of the cells might fail and ask you to authenticate to Azure. Watch for this in the cell outputs, and authenticate in your browser by following the link and entering the code. Then re-run the notebook.

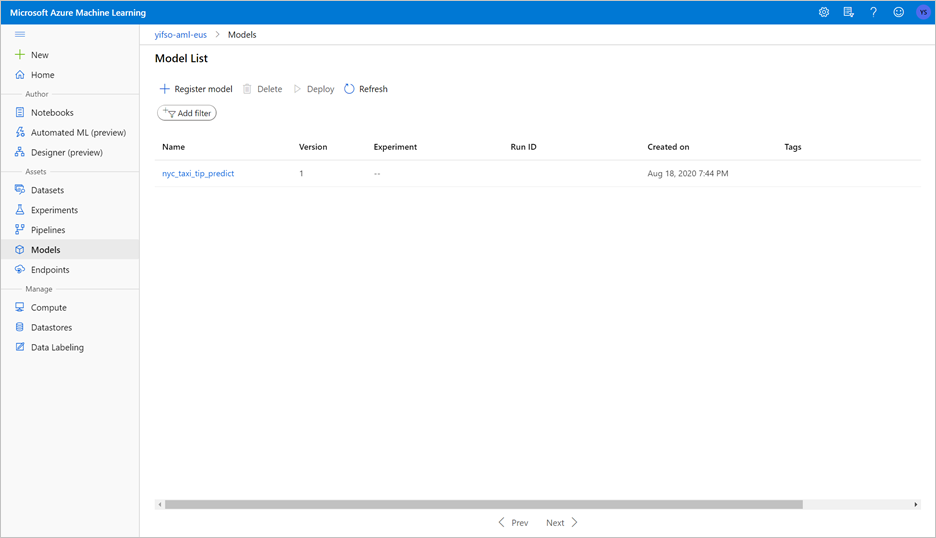

The notebook will train an ONNX model and register it with MLflow. Go to Models to check that the new model is registered properly.

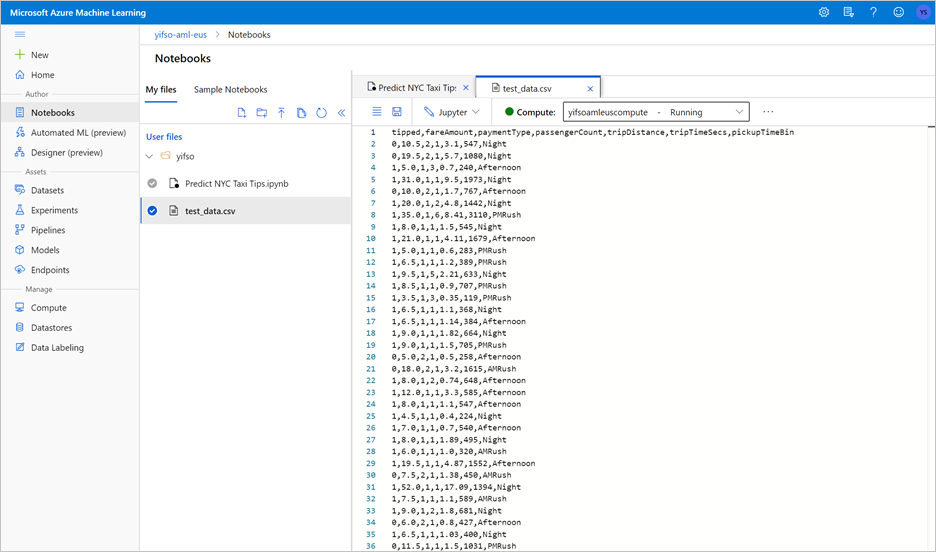

Running the notebook will also export the test data into a CSV file. Download the CSV file to your local system. Later, you'll import the CSV file into a dedicated SQL pool and use the data to test the model.

The CSV file is created in the same folder as your notebook file. Select Refresh in File Explorer if you don't see it right away.

Launch predictions with the SQL scoring wizard

Open the Azure Synapse workspace with Synapse Studio.

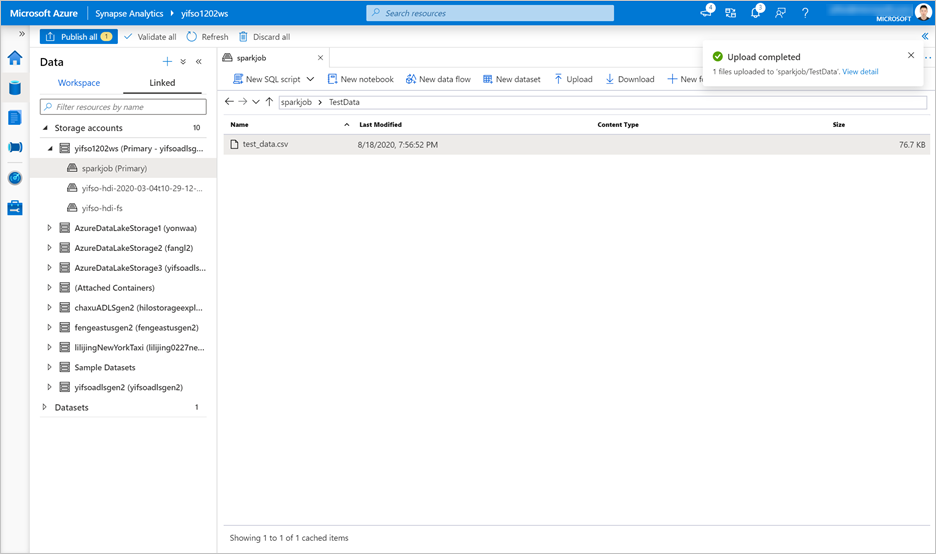

Go to Data > Linked > Storage Accounts. Upload

test_data.csvto the default storage account.

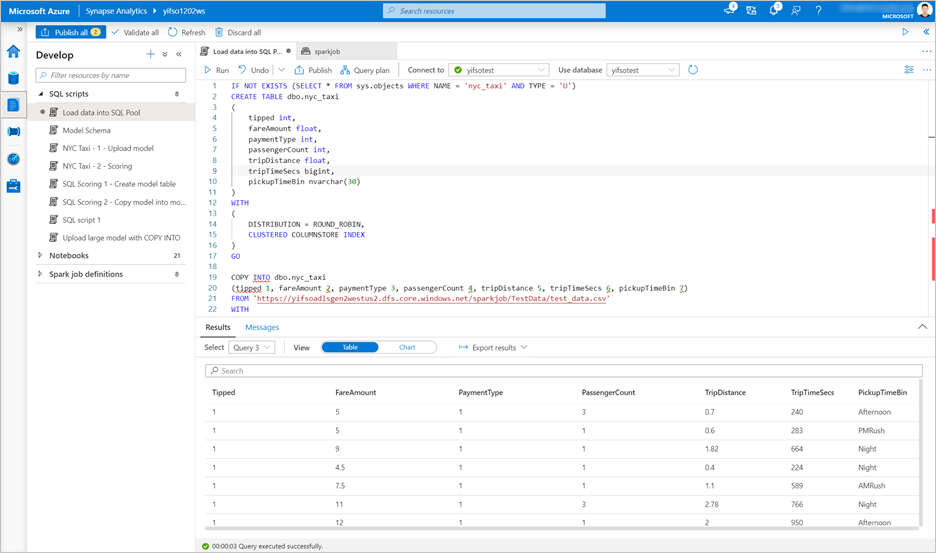

Go to Develop > SQL scripts. Create a new SQL script to load

test_data.csvinto your dedicated SQL pool.Note

Update the file URL in this script before running it.

IF NOT EXISTS (SELECT * FROM sys.objects WHERE NAME = 'nyc_taxi' AND TYPE = 'U') CREATE TABLE dbo.nyc_taxi ( tipped int, fareAmount float, paymentType int, passengerCount int, tripDistance float, tripTimeSecs bigint, pickupTimeBin nvarchar(30) ) WITH ( DISTRIBUTION = ROUND_ROBIN, CLUSTERED COLUMNSTORE INDEX ) GO COPY INTO dbo.nyc_taxi (tipped 1, fareAmount 2, paymentType 3, passengerCount 4, tripDistance 5, tripTimeSecs 6, pickupTimeBin 7) FROM '<URL to linked storage account>/test_data.csv' WITH ( FILE_TYPE = 'CSV', ROWTERMINATOR='0x0A', FIELDQUOTE = '"', FIELDTERMINATOR = ',', FIRSTROW = 2 ) GO SELECT TOP 100 * FROM nyc_taxi GO

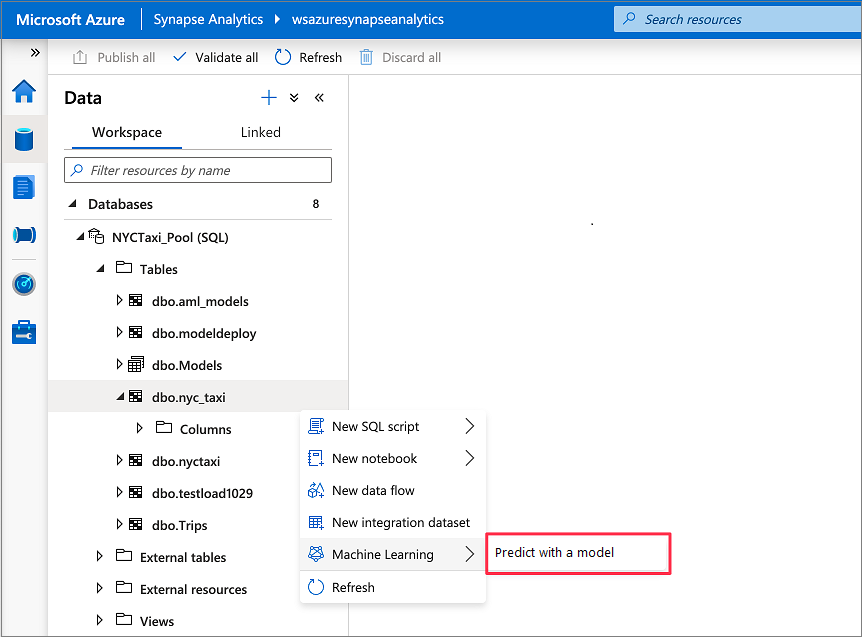

Go to Data > Workspace. Open the SQL scoring wizard by right-clicking the dedicated SQL pool table. Select Machine Learning > Predict with a model.

Note

The machine learning option does not appear unless you have a linked service created for Azure Machine Learning. (See Prerequisites at the beginning of this tutorial.)

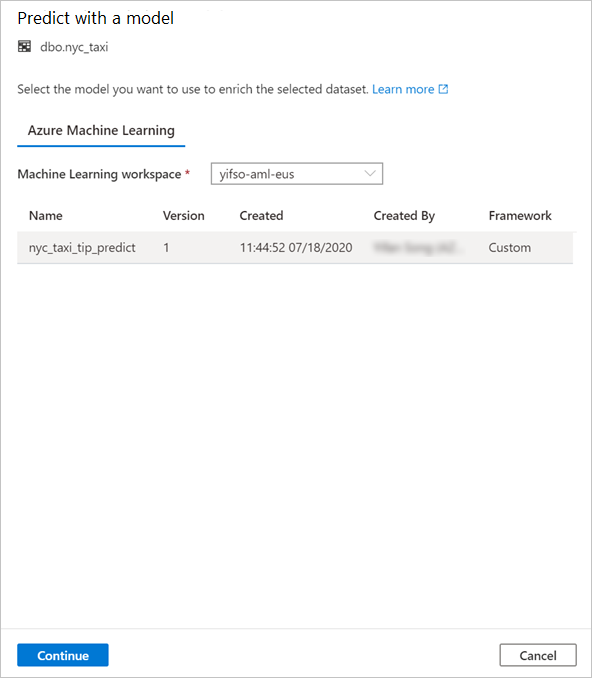

Select a linked Azure Machine Learning workspace in the drop-down box. This step loads a list of machine learning models from the model registry of the chosen Azure Machine Learning workspace. Currently, only ONNX models are supported, so this step will display only ONNX models.

Select the model that you just trained, and then select Continue.

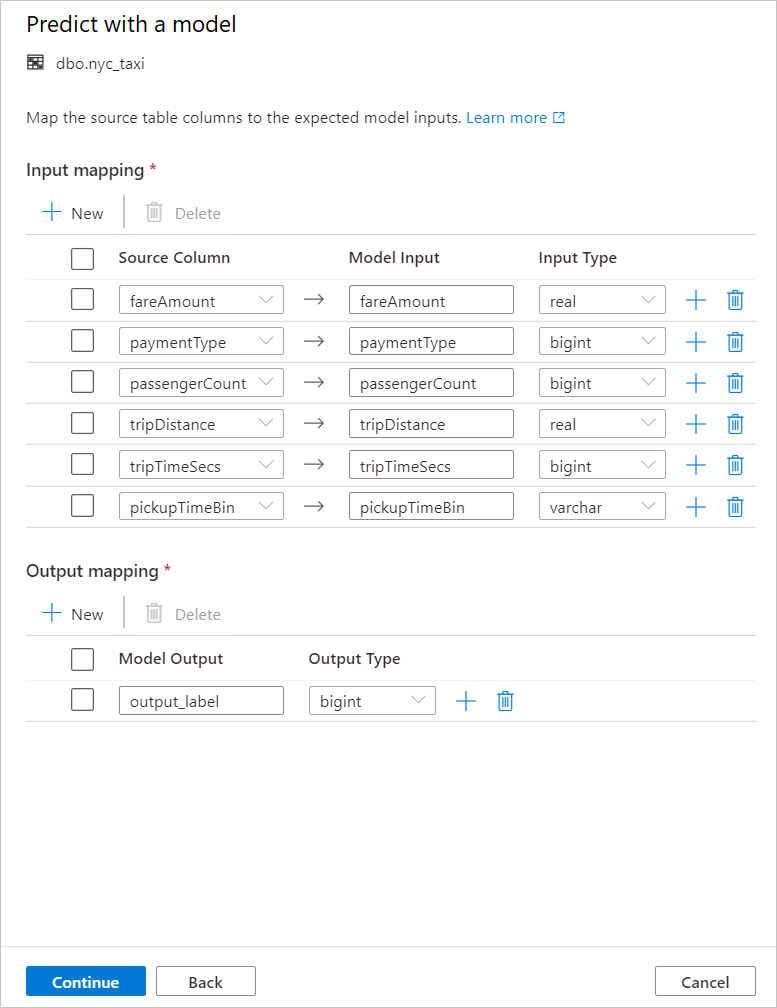

Map the table columns to the model inputs and specify the model outputs. If the model is saved in the MLflow format and the model signature is populated, the mapping will be done automatically for you by using a logic based on the similarity of names. The interface also supports manual mapping.

Select Continue.

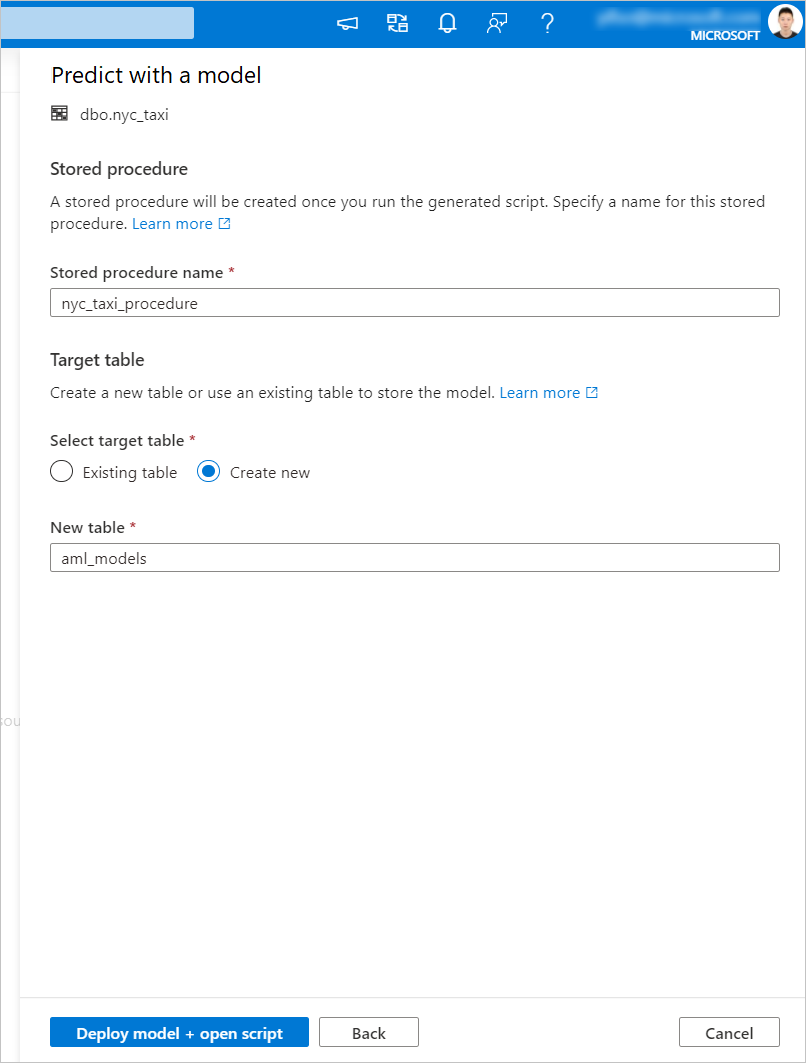

The generated T-SQL code is wrapped inside a stored procedure. This is why you need to provide a stored procedure name. The model binary, including metadata (version, description, and other information), will be physically copied from Azure Machine Learning to a dedicated SQL pool table. So you need to specify which table to save the model in.

You can choose either Existing table or Create new. When you're done, select Deploy model + open script to deploy the model and generate a T-SQL prediction script.

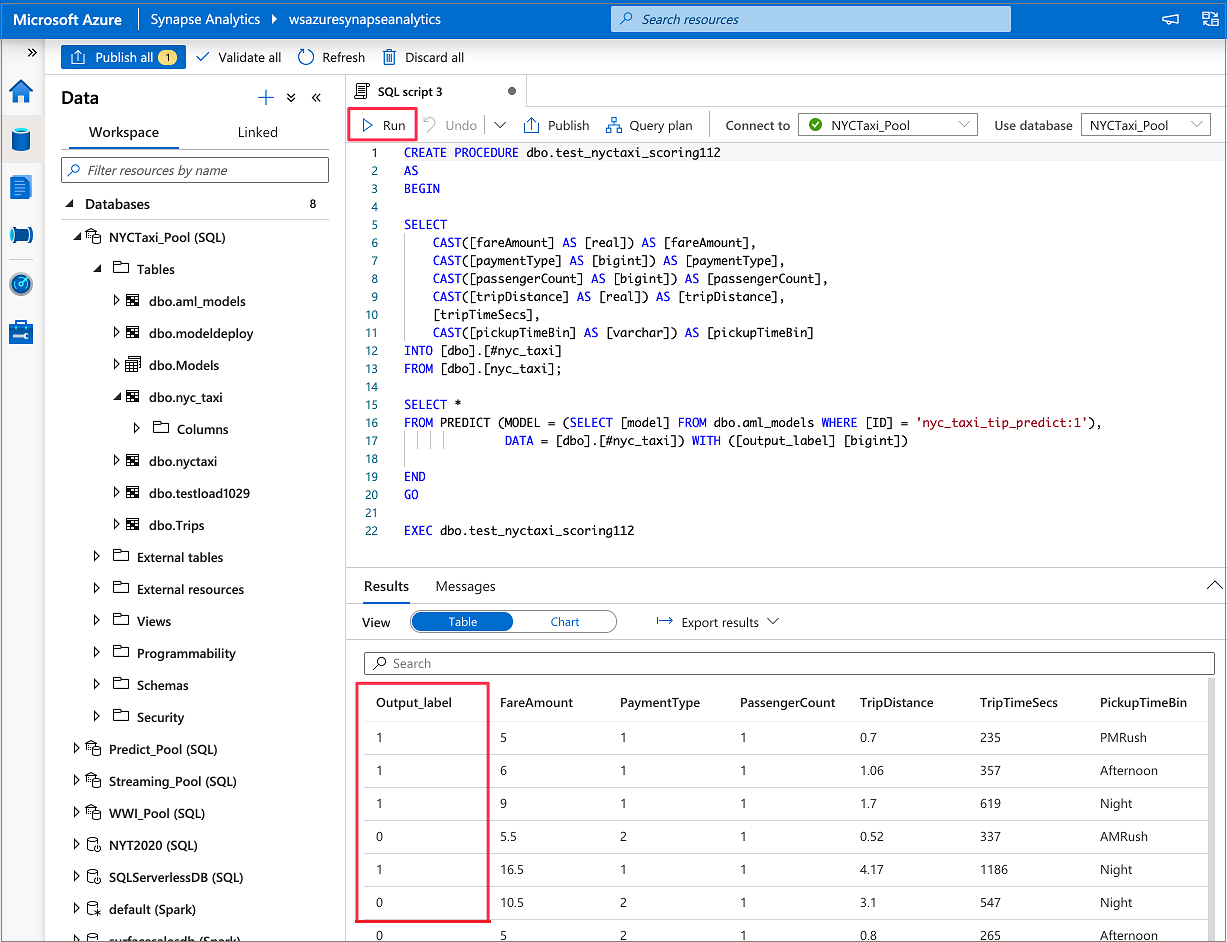

After the script is generated, select Run to execute the scoring and get predictions.

Next steps

Feedback

Coming soon: Throughout 2024 we will be phasing out GitHub Issues as the feedback mechanism for content and replacing it with a new feedback system. For more information see: https://aka.ms/ContentUserFeedback.

Submit and view feedback for