Tutorial: Load the New York Taxicab dataset

This tutorial uses the COPY statement to load New York Taxicab dataset from an Azure Blob Storage account. The tutorial uses the Azure portal and SQL Server Management Studio (SSMS) to:

- Create a user designated for loading data

- Create the tables for the sample dataset

- Use the COPY T-SQL statement to load data into your data warehouse

- View the progress of data as it is loading

If you don't have an Azure subscription, create a free Azure account before you begin.

Before you begin

Before you begin this tutorial, download and install the newest version of SQL Server Management Studio (SSMS).

This tutorial assumes you have already created a SQL dedicated pool from the following tutorial.

Create a user for loading data

The server admin account is meant to perform management operations, and is not suited for running queries on user data. Loading data is a memory-intensive operation. Memory maximums are defined according to the data warehouse units and resource class configured.

It's best to create a login and user that is dedicated for loading data. Then add the loading user to a resource class that enables an appropriate maximum memory allocation.

Connect as the server admin so you can create logins and users. Use these steps to create a login and user called LoaderRC20. Then assign the user to the staticrc20 resource class.

In SSMS, right-select master to show a drop-down menu, and choose New Query. A new query window opens.

In the query window, enter these T-SQL commands to create a login and user named LoaderRC20, substituting your own password for 'a123STRONGpassword!'.

CREATE LOGIN LoaderRC20 WITH PASSWORD = 'a123STRONGpassword!'; CREATE USER LoaderRC20 FOR LOGIN LoaderRC20;Select Execute.

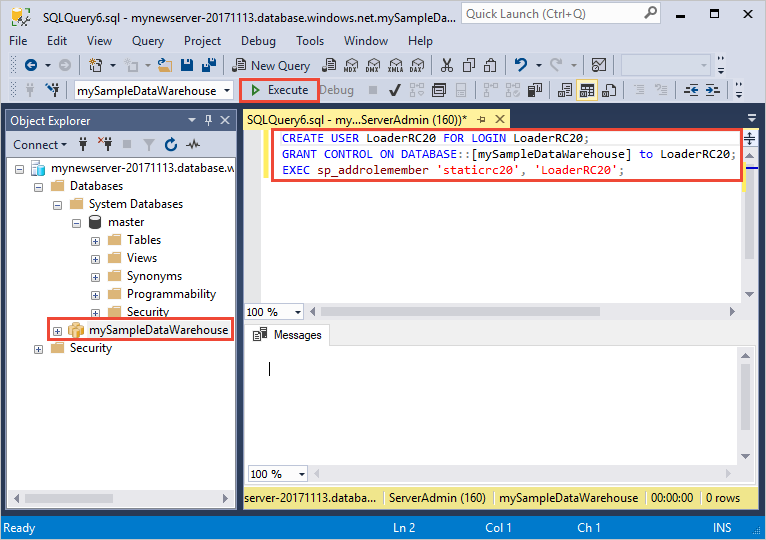

Right-click mySampleDataWarehouse, and choose New Query. A new query Window opens.

Enter the following T-SQL commands to create a database user named LoaderRC20 for the LoaderRC20 login. The second line grants the new user CONTROL permissions on the new data warehouse. These permissions are similar to making the user the owner of the database. The third line adds the new user as a member of the staticrc20 resource class.

CREATE USER LoaderRC20 FOR LOGIN LoaderRC20; GRANT CONTROL ON DATABASE::[mySampleDataWarehouse] to LoaderRC20; EXEC sp_addrolemember 'staticrc20', 'LoaderRC20';Select Execute.

Connect to the server as the loading user

The first step toward loading data is to login as LoaderRC20.

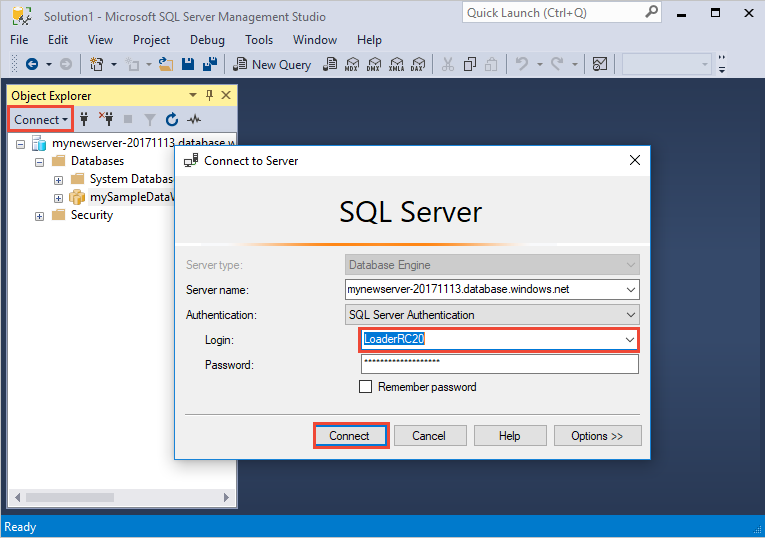

In Object Explorer, select the Connect drop down menu and select Database Engine. The Connect to Server dialog box appears.

Enter the fully qualified server name, and enter LoaderRC20 as the Login. Enter your password for LoaderRC20.

Select Connect.

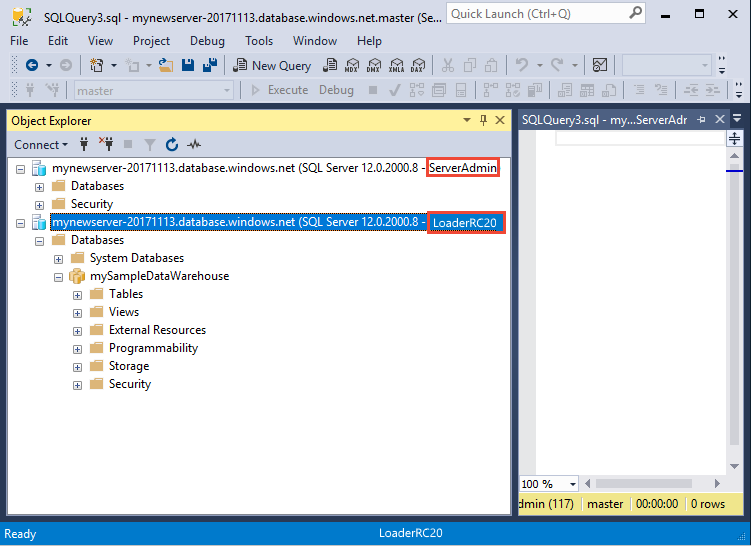

When your connection is ready, you will see two server connections in Object Explorer. One connection as ServerAdmin and one connection as LoaderRC20.

Create tables for the sample data

You are ready to begin the process of loading data into your new data warehouse. This part of the tutorial shows you how to use the COPY statement to load the New York City taxi cab dataset from an Azure Storage blob. For future reference, to learn how to get your data to Azure Blob Storage or to load it directly from your source, see the loading overview.

Run the following SQL scripts and specify information about the data you wish to load. This information includes where the data is located, the format of the contents of the data, and the table definition for the data.

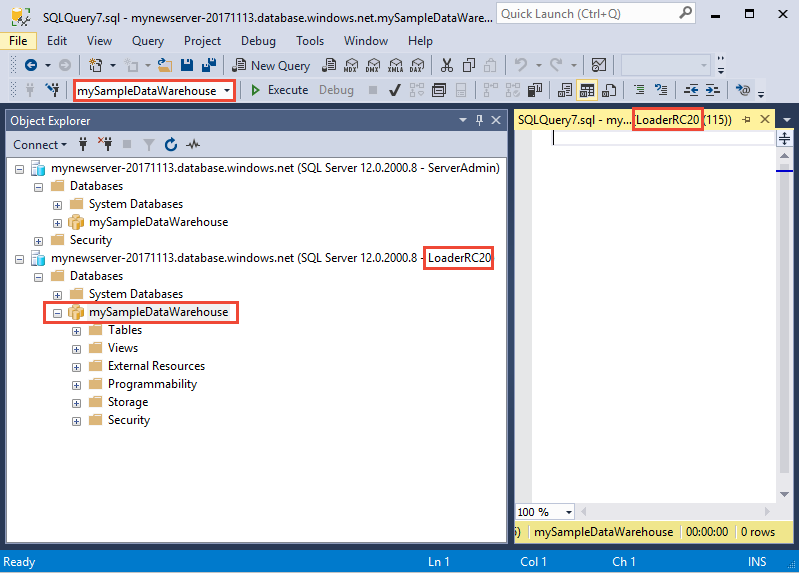

In the previous section, you logged into your data warehouse as LoaderRC20. In SSMS, right-click your LoaderRC20 connection and select New Query. A new query window appears.

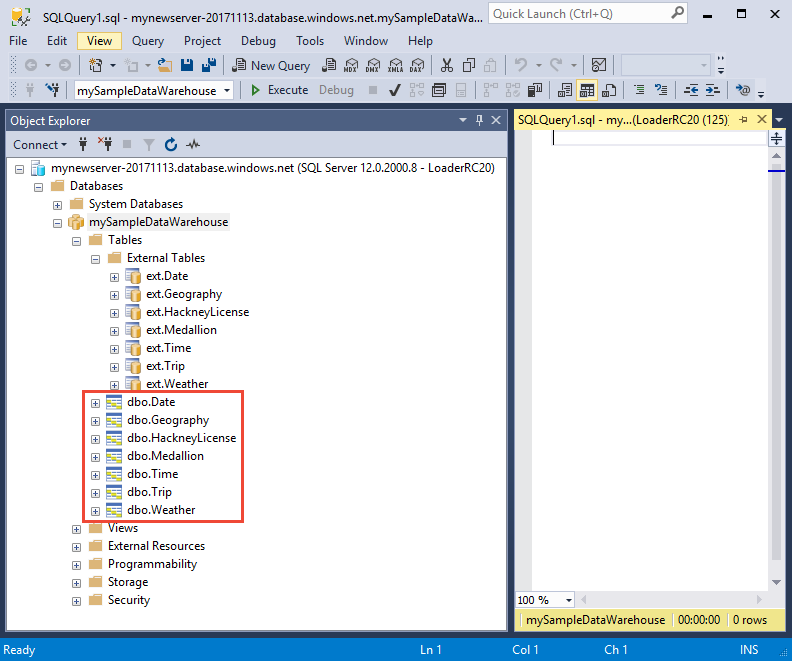

Compare your query window to the previous image. Verify your new query window is running as LoaderRC20 and performing queries on your MySampleDataWarehouse database. Use this query window to perform all of the loading steps.

Run the following T-SQL statements to create the tables:

CREATE TABLE [dbo].[Date] ( [DateID] int NOT NULL, [Date] datetime NULL, [DateBKey] char(10) COLLATE SQL_Latin1_General_CP1_CI_AS NULL, [DayOfMonth] varchar(2) COLLATE SQL_Latin1_General_CP1_CI_AS NULL, [DaySuffix] varchar(4) COLLATE SQL_Latin1_General_CP1_CI_AS NULL, [DayName] varchar(9) COLLATE SQL_Latin1_General_CP1_CI_AS NULL, [DayOfWeek] char(1) COLLATE SQL_Latin1_General_CP1_CI_AS NULL, [DayOfWeekInMonth] varchar(2) COLLATE SQL_Latin1_General_CP1_CI_AS NULL, [DayOfWeekInYear] varchar(2) COLLATE SQL_Latin1_General_CP1_CI_AS NULL, [DayOfQuarter] varchar(3) COLLATE SQL_Latin1_General_CP1_CI_AS NULL, [DayOfYear] varchar(3) COLLATE SQL_Latin1_General_CP1_CI_AS NULL, [WeekOfMonth] varchar(1) COLLATE SQL_Latin1_General_CP1_CI_AS NULL, [WeekOfQuarter] varchar(2) COLLATE SQL_Latin1_General_CP1_CI_AS NULL, [WeekOfYear] varchar(2) COLLATE SQL_Latin1_General_CP1_CI_AS NULL, [Month] varchar(2) COLLATE SQL_Latin1_General_CP1_CI_AS NULL, [MonthName] varchar(9) COLLATE SQL_Latin1_General_CP1_CI_AS NULL, [MonthOfQuarter] varchar(2) COLLATE SQL_Latin1_General_CP1_CI_AS NULL, [Quarter] char(1) COLLATE SQL_Latin1_General_CP1_CI_AS NULL, [QuarterName] varchar(9) COLLATE SQL_Latin1_General_CP1_CI_AS NULL, [Year] char(4) COLLATE SQL_Latin1_General_CP1_CI_AS NULL, [YearName] char(7) COLLATE SQL_Latin1_General_CP1_CI_AS NULL, [MonthYear] char(10) COLLATE SQL_Latin1_General_CP1_CI_AS NULL, [MMYYYY] char(6) COLLATE SQL_Latin1_General_CP1_CI_AS NULL, [FirstDayOfMonth] date NULL, [LastDayOfMonth] date NULL, [FirstDayOfQuarter] date NULL, [LastDayOfQuarter] date NULL, [FirstDayOfYear] date NULL, [LastDayOfYear] date NULL, [IsHolidayUSA] bit NULL, [IsWeekday] bit NULL, [HolidayUSA] varchar(50) COLLATE SQL_Latin1_General_CP1_CI_AS NULL ) WITH ( DISTRIBUTION = ROUND_ROBIN, CLUSTERED COLUMNSTORE INDEX ); CREATE TABLE [dbo].[Geography] ( [GeographyID] int NOT NULL, [ZipCodeBKey] varchar(10) COLLATE SQL_Latin1_General_CP1_CI_AS NOT NULL, [County] varchar(50) COLLATE SQL_Latin1_General_CP1_CI_AS NULL, [City] varchar(50) COLLATE SQL_Latin1_General_CP1_CI_AS NULL, [State] varchar(50) COLLATE SQL_Latin1_General_CP1_CI_AS NULL, [Country] varchar(50) COLLATE SQL_Latin1_General_CP1_CI_AS NULL, [ZipCode] varchar(50) COLLATE SQL_Latin1_General_CP1_CI_AS NULL ) WITH ( DISTRIBUTION = ROUND_ROBIN, CLUSTERED COLUMNSTORE INDEX ); CREATE TABLE [dbo].[HackneyLicense] ( [HackneyLicenseID] int NOT NULL, [HackneyLicenseBKey] varchar(50) COLLATE SQL_Latin1_General_CP1_CI_AS NOT NULL, [HackneyLicenseCode] varchar(50) COLLATE SQL_Latin1_General_CP1_CI_AS NULL ) WITH ( DISTRIBUTION = ROUND_ROBIN, CLUSTERED COLUMNSTORE INDEX ); CREATE TABLE [dbo].[Medallion] ( [MedallionID] int NOT NULL, [MedallionBKey] varchar(50) COLLATE SQL_Latin1_General_CP1_CI_AS NOT NULL, [MedallionCode] varchar(50) COLLATE SQL_Latin1_General_CP1_CI_AS NULL ) WITH ( DISTRIBUTION = ROUND_ROBIN, CLUSTERED COLUMNSTORE INDEX ); CREATE TABLE [dbo].[Time] ( [TimeID] int NOT NULL, [TimeBKey] varchar(8) COLLATE SQL_Latin1_General_CP1_CI_AS NOT NULL, [HourNumber] tinyint NOT NULL, [MinuteNumber] tinyint NOT NULL, [SecondNumber] tinyint NOT NULL, [TimeInSecond] int NOT NULL, [HourlyBucket] varchar(15) COLLATE SQL_Latin1_General_CP1_CI_AS NOT NULL, [DayTimeBucketGroupKey] int NOT NULL, [DayTimeBucket] varchar(100) COLLATE SQL_Latin1_General_CP1_CI_AS NOT NULL ) WITH ( DISTRIBUTION = ROUND_ROBIN, CLUSTERED COLUMNSTORE INDEX ); CREATE TABLE [dbo].[Trip] ( [DateID] int NOT NULL, [MedallionID] int NOT NULL, [HackneyLicenseID] int NOT NULL, [PickupTimeID] int NOT NULL, [DropoffTimeID] int NOT NULL, [PickupGeographyID] int NULL, [DropoffGeographyID] int NULL, [PickupLatitude] float NULL, [PickupLongitude] float NULL, [PickupLatLong] varchar(50) COLLATE SQL_Latin1_General_CP1_CI_AS NULL, [DropoffLatitude] float NULL, [DropoffLongitude] float NULL, [DropoffLatLong] varchar(50) COLLATE SQL_Latin1_General_CP1_CI_AS NULL, [PassengerCount] int NULL, [TripDurationSeconds] int NULL, [TripDistanceMiles] float NULL, [PaymentType] varchar(50) COLLATE SQL_Latin1_General_CP1_CI_AS NULL, [FareAmount] money NULL, [SurchargeAmount] money NULL, [TaxAmount] money NULL, [TipAmount] money NULL, [TollsAmount] money NULL, [TotalAmount] money NULL ) WITH ( DISTRIBUTION = ROUND_ROBIN, CLUSTERED COLUMNSTORE INDEX ); CREATE TABLE [dbo].[Weather] ( [DateID] int NOT NULL, [GeographyID] int NOT NULL, [PrecipitationInches] float NOT NULL, [AvgTemperatureFahrenheit] float NOT NULL ) WITH ( DISTRIBUTION = ROUND_ROBIN, CLUSTERED COLUMNSTORE INDEX );

Load the data into your data warehouse

This section uses the COPY statement to load the sample data from Azure Storage Blob.

Note

This tutorial loads the data directly into the final table. You would typically load into a staging table for your production workloads. While data is in the staging table you can perform any necessary transformations.

Run the following statements to load the data:

COPY INTO [dbo].[Date] FROM 'https://nytaxiblob.blob.core.windows.net/2013/Date' WITH ( FILE_TYPE = 'CSV', FIELDTERMINATOR = ',', FIELDQUOTE = '' ) OPTION (LABEL = 'COPY : Load [dbo].[Date] - Taxi dataset'); COPY INTO [dbo].[Geography] FROM 'https://nytaxiblob.blob.core.windows.net/2013/Geography' WITH ( FILE_TYPE = 'CSV', FIELDTERMINATOR = ',', FIELDQUOTE = '' ) OPTION (LABEL = 'COPY : Load [dbo].[Geography] - Taxi dataset'); COPY INTO [dbo].[HackneyLicense] FROM 'https://nytaxiblob.blob.core.windows.net/2013/HackneyLicense' WITH ( FILE_TYPE = 'CSV', FIELDTERMINATOR = ',', FIELDQUOTE = '' ) OPTION (LABEL = 'COPY : Load [dbo].[HackneyLicense] - Taxi dataset'); COPY INTO [dbo].[Medallion] FROM 'https://nytaxiblob.blob.core.windows.net/2013/Medallion' WITH ( FILE_TYPE = 'CSV', FIELDTERMINATOR = ',', FIELDQUOTE = '' ) OPTION (LABEL = 'COPY : Load [dbo].[Medallion] - Taxi dataset'); COPY INTO [dbo].[Time] FROM 'https://nytaxiblob.blob.core.windows.net/2013/Time' WITH ( FILE_TYPE = 'CSV', FIELDTERMINATOR = ',', FIELDQUOTE = '' ) OPTION (LABEL = 'COPY : Load [dbo].[Time] - Taxi dataset'); COPY INTO [dbo].[Weather] FROM 'https://nytaxiblob.blob.core.windows.net/2013/Weather' WITH ( FILE_TYPE = 'CSV', FIELDTERMINATOR = ',', FIELDQUOTE = '', ROWTERMINATOR='0X0A' ) OPTION (LABEL = 'COPY : Load [dbo].[Weather] - Taxi dataset'); COPY INTO [dbo].[Trip] FROM 'https://nytaxiblob.blob.core.windows.net/2013/Trip2013' WITH ( FILE_TYPE = 'CSV', FIELDTERMINATOR = '|', FIELDQUOTE = '', ROWTERMINATOR='0X0A', COMPRESSION = 'GZIP' ) OPTION (LABEL = 'COPY : Load [dbo].[Trip] - Taxi dataset');View your data as it loads. You're loading several GBs of data and compressing it into highly performant clustered columnstore indexes. Run the following query that uses a dynamic management views (DMVs) to show the status of the load.

SELECT r.[request_id] , r.[status] , r.resource_class , r.command , sum(bytes_processed) AS bytes_processed , sum(rows_processed) AS rows_processed FROM sys.dm_pdw_exec_requests r JOIN sys.dm_pdw_dms_workers w ON r.[request_id] = w.request_id WHERE [label] = 'COPY : Load [dbo].[Date] - Taxi dataset' OR [label] = 'COPY : Load [dbo].[Geography] - Taxi dataset' OR [label] = 'COPY : Load [dbo].[HackneyLicense] - Taxi dataset' OR [label] = 'COPY : Load [dbo].[Medallion] - Taxi dataset' OR [label] = 'COPY : Load [dbo].[Time] - Taxi dataset' OR [label] = 'COPY : Load [dbo].[Weather] - Taxi dataset' OR [label] = 'COPY : Load [dbo].[Trip] - Taxi dataset' and session_id <> session_id() and type = 'WRITER' GROUP BY r.[request_id] , r.[status] , r.resource_class , r.command;View all system queries.

SELECT * FROM sys.dm_pdw_exec_requests;Enjoy seeing your data nicely loaded into your data warehouse.

Clean up resources

You are being charged for compute resources and data that you loaded into your data warehouse. These are billed separately.

- If you want to keep the data in storage, you can pause compute when you aren't using the data warehouse. By pausing compute you will only be charge for data storage and you can resume the compute whenever you are ready to work with the data.

- If you want to remove future charges, you can delete the data warehouse.

Follow these steps to clean up resources as you desire.

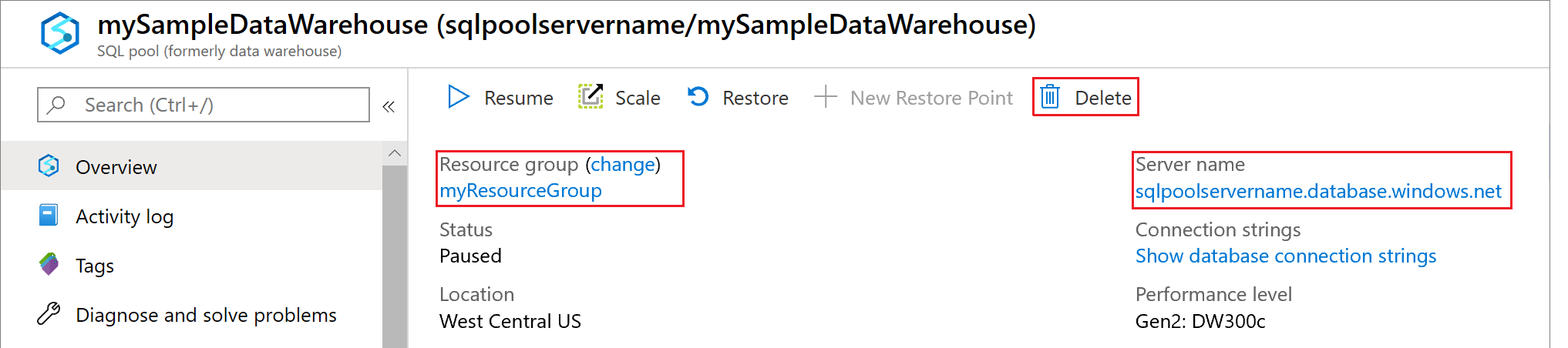

Log in to the Azure portal, select your data warehouse.

To pause compute, select the Pause button. When the data warehouse is paused, you will see a Start button. To resume compute, select Start.

To remove the data warehouse so you won't be charged for compute or storage, select Delete.

To remove the server you created, select mynewserver-20180430.database.windows.net in the previous image, and then select Delete. Be careful with this as deleting the server will delete all databases assigned to the server.

To remove the resource group, select myResourceGroup, and then select Delete resource group.

Next steps

In this tutorial, you learned how to create a data warehouse and create a user for loading data. You used the simple COPY statement to load data into your data warehouse.

You did these things:

- Created a data warehouse in the Azure portal

- Set up a server-level firewall rule in the Azure portal

- Connected to the data warehouse with SSMS

- Created a user designated for loading data

- Created the tables for the sample data

- Used the COPY T-SQL statement to load data into your data warehouse

- Viewed the progress of data as it is loading

Advance to the development overview to learn how to migrate an existing database to Azure Synapse Analytics:

For more loading examples and references, view the following documentation:

Feedback

Coming soon: Throughout 2024 we will be phasing out GitHub Issues as the feedback mechanism for content and replacing it with a new feedback system. For more information see: https://aka.ms/ContentUserFeedback.

Submit and view feedback for