Running HPC SOA Services in Hybrid HPC Pack Cluster

This article describes how to deploy and run HPC SOA services in a hybrid HPC Pack cluster that contains both on-premises compute nodes and Azure IaaS compute nodes.

Prerequisites

An Azure Subscription.

- To create an Azure subscription, go to the Azure site.

- To access an existing subscription, go to the Azure portal.

A Site-to-Site VPN or ExpressRoute connection from your on-premises network to the Azure virtual network.

Deploy a Hybrid HPC Pack Cluster

To deploy a hybrid HPC Pack cluster, you can use the IaaS Burst feature, which is available starting with HPC Pack 2016 Update 1. See Burst to Azure IaaS VM from an HPC Pack Cluster for details.

Deploy HPC SOA services in a Hybrid HPC Pack Cluster

IaaS compute nodes are domain joined

If the IaaS compute nodes are domain joined, the cluster behaves the same as an on-premises HPC Pack cluster. See our tutorial series for more information on HPC SOA.

IaaS compute nodes are non-domain joined

If the IaaS compute nodes are not domain joined, deploying a new HPC SOA service works slightly differently.

Deploy a new HPC SOA service

Deploy service binaries

When deploying a new HPC SOA service to a hybrid HPC Pack cluster that contains non-domain joined IaaS compute nodes, the service binaries need to be placed in a local folder on every compute node (e.g. C:\Services\Sample.dll).

Change settings in service registration file

Change the assembly path to the local file on every compute node

    <microsoft.Hpc.Session.ServiceRegistration>
      <!--Change assembly path below-->
      <service assembly="C:\Services\Sample.dll" />
    </microsoft.Hpc.Session.ServiceRegistration>

As domain identity is not available on non-domain joined compute nodes, the security mode between the broker node and the compute nodes must be set to `None`. Add the following section to the service registration file, or change the existing one:

    <system.serviceModel>
      <bindings>
        <netTcpBinding>
          <!--binding used by broker's backend-->
          <binding name="Microsoft.Hpc.BackEndBinding" maxConnections="1000">
            <!--for non domain joined compute nodes, the security mode should be None-->
            <security mode="None"/>
          </binding>
        </netTcpBinding>
      </bindings>
    </system.serviceModel>
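Because a wrong security mode only shows up once the broker tries to reach a compute node, it can help to check the registration file before copying it out. The following is a minimal sketch, not part of HPC Pack: the function name `backend_security_is_none` and the sample content are hypothetical, and wrapping the sections in a `<configuration>` root element is an assumption about the surrounding file.

```python
import xml.etree.ElementTree as ET

# Binding name taken from the service registration section shown in this article.
BACKEND_BINDING = "Microsoft.Hpc.BackEndBinding"


def backend_security_is_none(config_xml: str) -> bool:
    """Return True if the broker back-end binding sets security mode="None"."""
    root = ET.fromstring(config_xml)
    for binding in root.iter("binding"):
        if binding.get("name") == BACKEND_BINDING:
            security = binding.find("security")
            return security is not None and security.get("mode") == "None"
    return False  # the back-end binding is not declared at all


# Hypothetical file content; the <configuration> root element is an assumption.
SAMPLE = r"""
<configuration>
  <microsoft.Hpc.Session.ServiceRegistration>
    <service assembly="C:\Services\Sample.dll" />
  </microsoft.Hpc.Session.ServiceRegistration>
  <system.serviceModel>
    <bindings>
      <netTcpBinding>
        <binding name="Microsoft.Hpc.BackEndBinding" maxConnections="1000">
          <security mode="None"/>
        </binding>
      </netTcpBinding>
    </bindings>
  </system.serviceModel>
</configuration>
"""

print(backend_security_is_none(SAMPLE))
```

The check only reads the file; it does not modify the registration or contact the cluster.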

Deploy service registration file

There are two ways to deploy the service registration file of a new SOA service.

Deploy the service registration file to a local folder on every cluster node

To deploy the service registration file this way, create a local service registration folder on every node (e.g. C:\ServiceRegistration) and add it to the HPC Pack cluster's `CCP_SERVICEREGISTRATION_PATH` environment variable.

The cluster environment can be fetched on the head node using the command `cluscfg listenvs`, which gives output like:

    C:\Windows\system32>cluscfg listenvs
    CCP_SERVICEREGISTRATION_PATH=CCP_REGISTRATION_STORE;\\<headnode>\HpcServiceRegistration
    HPC_RUNTIMESHARE=\\<headnode>\Runtime$
    CCP_MPI_NETMASK=10.0.0.0/255.255.252.0
    CCP_CLUSTER_NAME=<headnode>

To configure the environment, use the command `cluscfg setenvs`, like:

    cluscfg setenvs CCP_SERVICEREGISTRATION_PATH=CCP_REGISTRATION_STORE;\\<headnode>\HpcServiceRegistration;C:\ServiceRegistration

Then copy the service registration file to the newly created local folder (e.g. C:\ServiceRegistration\Sample.config).
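Since `cluscfg setenvs` replaces the whole value, the existing entries have to be carried over when the local folder is appended. The sketch below shows one way to build the command text from captured `cluscfg listenvs` output. The helper names `parse_listenvs` and `build_setenvs_command` are hypothetical, and the code only produces a string; it does not run `cluscfg`.

```python
def parse_listenvs(output: str) -> dict:
    """Collect NAME=VALUE pairs from captured `cluscfg listenvs` output."""
    env = {}
    for line in output.splitlines():
        name, sep, value = line.strip().partition("=")
        if sep and name:  # skips the prompt line and blank lines
            env[name] = value
    return env


def build_setenvs_command(output: str, new_folder: str) -> str:
    """Append new_folder to CCP_SERVICEREGISTRATION_PATH, keeping existing entries."""
    current = parse_listenvs(output).get("CCP_SERVICEREGISTRATION_PATH", "")
    entries = [e for e in current.split(";") if e]
    # Windows paths are case-insensitive, so compare without regard to case.
    if new_folder.lower() not in (e.lower() for e in entries):
        entries.append(new_folder)
    return "cluscfg setenvs CCP_SERVICEREGISTRATION_PATH=" + ";".join(entries)


# Sample output in the format shown in this article; <headnode> is a placeholder.
LISTENVS_OUTPUT = r"""C:\Windows\system32>cluscfg listenvs
CCP_SERVICEREGISTRATION_PATH=CCP_REGISTRATION_STORE;\\<headnode>\HpcServiceRegistration
HPC_RUNTIMESHARE=\\<headnode>\Runtime$
CCP_CLUSTER_NAME=<headnode>
"""

print(build_setenvs_command(LISTENVS_OUTPUT, r"C:\ServiceRegistration"))
```

Calling the helper again with a folder that is already listed returns the same value, so the entry is not duplicated.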

More about `cluscfg` can be found in the cluscfg documentation.

More about creating and deploying a new SOA service can be found in HPC Pack SOA Tutorial I – Write your first SOA service and client.

Deploy the service registration file using the HA configuration file feature

Starting with HPC Pack Update 1, the HA configuration file feature helps deploy service registration files in HA clusters and in clusters with non-domain joined compute nodes.

To use this feature, please ensure

CCP_REGISTRATION_STOREis set inCCP_SERVICEREGISTRATION_PATHcluster environment. Check the result ofcluscfg listenvs, it should containsCCP_SERVICEREGISTRATION_PATH=CCP_REGISTRATION_STORE;\\<headnode>\HpcServiceRegistrationIf the value

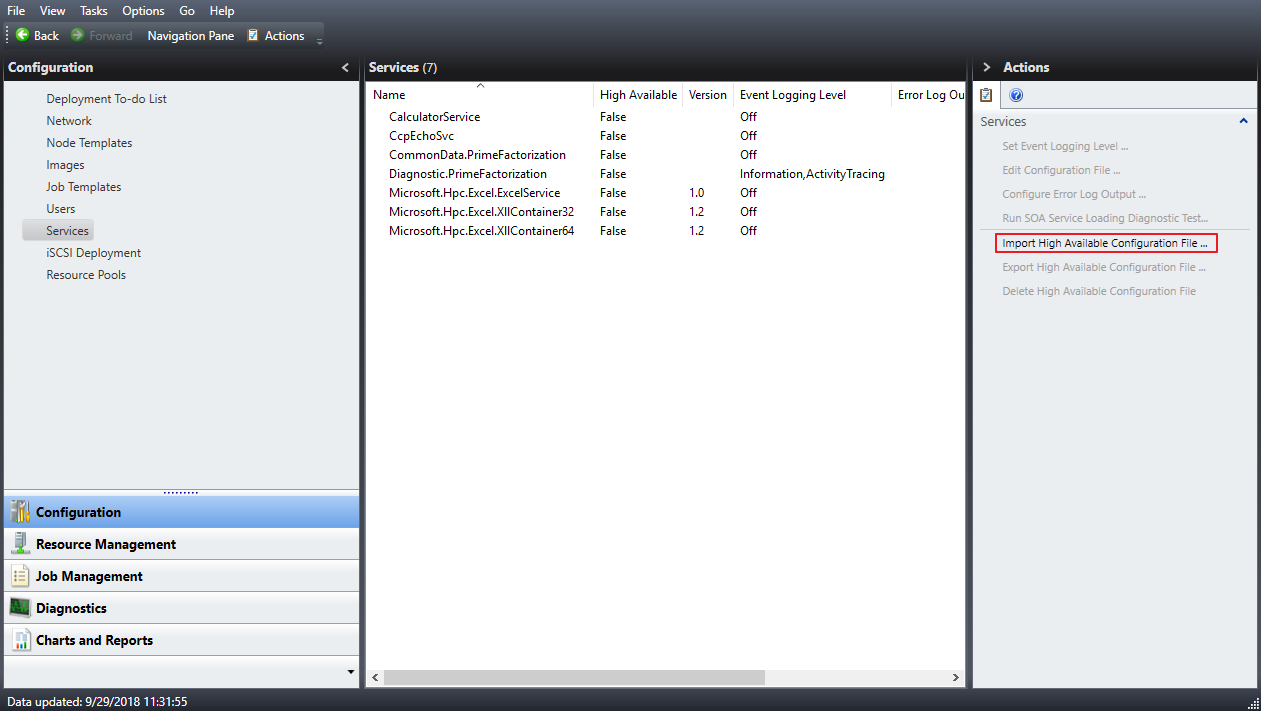

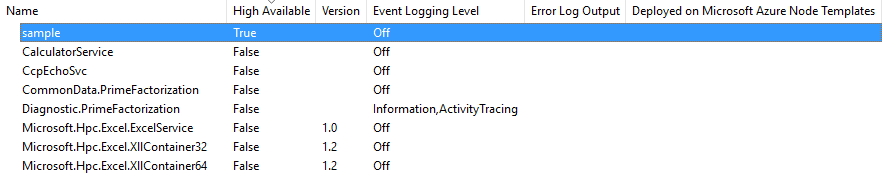

CCP_REGISTRATION_STOREis missing, please set it use commandcluscfg setenvslikecluscfg setenvs CCP_SERVICEREGISTRATION_PATH=CCP_REGISTRATION_STORE;\\<headnode>\HpcServiceRegistrationTo add a HA configuration file, in HPC Pack 2016 Cluster Manager, go to Configuration -> Services panel, click Import High Available Configuration File... in Action panel. Then select the HA configuration file to import.

Once imported, a new service entry will show in Services panel with High Available set to True.
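The `CCP_REGISTRATION_STORE` check described in this section can also be expressed as a small helper that takes the current `CCP_SERVICEREGISTRATION_PATH` value and returns a value that contains the entry. This is a sketch only: the function name `ensure_registration_store` is hypothetical, the exact-match comparison is an assumption, and placing the entry first simply mirrors the example value in this article.

```python
STORE_ENTRY = "CCP_REGISTRATION_STORE"


def ensure_registration_store(path_value: str) -> str:
    """Return a CCP_SERVICEREGISTRATION_PATH value that includes CCP_REGISTRATION_STORE."""
    entries = [e.strip() for e in path_value.split(";") if e.strip()]
    if STORE_ENTRY not in entries:
        # Put the store first, as in the example value shown in this article.
        entries.insert(0, STORE_ENTRY)
    return ";".join(entries)


# <headnode> is a placeholder, as elsewhere in this article.
fixed = ensure_registration_store(r"\\<headnode>\HpcServiceRegistration")
print("cluscfg setenvs CCP_SERVICEREGISTRATION_PATH=" + fixed)
```

A value that already lists `CCP_REGISTRATION_STORE` is returned unchanged, so no `cluscfg setenvs` call is needed in that case.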

Using Common Data

Starting with HPC Pack 2016 Update 2, non-domain joined compute nodes can read SOA Common Data directly from Azure Blob Storage without accessing the HPC Runtime share, which is usually located on the head node. This enables SOA workloads in a hybrid HPC Pack cluster with compute resources provisioned in Azure.

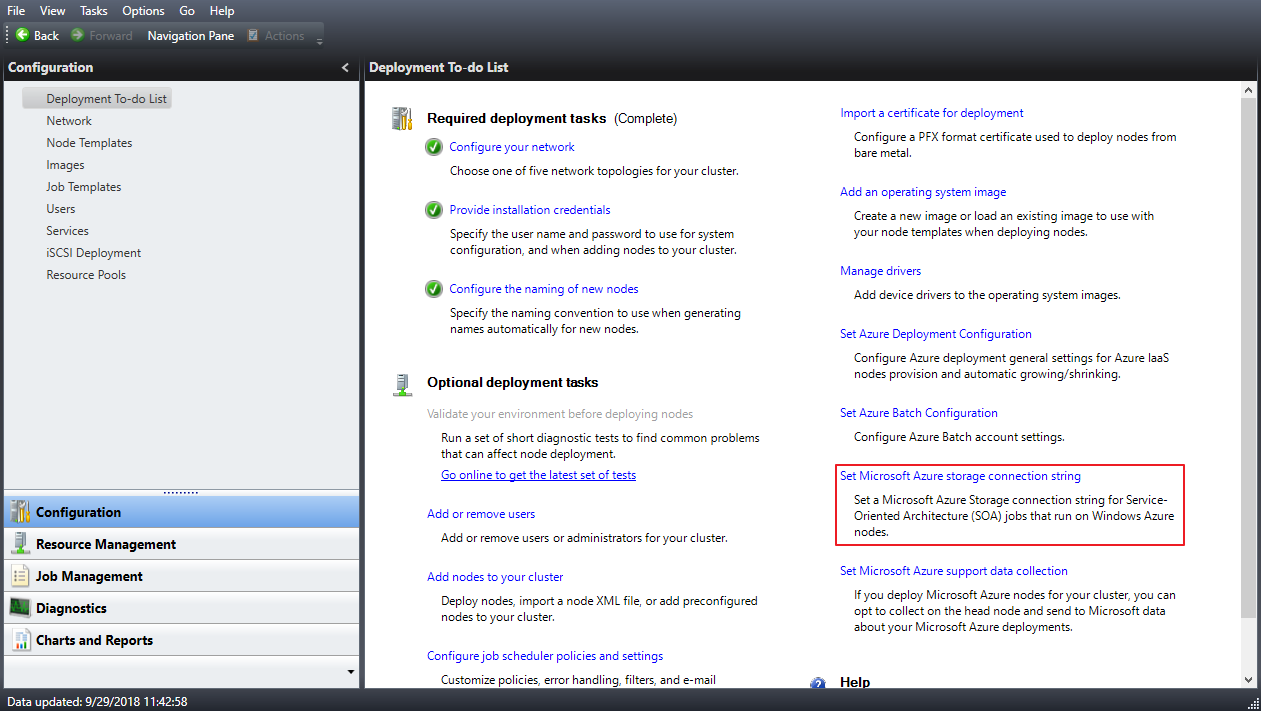

Configure HPC Pack Cluster

To enable this feature, an Azure Storage connection string needs to be configured in the HPC cluster. In HPC Pack 2016 Cluster Manager, find Set Microsoft Azure storage connection string in Configuration -> Deployment To-do List, and fill in the correct connection string.

Change the SOA service code

If your service already calls `ServiceContext.GetDataClient` to obtain a reference to `DataClient`, no code change is needed.

If instead your service explicitly uses `DataClient.Open`, you need to change the call to a new API when the service runs on a non-domain joined node. Here is a sample:

    // Get Job ID from environment variable
    string jobIdEnvVar = Environment.GetEnvironmentVariable(Microsoft.Hpc.Scheduler.Session.Internal.Constant.JobIDEnvVar);
    if (!int.TryParse(jobIdEnvVar, out int jobId))
    {
        throw new InvalidOperationException($"jobIdEnvVar is invalid:{jobIdEnvVar}");
    }

    // Get Job Secret from environment variable
    string jobSecretEnvVar = Environment.GetEnvironmentVariable(Microsoft.Hpc.Scheduler.Session.Internal.Constant.JobSecretEnvVar);
    return DataClient.Open(dataClientId, jobId, jobSecretEnvVar);

More about cluscfg can be found in the cluscfg documentation.

To read more about Azure Storage Connection Strings, see Configure Azure Storage connection strings.

To read more about HPC SOA Common Data, see HPC Pack SOA Tutorial IV – Common Data.