Azure Content Moderator was deprecated in February 2024 and will be retired by February 2027. It is being replaced by Azure AI Content Safety, which offers advanced AI features and enhanced performance.

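Azure AI Content Safety is a comprehensive solution designed to detect harmful user-generated and AI-generated content in applications and services. It suits many scenarios, including online marketplaces, gaming companies, social messaging platforms, enterprise media companies, and K-12 education solution providers. Here is an overview of its features and capabilities: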

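Text and image detection APIs: Scan text and images for sexual content, violence, hate, and self-harm, and report each at multiple severity levels.

Content Safety Studio: An online tool designed to handle potentially offensive, risky, or harmful content using state-of-the-art content moderation ML models. It provides templates and customized workflows so that users can build their own content moderation systems.

Language support: Azure AI Content Safety supports more than 100 languages and is specifically trained on English, German, Japanese, Spanish, French, Italian, Portuguese, and Chinese.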
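As an illustration of how per-category severity levels might be turned into a moderation decision, here is a minimal Python sketch. It assumes the analysis result is a JSON object with a `categoriesAnalysis` list of `category` and `severity` entries; verify that shape against the current Azure AI Content Safety API reference before relying on it. The function name `flagged_categories`, the limits in `DEFAULT_LIMITS`, and the sample payload are hypothetical and are not taken from this article.

```python
from typing import Dict, List

# Hypothetical per-category limits: a severity at or above the limit is flagged.
DEFAULT_LIMITS: Dict[str, int] = {
    "Hate": 4,
    "SelfHarm": 2,
    "Sexual": 4,
    "Violence": 4,
}


def flagged_categories(analysis: dict, limits: Dict[str, int] = DEFAULT_LIMITS) -> List[str]:
    """Return the categories whose severity meets or exceeds the configured limit."""
    flagged = []
    # Assumed response shape: {"categoriesAnalysis": [{"category": ..., "severity": ...}]}
    for item in analysis.get("categoriesAnalysis", []):
        limit = limits.get(item["category"])
        if limit is not None and item["severity"] >= limit:
            flagged.append(item["category"])
    return flagged


# Hand-written sample payload for illustration; not real service output.
sample = {
    "categoriesAnalysis": [
        {"category": "Hate", "severity": 0},
        {"category": "SelfHarm", "severity": 2},
        {"category": "Sexual", "severity": 0},
        {"category": "Violence", "severity": 4},
    ]
}

print(flagged_categories(sample))  # ['SelfHarm', 'Violence']
```

Keeping the limits in a plain dictionary makes it easy to apply a stricter policy to one category (here, self-harm) than to the others.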

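Azure AI Content Safety provides a robust and flexible solution for your content moderation needs. By switching from Content Moderator to Azure AI Content Safety, you can use the latest tools and technologies to ensure that your content is always moderated to your exact specifications.

Learn more about Azure AI Content Safety and explore how it can improve your content moderation strategy.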

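Azure Content Moderator is an AI service that lets you handle content that is potentially offensive, risky, or otherwise undesirable. It includes an AI-powered content moderation service that scans text, images, and videos and applies content flags automatically.

You may want to build content filtering software into your app to comply with regulations or to maintain the intended environment for your users.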

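This documentation contains the following types of articles:

Quickstarts are getting-started instructions that guide you through making requests to the service.

How-to guides contain instructions for using the service in more specific or customized ways.

Concepts provide in-depth explanations of the service's functionality and features.

For a more structured approach, follow the training module for Content Moderator.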

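Where it's used

The following are a few scenarios in which a software developer or team would use a content moderation service:

Online marketplaces that moderate product catalogs and other user-generated content.

Gaming companies that moderate user-generated game artifacts and chat rooms.

Social messaging platforms that moderate images, text, and videos added by their users.

Enterprise media companies that implement centralized moderation for their content.

K-12 education solution providers that filter out content that is inappropriate for students and educators.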

You cannot use Content Moderator to detect illegal child exploitation images. However, qualified organizations can use the PhotoDNA Cloud Service to screen for this type of content.

What it includes

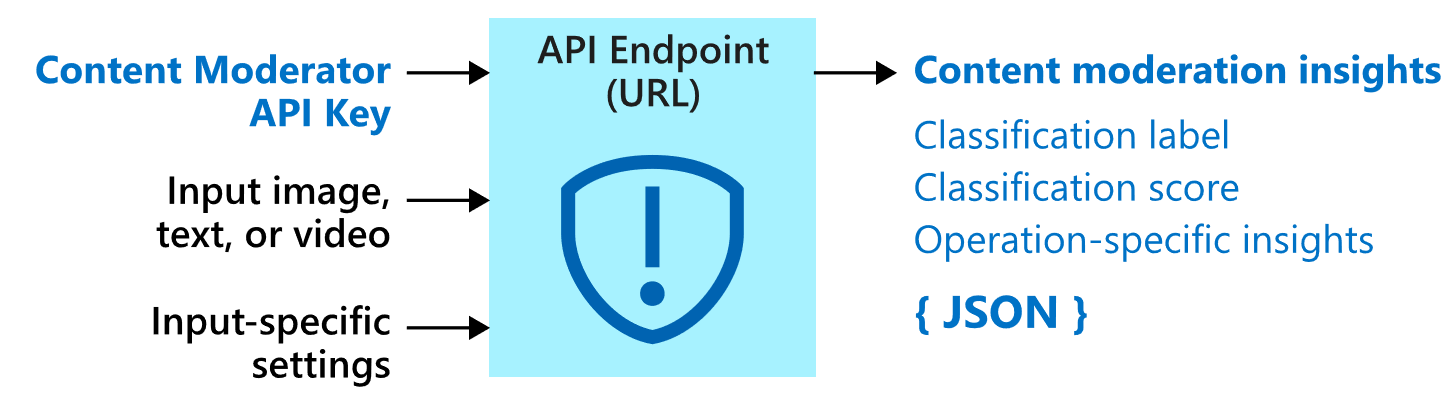

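The Content Moderator service consists of several web service APIs, available through both REST calls and a .NET SDK.

Moderation APIs

The Content Moderator service includes Moderation APIs, which check content for material that is potentially inappropriate or objectionable.

The following table describes the different types of moderation APIs.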


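| API group | Description |
| --- | --- |
| Text moderation | Scans text for offensive content, sexually explicit or suggestive content, profanity, and personal data. |
| Custom term lists | Scans text against a custom list of terms along with the built-in terms. Use custom lists to block or allow content according to your own content policies. |
| Image moderation | Scans images for adult or racy content, detects text in images with optical character recognition (OCR), and detects faces. |
| Custom image lists | Scans images against a custom list of images. Use custom image lists to filter out instances of commonly recurring content that you don't want to classify again. |
| Video moderation | Scans videos for adult or racy content and returns time markers for that content. |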
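To make the REST route to the text moderation API more concrete, here is a minimal Python sketch that builds a text-screening request without sending it. The path `/contentmoderator/moderate/v1.0/ProcessText/Screen`, the query parameters `classify`, `PII`, and `language`, and the `Ocp-Apim-Subscription-Key` header are stated from memory of the Content Moderator REST reference and should be checked against it; the helper name `build_screen_request`, the endpoint host, and the key placeholder are hypothetical.

```python
import urllib.parse
import urllib.request


def build_screen_request(
    endpoint: str, key: str, text: str, language: str = "eng"
) -> urllib.request.Request:
    """Build (but do not send) a POST request that screens plain text."""
    # Assumed query parameters; confirm them in the REST reference.
    query = urllib.parse.urlencode(
        {"classify": "True", "PII": "True", "language": language}
    )
    # Assumed path for the text screening operation.
    url = (
        f"{endpoint.rstrip('/')}"
        f"/contentmoderator/moderate/v1.0/ProcessText/Screen?{query}"
    )
    return urllib.request.Request(
        url,
        data=text.encode("utf-8"),
        headers={
            "Content-Type": "text/plain",
            "Ocp-Apim-Subscription-Key": key,
        },
        method="POST",
    )


# Placeholder endpoint and key; substitute the values for your own resource.
req = build_screen_request(
    "https://example.cognitiveservices.azure.com",
    "<your-key>",
    "Sample text to screen.",
)
print(req.get_method(), req.full_url)
```

Sending the request would be a matter of passing `req` to `urllib.request.urlopen` and decoding the JSON body. That step is left out here so the sketch runs without credentials.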

Data privacy and security

As with all Azure AI services, developers using the Content Moderator service should be aware of Microsoft's policies on customer data. For details, see the Azure AI services page on the Microsoft Trust Center.

Next steps