Migrate database resources to global Azure

Important

Since August 2018, we have not been accepting new customers or deploying any new features and services into the original Microsoft Cloud Germany locations.

Based on the evolution in customers' needs, we recently launched two new datacenter regions in Germany, offering customer data residency, full connectivity to Microsoft's global cloud network, and market-competitive pricing.

Additionally, on September 30, 2020, we announced that Microsoft Cloud Germany would be closing on October 29, 2021. More details are available here: https://www.microsoft.com/cloud-platform/germany-cloud-regions.

Take advantage of the breadth of functionality, enterprise-grade security, and comprehensive features available in our new German datacenter regions by migrating today.

This article contains information that can help you migrate Azure database resources from Azure Germany to global Azure.

SQL Database

To migrate smaller Azure SQL Database workloads without keeping the migrated database online, use the export function to create a BACPAC file. A BACPAC file is a compressed (zipped) file that contains the metadata and the data from the SQL Server database. After you create the BACPAC file, you can copy it to the target environment (for example, by using AzCopy). You can then use the import function to rebuild the database. Keep the following considerations in mind:

- For an export to be transactionally consistent, make sure that one of the following conditions is true:

  - No write activity occurs during the export.

  - You export from a transactionally consistent copy of your SQL database.

- If you export to Azure Blob Storage, the BACPAC file size is limited to 200 GB. For a larger BACPAC file, export to local storage.

- If the export operation from SQL Database takes longer than 20 hours, the operation might be canceled. For tips on improving performance, see the export and import articles listed under "For more information" below.
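The export considerations above can be turned into a small pre-flight check to run before you start an export. This is a minimal sketch. Get-BacpacExportPlan and its parameters are hypothetical and are not part of Azure PowerShell. The function only encodes the rules stated in this article (the 200 GB Blob Storage limit, the two transactional-consistency conditions, and the 20-hour cancellation risk) and never calls Azure.

```powershell
# Hypothetical pre-flight helper (not part of Azure PowerShell): it only encodes
# the BACPAC export rules listed above and never calls Azure.
function Get-BacpacExportPlan {
    param(
        [Parameter(Mandatory)] [double] $EstimatedSizeGB,
        [switch] $WritesStopped,       # no write activity during the export
        [switch] $ExportFromCopy,      # exporting from a transactionally consistent copy
        [double] $EstimatedHours = 0   # your own estimate of the export duration
    )

    $warnings = @()

    # Blob Storage exports are limited to 200 GB; larger BACPAC files go to local storage.
    $destination = if ($EstimatedSizeGB -le 200) { 'AzureBlobStorage' } else { 'LocalStorage' }

    # Transactional consistency requires either no writes or an export from a consistent copy.
    $consistent = $WritesStopped.IsPresent -or $ExportFromCopy.IsPresent
    if (-not $consistent) {
        $warnings += 'The export might not be transactionally consistent.'
    }

    # Exports that run longer than 20 hours might be canceled.
    if ($EstimatedHours -gt 20) {
        $warnings += 'The export might be canceled because it can exceed 20 hours.'
    }

    [pscustomobject]@{
        Destination               = $destination
        TransactionallyConsistent = $consistent
        Warnings                  = $warnings
    }
}

# Example: a 250 GB database with writes stopped has to be exported to local storage.
# Get-BacpacExportPlan -EstimatedSizeGB 250 -WritesStopped
```

The size and duration estimates are inputs you supply yourself. The helper does not measure the database.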

Note

The connection string changes after the export operation because the DNS name of the server changes during the export.

For more information:

- Learn how to export a database to a BACPAC file.

- Learn how to import a BACPAC file into a database.

- Review the Azure SQL Database documentation.

Note

We recommend that you use the Azure Az PowerShell module to interact with Azure. To get started, see Install Azure PowerShell. To learn how to migrate to the Az PowerShell module, see Migrate Azure PowerShell from AzureRM to Az.

Migrate SQL Database using active geo-replication

You can configure active geo-replication from Azure Germany to global Azure in two situations. The first is when a database is too large for a BACPAC file. The second is when you want to migrate from one cloud to the other while staying online with minimal downtime.

Important

Configuring active geo-replication to migrate databases to global Azure is supported only through Transact-SQL (T-SQL). Before you migrate, you must request that your subscription be enabled for migration to global Azure. To submit the request, create a support request as described in the Request access section of this article.

Note

The global Azure cloud regions Germany West Central and Germany North are the supported regions for active geo-replication with the Azure Germany cloud. If you want a different global Azure region as the final destination for your database(s), first complete the migration to global Azure. Then configure an additional geo-replication link from Germany West Central or Germany North to the required Azure cloud region.

For details about active geo-replication costs, see the Active geo-replication section in Azure SQL Database pricing.

Migrating databases with active geo-replication requires an Azure SQL logical server in global Azure. You can create the server by using the portal, Azure PowerShell, Azure CLI, and so on. However, configuring active geo-replication to migrate from Azure Germany to global Azure is supported only through Transact-SQL (T-SQL).

Important

When you migrate between clouds, the server name prefixes of the primary (Azure Germany) and secondary (global Azure) servers must be different. If the server names are the same, the ALTER DATABASE statement runs successfully, but the migration fails. For example, if the primary server name is myserver.database.cloudapi.de (prefix myserver), the secondary server name in global Azure cannot use the prefix myserver.

In the ALTER DATABASE statement, you specify the target server in global Azure by its fully qualified DNS name.

```sql
ALTER DATABASE [sourcedb] ADD SECONDARY ON SERVER [public-server.database.windows.net];
```

In this statement:

- sourcedb is the name of the database on an Azure SQL server in Azure Germany.
- public-server.database.windows.net is the name of the Azure SQL server in global Azure that the database is migrated to.
- The database.windows.net namespace is required. Replace public-server with the name of your logical SQL server in global Azure. That server must have a different name than the primary server in Azure Germany.
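The naming rules above are easy to get wrong when you type the statement by hand. The following is a minimal sketch of a statement builder that checks them before you run anything. New-CrossCloudSecondaryStatement is a hypothetical helper, not an Azure cmdlet. It assumes that the server name prefix is the first DNS label of the fully qualified name.

```powershell
# Hypothetical helper (not an Azure cmdlet): builds the cross-cloud ADD SECONDARY
# statement and enforces the naming rules described in this article.
function New-CrossCloudSecondaryStatement {
    param(
        [Parameter(Mandatory)] [string] $DatabaseName,       # e.g. sourcedb
        [Parameter(Mandatory)] [string] $PrimaryServerFqdn,  # e.g. myserver.database.cloudapi.de
        [Parameter(Mandatory)] [string] $TargetServerFqdn    # e.g. public-server.database.windows.net
    )

    # The target must be given as a global Azure FQDN in the database.windows.net namespace.
    if (-not $TargetServerFqdn.EndsWith('.database.windows.net', [System.StringComparison]::OrdinalIgnoreCase)) {
        throw "Target server must use the database.windows.net namespace: $TargetServerFqdn"
    }

    # The server name prefixes (first DNS label) must differ between the two clouds.
    $primaryPrefix = $PrimaryServerFqdn.Split('.')[0]
    $targetPrefix  = $TargetServerFqdn.Split('.')[0]
    if ($primaryPrefix -ieq $targetPrefix) {
        throw "Primary and secondary server name prefixes must differ: '$primaryPrefix'"
    }

    # Escape closing brackets so the names stay valid T-SQL delimited identifiers.
    $db     = $DatabaseName.Replace(']', ']]')
    $target = $TargetServerFqdn.Replace(']', ']]')

    # Run the returned statement against master on the Azure Germany server.
    "ALTER DATABASE [$db] ADD SECONDARY ON SERVER [$target];"
}
```

The function only returns text. You still execute the statement yourself, with your usual T-SQL client, against the master database on the Azure Germany server.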

Run the command against the master database on the Azure Germany server that hosts the local database you are migrating.

The T-SQL start-copy API authenticates the signed-in user on the public cloud server by looking for a user with the same SQL login/user name in that server's master database. Because this approach is cloud-agnostic, the T-SQL API is used to start cross-cloud copies. For permissions and more information on this topic, see Creating and using active geo-replication and ALTER DATABASE (Transact-SQL).

The only difference from geo-replication within a single cloud is the initial T-SQL command, which points to an Azure SQL logical server in global Azure. The rest of the process is identical. For detailed steps to set up active geo-replication, see Creating and using active geo-replication. In this migration, create the secondary database on the secondary logical server in global Azure.

Once the secondary database exists in global Azure (as an online copy of the Azure Germany database), you can initiate a failover of this database from Azure Germany to global Azure. Use the ALTER DATABASE T-SQL command to do this (see the table below).

After the failover, the secondary database becomes the primary database in global Azure. From then on, you can stop active geo-replication and remove the secondary database on the Azure Germany side at any time (see the table and the steps below).

After the failover, the secondary database in Azure Germany continues to incur costs until it is deleted.

The ALTER DATABASE command is the only way to set up active geo-replication to migrate an Azure Germany database to global Azure. The Azure portal, Azure Resource Manager, PowerShell, and CLI cannot be used to configure active geo-replication for this migration.

To migrate a database from Azure Germany to global Azure:

1. Choose the user database in Azure Germany, for example, azuregermanydb.

2. Create a logical server in global Azure (the public cloud), for example, globalazureserver. Its fully qualified domain name (FQDN) is globalazureserver.database.windows.net.

3. Start active geo-replication from Azure Germany to global Azure by executing the following T-SQL command on the server in Azure Germany. The command uses the fully qualified DNS name of the public server, globalazureserver.database.windows.net. This indicates that the target server is in global Azure and not in Azure Germany.

   ```sql
   ALTER DATABASE [azuregermanydb] ADD SECONDARY ON SERVER [globalazureserver.database.windows.net];
   ```

4. When the replication is ready for you to move the read/write workload to the global Azure server, initiate a planned failover to global Azure. Execute the following T-SQL command on the global Azure server.

   ```sql
   ALTER DATABASE [azuregermanydb] FAILOVER;
   ```

5. You can terminate the active geo-replication link before or after the failover.

   If you execute the following T-SQL command after the planned failover, it removes the geo-replication link and leaves the database in global Azure as the read/write copy. Run it on the logical server of the current geo-primary database (that is, on the global Azure server). This completes the migration process.

   ```sql
   ALTER DATABASE [azuregermanydb] REMOVE SECONDARY ON SERVER [azuregermanyserver];
   ```

   If you execute the following T-SQL command before the planned failover, it also stops the migration process. In this case, the database in Azure Germany remains the read/write copy. This command must also run on the logical server of the current geo-primary database, which in this case is the Azure Germany server.

   ```sql
   ALTER DATABASE [azuregermanydb] REMOVE SECONDARY ON SERVER [globalazureserver];
   ```
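The procedure above is easy to get wrong in one respect: each statement runs on a different server. The following sketch records that as data so you can review the sequence before running anything. Get-GeoReplicationMigrationRunbook is hypothetical and only builds strings. It assumes that the server name passed for global Azure is the first label of its FQDN.

```powershell
# Hypothetical helper: lists the T-SQL statements from the migration procedure together
# with the server each one must run on. It only builds strings; nothing is executed.
function Get-GeoReplicationMigrationRunbook {
    param(
        [Parameter(Mandatory)] [string] $DatabaseName,      # e.g. azuregermanydb
        [Parameter(Mandatory)] [string] $GermanyServer,     # e.g. azuregermanyserver
        [Parameter(Mandatory)] [string] $GlobalServerFqdn,  # e.g. globalazureserver.database.windows.net
        [switch] $AbortBeforeFailover                       # stop the migration, keep Azure Germany as primary
    )

    $globalServer = $GlobalServerFqdn.Split('.')[0]

    # Replication always starts on master of the Azure Germany server, using the global FQDN.
    $steps = @(
        [pscustomobject]@{ Phase = 'StartReplication'; RunOn = $GermanyServer; Sql = "ALTER DATABASE [$DatabaseName] ADD SECONDARY ON SERVER [$GlobalServerFqdn];" }
    )

    if ($AbortBeforeFailover) {
        # Removing the link before failover: run on the current geo-primary (Azure Germany).
        $steps += [pscustomobject]@{ Phase = 'RemoveLink'; RunOn = $GermanyServer; Sql = "ALTER DATABASE [$DatabaseName] REMOVE SECONDARY ON SERVER [$globalServer];" }
    }
    else {
        # Planned failover and link removal both run on the global Azure server.
        $steps += [pscustomobject]@{ Phase = 'PlannedFailover'; RunOn = $globalServer; Sql = "ALTER DATABASE [$DatabaseName] FAILOVER;" }
        $steps += [pscustomobject]@{ Phase = 'RemoveLink'; RunOn = $globalServer; Sql = "ALTER DATABASE [$DatabaseName] REMOVE SECONDARY ON SERVER [$GermanyServer];" }
    }

    $steps
}

# Example: review the runbook before executing anything.
# Get-GeoReplicationMigrationRunbook -DatabaseName azuregermanydb -GermanyServer azuregermanyserver -GlobalServerFqdn globalazureserver.database.windows.net | Format-Table
```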

You can also follow these steps to migrate Azure SQL databases from Azure Germany to global Azure by using active geo-replication.

For reference, the following tables list the T-SQL commands for managing failover. These commands are supported for cross-cloud active geo-replication between Azure Germany and global Azure:

| Command | Description |
|---|---|
| ALTER DATABASE | Use the ADD SECONDARY ON SERVER argument to create a secondary database for an existing database and start data replication. |
| ALTER DATABASE | Use FAILOVER or FORCE_FAILOVER_ALLOW_DATA_LOSS to switch a secondary database to primary and initiate failover. |
| ALTER DATABASE | Use REMOVE SECONDARY ON SERVER to terminate data replication between a SQL database and the specified secondary database. |

System views and stored procedures for monitoring active geo-replication

| View or procedure | Description |
|---|---|
| sys.geo_replication_links | Returns information about all existing replication links for each database on the Azure SQL Database server. |
| sys.dm_geo_replication_link_status | Gets the last replication time, the last replication lag, and other information about the replication link for a given SQL database. |
| sys.dm_operation_status | Shows the status of all database operations, including the status of the replication links. |
| sp_wait_for_database_copy_sync | Causes the application to wait until all committed transactions are replicated and acknowledged by the active secondary database. |
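Before the planned failover, you can read sys.dm_geo_replication_link_status to judge whether the secondary has caught up. The following is a minimal sketch under several assumptions that you should verify against the current documentation:

- The view exposes the replication_state_desc, replication_lag_sec, partner_server, partner_database, and last_replication columns.
- A caught-up link reports the CATCH_UP state.
- The 5-second lag threshold is an arbitrary example, not a documented limit.

Test-ReadyForPlannedFailover is a hypothetical helper.

```powershell
# Assumed query: run it in the geo-primary user database to read the link state.
$linkStatusQuery = @'
SELECT partner_server, partner_database, replication_state_desc,
       replication_lag_sec, last_replication
FROM sys.dm_geo_replication_link_status;
'@

# Hypothetical helper: treats a link as ready for a planned failover when it is in
# the CATCH_UP state and the reported lag is at or below a threshold you choose.
function Test-ReadyForPlannedFailover {
    param(
        [Parameter(Mandatory)] [object] $LinkStatus,  # one row returned by the query above
        [int] $MaxLagSeconds = 5                      # arbitrary example threshold
    )

    $caughtUp = $LinkStatus.replication_state_desc -eq 'CATCH_UP'
    # SQL NULL can arrive as $null or as [System.DBNull]::Value depending on the client.
    $lagKnown = ($null -ne $LinkStatus.replication_lag_sec) -and ($LinkStatus.replication_lag_sec -isnot [System.DBNull])
    return ($caughtUp -and $lagKnown -and ($LinkStatus.replication_lag_sec -le $MaxLagSeconds))
}
```

How you execute $linkStatusQuery is up to you (for example, Invoke-Sqlcmd from the SqlServer module). That step is not shown here. The check is only a heuristic: a planned FAILOVER itself synchronizes the secondary before switching roles.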

Migrate SQL Database long-term retention backups

Migrating a database with geo-replication or a BACPAC file does not copy over any long-term retention backups that the database might have in Azure Germany. To migrate existing long-term retention backups to the target global Azure region, you can use the COPY long-term retention backup procedure.

Note

The LTR backup copy methods described here can only copy LTR backups from Azure Germany to global Azure. Copying point-in-time restore (PITR) backups by using these methods is not supported.

Prerequisites

- The target database that you copy the LTR backups to must exist in global Azure before you start copying the backups. We recommend that you first migrate the source database by using active geo-replication and then start the LTR backup copy. This ensures that the database backups are copied to the correct target database. This step isn't required if you're copying LTR backups of a deleted database. When you copy LTR backups of a deleted database, a dummy DatabaseID is created in the target region.

- Install the Az PowerShell module.

- Before you begin, make sure that the required Azure RBAC roles are granted at either the subscription or the resource group scope. Note: To access LTR backups that belong to a deleted server, the permission must be granted at the subscription scope of that server.

Limitations

- Failover groups are not supported. This means that customers migrating Azure Germany database(s) need to manage connection strings themselves during failover.

- The Azure portal, Azure Resource Manager APIs, PowerShell, and CLI are not supported. This means that every Azure Germany migration must manage active geo-replication setup and failover through T-SQL.

- Customers cannot create multiple geo-secondaries in global Azure for databases in Azure Germany.

- Creation of a geo-secondary must be initiated from the Azure Germany region.

- Customers can migrate databases out of Azure Germany only to global Azure. No other cross-cloud migration is currently supported.

- Azure AD users in Azure Germany user databases are migrated, but they are not available in the new Azure AD tenant where the migrated database resides. To enable these users, manually drop them and recreate them from the current Azure AD users in that new tenant.

Copy long-term retention backups using PowerShell

A new PowerShell cmdlet, Copy-AzSqlDatabaseLongTermRetentionBackup, has been introduced. You can use it to copy long-term retention backups from Azure Germany to global Azure regions.

- Copy an LTR backup by using the backup name: the following example shows how to copy an LTR backup from Azure Germany to a global Azure region by using the backup name.

```powershell
# Source database and target database info
$location = "<location>"
$sourceRGName = "<source resourcegroup name>"
$sourceServerName = "<source server name>"
$sourceDatabaseName = "<source database name>"
$backupName = "<backup name>"
$targetDatabaseName = "<target database name>"
$targetSubscriptionId = "<target subscriptionID>"
$targetRGName = "<target resource group name>"
$targetServerFQDN = "<targetservername.database.windows.net>"

# Copy the named LTR backup to the target database in global Azure
# (the trailing backtick continues the command on the next line)
Copy-AzSqlDatabaseLongTermRetentionBackup `
    -Location $location `
    -ResourceGroupName $sourceRGName `
    -ServerName $sourceServerName `
    -DatabaseName $sourceDatabaseName `
    -BackupName $backupName `
    -TargetDatabaseName $targetDatabaseName `
    -TargetSubscriptionId $targetSubscriptionId `
    -TargetResourceGroupName $targetRGName `
    -TargetServerFullyQualifiedDomainName $targetServerFQDN
```

- Copy an LTR backup by using the backup resource ID: the following example shows how to copy LTR backups from Azure Germany to a global Azure region by using a backup resource ID. You can also use this example to copy backups of a deleted database.

```powershell
$location = "<location>"

# List LTR backups for all databases (you can choose All, Live, or Deleted)
$ltrBackups = Get-AzSqlDatabaseLongTermRetentionBackup -Location $location -DatabaseState All

# Select the LTR backup you want to copy
$ltrBackup = $ltrBackups[0]
$resourceID = $ltrBackup.ResourceId

# Target database info
$targetDatabaseName = "<target database name>"
$targetSubscriptionId = "<target subscriptionID>"
$targetRGName = "<target resource group name>"
$targetServerFQDN = "<targetservername.database.windows.net>"

# Copy the selected LTR backup by its resource ID
Copy-AzSqlDatabaseLongTermRetentionBackup `
    -ResourceId $resourceID `
    -TargetDatabaseName $targetDatabaseName `
    -TargetSubscriptionId $targetSubscriptionId `
    -TargetResourceGroupName $targetRGName `
    -TargetServerFullyQualifiedDomainName $targetServerFQDN
```
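The resource ID example simply takes the first backup in the list ($ltrBackups[0]). If you want the newest backup of one specific database instead, a filter like the following may help. This is a minimal sketch. Select-LatestLtrBackup is a hypothetical helper, and it assumes that the objects returned by Get-AzSqlDatabaseLongTermRetentionBackup expose the DatabaseName, BackupTime, and ResourceId properties.

```powershell
# Hypothetical helper: from the objects returned by Get-AzSqlDatabaseLongTermRetentionBackup,
# pick the newest backup of one database (uses the DatabaseName and BackupTime properties).
function Select-LatestLtrBackup {
    param(
        [Parameter(Mandatory)] [object[]] $Backups,
        [Parameter(Mandatory)] [string] $DatabaseName
    )

    $Backups |
        Where-Object { $_.DatabaseName -eq $DatabaseName } |
        Sort-Object -Property BackupTime -Descending |
        Select-Object -First 1
}

# Example, replacing $ltrBackups[0] in the script above:
# $ltrBackup  = Select-LatestLtrBackup -Backups $ltrBackups -DatabaseName '<source database name>'
# $resourceID = $ltrBackup.ResourceId
```

If no backup matches the database name, the helper returns nothing. Check for $null before you call Copy-AzSqlDatabaseLongTermRetentionBackup.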

Limitations

- By design, point-in-time restore (PITR) backups are taken only on the primary database. When you migrate databases from Azure Germany by using Geo-DR, PITR backups start on the new primary after failover. However, the existing PITR backups (on the previous primary in Azure Germany) are not migrated. If you need PITR backups to support point-in-time restore scenarios, restore the database from the PITR backups in Azure Germany. Then migrate the restored database to global Azure.

- Long-term retention policies are not migrated with the database. If your database in Azure Germany has a long-term retention (LTR) policy, you must manually copy and recreate that policy for the new database after the migration.
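Because the LTR policy has to be recreated by hand, one way to avoid typing errors is to read the policy in Azure Germany and reapply it in global Azure. You read it with Get-AzSqlDatabaseBackupLongTermRetentionPolicy and reapply it with Set-AzSqlDatabaseBackupLongTermRetentionPolicy. The following is a minimal sketch under these assumptions:

- The policy object exposes WeeklyRetention, MonthlyRetention, YearlyRetention, and WeekOfYear.
- ConvertTo-LtrPolicySplat is a hypothetical helper.
- The environment name AzureGermanCloud in the usage comments should be checked with Get-AzEnvironment.

```powershell
# Hypothetical helper: turns an LTR policy object (as returned by
# Get-AzSqlDatabaseBackupLongTermRetentionPolicy in Azure Germany) into a
# parameter hashtable for Set-AzSqlDatabaseBackupLongTermRetentionPolicy in global Azure.
function ConvertTo-LtrPolicySplat {
    param(
        [Parameter(Mandatory)] [object] $SourcePolicy,
        [Parameter(Mandatory)] [string] $TargetResourceGroupName,
        [Parameter(Mandatory)] [string] $TargetServerName,
        [Parameter(Mandatory)] [string] $TargetDatabaseName
    )

    @{
        ResourceGroupName = $TargetResourceGroupName
        ServerName        = $TargetServerName
        DatabaseName      = $TargetDatabaseName
        WeeklyRetention   = $SourcePolicy.WeeklyRetention   # ISO 8601 durations, e.g. 'P12W'
        MonthlyRetention  = $SourcePolicy.MonthlyRetention
        YearlyRetention   = $SourcePolicy.YearlyRetention
        WeekOfYear        = $SourcePolicy.WeekOfYear
    }
}

# Example usage (assumed flow with two sign-ins):
# Connect-AzAccount -Environment AzureGermanCloud
# $policy = Get-AzSqlDatabaseBackupLongTermRetentionPolicy -ResourceGroupName '<rg>' -ServerName '<germany server>' -DatabaseName '<db>'
# Connect-AzAccount   # global Azure
# $splat = ConvertTo-LtrPolicySplat -SourcePolicy $policy -TargetResourceGroupName '<rg>' -TargetServerName '<global server>' -TargetDatabaseName '<db>'
# Set-AzSqlDatabaseBackupLongTermRetentionPolicy @splat
```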

Request access

To migrate a database from Azure Germany to global Azure by using geo-replication, your subscription in Azure Germany must be enabled for the cross-cloud migration.

To enable your Azure Germany subscription, use the following link to create a migration support request:

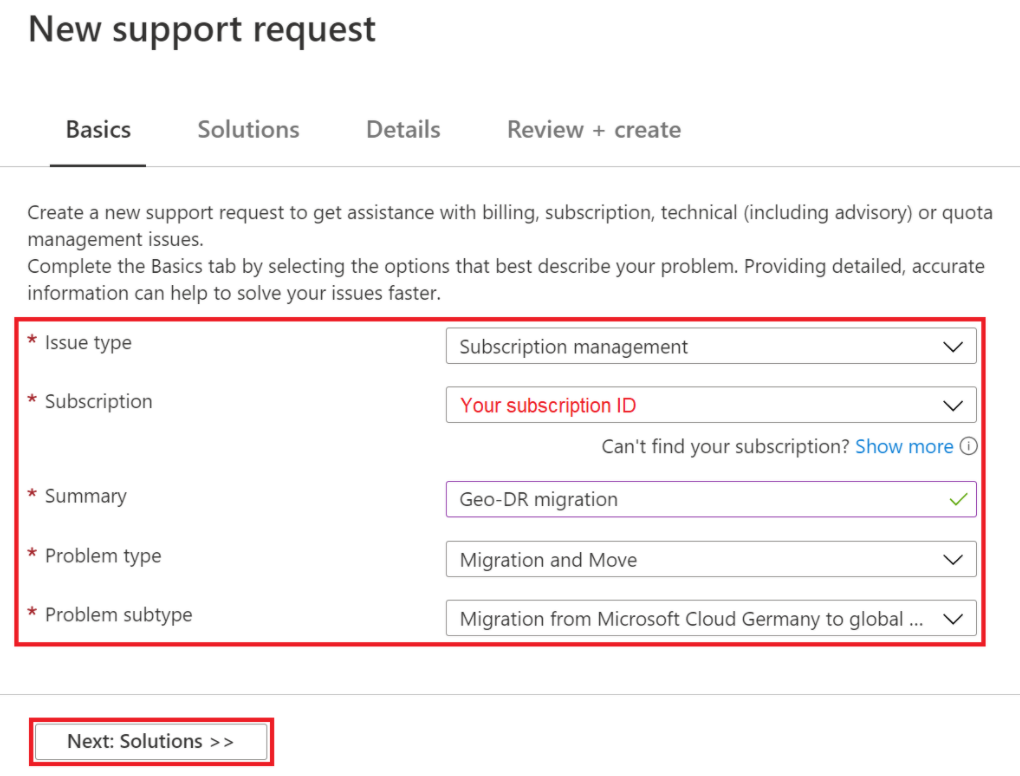

Browse to the following migration support request.

On the Basics tab, enter Geo-DR migration as the Summary, and then select Next: Solutions.

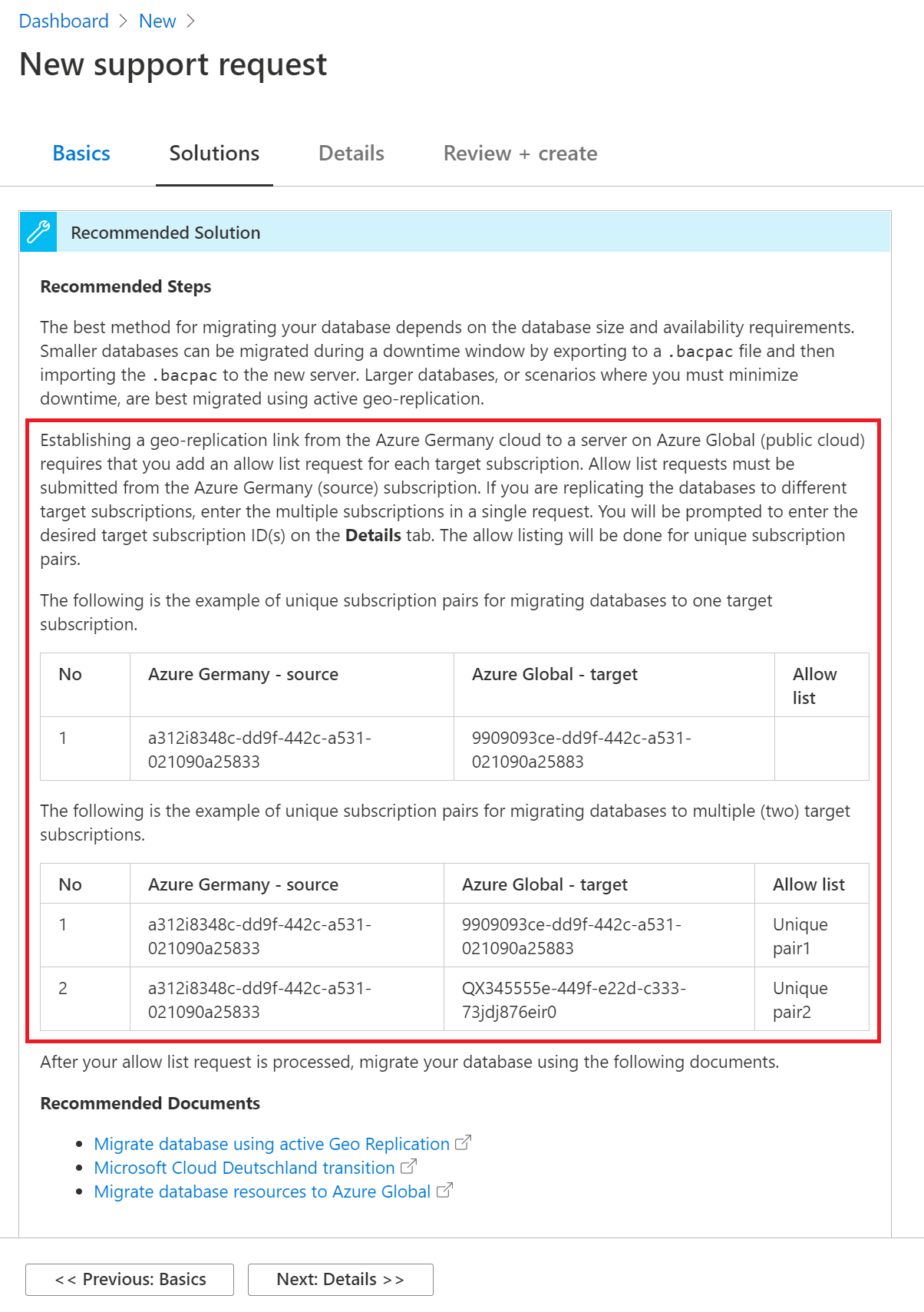

Review the recommended steps, and then select Next: Details.

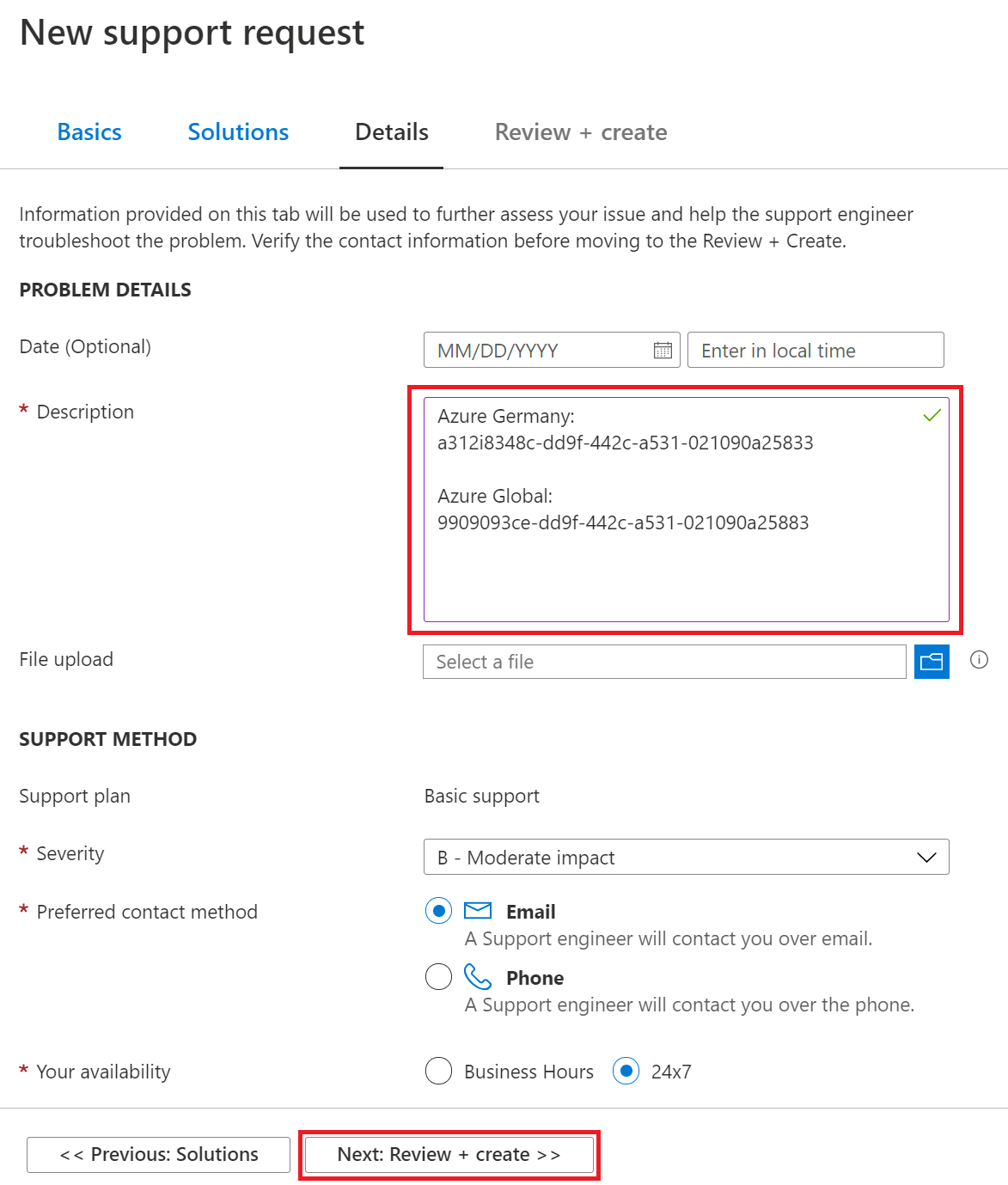

On the details page, provide the following:

- In the Description box, enter the ID of the global Azure subscription that you want to migrate to. To migrate databases to more than one subscription, add a list of the global Azure subscription IDs that you want to migrate databases to.

- Provide contact information: name, company name, email or phone number.

- Complete the form, and then select Next: Review + create.

Review the support request, and then select Create.

You'll be contacted once the request is processed.

Azure Cosmos DB

You can use the Azure Cosmos DB Data Migration Tool to migrate data to Azure Cosmos DB. The Azure Cosmos DB Data Migration Tool is an open-source solution that imports data into Azure Cosmos DB from a variety of sources, including JSON files, MongoDB, SQL Server, CSV files, Azure Table storage, Amazon DynamoDB, HBase, and Azure Cosmos containers.

The Azure Cosmos DB Data Migration Tool is available as a graphical interface tool or as a command-line tool. The source code is available in the Azure Cosmos DB Data Migration Tool GitHub repository. A compiled version of the tool is available in the Microsoft Download Center.

To migrate Azure Cosmos DB resources, we recommend that you complete the following steps:

- Review the application's uptime requirements and account configurations to determine the best action plan.

- Clone the account configurations from Azure Germany to the new region by running the data migration tool.

- If you can use a maintenance window, copy data from the source to the destination by running the data migration tool.

- If a maintenance window isn't an option, copy data from the source to the destination by running the tool, and then complete these steps:

  - Use a configuration-driven approach to change where the application reads and writes.

  - Complete a first-time sync.

  - Set up an incremental sync and catch up with the change feed.

  - Point reads to the new account and validate the application.

  - Stop writes to the old account, validate that the change feed has caught up, and then point writes to the new account.

  - Stop the tool and delete the old account.

- Run the tool to validate that the data is consistent across the old and new accounts.
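As a lightweight complement to the final validation step, you can compare the document IDs present in the old and new accounts. This sketch checks presence only, not document contents. It assumes you have already exported the ID lists from each account by some means, which is not shown here. Compare-CosmosDocumentIds is a hypothetical helper.

```powershell
# Hypothetical helper: compares document ids exported from the old (Azure Germany) and
# new (global Azure) accounts. It checks presence only, not document contents.
function Compare-CosmosDocumentIds {
    param(
        [Parameter(Mandatory)] [AllowEmptyCollection()] [string[]] $SourceIds,
        [Parameter(Mandatory)] [AllowEmptyCollection()] [string[]] $TargetIds
    )

    # Hash sets give constant-time lookups; the default string comparison is ordinal (case-sensitive).
    $source = [System.Collections.Generic.HashSet[string]]::new($SourceIds)
    $target = [System.Collections.Generic.HashSet[string]]::new($TargetIds)

    [pscustomobject]@{
        MissingInTarget = @($SourceIds | Where-Object { -not $target.Contains($_) })
        ExtraInTarget   = @($TargetIds | Where-Object { -not $source.Contains($_) })
        IsConsistent    = $source.SetEquals($target)
    }
}
```

A consistent result here does not prove that the documents are identical. It only narrows down where the data migration tool's own validation should look.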

For more information:

- To learn how to use the Data Migration Tool, see Tutorial: Use Data migration tool to migrate your data to Azure Cosmos DB.

- To learn about Cosmos DB, see Welcome to Azure Cosmos DB.

Azure Cache for Redis

You have a few options if you want to migrate an Azure Cache for Redis instance from Azure Germany to global Azure. The option you choose depends on your requirements.

Option 1: Accept data loss, create a new instance

This approach makes the most sense when both of the following conditions are true:

- You use Azure Cache for Redis as a transient data cache.

- Your application repopulates the cache data automatically in the new region.

To migrate with data loss and create a new instance:

- Create a new Azure Cache for Redis instance in the new target region.

- Update your application to use the new instance in the new region.

- Delete the old Azure Cache for Redis instance in the source region.

Option 2: Copy data from the source instance to the target instance

A member of the Azure Cache for Redis team wrote an open-source tool that copies data from one Azure Cache for Redis instance to another without requiring import or export functionality. See step 4 in the following steps for information about the tool.

To copy data from the source instance to the target instance:

1. Create a VM in the source region. If your dataset in Azure Cache for Redis is large, select a relatively powerful VM size to minimize the copy time.

2. Create a new Azure Cache for Redis instance in the target region.

3. Flush data from the target instance. Make sure you don't flush the source instance. Flushing is required because the copy tool doesn't overwrite existing keys in the target location.

4. Use the following tool to automatically copy data from the source Azure Cache for Redis instance to the target Azure Cache for Redis instance: Tool source and tool download.

Note

This process can take a long time, depending on the size of your dataset.

Option 3: Export from the source instance, import to the target instance

This approach uses features that are available only in the Premium tier.

To export from the source instance and import to the target instance:

Create a new Premium tier Azure Cache for Redis instance in the target region. Use the same size as the source Azure Cache for Redis instance.

Export data from the source cache, or use the Export-AzRedisCache PowerShell cmdlet.

Note

The Azure Storage account used for the export must be in the same region as the cache instance.

Copy the exported blobs to a storage account in the target region (for example, by using AzCopy).

Import data into the target cache, or use the Import-AzRedisCache PowerShell cmdlet.

Reconfigure your application to use the target Azure Cache for Redis instance.

Option 4: Write data to two Azure Cache for Redis instances, read from one instance

For this approach, you have to modify your application so that it writes data to more than one cache instance while reading from only one of them. This approach makes sense if the data stored in Azure Cache for Redis meets the following criteria:

- The data is refreshed on a regular basis.

- All data is written to the target Azure Cache for Redis instance.

- You have enough time for all data to be refreshed.
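The application change that option 4 requires can be sketched as a small wrapper. Every write goes to both caches, and reads come from exactly one of them. In this minimal sketch, DualWriteCache is a hypothetical class, and two plain hashtables stand in for the Azure Cache for Redis clients your application actually uses. A real implementation would call your Redis client library instead.

```powershell
# Hypothetical sketch of option 4: every write goes to both caches, reads come
# from one. Plain hashtables stand in for the two Azure Cache for Redis clients.
class DualWriteCache {
    [hashtable] $Source   # existing cache in Azure Germany
    [hashtable] $Target   # new cache in global Azure
    [bool] $ReadFromTarget = $false

    DualWriteCache([hashtable] $source, [hashtable] $target) {
        $this.Source = $source
        $this.Target = $target
    }

    [void] Write([string] $key, [object] $value) {
        # Write to both instances so the target fills up while the source keeps serving reads.
        $this.Source[$key] = $value
        $this.Target[$key] = $value
    }

    [object] Read([string] $key) {
        # Read from exactly one instance; switch ReadFromTarget once all data has been refreshed.
        if ($this.ReadFromTarget) { return $this.Target[$key] }
        return $this.Source[$key]
    }
}
```

Usage: keep ReadFromTarget set to $false until the refresh window has passed and all data exists in the target. Then switch reads to the target and, finally, retire the source instance.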

For more information:

- Review the overview of Azure Cache for Redis.

PostgreSQL and MySQL

For more information, see the articles in the Back up and migrate data section for PostgreSQL and MySQL.

Next steps

Learn about tools, techniques, and recommendations for migrating resources in the following service categories: