Friday Mail Sack: Saturday Edition

Ned here. As you may have noticed, it is not Friday. You may also have noticed that this post is awesome and packed with many weeks of delayed content goodness. This notice may extend to the fact that I have no life. You notice a lot, don’t you smarty?

I cannot imagine someone looking less like Sherlock Holmes than this goober.

This week I talk about AD scalability, DC usage, DFSR, DFSN, USMT, old user accounts, and angry networking. If you don’t see your question here it may just be in the backlog; don’t worry, I’ll get to it. Just kidding, your question stunk. And there is no Santa Claus.

The game is afoot!

- Maximum DCs in a domain

- AD Bridgehead improvements

- DFSR membership disable

- DHCP server authorization permissions

- Supportability of moving DCs to other OUs

- USMT /MD error 71

- DNS client and server best practices for AD

- msDS-LastSuccessfulInteractiveLogonTime versus LastLogonTimeStamp

- Other

Question

I was reviewing Active Directory Maximum Limits – Scalability and noticed that it says the maximum number of DC’s a domain can contain is 1200 when using FRS for SYSVOL. Did that number change in Windows Server 2008 when DFSR could be used for SYSVOL?

Answer

A quick clarification: it’s the recommended maximum. The number 1200 is not some buffer in FRS that prevents further DC’s from being added; it was the largest number you could add before we decided that recovering a damaged SYSVOL became impractically hard. I’d also say from personal experience that this is a grossly inflated number – you try recovering a damaged FRS from a “mere” 200 DC’s and see what I mean.

Bottom line, this number is pulled from thin air. DFSR does not have a maximum number for SYSVOL either. Code review shows no reasonable limits and customer experiences go much higher. Repairing a damaged DFSR SYSVOL would go faster and be easier; not to mention it’s much less likely to be damaged in the first place due to improved reliability.

Question

Are there any improvements in AD bridgehead servers in Windows Server 2008 R2?

Answer

Yes, and we recently published them in a nicely hidden spot. Check:

Bridgehead Server Selection https://technet.microsoft.com/en-us/library/bridgehead_server_selection(WS.10).aspx

For the first time true probabilistic load-balancing was introduced for RWDC’s (the RODC’s got some of this code in Win2008). Connections and load are kept evenly distributed across all bridgeheads. This is a big deal in larger environments – we believe there is no longer any need to mess with ADLB.EXE.

The load-balancing doesn’t factor in hardware, just DC nodes. There is no forest functional level requirement, so you can coexist with 2008 and 2003, but those older OS’ don’t know about this new system. The more 2008 R2 DCs you use, the better the system will be in terms of load balancing. The KCC can still leave unbalanced connections if DCs go offline. The KCC doesn’t rebalance automatically when they come back up, as the unbalanced configuration that relies on DCs that haven’t gone offline is likely better than the balanced one that relied on machines that did go offline. You can manually force the load-balancing algorithm by deleting the inbound intersite connections for a DC or site and running the repadmin /kcc command (or deleting the connections then simply waiting for the KCC to run automatically within 15 minutes). We recommend upgrading your main hub site DC’s to Win2008 R2 first so that they can start evenly load-balancing with their out-of-site branch partners more efficiently. And heck, hubs are easier DC’s to upgrade, right? They’re just down the hall…
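To picture what "evenly distributed" means here, a toy sketch follows. It is not the KCC's algorithm (the post does not describe its internals); it just assumes each partner gets a bridgehead picked at random from the ones currently holding the fewest connections. The DC names and the `assign_partners` helper are made up for illustration.

```python
import random
from collections import Counter

def assign_partners(partners, bridgeheads, rng):
    """Toy model only: give each partner a bridgehead picked at random
    from those currently holding the fewest connections."""
    load = Counter({bh: 0 for bh in bridgeheads})
    assignment = {}
    for partner in partners:
        lowest = min(load.values())
        candidates = [bh for bh in bridgeheads if load[bh] == lowest]
        chosen = rng.choice(candidates)
        assignment[partner] = chosen
        load[chosen] += 1
    return assignment, load

rng = random.Random(2010)  # fixed seed so the run is repeatable
branches = [f"BRANCH{i:02d}" for i in range(10)]  # hypothetical branch DCs
hubs = ["HUB-DC1", "HUB-DC2", "HUB-DC3"]          # hypothetical hub bridgeheads
assignment, load = assign_partners(branches, hubs, rng)
print(dict(load))
```

In this model no bridgehead ever carries more than one connection more than any other, and hardware never enters the picture, which matches the "just DC nodes" point above.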

Question

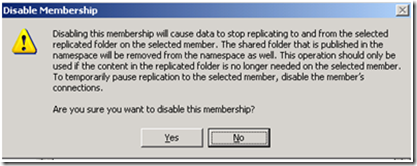

When I choose to disable a DFSR membership though the management snap-in (DFSMGMT.MSC), I get a very scary message:

The part that scares me is “The shared folder that is published in the namespace will be removed from the namespace as well”. I want to disable the membership and stop using DFSR on this folder, but I don’t want the DFS Namespace folder target to stop being shared. Possible?

Answer

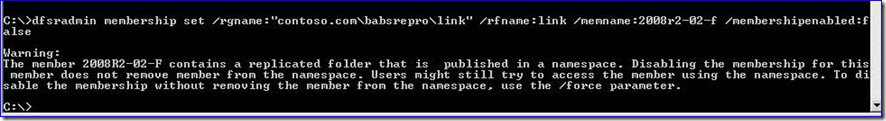

Yes, but you must use DFSRADMIN.EXE instead. For example:

dfsradmin membership set /RFName:Test-RG-RF /RGName:Test-RG /MemName:opa\win2008x64DC2 /MembershipEnabled:false

When this runs you will be warned that it will NOT remove the sharing of the folder through DFS:

If you add /FORCE to your dfsradmin command it will remove the DFSR membership but leave DFSN untouched.

Critical note: It’s seriously dangerous to do this as you are letting users continue to access data that is not being replicated anymore; if they are allowed to modify that data, and if you later re-enable this membership, all of their changes are going to be wiped out. You will be triggering a non-authoritative sync, after all. This is only reasonably safe if the replicated folder was using Read Only DFSR or if the share is marked only for READ permissions. This is why the GUI tries to talk you out of it and also remove the DFSN link to the folder.

Question

Why can’t my child domain admin authorize a DHCP server that’s a member of his same child domain?

Answer

I’ll give you a hint: where does the CN=DhcpRoot object live in the forest?

Question

I have heard that moving a DC to a child OU under the default Domain Controllers OU is not supported by Microsoft. Is it possible, and are there any supporting arguments for or against doing this?

Answer

It’s supported but not recommended - bad things happen when developers assume an object will always be in the same spot. Some examples:

978994 Error message when you try to migrate the SYSVOL share from the FRS to the DFSR service in a Windows Server 2008 domain: "The parameter is incorrect" https://support.microsoft.com/default.aspx?scid=kb;EN-US;978994

833436 "The current DC is not in the domain controller's OU" error message when you run the Dcdiag tool https://support.microsoft.com/default.aspx?scid=kb;EN-US;833436

And so on. We periodically find bugs and fix them without much argument. More often it’s third parties that really get bent out of shape. Too many of their developers test using a domain built with DCPROMO using “next next next next done – now don’t touch it!”. They may not be as accommodating about a fix, so if you design this you are likely to need to un-design it someday.

The real question you have to ask yourself is: why do you feel the need to move the DC’s? Because you must, or because you can? I’ve never had a customer successfully convince me of the former case. You can try in the comments if you like, I welcome all comers. Don't say "because I need different policies applied to different computers" because you can use security groups (global or domain local) to do that, or WMI filters.

Question

I’m using USMT 4.0 with the /MD switch to migrate to a new domain. But if the mapped users do not exist in the new domain, the migration fails with “Fatal error 71” and these log entries:

2010-06-29 17:59:35, Info [0x000000] User CONTOSO\NED maps to FABRIKAM\NED

2010-06-29 17:59:35, Info [0x000000] Adding domain account CONTOSO\NED

2010-06-29 17:59:35, Info [0x0803b2] Adding user CONTOSO\NED

2010-06-29 17:59:35, Info [0x000000] Failed.[gle=0x00000091]

2010-06-29 17:59:35, Info [0x000000] A Windows Win32 API error occurred

Windows error 1332 description: No mapping between account names and security IDs was done.[gle=0x00000091]

2010-06-29 17:59:35, Info [0x000000] Windows Error 1332 description: No mapping between account names and security IDs was done.

2010-06-29 17:59:35, Info [0x000000] Entering MigCloseCurrentStore method

Is there a way to ignore this error? Using /C does not appear to work, and the <ErrorControl> element in config.xml is confusing.

Answer

This is expected behavior and by design. As workarounds:

1. Don’t migrate accounts that you have no intention of creating a new user for in the new domain (use /UEL or /UE).

or

2. If you are going to use them, pre-create the accounts. It’s not like the migrated profiles will be useful if they have no accounts.

or

3. Don’t hold it that way. Wait, that was Steve Jobs’ answer.

Your rubber band phone does not go with my hipster glasses. And I look better in a turtleneck than you.

The reason /C does not work is the error is fatal, and /C is only for non-fatal errors:

https://technet.microsoft.com/en-us/library/dd823291(WS.10).aspx

The reason <ErrorControl> is confusing here is because it’s not relevant. Take a closer look at the syntax (https://technet.microsoft.com/en-us/library/dd560760(WS.10).aspx#BKMK_ErrorControl). It’s for fine-tuned error handling of file and registry errors, not issues with the built-in command-line.
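If you go the pre-create route, it helps to know which target accounts the migration expects. Here is a minimal sketch that pulls the "maps to" pairs out of log lines. It assumes the line format is exactly what appears in the excerpt above and nothing more; `mapped_accounts` is a hypothetical helper, not part of USMT.

```python
import re

# Matches lines such as: "... User CONTOSO\NED maps to FABRIKAM\NED"
MAP_LINE = re.compile(r"\bUser (?P<source>.+?) maps to (?P<target>.+?)\s*$")

def mapped_accounts(log_lines):
    """Return (source, target) account pairs found in USMT-style log lines."""
    pairs = []
    for line in log_lines:
        match = MAP_LINE.search(line)
        if match:
            pairs.append((match.group("source"), match.group("target")))
    return pairs

# Sample lines copied from the log excerpt in the question.
sample_log = [
    r"2010-06-29 17:59:35, Info [0x000000] User CONTOSO\NED maps to FABRIKAM\NED",
    r"2010-06-29 17:59:35, Info [0x000000] Adding domain account CONTOSO\NED",
    r"2010-06-29 17:59:35, Info [0x0803b2] Adding user CONTOSO\NED",
]
for source, target in mapped_accounts(sample_log):
    print(f"{source} -> {target}: make sure {target} exists before loading")
```

Feed it the lines of a real log file and compare the targets against the new domain before you run the migration for real.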

Question

What is Microsoft's best practice for where and how many DNS servers exist? What about for configuring DNS client settings on DC’s and members?

Answer

It depends on who you ask. :-) We in MS have been arguing this amongst ourselves for 11 years now. Here are the general guidelines that the Microsoft AD and Networking Support teams give to customers, based on our not inconsiderable experience with customers and their CritSits:

- If a DC is hosting DNS, it should point to itself at least somewhere in the client list of DNS servers.

- If at all possible on a DC, client DNS should point to another DNS server as primary and itself as secondary or tertiary. It should not point to self as primary due to various DNS islanding and performance issues that can occur. (This is where the arguments usually start)

- When referencing a DNS server on itself, a DNS client should always use a loopback address and not a real IP address.

- Unless there is a valid reason not to that you can concretely explain with more pros than cons, all DC’s in a domain should be running DNS and hosting at least their own DNS zone; all DC’s in the forest should be hosting the _MSDCS zones. This is default when DNS is configured on a new Win2003 or later forest’s DC’s. (Lots more arguments here).

- DC’s should have at least two DNS client entries.

- Clients should have these DNS servers specified via DHCP or by deploying via group policy/group policy preferences, to avoid admin errors; both of those scenarios allow you to align your clients with subnets, and therefore specific DNS servers. Having all the clients & members point to the same one or two DNS servers will eventually lead to an outage and a conversation with us and your manager. If every DC is a DNS server, clients can be fine-tuned to keep their traffic as local as possible and DNS will be highly available without special work or maintenance. It also means that branch offices can survive WAN outages and keep working, if they have local DC’s running DNS.

- We don’t care if you use Windows or 3rd party DNS. It’s no skin off our nose: you already paid us for the DC’s and we certainly don’t need you to buy DNS-only Windows servers. But we won’t be able to assist you with your BIND server, and their free product’s support is not free.

- (Other things I didn’t say that are people’s pet peeves, leading to even more arguments).
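The DC-side guidelines above are mechanical enough to check in a few lines. This is a rough sketch, assuming the DC hosts DNS and that you already have its ordered DNS client list in hand; `check_dc_dns_clients` and the sample addresses are hypothetical.

```python
import ipaddress

def check_dc_dns_clients(dns_servers, own_addresses=()):
    """Check a DNS-hosting DC's ordered DNS client list against the guidelines.

    dns_servers: DNS server IP strings in preference order.
    own_addresses: the DC's own non-loopback IP strings.
    Returns a list of findings; an empty list means nothing was flagged.
    """
    findings = []
    parsed = [ipaddress.ip_address(s) for s in dns_servers]
    own = {ipaddress.ip_address(a) for a in own_addresses}

    if len(parsed) < 2:
        findings.append("fewer than two DNS client entries")
    if not any(ip.is_loopback or ip in own for ip in parsed):
        findings.append("DC does not point to itself anywhere in the list")
    if any(ip in own for ip in parsed):
        findings.append("self is referenced by a real IP instead of loopback")
    if parsed and (parsed[0].is_loopback or parsed[0] in own):
        findings.append("self is listed as primary")
    return findings

# Another DNS server first, loopback second: nothing flagged.
print(check_dc_dns_clients(["10.0.0.11", "127.0.0.1"], ["10.0.0.10"]))
```

It only encodes the bullets in this post, arguments and all, so treat the findings as conversation starters rather than verdicts.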

There are plans afoot to consolidate all this info, expand it, and get our message consistent and consolidated. This has started in the Windows Server 2008 R2 BPA for DNS. We also recently released a new namespace planning site that explains and prevents some design pitfalls:

DNS Namespace Planning Solution Center

https://support.microsoft.com/namespace

And we offer this great guide and portal site:

Creating a DNS Infrastructure Design https://technet.microsoft.com/en-us/library/cc725625(WS.10).aspx

DNS Portal https://technet.microsoft.com/en-us/network/bb629410.aspx

Now flame on.

You aren’t using conditional forwarders? I don’t even know you anymore…

Question

We're moving to Windows 2008 R2 DC’s and I'm wondering what the best attribute is to determine unused user accounts. I've read the TechNet article here about “msDS-LastSuccessfulInteractiveLogonTime” and your blog here about “LastLogontimeStamp”. We're interested in completeness rather than up to the minute accuracy. Is using the msDS-LastSuccessfulInteractiveLogonTime attribute going to give complete results? Is one better to use than the other?

Answer

The problem with msDS-LastSuccessfulInteractiveLogonTime and its associated group policy “Display information about previous logons during user logon” is that they are going to only work for users logging on from Vista or later OS’s through CTRL+ALT+DEL (CredUI) and "Run As" operations (either through the Shell or with RUNAS.EXE). If there are accounts (like services, applications, etc) using creds elsewhere, this attribute will not be updated.

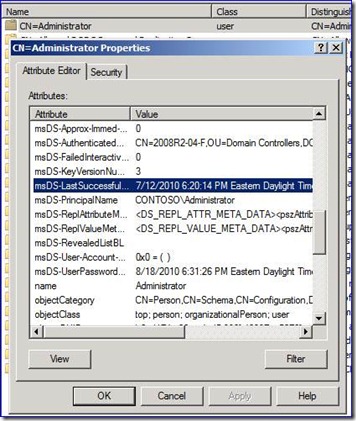

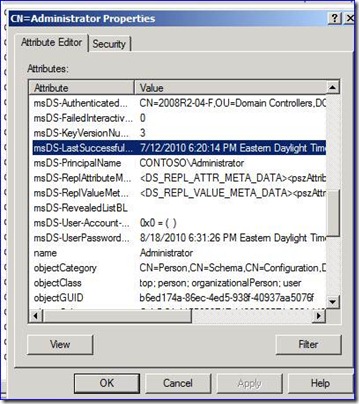

So for example, here I just logged in to some member computer using CTRL+ALT+DEL at the main logon screen, and logged on:

And here I then mapped a drive to another computer from that same client I just logged onto:

No difference; the value was not updated. I certainly logged on over there, but not interactively.

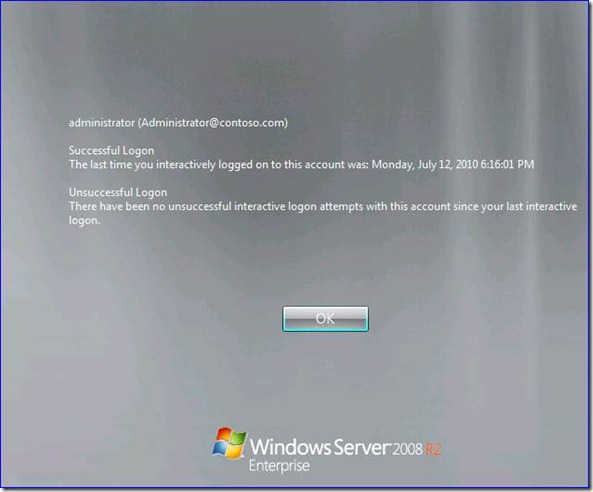

Also, using this policy changes the user logon experience pretty dramatically:

It’s designed more for high security environments where the end user would read this and say “What the… I didn’t log in yesterday. Haxors, call out the SWAT team!”. Mission Impossible movie stuff, where end users actually know what security and logons are. In reality the user will just click OK without reading, the same way they do when asked if they would like to install some malware. And you know the extra step will tick off some DB VP. This is really only valuable when your users are trained and cognizant about security – then it becomes very powerful indeed.

So sticking with the old Warren way is still valid. This was a great question, most folks don’t know about this new setting.
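If you do stick with LastLogonTimeStamp, remember it is stored as a large integer counting 100-nanosecond ticks since January 1, 1601 (UTC). Below is a minimal sketch of turning that into a date and flagging stale accounts. The 90-day cutoff, the account names, and the helper functions are all assumptions for illustration, and it takes for granted that you have already exported the raw values.

```python
from datetime import datetime, timedelta, timezone

FILETIME_EPOCH = datetime(1601, 1, 1, tzinfo=timezone.utc)

def filetime_to_datetime(value):
    """Convert an AD large-integer timestamp (100 ns ticks since 1601) to UTC."""
    return FILETIME_EPOCH + timedelta(microseconds=value // 10)

def datetime_to_filetime(moment):
    """Inverse conversion, used here only to build sample data."""
    return ((moment - FILETIME_EPOCH) // timedelta(microseconds=1)) * 10

def stale_accounts(accounts, now, max_age_days=90):
    """Return names whose timestamp is missing or older than the cutoff.

    accounts: mapping of account name -> lastLogonTimestamp integer (or None).
    """
    cutoff = now - timedelta(days=max_age_days)
    stale = []
    for name, stamp in accounts.items():
        if not stamp or filetime_to_datetime(stamp) < cutoff:
            stale.append(name)
    return sorted(stale)

now = datetime(2010, 7, 10, tzinfo=timezone.utc)
accounts = {  # hypothetical exported values
    "ned": datetime_to_filetime(datetime(2010, 7, 1, tzinfo=timezone.utc)),
    "olduser": datetime_to_filetime(datetime(2009, 1, 15, tzinfo=timezone.utc)),
    "neverlogged": None,
}
print(stale_accounts(accounts, now))
```

Because the attribute is only refreshed when the stored value is roughly 9 to 14 days old by default, keep the cutoff comfortably larger than that window; it suits "completeness" far better than "up to the minute".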

Other

Finally, we are still hiring full time employees here in DS support. We have not been overwhelmed with inquiries – so much for the great recession – so please send us your resume and come join us.

It's like working here.

Ok, more like here.

You won’t be bored.

- Ned “founded upon the observation of trifles” Pyle