Evaluating Windows Server and System Center on a Laptop (or two, or three) – Fabric

Building your Domain, Configuring Hyper-V and getting internet access for your VMs

Continuing on from my Introduction post, we’re now going to get our laptops to become the Fabric (compute, network and storage) for our evaluation of Windows Server and System Center.

Note: This is NOT best practice deployment advice. This is “notes from the field”. This is how to get by with what we have.

If you’re following along and have completed all the steps in the Introduction, you’ll now have a laptop (or two or three) dual booting into Windows Server and have the Hyper-V role enabled.

If you only have two laptops, your physical networking can be as simple as an Ethernet crossover cable. If you have three (or more) laptops, then you’ll need a switch - I use a little 8-port gigabit one. If you want to play with NIC Teaming, then you’ll need the switch and a few USB to Ethernet adapters (more on this in a subsequent post).

1. Internet access for your VMs (Network Fabric)

This might come across as putting the cart before the horse or the chicken before the egg, but you do need to decide on this early on (before you’ve even built your first virtual machine). Do you want/need your VMs to be able to connect to the internet? If you don’t, then feel free to ignore this part. If you do, then you have a few options:

Give all your VMs two virtual NICs

One uses your Ethernet cable to connect to other VMs and hosts in your lab and the other goes out over WiFi to the internet. This is “messy” – especially if you need to fire up your VMs in a hotel or other public place (each connection will need its own set of credentials). I don’t recommend this one – but it is an option.

Build a Firewall VM

Create a virtual machine with two virtual NICs – one connected to the Ethernet for your lab and one to WiFi. Install a firewall in it and set up web access rules. Have all your VMs use it for internet access (either set it as the default gateway or as the web proxy). When I use this option I install TMG (I know it’s been discontinued, but you can still download it from TechNet or MSDN).

This is a good solution if you’re using a set of pre-canned virtual machines, all pre-configured with their own IP addresses. You just need to configure the IP address on the lab interface of your firewall to match and you’re done (you may need to set the default gateway or web proxy on all your VMs too).

Use Internet Connection Sharing

This is the solution I use. It works everywhere I go, only needs one WiFi credential and gives all my VMs internet access (regardless of which laptop they are running on).

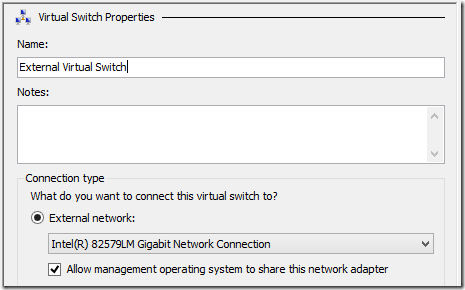

On all of your laptops, create an External Hyper-V Virtual Switch that connects to the physical Ethernet port on your laptop. Make sure you give them all the same name (or things will break later on) and make sure you select Allow management operating system to share this network adapter.

On one of your laptops, install the Wireless LAN Service feature. Disable WiFi on your other laptops. Now enable Internet Connection Sharing:

Open Network Connections (ncpa.cpl), right-click the WiFi connection, select Properties and on the Sharing tab, check Allow other network users to connect through this computer’s Internet connection.

This actually sets up an MSHOME network. It sets the IP address of your Ethernet connection to 192.168.0.1 and configures a DHCP server that hands out IP configurations with 192.168.0.1 as the default gateway and DNS server. Remember this – it will make sense later.

2. Building your Domain Controller (Compute Fabric)

Everything we do from now on requires Active Directory. Some people install AD directly onto their laptop – I don’t. I want my Hyper-V hosts (my laptops) to be dedicated to running Hyper-V and I want to be able to copy ALL my VMs somewhere else and have them still work. Having a virtual Domain Controller gives me just that.

Do these steps on the laptop with the WiFi enabled:

Create a new VM (DC01). Connect it to the External Virtual Switch we created earlier and install Windows Server.

It will pick up an IP configuration from your MSHOME network and will have full Internet access.

Optional step – create a VM Template

There’s no harm doing this step now – it will come in handy later (and doesn’t take too long).

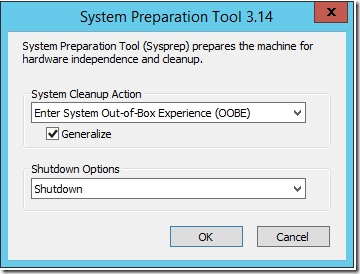

On your VM, run Windows Update, reboot and run Sysprep (Generalize & Shutdown)

Copy the VHD file away somewhere – you can use it as the starting point for all of your new VMs (rename it to something meaningful).

Continue building your Domain Controller – start the VM (DC01), finish off setup and change the computer name to DC01.

Give it a static IP address on the 192.168.0.0/24 network (I use 192.168.0.100 just because it’s easy to remember!) and set the Default Gateway and DNS to 192.168.0.1

Check that you still have internet access.

Now install DNS and Active Directory Domain Services (new forest). You will lose Internet access because the preferred DNS server on DC01 has been set to 127.0.0.1 (the loopback address), so the VM now asks its own DNS service, which doesn’t yet know how to resolve internet names. To get it back you need to configure a DNS forwarder:

On DC01, in DNS Manager, right-click the server and select Properties. On the Forwarders tab click Edit and enter the IP addresses of the DNS servers on your network.

From a machine on your network, type IPCONFIG /ALL and look at the addresses of your DNS Servers. If they are different from the address of your Default Gateway, use them (they are your DNS Servers). If they are the same, then the router on your network has the DNS configuration (it’s sending all DNS requests to your ISP) – if you can get that information, use it. If not, you can simply use a public DNS service (don’t tell anyone, but I use 8.8.8.8 and 8.8.4.4).
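The rule of thumb above can be written down as a small function. This is only a sketch in Python: the name choose_forwarders, its parameters and the example addresses are mine, made up for illustration, and nothing here talks to Windows or to DNS Manager. You still read the real values off IPCONFIG /ALL by hand.

```python
from ipaddress import ip_address

# Public resolvers mentioned in the post, used only as the last resort.
PUBLIC_DNS = ["8.8.8.8", "8.8.4.4"]


def choose_forwarders(dns_servers, default_gateway, isp_dns=None):
    """Pick DNS forwarders for DC01 (hypothetical helper, not a Windows API).

    dns_servers     -- DNS servers shown by IPCONFIG /ALL, as strings
    default_gateway -- default gateway shown by IPCONFIG /ALL, as a string
    isp_dns         -- your ISP's resolvers, if you managed to find them out
    """
    gateway = ip_address(default_gateway)
    # Servers that differ from the gateway are real DNS servers: use them.
    real_servers = [s for s in dns_servers if ip_address(s) != gateway]
    if real_servers:
        return real_servers
    # Otherwise the router is only relaying DNS requests to the ISP.
    if isp_dns:
        return list(isp_dns)
    return list(PUBLIC_DNS)


# Example: a home router that lists itself as the only DNS server.
print(choose_forwarders(["192.168.1.1"], "192.168.1.1"))
```

Whatever list comes back is what you would type into the Forwarders tab on DC01.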

Your Domain Controller installation is complete and it should have full internet access.

3. Joining your hosts (laptops) to the domain (Compute Fabric)

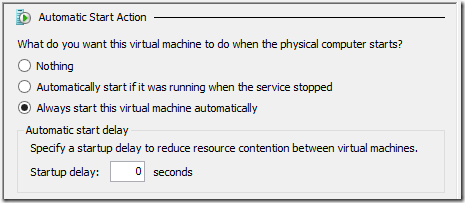

By default, a virtual machine starts automatically only if it was running when its host was shut down. You want your new Domain Controller to always start. In Hyper-V Manager, right-click your Domain Controller VM and go into its Settings. Scroll down to the Automatic Start Action and set it to Always start this virtual machine automatically (without a delay).

Still on your first laptop, go into Network Settings (ncpa.cpl) and set the DNS server to be 192.168.0.100 on your Ethernet Virtual Switch.

Now simply join the domain and reboot. The virtual DC will automatically start and your laptop will (eventually) authenticate with it. You can now log on to your laptop with the Domain Administrator credentials.

On your second (and third) laptop, you have a choice to make (we like choices):

To use Static IP Addresses

If you do nothing, your second laptop will pick up an IP configuration from the MSHOME network running on your first laptop and will not be able to find your domain. So you either have to give it a static IP address on the 192.168.0.0/24 network (with 192.168.0.1 as its Default Gateway and 192.168.0.100 as its DNS server) or you need to configure a DHCP server for your network that overrides the MSHOME one. I prefer to use static addresses for my hosts, so we’ll ignore the DHCP option for now (we will come back to it in a later post).

So with a static IP configuration, join the domain and reboot. You should have full internet access via the Internet Connection Sharing on your first laptop.
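If you’re handing out static addresses by hand, it’s worth a quick sanity check that they sit on the right network and don’t clash with the two addresses already in use (192.168.0.1 on the first laptop and 192.168.0.100 on DC01). Here is a minimal sketch in Python using the standard ipaddress module. The function name check_host_plan and the laptop names and addresses in the example are hypothetical, and the script only checks the numbers; it doesn’t configure anything.

```python
from ipaddress import ip_address, ip_network

LAB_NETWORK = ip_network("192.168.0.0/24")
ICS_GATEWAY = ip_address("192.168.0.1")   # first laptop, set by ICS
DC01 = ip_address("192.168.0.100")        # domain controller and DNS server


def check_host_plan(hosts):
    """Return a list of problems found in a {name: address} plan."""
    problems = []
    seen = {ICS_GATEWAY: "the ICS gateway", DC01: "DC01"}
    for name, text in hosts.items():
        addr = ip_address(text)
        if addr not in LAB_NETWORK:
            problems.append(f"{name}: {addr} is outside {LAB_NETWORK}")
        elif addr in (LAB_NETWORK.network_address,
                      LAB_NETWORK.broadcast_address):
            problems.append(f"{name}: {addr} is not a usable host address")
        elif addr in seen:
            problems.append(f"{name}: {addr} is already used by {seen[addr]}")
        else:
            seen[addr] = name
    return problems


# Hypothetical addresses for the second and third laptops.
plan = {"LAPTOP2": "192.168.0.12", "LAPTOP3": "192.168.0.13"}
print(check_host_plan(plan))
```

An empty list means the plan is consistent with the setup described in this post; each host still needs 192.168.0.1 as its Default Gateway and 192.168.0.100 as its DNS server.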

4. Virtual Machine storage (Storage Fabric)

We’re using laptops, so Storage Fabric is a bit far-fetched – we’ll be using the disks (preferably SSDs) in the laptops. If your laptop has USB 3.0, eSATA or FireWire then an external disk caddy is a good idea. The only downside to external disks is that it’s just something else to carry! My laptops have a pair of 256GB SSDs in them.

We will look into other storage technologies in future posts, but as far as our Fabric (compute, network and storage) is concerned, we’re done.

We now (should) have a laptop (or two or three) all running Windows Server and Hyper-V, domain joined and with internet access.

Enjoy

@DaveNorthey

Part 1 in this series is here: Introduction

Part 2 in this series is here: Fabric

Part 3 in this series is here: Failover Clustering (part 1)

Part 4 in this series is here: Failover Clustering (part 2)