Network Settings, Network Teaming, Receive Side Scaling (RSS) & Unbalanced CPU Load

Modern, powerful servers running multiple SAP application server instances can generate a large amount of network traffic and can easily saturate a 1 Gigabit connection. Unless the network connections are correctly configured, the network can become a severe bottleneck.

All customers, whether small, medium or large, are strongly recommended to standardize on 10 Gigabit network technology for all new purchases, even if 10 Gigabit network switches are not available.

10 Gigabit network cards and their associated drivers include newer technologies for distributing network processing across multiple processors and for handling Processor Groups (K-Groups) and NUMA topologies.

How to Identify a Common Network Problem?

The scenario below shows an HP DL980 dedicated database server and 3 x DL380 application servers. Each DL380 runs 4 SAP instances (system numbers 00, 01, 02 and 03), each with ~50 work processes. A useful way to test for network bottlenecks is to run SGEN, which generates a lot of network traffic and also stresses the CPU on the SAP application servers. To stress the DB server further, it is also possible to run a CHECKDB and a backup simultaneously. Such a test is best done before go-live or during an off-peak period. Running SGEN while users are on the system is not recommended, as they will likely receive “LOAD_VERSION_LOST” short dumps.

In the scenario below, the following steps were performed:

- Run SGEN

- Run DBCC CHECKDB (<SID>) WITH PHYSICAL_ONLY

- Run BACKUP DATABASE <SID> TO DISK='NUL:' WITH COPY_ONLY;

- Run Task Manager, open the View menu and select “Show Kernel Times”

- Run Perfmon with some specific counters (listed below)

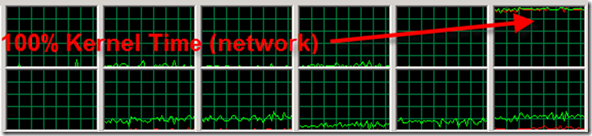

Figure 1 shows that processor 11 (processors are numbered from 0) is pegged at 100% kernel time.

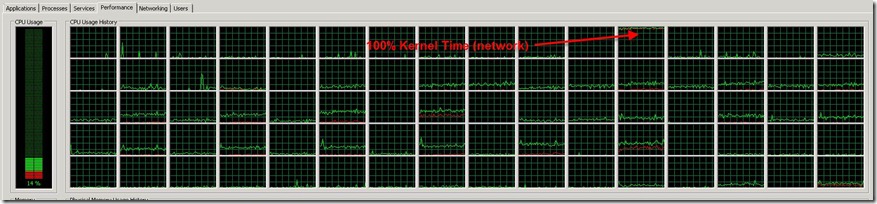

Figure 2 shows all 80 logical processors. Only one of them is particularly busy; the other 79 logical processors are almost idle.

How to verify this is a Receive Side Scaling issue?

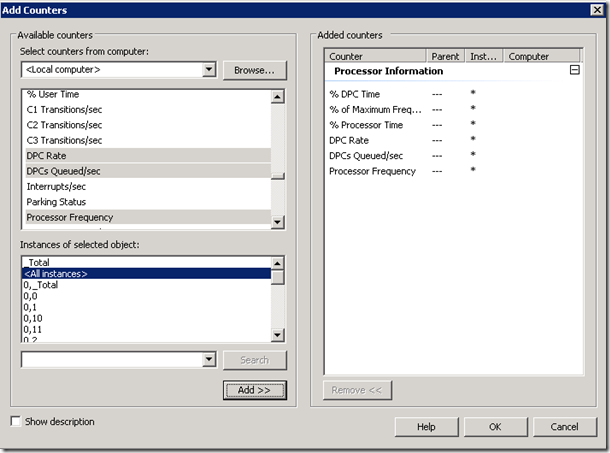

Windows includes a powerful tool, Perfmon. As of Windows Server 2008 R2, the “Processor Information” counter object should be used. Select the following counters:

Figure 3 shows the required Perfmon counters, with <All instances> selected.

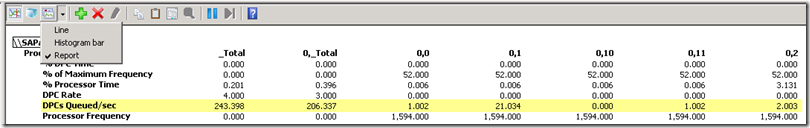

Figure 4 shows Perfmon switched to report view. DPCs Queued/sec is extraordinarily high on processor 11 (not visible in this screenshot because of its width).

If the following symptoms appear, it is highly likely that the network is misconfigured:

- One processor in Task Manager shows very high utilization, and most of that utilization is shown in red, meaning kernel time

- Most of the other processors are lightly loaded or idle

- Perfmon DPCs Queued/sec shows a high number of queued DPCs on the processor identified in the first symptom
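The three symptoms above can be combined into a rough automated check. The sketch below assumes per-processor values have already been read from Perfmon (for example from a report-view export); the `CpuSample` structure, the `find_rss_suspect` function and every threshold are hypothetical illustrations, not values from this blog, and should be tuned against your own baseline.

```python
from dataclasses import dataclass
from typing import Optional, Sequence


@dataclass
class CpuSample:
    """One per-logical-processor sample (hypothetical structure).

    Fields mirror the Perfmon counters % Processor Time,
    % Privileged Time (kernel time, red in Task Manager) and DPCs Queued/sec.
    """
    processor: int
    total_pct: float
    kernel_pct: float
    dpcs_queued_per_sec: float


def find_rss_suspect(samples: Sequence[CpuSample],
                     busy_pct: float = 90.0,
                     idle_pct: float = 20.0,
                     idle_share: float = 0.9,
                     dpc_ratio: float = 10.0) -> Optional[int]:
    """Return the processor that matches all three symptoms, else None.

    All thresholds are illustrative assumptions.
    """
    if len(samples) < 2:
        return None
    hot = max(samples, key=lambda s: s.kernel_pct)
    others = [s for s in samples if s.processor != hot.processor]

    # Symptom 1: one processor is very busy, mostly in kernel time.
    if hot.total_pct < busy_pct or hot.kernel_pct < 0.5 * hot.total_pct:
        return None
    # Symptom 2: most of the other processors are lightly loaded or idle.
    quiet = sum(1 for s in others if s.total_pct < idle_pct)
    if quiet < idle_share * len(others):
        return None
    # Symptom 3: DPCs queued on the hot processor dwarf the others' average.
    avg_other_dpc = sum(s.dpcs_queued_per_sec for s in others) / len(others)
    if hot.dpcs_queued_per_sec < dpc_ratio * max(avg_other_dpc, 1.0):
        return None
    return hot.processor


# Made-up data shaped like Figures 1 and 2: 80 logical processors, #11 pegged.
samples = [CpuSample(i, 5.0, 2.0, 50.0) for i in range(80)]
samples[11] = CpuSample(11, 100.0, 100.0, 20000.0)
print(find_rss_suspect(samples))  # 11
```

A `None` result only means this particular pattern was not found; it does not prove the network configuration is correct.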

What Is Happening & How to Fix This Network Issue?

The problem is that all network processing is bottlenecked on one logical processor. The network has been misconfigured, and this leads to severe performance problems. Such problems are more severe and pronounced on more powerful 4-processor and 8-processor Intel servers; therefore, the network configuration on these servers is extremely important.

Modern network cards support Receive Side Scaling (RSS), a technology that distributes network processing over multiple processors, thereby avoiding the bottleneck seen above.

10 Gigabit network cards generally have better and more configurable device drivers than 1 Gigabit network cards. In addition, most 10 Gigabit network cards are aware of Processor Groups and NUMA topologies.

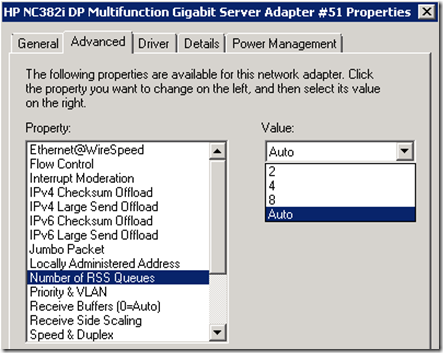

How to Switch On RSS for a Stand Alone Network Card

Most 1 Gigabit network cards support RSS and up to 8 queues.

All 10 Gigabit network cards support RSS with 16 or more queues.

Different network card vendors use slightly different terminology: Queues, Rings and Processors are all common terms. This blog uses the term “Queue”. Essentially, the number of queues is the number of processors that will handle network traffic processing.

Note: more queues do not always mean better network throughput. It is best to leave this setting at “Auto” if available. If Auto is not available, set it to a number equal to or less than the number of logical processors per physical processor; otherwise, expensive NUMA memory access could occur.

Example A: 4-processor server with 4 cores per processor and Hyperthreading switched off. The ideal value would be 4. Setting the value to 8 could lead to expensive NUMA access and result in poor network performance.

Example B: 8-processor server with 10 cores per processor and Hyperthreading switched on. Each physical processor (which is one NUMA node) has 10 hyperthreaded cores and therefore presents 20 logical processors. An RSS queue value of 16 is possible as long as Hyperthreading is switched on. If Hyperthreading is switched off, remember to reduce the number of RSS queues to fewer than 10; otherwise, network processing could occur across NUMA nodes.
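The sizing rule in the two examples can be expressed as a small calculation. This is a minimal sketch, assuming one physical processor equals one NUMA node (as in the examples) and a hypothetical driver that only offers the queue values listed in `supported_values`; real drivers differ, and “Auto” should still be preferred where it exists.

```python
def recommended_rss_queues(cores_per_socket: int,
                           hyperthreading: bool,
                           supported_values=(1, 2, 4, 8, 16)) -> int:
    """Largest queue count the (hypothetical) driver offers that fits in one NUMA node."""
    # Logical processors presented by one physical processor / NUMA node.
    logical_per_node = cores_per_socket * (2 if hyperthreading else 1)
    fitting = [q for q in supported_values if q <= logical_per_node]
    if not fitting:
        raise ValueError("no supported queue count fits in one NUMA node")
    return max(fitting)


print(recommended_rss_queues(4, hyperthreading=False))   # Example A: 4
print(recommended_rss_queues(10, hyperthreading=True))   # Example B: 16
print(recommended_rss_queues(10, hyperthreading=False))  # Example B, HT off: 8
```

With Hyperthreading off in Example B, only 10 logical processors remain per node, so with these assumed driver values the largest fitting choice is 8, which matches the advice to stay below 10.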

Sometimes it can be difficult to determine exactly how many processors, cores and logical processors a system has. If you are unsure, it is recommended to read this blog, run MSINFO32 and download coreinfo.exe.

Figure 5 above shows the number of RSS queues. In general, use “Auto” if available.

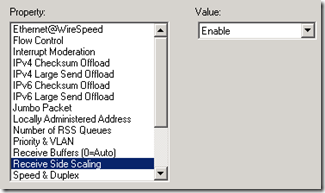

Figure 6 above shows RSS enabled. High-throughput links should always have RSS enabled. Links such as cluster heartbeat links should not have RSS enabled.

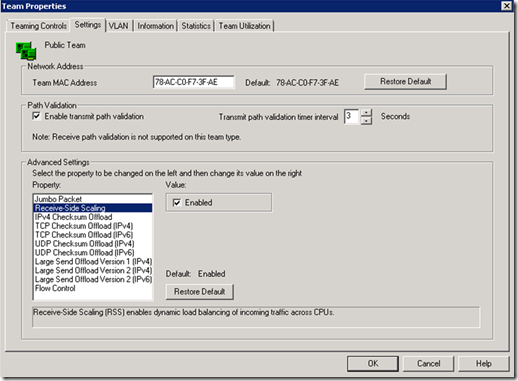

How to Switch On RSS for a Teamed Network Card

To increase aggregate bandwidth and provide fault tolerance, some customers team network connections. It is important that the network teaming utility supports RSS; otherwise, the network teaming may itself cause a severe performance bottleneck.

HP, Dell, NEC and IBM all have teaming utilities. It is important to update to the latest version available. Older versions of the HP Network Configuration Utility (NCU) do not support RSS for teams and will create a huge bottleneck. It is recommended to use NCU 10.45 or later for HP servers.

Figure 7 shows the NCU 10.45 for HP servers with RSS enabled

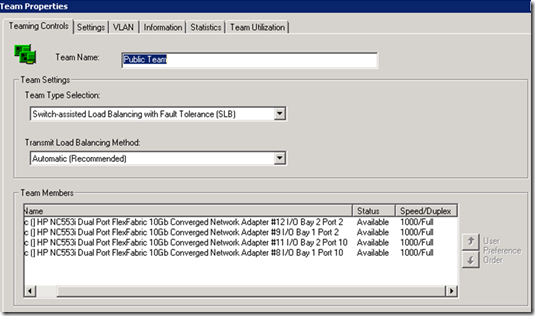

Additional Note About Network Teaming

Software-based network teaming usually aggregates transmit bandwidth only: the teaming software can spread sent/transmitted data across the team members.

To aggregate both transmit and receive bandwidth, switch port trunking is needed. This requires the Cisco/network administrator to configure the ports on the switch.

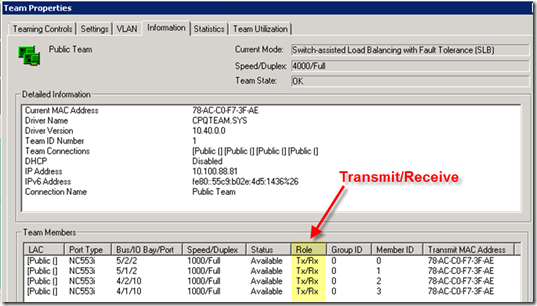

Figure 8 shows Switch Assisted Load Balancing (SLB) used to aggregate 4 x 1 Gigabit links

Figure 9 shows that all four ports can send and receive. Software-only teaming would show 4 x Tx (transmit) and 1 x Rx (receive).
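The difference between software-only teaming and switch-assisted load balancing can be summarized with simple arithmetic. The sketch below uses a deliberately simplified model (an assumption, not vendor documentation): it ignores protocol overhead and per-flow hashing, under which a single connection typically uses only one link.

```python
def team_bandwidth_gbps(links: int, link_gbps: float, switch_assisted: bool):
    """Return (transmit, receive) aggregate bandwidth of a team in Gbit/s.

    Simplified model: software-only teaming spreads transmit traffic over all
    links but receives on a single link; switch-assisted load balancing
    (port trunking configured on the switch) aggregates both directions.
    """
    if links < 1 or link_gbps <= 0:
        raise ValueError("need at least one link with positive speed")
    tx = links * link_gbps
    rx = tx if switch_assisted else link_gbps
    return tx, rx


print(team_bandwidth_gbps(4, 1.0, switch_assisted=True))   # (4.0, 4.0)
print(team_bandwidth_gbps(4, 1.0, switch_assisted=False))  # (4.0, 1.0)
```

The second call corresponds to the “4 x Tx and 1 x Rx” pattern of software-only teaming.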

Medium-sized (3,000-5,000 users) and large (>10,000 users) SAP systems are recommended to have two networks: one for traffic between the SAP application servers and the SQL Server DB server, and one for public access to the SAP application servers (via SAPGUI, RFC, etc.). This ensures that adequate bandwidth and low latency are always available between the SAP application servers and the SQL Server DB server.

Multi-homed servers must meet the following requirement: name resolution from the DB host to all application servers must be consistent in both directions:

IPx -> Hostname x -> IPx

Hostname x -> IPx -> Hostname x

The SAP trace files dev_disp and dev_ms will display warnings if the above conditions are not met.

Note 21151 - Multiple Network adapters in SAP Servers (download the attachments and read them)

Note 129997 - Hostname and IP address lookup (quoted from this note):

It is crucial for the operation of the R/3 system that the following requirement is fulfilled for all hosts running R/3 instances:

a) The hostname of the computer (or the name that is configured with the profile parameter SAPLOCALHOST) must be resolvable into an IP address.

b) This IP address must resolve back into the same hostname. If the IP address resolves into more than one address, the hostname must be first in the list.

c) This resolution must be identical on all R/3 server machines that belong to the same R/3 system.
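Requirement c) is easy to violate when individual servers carry their own hosts file entries. A minimal sketch for comparing lookups collected on every server (for example by running nslookup on each one) is shown below; the `inconsistent_lookups` function, the collection method and the sample data are hypothetical.

```python
from typing import Dict, List


def inconsistent_lookups(views: Dict[str, Dict[str, str]]) -> List[str]:
    """Return host names that do not resolve to the same IP on every server.

    `views` maps each server to the {hostname: ip} results it obtained.
    A host name missing from one server's view also counts as inconsistent.
    """
    all_names = set()
    for table in views.values():
        all_names.update(table)
    bad = []
    for name in sorted(all_names):
        seen = {table.get(name) for table in views.values()}
        if len(seen) != 1 or None in seen:
            bad.append(name)
    return bad


views = {
    "dbhost":   {"sapapp01": "10.0.1.21", "sapapp02": "10.0.1.22"},
    "sapapp01": {"sapapp01": "10.0.1.21", "sapapp02": "10.0.1.22"},
    "sapapp02": {"sapapp01": "192.168.5.21", "sapapp02": "10.0.1.22"},
}
print(inconsistent_lookups(views))  # ['sapapp01']
```

Here sapapp02 resolves sapapp01 to a different address than the other servers do, which is exactly the kind of mismatch requirement c) rules out.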

Note 364552 - Loadbalancing does not find application server

It is recommended to do the following:

- Open Command Prompt

- Run hostname

- Run nslookup <hostname>

- Run ping -a <IP address returned by nslookup>

- Adjust the bindings as needed under Advanced Network Properties

- Remove any entries in the hosts file (%SystemRoot%\System32\drivers\etc\hosts)

Figure 10 shows the Adapters and Bindings tab, which can be used to adjust the binding order.

Final Recommendations

When renewing hardware or migrating to SQL Server, it is highly recommended to use 10 Gigabit technologies, especially on larger scale-up servers.

Common configurations used by customers today are:

SAP application server/SQL DB server:

- 2 x Intel Nehalem (6-8 core) or AMD Processors (12-16 core) ~28,000-35,000 SAPS or more

- 128GB RAM

- 2 x Dual Port HBA (required for DB servers only)

- Dual 10 Gigabit NIC

SQL Server DB 4-way servers:

- 4 x Intel Nehalem (10 core) or AMD Processors (12-16 core) ~55,000 – 74,000 SAPS

- 512GB RAM

- 2 or 4 Dual Port HBA

- 2 x Dual port 10 Gigabit NIC (on board 1 Gbps NIC used for cluster heartbeat)

SQL Server DB 8-way servers:

- 8 x Intel Nehalem 10 core Processors > 135,000 SAPS

- 512 GB-1 TB RAM

- 2 or 4 Dual Port HBA

- 2 x Dual port 10 Gigabit NIC (on board 1 Gbps NIC used for cluster heartbeat)

It is strongly recommended not to run SAP ABAP or Java application servers on scale-up servers. SQL Server is Processor Group and NUMA aware, but SAP is not able to run effectively and efficiently on NUMA scale-up hardware. The SAP ABAP application server runs best on 2-processor configurations. Customers trying to run SAP ABAP systems on large scale-up 8-way systems have reported significant and serious performance problems; for example, in some configurations the ABAP server will by default run on only one processor out of eight. 2-processor Intel commodity servers are recommended for SAP applications.

SQL Server is fully able to utilize scale up NUMA server platforms.

More information on RSS can be found here: Scalable Networking: Eliminating the Receive Processing Bottleneck - Introducing RSS

During OS/DB migrations from UNIX/Oracle/DB2 to Windows/SQL Server, it is highly recommended to use 10 Gigabit NICs, because the network traffic from dedicated R3LOAD server(s) can be very high.

![image 10](https://msdntnarchive.blob.core.windows.net/media/MSDNBlogsFS/prod.evol.blogs.msdn.com/CommunityServer.Blogs.Components.WeblogFiles/00/00/00/84/42/metablogapi/6204.clip_image0028_thumb_0544626C.jpg)