Interactive Lab on Microsoft Cognitive Services at Oxford

Guest post from Shu Ishida, Microsoft Team Leader at Oxford University

My name is Shu Ishida. I am a third-year engineering student and team lead for the Microsoft Student Partner Chapter at the University of Oxford. I am into web development and machine learning, and my ambition is to help break down educational, cultural and social barriers through technological innovation. My current interest is in applications of Artificial Intelligence to e-Learning. I also have a passion for outreach and teaching, and have mentoring experience from summer schools as an Oxford engineering student ambassador.

LinkedIn: https://www.linkedin.com/in/shu-ishida

On 26th February, Oxford Microsoft Student Partners hosted a Microsoft speaker event. We were delighted to welcome Ms Frances Tibble, who kindly travelled to Oxford to run an interactive lab on Microsoft Cognitive Services.

Frances (@frances_tibble) recently graduated with a degree in Computing from Imperial College London, having completed a final year project with Microsoft Research. She then joined Microsoft as a Software Engineer and now specialises in Machine Learning and AI.

In the session, the attendees learnt how to build on Microsoft’s Cognitive Services, which are well suited to hackathon projects. It was a beginner-friendly session that assumed no programming experience and walked people through how to set up image classification and language models. Frances provided us with the starter code on GitHub.

Part 1: Vision

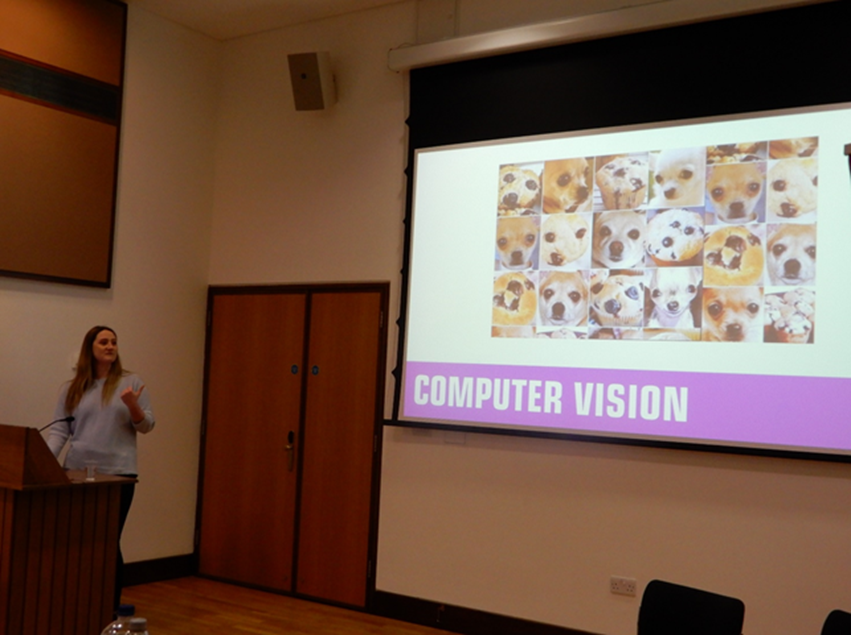

Frances started off by showing us the breadth of AI solutions Microsoft has on offer. After a general overview of all the APIs and services, Frances gave us a demo of how we could use the Computer Vision API to produce an image caption or identify text, using an image of a college in Oxford.

Pictured: "Dog versus blueberry muffin" is an example of images that are visually similar. For a human, these aren't too difficult to identify, but it proves a far more challenging task for a machine.
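For readers who want to try the captioning demo themselves, here is a minimal sketch of what a call to the Computer Vision "describe" operation might look like over REST. It is not the code from the session: the endpoint host, key and image URL are placeholders, the `v3.2` path is an assumption about which API version you are using, and the sample response is made up for illustration.

```python
import json
import urllib.request

# Placeholder values: substitute the endpoint and key of your own resource.
ENDPOINT = "https://example.cognitiveservices.azure.com"
API_KEY = "your-subscription-key"


def build_describe_request(image_url, endpoint=ENDPOINT, key=API_KEY):
    """Build (but do not send) a POST request asking for an image description."""
    body = json.dumps({"url": image_url}).encode("utf-8")
    return urllib.request.Request(
        url=endpoint + "/vision/v3.2/describe",  # assumed API version
        data=body,
        headers={
            "Ocp-Apim-Subscription-Key": key,
            "Content-Type": "application/json",
        },
        method="POST",
    )


def best_caption(response):
    """Return the highest-confidence caption text, or None if there is none."""
    captions = response.get("description", {}).get("captions", [])
    if not captions:
        return None
    return max(captions, key=lambda c: c["confidence"])["text"]


# Made-up response for illustration only, not real service output.
sample_response = {
    "description": {
        "tags": ["building", "outdoor", "grass"],
        "captions": [{"text": "a large stone building", "confidence": 0.91}],
    }
}
print(best_caption(sample_response))
```

With a real key, the request could be sent with `urllib.request.urlopen(request)` and the JSON body passed to `best_caption`.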

The demo was followed by a hands-on lab where we built our own machine learning model to classify images using Microsoft's Custom Vision Service. This was taken from a real-life example, where image classification was used for analysing and labelling different sign languages. For the lab, we specifically trained the model to identify the signs that correspond to ‘rabbit’ and ‘sleeping’. With just 5 training images for each class, we were able to achieve a remarkable accuracy of over 95%.
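Once a model like this is trained, the prediction endpoint returns a probability for each tag. The sketch below shows one way to pick the winning tag from such a response. It assumes the response has a `predictions` list whose items carry `tagName` and `probability` fields (field names differ between API versions), and the numbers are invented for illustration rather than taken from the lab.

```python
def top_prediction(response, threshold=0.5):
    """Return (tag_name, probability) for the most likely tag.

    Returns None when there are no predictions or when even the best
    one falls below the confidence threshold.
    """
    predictions = response.get("predictions", [])
    if not predictions:
        return None
    best = max(predictions, key=lambda p: p["probability"])
    if best["probability"] < threshold:
        return None
    return best["tagName"], best["probability"]


# Invented example response: an image of the 'rabbit' sign.
sample_response = {
    "predictions": [
        {"tagName": "rabbit", "probability": 0.97},
        {"tagName": "sleeping", "probability": 0.03},
    ]
}
print(top_prediction(sample_response))
```

The threshold is a design choice: with only two classes, an image that shows neither sign will still get a "best" tag, so rejecting low-confidence results avoids reporting a sign that is not there.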

Part 2: Language (30 mins)

Then we had a deep-dive presentation on Microsoft Language Services. Frances mentioned her recent project for a hospital, which needed a handy service that doctors and nurses in a hurry could use to find unoccupied beds and to identify which ward each patient is in. Using LUIS (Language Understanding Intelligent Service), we were able to create our own custom language model that could understand the request a doctor or nurse might make whilst out on the ward.

Pictured: LUIS uses utterances – phrases a user might say, intents – their intended action, and entities – keywords in a sentence, to add intelligence to language.

After some explanation and a demo of how to use LUIS, Frances walked us through a lab to build our own custom language model. We learnt the concepts of intents and entities. In the hospital ward example, a nurse might make the utterance "is there a free bed on ward 1A?". Their intended action, their intent, is to find a free bed. LUIS is able to pick out the keyword (entity) 'ward 1A', which we could later use to query a database in order to return a useful result. Frances showed us how we could build an accurate language model in a short space of time.
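To make the intent and entity idea concrete, here is a small sketch of how an application might act on a LUIS result for the ward example. It assumes a v2-style response with `topScoringIntent` and `entities` fields. The intent name `FindFreeBed`, the entity type `Ward` and the in-memory bed table are hypothetical names chosen for illustration, not the ones used in the lab or in Frances' project.

```python
# Hypothetical in-memory stand-in for the hospital's bed database.
FREE_BEDS = {"1A": 2, "2B": 0}


def handle_luis_result(result, free_beds=FREE_BEDS):
    """Turn a LUIS-style result into a reply for the nurse or doctor."""
    intent = result.get("topScoringIntent", {}).get("intent")
    if intent != "FindFreeBed":  # hypothetical intent name
        return "Sorry, I did not understand that request."

    # Collect the text of every entity tagged with the hypothetical 'Ward' type.
    wards = [e["entity"] for e in result.get("entities", []) if e.get("type") == "Ward"]
    if not wards:
        return "Which ward do you mean?"

    # Normalise 'ward 1a' to the key format used by the bed table: '1A'.
    ward = wards[0].replace("ward", "").strip().upper()
    count = free_beds.get(ward)
    if count is None:
        return f"I do not know ward {ward}."
    if count == 0:
        return f"There are no free beds on ward {ward}."
    return f"Ward {ward} has {count} free bed(s)."


# Invented result for the utterance from the lab.
sample_result = {
    "query": "is there a free bed on ward 1A?",
    "topScoringIntent": {"intent": "FindFreeBed", "score": 0.97},
    "entities": [{"entity": "ward 1a", "type": "Ward", "score": 0.93}],
}
print(handle_luis_result(sample_result))
```

The language model only has to map the utterance to an intent and an entity. The lookup itself stays in ordinary application code, which is why swapping the dictionary for a real database query would not change the LUIS side at all.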

Closing

After the event, many stayed on to enjoy the social, where we sat around in a circle on sofas and talked about AI, quantum computing and Frances’ life at Microsoft.

We would like to thank Ms Frances Tibble for the entertaining session!