Quickstart: Run a workflow through the Microsoft Genomics service

In this quickstart, you upload input data into an Azure Blob storage account, and run a workflow through the Microsoft Genomics service by using the Python Genomics client. Microsoft Genomics is a scalable, secure service for secondary analysis that can rapidly process a genome, starting from raw reads and producing aligned reads and variant calls.

Prerequisites

- An Azure account with an active subscription. Create an account for free.

- Python 2.7.12+, with pip installed, and python in your system path. The Microsoft Genomics client isn't compatible with Python 3.

Set up: Create a Microsoft Genomics account in the Azure portal

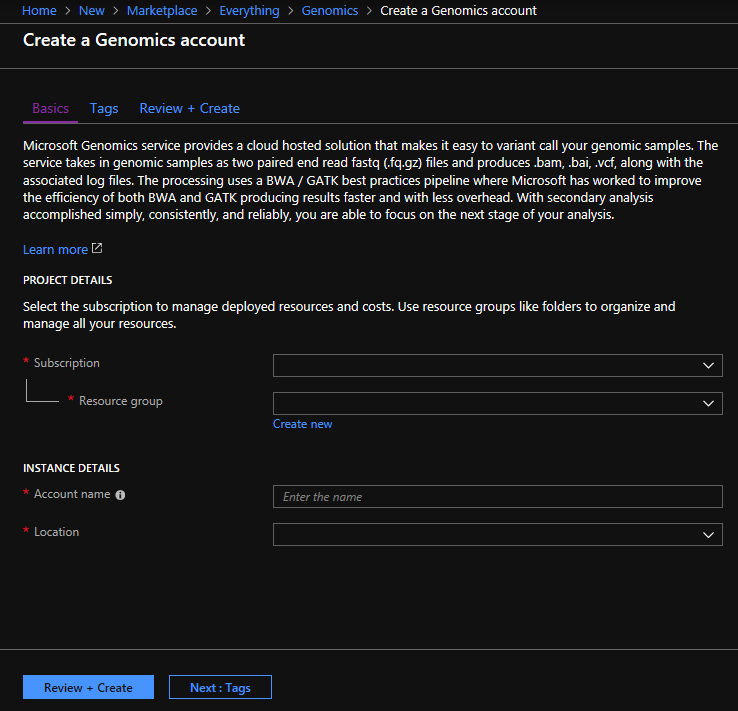

To create a Microsoft Genomics account, navigate to Create a Genomics account in the Azure portal. If you don’t have an Azure subscription yet, create one before creating a Microsoft Genomics account.

Configure your Genomics account with the following information, as shown in the preceding image.

| Setting | Suggested value | Field description |
|---|---|---|
| Subscription | Your subscription name | The billing unit for your Azure services. For details about your subscription, see Subscriptions. |
| Resource group | MyResourceGroup | Resource groups let you manage multiple Azure resources (storage account, genomics account, etc.) as a single group. For more information, see Resource Groups. For valid resource group names, see Naming Rules. |
| Account name | MyGenomicsAccount | Choose a unique account identifier. For valid names, see Naming Rules. |
| Location | West US 2 | The service is available in West US 2, West Europe, and Southeast Asia. |

You can select Notifications in the top menu bar to monitor the deployment process.

For more information about Microsoft Genomics, see What is Microsoft Genomics?

Set up: Install the Microsoft Genomics Python client

You need to install both Python and the Microsoft Genomics Python client msgen in your local environment.

Install Python

The Microsoft Genomics Python client is compatible with Python 2.7.12 or a later 2.7.xx version; 2.7.14 is the suggested version. You can download it from the Python website.

Important

Python 3.x isn't compatible with Python 2.7.xx. msgen is a Python 2.7 application. When running msgen, make sure that your active Python environment is using a 2.7.xx version of Python. You may get errors when trying to use msgen with a 3.x version of Python.
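A quick way to confirm which interpreter is active is to check its version before installing or running msgen. The following is a minimal sketch, not part of the msgen client; the function name `is_supported_python` is hypothetical, and the version bound comes from the requirement stated above.

```python
import sys

# Lowest 2.7.xx release named in this quickstart.
MIN_SUPPORTED = (2, 7, 12)


def is_supported_python(version_info):
    """Return True for a 2.7.xx release at or above 2.7.12."""
    major, minor, micro = version_info[0], version_info[1], version_info[2]
    return (major, minor) == (2, 7) and (major, minor, micro) >= MIN_SUPPORTED


if __name__ == "__main__":
    if is_supported_python(sys.version_info):
        print("This interpreter matches the version msgen expects.")
    else:
        print("msgen expects Python 2.7.12 or a later 2.7.xx release.")
```

Run the script with the same `python` command that you plan to use for msgen, so that the check reflects the environment msgen will actually run in.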

Install the Microsoft Genomics Python client msgen

Use Python pip to install the Microsoft Genomics client msgen. The following instructions assume that Python 2.x is already in your system path. If the pip install command isn't recognized, add Python and its scripts subfolder to your system path.

pip install --upgrade --no-deps msgen

pip install msgen

If you don't want to install msgen as a system-wide binary and modify system-wide Python packages, use the --user flag with pip.

When you use the package-based installation or setup.py, all required packages are installed.
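If several Python versions are installed, it can help to invoke pip through the interpreter you intend to use, so that msgen lands in that environment. The sketch below builds such a command with the standard `python -m pip` form; the helper name `build_pip_command` is hypothetical, and the optional `--user` flag is the one described above.

```python
import subprocess
import sys


def build_pip_command(user=False):
    """Build a pip install command for msgen bound to the running interpreter."""
    command = [sys.executable, "-m", "pip", "install", "msgen"]
    if user:
        # Install into the per-user site directory instead of system-wide.
        command.append("--user")
    return command


def install_msgen(user=False):
    """Run the install and return pip's exit code (0 means success)."""
    return subprocess.call(build_pip_command(user=user))
```

Calling `install_msgen(user=True)` from a Python 2.7 interpreter would be roughly equivalent to running `pip install msgen --user` with that interpreter's pip.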

Test msgen Python client

To test the Microsoft Genomics client, download the config file from your Genomics account. In the Azure portal, navigate to your Genomics account by selecting All services in the top left, and then searching for and selecting Genomics accounts.

Select the Genomics account you just made, navigate to Access Keys, and download the configuration file.

Test that the Microsoft Genomics Python client is working by running the following command:

msgen list -f "<full path where you saved the config file>"
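The same check can be scripted. This is a minimal sketch that assumes msgen is installed and on your system path; the helper names are hypothetical, and the only msgen arguments used are the `list` command and `-f` flag shown above.

```python
import subprocess


def build_list_command(config_path):
    """Return the argument list for `msgen list -f <config file>`."""
    return ["msgen", "list", "-f", config_path]


def run_list(config_path):
    """Run msgen list and return its exit code."""
    return subprocess.call(build_list_command(config_path))
```

Passing the arguments as a list rather than a single string avoids quoting problems when the config file path contains spaces.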

Create a Microsoft Azure Storage account

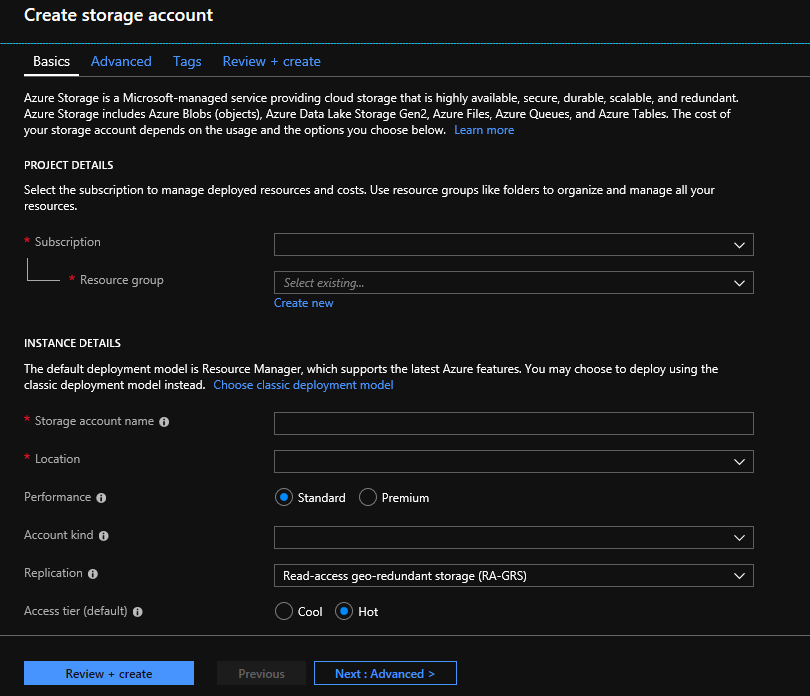

The Microsoft Genomics service expects inputs to be stored as block blobs in an Azure storage account. It also writes output files as block blobs to a user-specified container in an Azure storage account. The inputs and outputs can reside in different storage accounts.

If you already have your data in an Azure storage account, you only need to make sure that it is in the same location as your Genomics account. Otherwise, egress charges are incurred when running the Microsoft Genomics service. If you don't yet have an Azure storage account, you need to create one and upload your data. For more information about what a storage account is and what services it provides, see the Azure storage account documentation. To create an Azure storage account, navigate to Create storage account in the Azure portal.

Configure your storage account with the following information, as shown in the preceding image. Use most of the standard options for a storage account, specifying only that the account is BlobStorage, not general purpose. Blob storage can be 2-5x faster for downloads and uploads. The default deployment model, Azure Resource Manager, is recommended.

| Setting | Suggested value | Field description |
|---|---|---|
| Subscription | Your Azure subscription | For details about your subscription, see Subscriptions. |
| Resource group | MyResourceGroup | You can select the same resource group as your Genomics account. For valid resource group names, see Naming rules. |
| Storage account name | MyStorageAccount | Choose a unique account identifier. For valid names, see Naming rules. |
| Location | West US 2 | Use the same location as your Genomics account to reduce egress charges and latency. |
| Performance | Standard | The default is standard. For more details on standard and premium storage accounts, see Introduction to Microsoft Azure storage. |
| Account kind | BlobStorage | Blob storage can be 2-5x faster than general purpose for downloads and uploads. |
| Replication | Locally redundant storage | Locally redundant storage replicates your data within the datacenter in the region where you created your storage account. For more information, see Azure Storage replication. |
| Access tier | Hot | Hot access indicates that objects in the storage account will be accessed more frequently. |

Then select Review + create to create your storage account. As you did with the creation of your Genomics account, you can select Notifications in the top menu bar to monitor the deployment process.

Upload input data to your storage account

The Microsoft Genomics service expects paired-end reads (FASTQ or BAM files) as input files. You can either upload your own data or explore the publicly available sample data provided for you.

Within your storage account, you need to make one blob container for your input data and a second blob container for your output data. Upload the input data into your input blob container. Various tools can be used to do this, including Microsoft Azure Storage Explorer, BlobPorter, or AzCopy.

Run a workflow through the Microsoft Genomics service using the msgen Python client

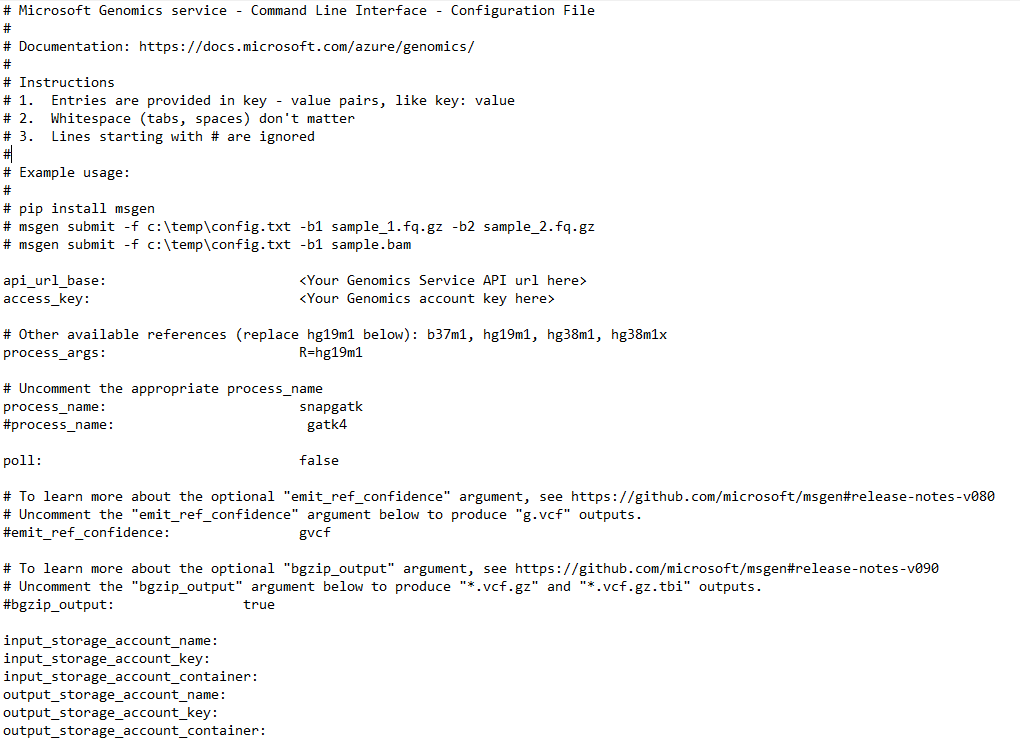

To run a workflow through the Microsoft Genomics service, edit the config.txt file to specify the input and output storage containers for your data. Open the config.txt file that you downloaded from your Genomics account. The sections you need to specify are your subscription key and the six items at the bottom: the storage account name, key, and container name for both the input and the output. You can find this information by navigating in the Azure portal to Access keys for your storage account, or directly from Azure Storage Explorer.
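Before submitting, it can be useful to confirm that none of these fields was left blank. The sketch below assumes that config.txt uses one `key: value` pair per line, as in the `bgzip_output: true` form used by this quickstart. The key names in `REQUIRED_KEYS` are assumptions based on the description above and should be checked against the names in your downloaded file; the function names are hypothetical.

```python
# Assumed key names; verify them against your downloaded config.txt.
REQUIRED_KEYS = [
    "subscription_key",
    "input_storage_account_name",
    "input_storage_account_key",
    "input_storage_account_container",
    "output_storage_account_name",
    "output_storage_account_key",
    "output_storage_account_container",
]


def parse_config(text):
    """Parse `key: value` lines into a dict, skipping lines without a colon."""
    settings = {}
    for line in text.splitlines():
        if ":" not in line:
            continue
        # Split on the first colon only, so values such as URLs stay intact.
        key, value = line.split(":", 1)
        settings[key.strip()] = value.strip()
    return settings


def missing_settings(settings):
    """Return the required keys that are absent or have an empty value."""
    return [key for key in REQUIRED_KEYS if not settings.get(key)]
```

For example, `missing_settings(parse_config(open(path).read()))` returns an empty list when every assumed field has a value.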

If you would like to run GATK4, set the process_name parameter to gatk4.

By default, the Genomics service outputs VCF files. If you would like a gVCF output rather than a VCF output (equivalent to -emitRefConfidence in GATK 3.x and emit-ref-confidence in GATK 4.x), add the emit_ref_confidence parameter to your config.txt and set it to gvcf, as shown in the preceding figure. To change back to VCF output, either remove the emit_ref_confidence parameter from the config.txt file or set it to none.

bgzip is a tool that compresses the vcf or gvcf file, and tabix creates an index for the compressed file. By default, the Genomics service runs bgzip followed by tabix on ".g.vcf" output but not on ".vcf" output. When these tools run, the service produces ".gz" (bgzip output) and ".tbi" (tabix output) files. You can override the default with the bgzip_output argument, a boolean that defaults to false for ".vcf" output and to true for ".g.vcf" output. On the command line, specify -bz or --bgzip-output as true (run bgzip and tabix) or false. In the config.txt file, add bgzip_output: true or bgzip_output: false.
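The interaction between the two settings can be summarized as a small decision rule. The function below is only a model of the defaults described above, not code from the service or from msgen; its name and its use of `None` to mean "not specified" are assumptions made for illustration.

```python
def runs_bgzip_and_tabix(emit_ref_confidence="none", bgzip_output=None):
    """Model whether bgzip and tabix run for a given pair of settings.

    bgzip_output=None means the setting was left out of config.txt and
    was not passed on the command line.
    """
    if bgzip_output is not None:
        # An explicit true/false always overrides the default.
        return bgzip_output
    # Default: compress and index gVCF output only.
    return emit_ref_confidence == "gvcf"
```

Under this model, plain VCF output is compressed and indexed only when bgzip_output is explicitly set to true, and gVCF output is compressed and indexed unless it is explicitly set to false.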

Submit your workflow to the Microsoft Genomics service using the msgen Python client

Use the Microsoft Genomics Python client to submit your workflow with the following command:

msgen submit -f [full path to your config file] -b1 [name of your first paired end read] -b2 [name of your second paired end read]
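If you submit workflows from a script, the command can be assembled as an argument list. This is a minimal sketch that assumes msgen is on your system path and uses only the flags shown in this quickstart (`-f`, `-b1`, `-b2`, and `--bgzip-output`). The helper name and the file names in the usage note are hypothetical.

```python
import subprocess


def build_submit_command(config_path, read1, read2, bgzip_output=None):
    """Return the argument list for an msgen submit call."""
    command = ["msgen", "submit", "-f", config_path, "-b1", read1, "-b2", read2]
    if bgzip_output is not None:
        # Only pass the flag when the caller wants to override the default.
        command += ["--bgzip-output", "true" if bgzip_output else "false"]
    return command


def submit(config_path, read1, read2, bgzip_output=None):
    """Run msgen submit and return its exit code."""
    return subprocess.call(
        build_submit_command(config_path, read1, read2, bgzip_output)
    )
```

For example, `build_submit_command("config.txt", "sample_1.fq.gz", "sample_2.fq.gz")` yields the same arguments as the command above, where the two read names refer to blobs in your input container.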

You can view the status of your workflows using the following command:

msgen list -f c:\temp\config.txt

Once your workflow completes, you can view the output files in your Azure storage account in the output container that you configured.

Next steps

In this article, you uploaded sample input data into Azure storage and submitted a workflow to the Microsoft Genomics service through the msgen Python client. To learn more about other input file types that can be used with the Microsoft Genomics service, see the following pages: paired FASTQ | BAM | Multiple FASTQ or BAM.
