Quickstart: Create Apache Hadoop cluster in Azure HDInsight using ARM template

In this quickstart, you use an Azure Resource Manager template (ARM template) to create an Apache Hadoop cluster in Azure HDInsight. Hadoop was the original open-source framework for distributed processing and analysis of big data sets on clusters. The Hadoop ecosystem includes related software and utilities, including Apache Hive, Apache HBase, Spark, Kafka, and many others.

An Azure Resource Manager template is a JavaScript Object Notation (JSON) file that defines the infrastructure and configuration for your project. The template uses declarative syntax. You describe your intended deployment without writing the sequence of programming commands to create the deployment.

Currently HDInsight comes with seven different cluster types. Each cluster type supports a different set of components. All cluster types support Hive. For a list of supported components in HDInsight, see What's new in the Hadoop cluster versions provided by HDInsight?

If your environment meets the prerequisites and you're familiar with using ARM templates, select the Deploy to Azure button. The template will open in the Azure portal.

Prerequisites

If you don't have an Azure subscription, create a free account before you begin.

Review the template

The template used in this quickstart is from Azure Quickstart Templates.

{
  "$schema": "https://schema.management.azure.com/schemas/2019-04-01/deploymentTemplate.json#",
  "contentVersion": "1.0.0.0",
  "metadata": {
    "_generator": {
      "name": "bicep",
      "version": "0.26.54.24096",
      "templateHash": "1839820966662864707"
    }
  },
  "parameters": {
    "clusterName": {
      "type": "string",
      "metadata": {
        "description": "The name of the HDInsight cluster to create."
      }
    },
    "clusterType": {
      "type": "string",
      "allowedValues": [
        "hadoop",
        "interactivehive",
        "hbase",
        "storm",
        "spark"
      ],
      "metadata": {
        "description": "The type of the HDInsight cluster to create."
      }
    },
    "clusterLoginUserName": {
      "type": "string",
      "metadata": {
        "description": "These credentials can be used to submit jobs to the cluster and to log into cluster dashboards."
      }
    },
    "clusterLoginPassword": {
      "type": "securestring",
      "minLength": 10,
      "metadata": {
        "description": "The password must be at least 10 characters in length and must contain at least one digit, one upper case letter, one lower case letter, and one non-alphanumeric character except (single-quote, double-quote, backslash, right-bracket, full-stop). Also, the password must not contain 3 consecutive characters from the cluster username or SSH username."
      }
    },
    "sshUserName": {
      "type": "string",
      "metadata": {
        "description": "These credentials can be used to remotely access the cluster. The username cannot be admin."
      }
    },
    "sshPassword": {
      "type": "securestring",
      "minLength": 6,
      "maxLength": 72,
      "metadata": {
        "description": "SSH password must be 6-72 characters long and must contain at least one digit, one upper case letter, and one lower case letter. It must not contain any 3 consecutive characters from the cluster login name."
      }
    },
    "location": {
      "type": "string",
      "defaultValue": "[resourceGroup().location]",
      "metadata": {
        "description": "Location for all resources."
      }
    },
    "HeadNodeVirtualMachineSize": {
      "type": "string",
      "defaultValue": "Standard_E4_v3",
      "allowedValues": [
        "Standard_A4_v2",
        "Standard_A8_v2",
        "Standard_E2_v3",
        "Standard_E4_v3",
        "Standard_E8_v3",
        "Standard_E16_v3",
        "Standard_E20_v3",
        "Standard_E32_v3",
        "Standard_E48_v3"
      ],
      "metadata": {
        "description": "This is the headnode Azure Virtual Machine size, and will affect the cost. If you don't know, just leave the default value."
      }
    },
    "WorkerNodeVirtualMachineSize": {
      "type": "string",
      "defaultValue": "Standard_E4_v3",
      "allowedValues": [
        "Standard_A4_v2",
        "Standard_A8_v2",
        "Standard_E2_v3",
        "Standard_E4_v3",
        "Standard_E8_v3",
        "Standard_E16_v3",
        "Standard_E20_v3",
        "Standard_E32_v3",
        "Standard_E48_v3"
      ],
      "metadata": {
        "description": "This is the workernode Azure Virtual Machine size, and will affect the cost. If you don't know, just leave the default value."
      }
    }
  },
  "variables": {
    "defaultStorageAccount": {
      "name": "[uniqueString(resourceGroup().id)]",
      "type": "Standard_LRS"
    }
  },
  "resources": [
    {
      "type": "Microsoft.Storage/storageAccounts",
      "apiVersion": "2021-08-01",
      "name": "[variables('defaultStorageAccount').name]",
      "location": "[parameters('location')]",
      "sku": {
        "name": "[variables('defaultStorageAccount').type]"
      },
      "kind": "StorageV2",
      "properties": {}
    },
    {
      "type": "Microsoft.HDInsight/clusters",
      "apiVersion": "2021-06-01",
      "name": "[parameters('clusterName')]",
      "location": "[parameters('location')]",
      "properties": {
        "clusterVersion": "4.0",
        "osType": "Linux",
        "clusterDefinition": {
          "kind": "[parameters('clusterType')]",
          "configurations": {
            "gateway": {
              "restAuthCredential.isEnabled": true,
              "restAuthCredential.username": "[parameters('clusterLoginUserName')]",
              "restAuthCredential.password": "[parameters('clusterLoginPassword')]"
            }
          }
        },
        "storageProfile": {
          "storageaccounts": [
            {
              "name": "[replace(replace(concat(reference(resourceId('Microsoft.Storage/storageAccounts', variables('defaultStorageAccount').name), '2021-08-01').primaryEndpoints.blob), 'https:', ''), '/', '')]",
              "isDefault": true,
              "container": "[parameters('clusterName')]",
              "key": "[listKeys(resourceId('Microsoft.Storage/storageAccounts', variables('defaultStorageAccount').name), '2021-08-01').keys[0].value]"
            }
          ]
        },
        "computeProfile": {
          "roles": [
            {
              "name": "headnode",
              "targetInstanceCount": 2,
              "hardwareProfile": {
                "vmSize": "[parameters('HeadNodeVirtualMachineSize')]"
              },
              "osProfile": {
                "linuxOperatingSystemProfile": {
                  "username": "[parameters('sshUserName')]",
                  "password": "[parameters('sshPassword')]"
                }
              }
            },
            {
              "name": "workernode",
              "targetInstanceCount": 2,
              "hardwareProfile": {
                "vmSize": "[parameters('WorkerNodeVirtualMachineSize')]"
              },
              "osProfile": {
                "linuxOperatingSystemProfile": {
                  "username": "[parameters('sshUserName')]",
                  "password": "[parameters('sshPassword')]"
                }
              }
            }
          ]
        }
      },
      "dependsOn": [
        "[resourceId('Microsoft.Storage/storageAccounts', variables('defaultStorageAccount').name)]"
      ]
    }
  ],
  "outputs": {
    "storage": {
      "type": "object",
      "value": "[reference(resourceId('Microsoft.Storage/storageAccounts', variables('defaultStorageAccount').name), '2021-08-01')]"
    },
    "cluster": {
      "type": "object",
      "value": "[reference(resourceId('Microsoft.HDInsight/clusters', parameters('clusterName')), '2021-06-01')]"
    }
  }
}

Two Azure resources are defined in the template:

- Microsoft.Storage/storageAccounts: create an Azure Storage Account.

- Microsoft.HDInsight/clusters: create an HDInsight cluster.
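The template declares six parameters without default values: clusterName, clusterType, clusterLoginUserName, clusterLoginPassword, sshUserName, and sshPassword. If you deploy outside the portal, one way to supply them is a parameters file. The following is a minimal sketch, not part of the quickstart template itself. Every value in angle brackets is a hypothetical placeholder that you replace with your own, and location and the two VM size parameters are omitted so that the template defaults apply.

```json
{
  "$schema": "https://schema.management.azure.com/schemas/2019-04-01/deploymentParameters.json#",
  "contentVersion": "1.0.0.0",
  "parameters": {
    "clusterName": { "value": "<unique-cluster-name>" },
    "clusterType": { "value": "hadoop" },
    "clusterLoginUserName": { "value": "<cluster-login-user>" },
    "clusterLoginPassword": { "value": "<cluster-login-password>" },
    "sshUserName": { "value": "<ssh-user>" },
    "sshPassword": { "value": "<ssh-password>" }
  }
}
```

Because both passwords are securestring parameters, avoid committing a file like this with real values. Passing the secrets at deployment time, or referencing them from Azure Key Vault, is generally a safer choice.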

Deploy the template

Select the Deploy to Azure button below to sign in to Azure and open the ARM template.

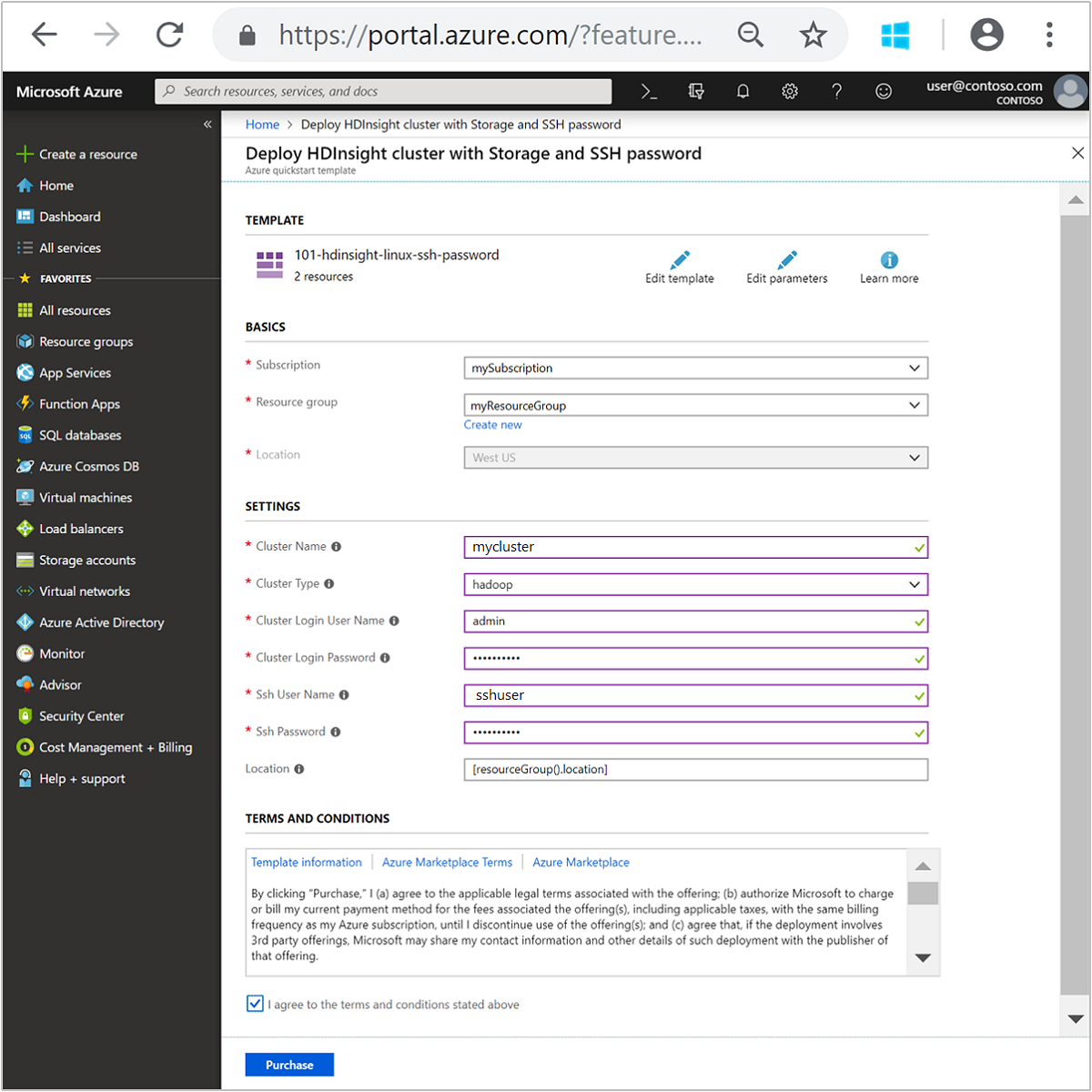

Enter or select the following values:

| Property | Description |
|---|---|
| Subscription | From the drop-down list, select the Azure subscription that's used for the cluster. |
| Resource group | From the drop-down list, select your existing resource group, or select Create new. |
| Location | The value will autopopulate with the location used for the resource group. |
| Cluster Name | Enter a globally unique name. For this template, use only lowercase letters and numbers. |
| Cluster Type | Select hadoop. |
| Cluster Login User Name | Provide the username, default is admin. |
| Cluster Login Password | Provide a password. The password must be at least 10 characters in length and must contain at least one digit, one uppercase letter, one lowercase letter, and one non-alphanumeric character (except characters ' ` "). |
| Ssh User Name | Provide the username, default is sshuser. |
| Ssh Password | Provide the password. |

Some properties have been hardcoded in the template. You can configure these values from the template. For more explanation of these properties, see Create Apache Hadoop clusters in HDInsight.

Note

The values you provide must be unique and should follow the naming guidelines. The template does not perform validation checks. If the values you provide are already in use, or do not follow the guidelines, you get an error after you have submitted the template.
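One of the hardcoded properties is the worker node count: the workernode role sets targetInstanceCount to 2. As an illustration of how such a value could be exposed, the excerpt below adds a parameter and references it from the role. This is a sketch under assumptions: workerNodeCount is a hypothetical parameter name that does not exist in the quickstart template, and the excerpt shows only the keys that change, so it must be merged into the full template rather than deployed on its own.

```json
{
  "parameters": {
    "workerNodeCount": {
      "type": "int",
      "defaultValue": 2,
      "minValue": 1,
      "metadata": {
        "description": "Hypothetical parameter, not in the original template: number of worker nodes."
      }
    }
  },
  "resources": [
    {
      "type": "Microsoft.HDInsight/clusters",
      "properties": {
        "computeProfile": {
          "roles": [
            {
              "name": "workernode",
              "targetInstanceCount": "[parameters('workerNodeCount')]"
            }
          ]
        }
      }
    }
  ]
}
```

Keeping the default at 2 preserves the template's current behavior when the parameter is not supplied.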

Review the TERMS AND CONDITIONS. Then select I agree to the terms and conditions stated above, then Purchase. You'll receive a notification that your deployment is in progress. It takes about 20 minutes to create a cluster.

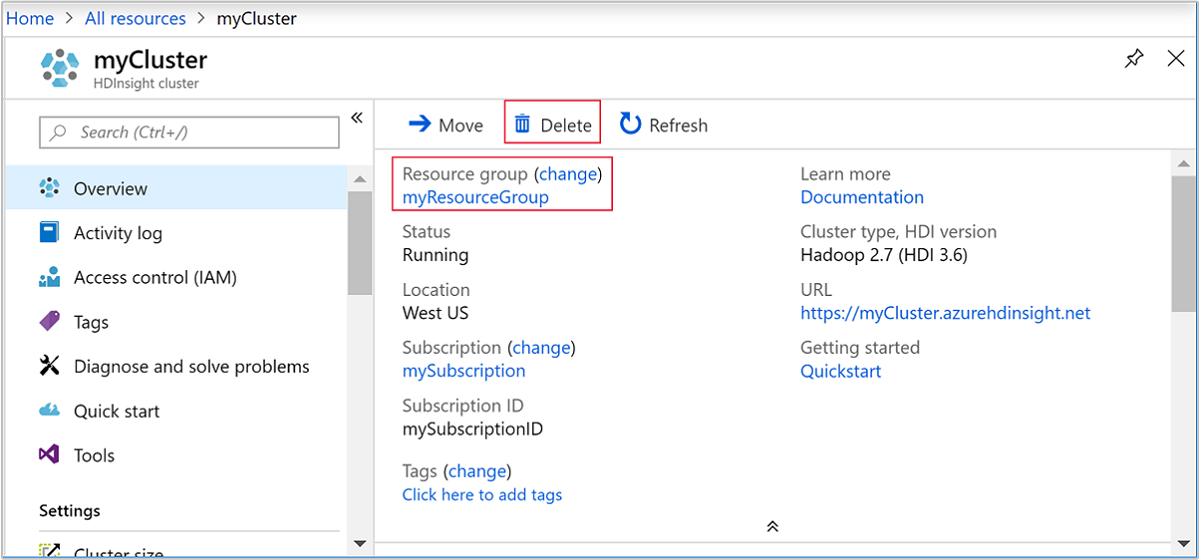

Review deployed resources

Once the cluster is created, you'll receive a Deployment succeeded notification with a Go to resource link. Your Resource group page will list your new HDInsight cluster and the default storage associated with the cluster. Each cluster has an Azure Blob Storage account, an Azure Data Lake Storage Gen1, or an Azure Data Lake Storage Gen2 dependency. It's referred to as the default storage account. The HDInsight cluster and its default storage account must be colocated in the same Azure region. Deleting clusters doesn't delete the storage account.

Note

For other cluster creation methods and understanding the properties used in this quickstart, see Create HDInsight clusters.

Clean up resources

After you complete the quickstart, you may want to delete the cluster. With HDInsight, your data is stored in Azure Storage, so you can safely delete a cluster when it isn't in use. You're also charged for an HDInsight cluster, even when it isn't in use. Since the charges for the cluster are many times more than the charges for storage, it makes economic sense to delete clusters when they aren't in use.

Note

If you are immediately proceeding to the next tutorial to learn how to run ETL operations using Hadoop on HDInsight, you may want to keep the cluster running. This is because in the tutorial you have to create a Hadoop cluster again. However, if you are not going through the next tutorial right away, you must delete the cluster now.

From the Azure portal, navigate to your cluster, and select Delete.

You can also select the resource group name to open the resource group page, and then select Delete resource group. By deleting the resource group, you delete both the HDInsight cluster and the default storage account.

Next steps

In this quickstart, you learned how to create an Apache Hadoop cluster in HDInsight using an ARM template. In the next article, you learn how to perform an extract, transform, and load (ETL) operation using Hadoop on HDInsight.
