Agregacja i zbieranie zdarzeń przy użyciu funkcji EventFlow

Przepływ zdarzeń diagnostycznych firmy Microsoft może kierować zdarzenia z węzła do co najmniej jednego miejsca docelowego monitorowania. Ponieważ jest on dołączany jako pakiet NuGet w projekcie usługi, kod EventFlow i podróż konfiguracji z usługą, eliminując problem z konfiguracją węzła wymieniony wcześniej na temat Diagnostyka Azure. Przepływ zdarzeń jest uruchamiany w ramach procesu usługi i łączy się bezpośrednio ze skonfigurowanymi danymi wyjściowymi. Ze względu na bezpośrednie połączenie usługa EventFlow działa w przypadku wdrożeń usług platformy Azure, kontenerów i lokalnych. Należy zachować ostrożność, jeśli uruchamiasz funkcję EventFlow w scenariuszach o wysokiej gęstości, takich jak w kontenerze, ponieważ każdy potok EventFlow wykonuje połączenie zewnętrzne. Tak więc, jeśli hostujesz kilka procesów, otrzymujesz kilka połączeń wychodzących! Nie jest to tak duże zagrożenie dla aplikacji usługi Service Fabric, ponieważ wszystkie repliki ServiceType przebiegu w tym samym procesie i ogranicza to liczbę połączeń wychodzących. Funkcja EventFlow oferuje również filtrowanie zdarzeń, dzięki czemu wysyłane są tylko zdarzenia zgodne z określonym filtrem.

Konfigurowanie usługi EventFlow

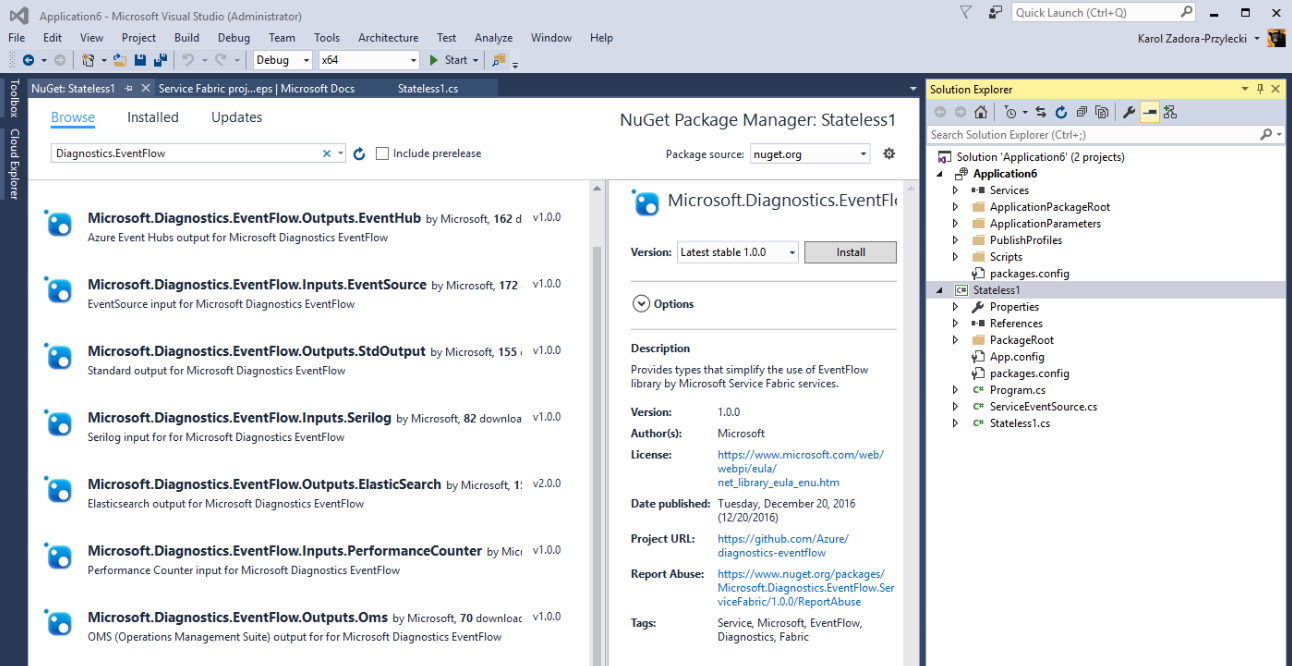

Pliki binarne przepływu zdarzeń są dostępne jako zestaw pakietów NuGet. Aby dodać element EventFlow do projektu usługi Service Fabric, kliknij prawym przyciskiem myszy projekt w Eksplorator rozwiązań i wybierz pozycję "Zarządzaj pakietami NuGet". Przejdź do karty "Przeglądaj" i wyszukaj ciąg "Diagnostics.EventFlow":

Zostanie wyświetlona lista różnych pakietów z etykietami "Inputs" i "Outputs". Usługa EventFlow obsługuje różnych dostawców rejestrowania i analizatorów. Hostowanie usługi EventFlow powinno zawierać odpowiednie pakiety w zależności od źródła i miejsca docelowego dla dzienników aplikacji. Oprócz podstawowego pakietu ServiceFabric należy również skonfigurować co najmniej jedną konfigurację danych wejściowych i wyjściowych. Można na przykład dodać następujące pakiety w celu wysyłania zdarzeń EventSource do usługi Application Insights:

Microsoft.Diagnostics.EventFlow.Inputs.EventSourceprzechwytywanie danych z klasy EventSource usługi oraz standardowych źródeł zdarzeń, takich jak Microsoft-ServiceFabric-Services i Microsoft-ServiceFabric-Actors)Microsoft.Diagnostics.EventFlow.Outputs.ApplicationInsights(wyślemy dzienniki do zasobu usługi aplikacja systemu Azure Insights)Microsoft.Diagnostics.EventFlow.ServiceFabric(umożliwia inicjowanie potoku EventFlow z poziomu konfiguracji usługi Service Fabric i zgłasza wszelkie problemy z wysyłaniem danych diagnostycznych jako raportów kondycji usługi Service Fabric)

Uwaga

Microsoft.Diagnostics.EventFlow.Inputs.EventSourcePakiet wymaga, aby projekt usługi był przeznaczony na .NET Framework 4.6 lub nowszy. Przed zainstalowaniem tego pakietu upewnij się, że ustawiono odpowiednią strukturę docelową we właściwościach projektu.

Po zainstalowaniu wszystkich pakietów następnym krokiem jest skonfigurowanie i włączenie funkcji EventFlow w usłudze.

Konfigurowanie i włączanie zbierania dzienników

Potok EventFlow odpowiedzialny za wysyłanie dzienników jest tworzony na podstawie specyfikacji przechowywanej w pliku konfiguracji. Pakiet Microsoft.Diagnostics.EventFlow.ServiceFabric instaluje początkowy plik konfiguracji EventFlow w PackageRoot\Config folderze rozwiązania o nazwie eventFlowConfig.json. Ten plik konfiguracji należy zmodyfikować w celu przechwycenia danych z domyślnej klasy usługi EventSource , a także wszelkich innych danych wejściowych, które chcesz skonfigurować, i wysłać dane do odpowiedniego miejsca.

Uwaga

Jeśli plik projektu ma format VisualStudio 2017, eventFlowConfig.json plik nie zostanie automatycznie dodany. Aby rozwiązać ten problem, utwórz plik w Config folderze i ustaw akcję kompilacji na Copy if newer.

Oto przykładowe zdarzenieFlowConfig.json oparte na pakietach NuGet wymienionych powyżej:

{

"inputs": [

{

"type": "EventSource",

"sources": [

{ "providerName": "Microsoft-ServiceFabric-Services" },

{ "providerName": "Microsoft-ServiceFabric-Actors" },

// (replace the following value with your service's ServiceEventSource name)

{ "providerName": "your-service-EventSource-name" }

]

}

],

"filters": [

{

"type": "drop",

"include": "Level == Verbose"

}

],

"outputs": [

{

"type": "ApplicationInsights",

// (replace the following value with your AI resource's instrumentation key)

"instrumentationKey": "00000000-0000-0000-0000-000000000000"

}

],

"schemaVersion": "2016-08-11"

}

Nazwa usługi ServiceEventSource jest wartością właściwości EventSourceAttribute Name zastosowanej do klasy ServiceEventSource. Wszystkie są określone w ServiceEventSource.cs pliku, który jest częścią kodu usługi. Na przykład w poniższym fragmencie kodu nazwa elementu ServiceEventSource to MyCompany-Application1-Stateless1:

[EventSource(Name = "MyCompany-Application1-Stateless1")]

internal sealed class ServiceEventSource : EventSource

{

// (rest of ServiceEventSource implementation)

}

Należy pamiętać, że eventFlowConfig.json plik jest częścią pakietu konfiguracji usługi. Zmiany w tym pliku można uwzględnić w pełnych lub tylko konfiguracjach uaktualnień usługi, z zastrzeżeniem kontroli kondycji uaktualnienia usługi Service Fabric i automatycznego wycofywania w przypadku niepowodzenia uaktualniania. Aby uzyskać więcej informacji, zobacz Uaktualnianie aplikacji usługi Service Fabric.

Sekcja filtrów konfiguracji umożliwia dalsze dostosowywanie informacji, które będą przechodzić przez potok EventFlow do danych wyjściowych, umożliwiając upuszczanie lub dołączanie pewnych informacji lub zmianę struktury danych zdarzenia. Aby uzyskać więcej informacji na temat filtrowania, zobacz Filtry przepływu zdarzeń.

Ostatnim krokiem jest utworzenie wystąpienia potoku EventFlow w kodzie uruchamiania usługi znajdującym się w Program.cs pliku:

using System;

using System.Diagnostics;

using System.Threading;

using Microsoft.ServiceFabric;

using Microsoft.ServiceFabric.Services.Runtime;

// **** EventFlow namespace

using Microsoft.Diagnostics.EventFlow.ServiceFabric;

namespace Stateless1

{

internal static class Program

{

/// <summary>

/// This is the entry point of the service host process.

/// </summary>

private static void Main()

{

try

{

// **** Instantiate log collection via EventFlow

using (var diagnosticsPipeline = ServiceFabricDiagnosticPipelineFactory.CreatePipeline("MyApplication-MyService-DiagnosticsPipeline"))

{

ServiceRuntime.RegisterServiceAsync("Stateless1Type",

context => new Stateless1(context)).GetAwaiter().GetResult();

ServiceEventSource.Current.ServiceTypeRegistered(Process.GetCurrentProcess().Id, typeof(Stateless1).Name);

Thread.Sleep(Timeout.Infinite);

}

}

catch (Exception e)

{

ServiceEventSource.Current.ServiceHostInitializationFailed(e.ToString());

throw;

}

}

}

}

Nazwa przekazana jako parametr CreatePipeline metody ServiceFabricDiagnosticsPipelineFactory to nazwa jednostki kondycji reprezentującej potok zbierania dzienników przepływu zdarzeń. Ta nazwa jest używana, jeśli przepływ zdarzeń napotka błąd i zgłosi go za pośrednictwem podsystemu kondycji usługi Service Fabric.

Używanie ustawień usługi Service Fabric i parametrów aplikacji w pliku eventFlowConfig

Usługa EventFlow obsługuje używanie ustawień usługi Service Fabric i parametrów aplikacji do konfigurowania ustawień przepływu zdarzeń. Parametry ustawień usługi Service Fabric można znaleźć przy użyciu tej specjalnej składni dla wartości:

servicefabric:/<section-name>/<setting-name>

<section-name> jest nazwą sekcji konfiguracji usługi Service Fabric i <setting-name> jest ustawieniem konfiguracji, które będzie używane do konfigurowania ustawienia EventFlow. Aby dowiedzieć się więcej o tym, jak to zrobić, przejdź do tematu Obsługa ustawień usługi Service Fabric i parametrów aplikacji.

Weryfikacja

Uruchom usługę i obserwuj okno Debugowanie danych wyjściowych w programie Visual Studio. Po uruchomieniu usługi należy zacząć widzieć dowody, że usługa wysyła rekordy do skonfigurowanych danych wyjściowych. Przejdź do platformy analizy zdarzeń i wizualizacji i upewnij się, że dzienniki zaczęły się pojawiać (może potrwać kilka minut).