你当前正在访问 Microsoft Azure Global Edition 技术文档网站。 如果需要访问由世纪互联运营的 Microsoft Azure 中国技术文档网站,请访问 https://docs.azure.cn。

教程:使用 .NET API 通过 Azure Batch 运行并行工作负荷

使用 Azure Batch 在 Azure 中高效运行大规模并行和高性能计算 (HPC) 批处理作业。 本教程通过一个 C# 示例演示了如何使用 Batch 运行并行工作负荷。 你可以学习常用的 Batch 应用程序工作流,以及如何以编程方式与 Batch 和存储资源交互。

- 将应用程序包添加到 Batch 帐户。

- 通过 Batch 和存储帐户进行身份验证。

- 将输入文件上传到存储。

- 创建运行应用程序所需的计算节点池。

- 创建用于处理输入文件的作业和任务。

- 监视任务执行情况。

- 检索输出文件。

在本教程中,你会使用 ffmpeg 开放源代码工具将 MP4 媒体文件并行转换为 MP3 格式。

如果没有 Azure 订阅,请在开始之前创建一个 Azure 免费帐户。

先决条件

适用于 Linux、macOS 或 Windows 的 Visual Studio 2017 或更高版本 或 .NET Core SDK。

Batch 帐户和关联的 Azure 存储帐户。 要创建这些帐户,请参阅 Azure 门户 或 Azure CLI 的 Batch 快速入门指南。

将适合你的用例的相应 ffmpeg 版本下载到本地计算机。 本教程和相关示例应用使用 Windows 64 位完全构建版本的 ffmpeg 4.3.1。 本教程只需 zip 文件。 不需将文件解压缩或安装在本地。

登录 Azure

登录 Azure 门户。

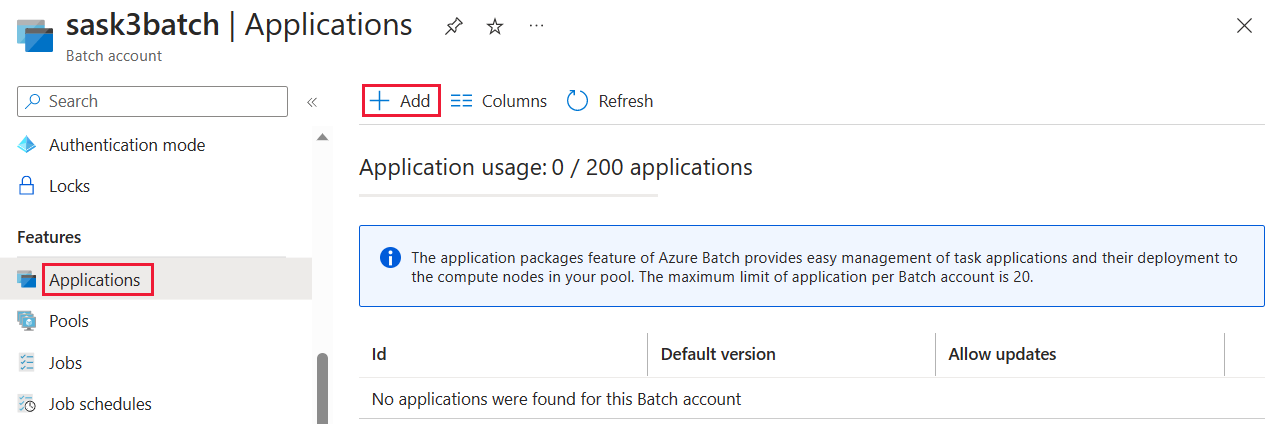

添加应用程序包

使用 Azure 门户,将 ffmpeg 作为应用程序包添加到 Batch 帐户。 应用程序包有助于管理任务应用程序及其到池中计算节点的部署。

在 Azure 门户中,点击“更多服务”>“Batch 帐户”,然后选择 Batch 帐户的名称。

单击“应用程序” > “添加”。

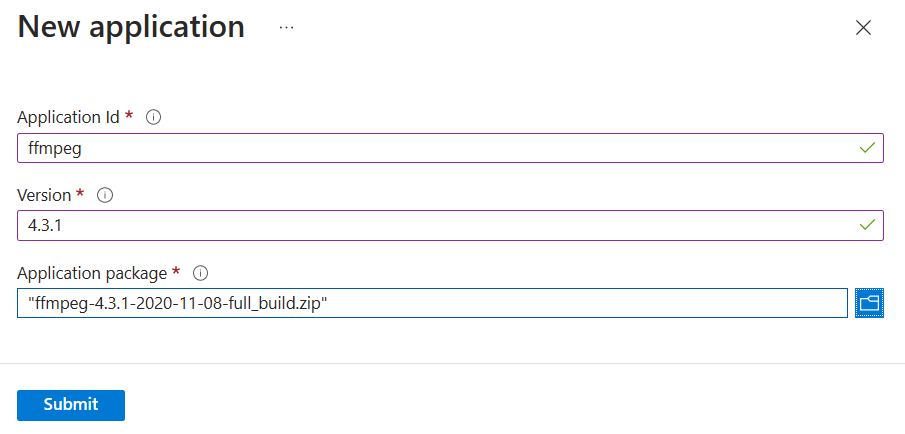

在“应用程序 ID”字段中输入 ffmpeg,在“版本”字段中输入包版本 4.3.1。 选择之前下载的 ffmpeg zip 文件,然后选择“提交”。 ffmpeg 应用程序包添加到 Batch 帐户。

获取帐户凭据

就此示例来说,需为 Batch 帐户和存储帐户提供凭据。 若要获取所需凭据,一种直接的方法是使用 Azure 门户。 (也可使用 Azure API 或命令行工具来获取这些凭据。)

选择“所有服务”>“Batch 帐户”,然后选择 Batch 帐户的名称。

若要查看 Batch 凭据,请选择“密钥”。 将“Batch 帐户”、“URL”和“主访问密钥”的值复制到文本编辑器。

若要查看存储帐户名称和密钥,请选择“存储帐户”。 将“存储帐户名称”和“Key1”的值复制到文本编辑器。

下载并运行示例应用

下载示例应用

从 GitHub 下载或克隆示例应用。 若要使用 Git 客户端克隆示例应用存储库,请使用以下命令:

git clone https://github.com/Azure-Samples/batch-dotnet-ffmpeg-tutorial.git

导航到包含 Visual Studio 解决方案文件 BatchDotNetFfmpegTutorial.sln 的目录。

在 Visual Studio 中打开解决方案文件,使用为帐户获取的值更新 Program.cs 中的凭据字符串。 例如:

// Batch account credentials

private const string BatchAccountName = "yourbatchaccount";

private const string BatchAccountKey = "xxxxxxxxxxxxxxxxE+yXrRvJAqT9BlXwwo1CwF+SwAYOxxxxxxxxxxxxxxxx43pXi/gdiATkvbpLRl3x14pcEQ==";

private const string BatchAccountUrl = "https://yourbatchaccount.yourbatchregion.batch.azure.com";

// Storage account credentials

private const string StorageAccountName = "yourstorageaccount";

private const string StorageAccountKey = "xxxxxxxxxxxxxxxxy4/xxxxxxxxxxxxxxxxfwpbIC5aAWA8wDu+AFXZB827Mt9lybZB1nUcQbQiUrkPtilK5BQ==";

注意

为简化示例,Batch 凭据和存储帐户凭据以明文形式显示。 在实践中,我们建议你限制对凭据的访问,并使用环境变量或配置文件在代码中引用凭据。 有关示例,请参阅 Azure Batch 代码示例存储库。

另外,请确保解决方案中引用的 ffmpeg 应用程序包与你上传到 Batch 帐户的 ffmpeg 包的标识符和版本相匹配。 例如,ffmpeg 和 4.3.1。

const string appPackageId = "ffmpeg";

const string appPackageVersion = "4.3.1";

生成并运行示例项目

在 Visual Studio 中构建并运行应用程序,或在命令行中使用 dotnet build 和 dotnet run 命令。 运行应用程序后,请查看代码,了解应用程序的每个部分的作用。 例如,在 Visual Studio 中:

在解决方案资源管理器中右键单击解决方案,然后选择“生成解决方案”。

出现提示时,请确认还原任何 NuGet 包。 如果需要下载缺少的包,请确保 NuGet 包管理器已安装。

运行解决方案。 运行示例应用程序时,控制台输出如下所示。 在执行期间启动池的计算节点时,会遇到暂停并看到

Monitoring all tasks for 'Completed' state, timeout in 00:30:00...。

Sample start: 11/19/2018 3:20:21 PM

Container [input] created.

Container [output] created.

Uploading file LowPriVMs-1.mp4 to container [input]...

Uploading file LowPriVMs-2.mp4 to container [input]...

Uploading file LowPriVMs-3.mp4 to container [input]...

Uploading file LowPriVMs-4.mp4 to container [input]...

Uploading file LowPriVMs-5.mp4 to container [input]...

Creating pool [WinFFmpegPool]...

Creating job [WinFFmpegJob]...

Adding 5 tasks to job [WinFFmpegJob]...

Monitoring all tasks for 'Completed' state, timeout in 00:30:00...

Success! All tasks completed successfully within the specified timeout period.

Deleting container [input]...

Sample end: 11/19/2018 3:29:36 PM

Elapsed time: 00:09:14.3418742

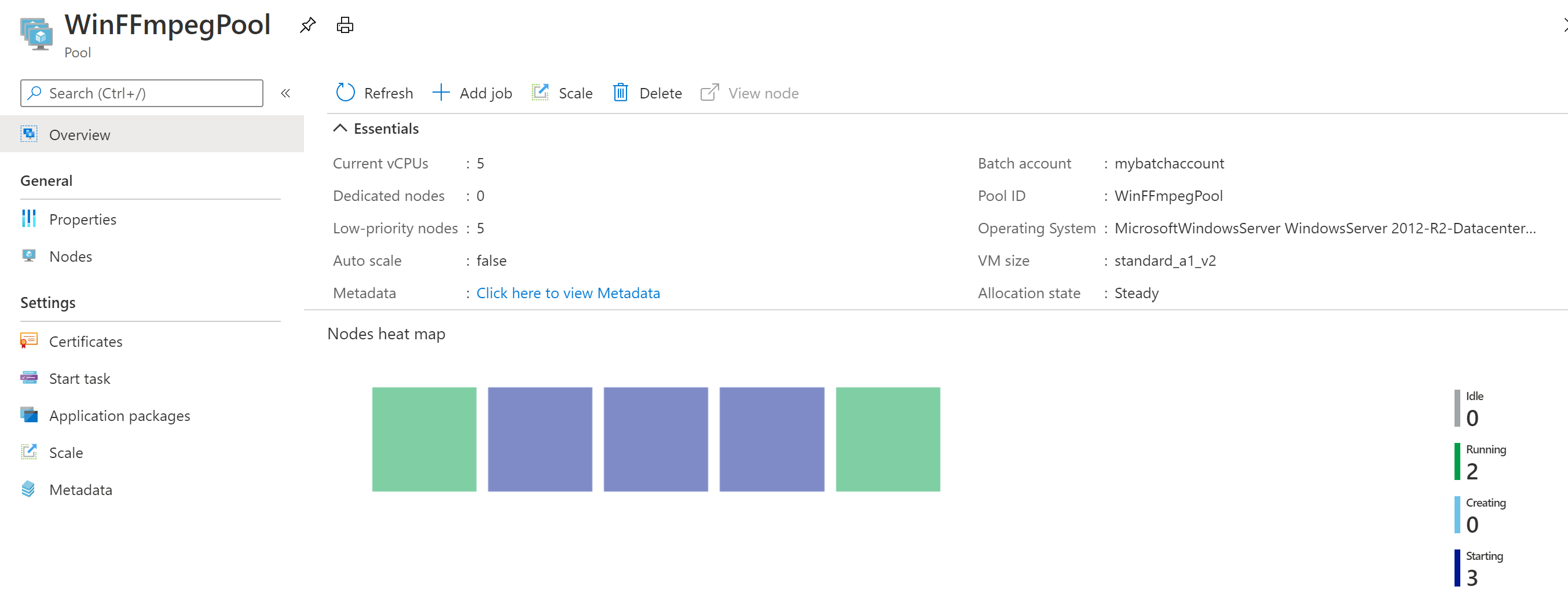

转到 Azure 门户中的 Batch 帐户,监视池、计算节点、作业和任务。 例如,若要查看池中计算节点的热度地图,请单击“池” > “WinFFmpegPool”。

任务正在运行时,热度地图如下所示:

以默认配置运行应用程序时,典型的执行时间大约为 10 分钟。 池创建过程需要最多时间。

检索输出文件

可以使用 Azure 门户下载 ffmpeg 任务生成的输出 MP3 文件。

- 单击“所有服务”>“存储帐户”,然后单击存储帐户的名称。

- 单击“Blob”>“输出”。

- 右键单击一个输出 MP3 文件,然后单击“下载”。 在浏览器中按提示打开或保存该文件。

也可以编程方式从计算节点或存储容器下载这些文件(但在本示例中未演示)。

查看代码

以下部分将示例应用程序细分为多个执行步骤,用于处理 Batch 服务中的工作负荷。 由于我们并未讨论示例中的每个代码行,因此阅读本文的其余内容时,请参考解决方案中的文件 Program.cs。

对 Blob 和 Batch 客户端进行身份验证

为了与关联的存储帐户交互,应用使用用于 .NET 的 Azure 存储客户端库。 它使用 CloudStorageAccount 创建对帐户的引用,使用共享密钥身份验证进行身份验证, 然后创建 CloudBlobClient。

// Construct the Storage account connection string

string storageConnectionString = String.Format("DefaultEndpointsProtocol=https;AccountName={0};AccountKey={1}",

StorageAccountName, StorageAccountKey);

// Retrieve the storage account

CloudStorageAccount storageAccount = CloudStorageAccount.Parse(storageConnectionString);

CloudBlobClient blobClient = storageAccount.CreateCloudBlobClient();

应用创建的 BatchClient 对象用于创建和管理 Batch 服务中的池、作业和任务。 示例中的 Batch 客户端使用共享密钥身份验证。 Batch 还支持通过 Microsoft Entra ID 进行身份验证,以便对单个用户或无人参与应用程序进行身份验证。

BatchSharedKeyCredentials sharedKeyCredentials = new BatchSharedKeyCredentials(BatchAccountUrl, BatchAccountName, BatchAccountKey);

using (BatchClient batchClient = BatchClient.Open(sharedKeyCredentials))

...

上传输入文件

应用将 blobClient 对象传递至 CreateContainerIfNotExistAsync 方法,以便为输入文件(MP4 格式)创建一个存储容器,并为任务输出创建一个容器。

CreateContainerIfNotExistAsync(blobClient, inputContainerName);

CreateContainerIfNotExistAsync(blobClient, outputContainerName);

然后,文件从本地 InputFiles 文件夹上传到输入容器。 存储中的文件定义为 Batch ResourceFile 对象,Batch 随后可以将这些对象下载到计算节点。

上传文件时,涉及到 InputFiles 中的两个方法:

UploadFilesToContainerAsync:返回ResourceFile对象的集合,并在内部调用UploadResourceFileToContainerAsync,从而上传在inputFilePaths参数中传递的每个文件。UploadResourceFileToContainerAsync设置用户帐户 :将每个文件作为 Blob 上传到输入容器。 上传文件后,它会获取该 Blob 的共享访问签名(SAS)并返回代表它的ResourceFile对象。

string inputPath = Path.Combine(Environment.CurrentDirectory, "InputFiles");

List<string> inputFilePaths = new List<string>(Directory.GetFileSystemEntries(inputPath, "*.mp4",

SearchOption.TopDirectoryOnly));

List<ResourceFile> inputFiles = await UploadFilesToContainerAsync(

blobClient,

inputContainerName,

inputFilePaths);

若要详细了解如何使用 .NET 将文件作为 Blob 上传到存储帐户,请参阅使用 .NET 上传、下载和列出 blob。

创建计算节点池

然后,该示例会调用 CreatePoolIfNotExistAsync 以在 Batch 帐户中创建计算节点池。 这个定义的方法使用 BatchClient.PoolOperations.CreatePool 方法设置节点数、VM 大小和池配置。 在这里,VirtualMachineConfiguration 对象指定对 Azure 市场中发布的 Windows Server 映像的 ImageReference。 Batch 支持 Azure 市场中的各种 VM 映像以及自定义 VM 映像。

节点数和 VM 大小使用定义的常数进行设置。 Batch 支持专用节点和现成节点。可以在池中使用这其中的一种,或者两种都使用。 专用节点为池保留。 现成节点在 Azure 有剩余 VM 容量时以优惠价提供。 如果 Azure 没有足够的容量,现成节点会变得不可用。 默认情况下,此示例创建的池只包含 5 个大小为 Standard_A1_v2 的现成节点。

注意

请务必检查节点配额。 有关如何创建配额请求的说明,请参阅 Batch 服务配额和限制。

ffmpeg 应用程序部署到计算节点的方法是添加对池配置的 ApplicationPackageReference。

CommitAsync 方法将池提交到 Batch 服务。

ImageReference imageReference = new ImageReference(

publisher: "MicrosoftWindowsServer",

offer: "WindowsServer",

sku: "2016-Datacenter-smalldisk",

version: "latest");

VirtualMachineConfiguration virtualMachineConfiguration =

new VirtualMachineConfiguration(

imageReference: imageReference,

nodeAgentSkuId: "batch.node.windows amd64");

pool = batchClient.PoolOperations.CreatePool(

poolId: poolId,

targetDedicatedComputeNodes: DedicatedNodeCount,

targetLowPriorityComputeNodes: LowPriorityNodeCount,

virtualMachineSize: PoolVMSize,

virtualMachineConfiguration: virtualMachineConfiguration);

pool.ApplicationPackageReferences = new List<ApplicationPackageReference>

{

new ApplicationPackageReference {

ApplicationId = appPackageId,

Version = appPackageVersion}};

await pool.CommitAsync();

创建作业

Batch 作业可指定在其中运行任务的池以及可选设置,例如工作的优先级和计划。 此示例通过调用 CreateJobAsync 创建一个作业。 这个定义的方法使用 BatchClient.JobOperations.CreateJob 方法在池中创建作业。

CommitAsync 方法将作业提交到 Batch 服务。 作业一开始没有任务。

CloudJob job = batchClient.JobOperations.CreateJob();

job.Id = JobId;

job.PoolInformation = new PoolInformation { PoolId = PoolId };

await job.CommitAsync();

创建任务

此示例通过调用 AddTasksAsync 方法来创建 CloudTask 对象的列表,从而在作业中创建任务。 每个 CloudTask 都运行 ffmpeg,使用 CommandLine 属性处理输入 ResourceFile 对象。 ffmpeg 此前已在创建池时安装在每个节点上。 在这里,命令行运行 ffmpeg 将每个输入 MP4(视频)文件转换为 MP3(音频)文件。

此示例在运行命令行后为 MP3 文件创建 OutputFile 对象。 每个任务的输出文件(在此示例中为一个)都会使用任务的 OutputFiles 属性上传到关联的存储帐户中的一个容器。 我们在前面的代码示例中获取了共享访问签名 URL (outputContainerSasUrl),用于提供对输出容器的写权限。 请注意 outputFile 对象上设置的条件。 只有在任务成功完成后 (OutputFileUploadCondition.TaskSuccess),任务中的输出文件才会上传到容器。 在 GitHub 上查看完整的代码示例,进一步了解实现的详细信息。

然后,示例使用 AddTaskAsync 方法将任务添加到作业,使任务按顺序在计算节点上运行。

将可执行文件的文件路径替换为你下载的版本的名称。 此示例代码使用了示例 ffmpeg-4.3.1-2020-11-08-full_build。

// Create a collection to hold the tasks added to the job.

List<CloudTask> tasks = new List<CloudTask>();

for (int i = 0; i < inputFiles.Count; i++)

{

string taskId = String.Format("Task{0}", i);

// Define task command line to convert each input file.

string appPath = String.Format("%AZ_BATCH_APP_PACKAGE_{0}#{1}%", appPackageId, appPackageVersion);

string inputMediaFile = inputFiles[i].FilePath;

string outputMediaFile = String.Format("{0}{1}",

System.IO.Path.GetFileNameWithoutExtension(inputMediaFile),

".mp3");

string taskCommandLine = String.Format("cmd /c {0}\\ffmpeg-4.3.1-2020-09-21-full_build\\bin\\ffmpeg.exe -i {1} {2}", appPath, inputMediaFile, outputMediaFile);

// Create a cloud task (with the task ID and command line)

CloudTask task = new CloudTask(taskId, taskCommandLine);

task.ResourceFiles = new List<ResourceFile> { inputFiles[i] };

// Task output file

List<OutputFile> outputFileList = new List<OutputFile>();

OutputFileBlobContainerDestination outputContainer = new OutputFileBlobContainerDestination(outputContainerSasUrl);

OutputFile outputFile = new OutputFile(outputMediaFile,

new OutputFileDestination(outputContainer),

new OutputFileUploadOptions(OutputFileUploadCondition.TaskSuccess));

outputFileList.Add(outputFile);

task.OutputFiles = outputFileList;

tasks.Add(task);

}

// Add tasks as a collection

await batchClient.JobOperations.AddTaskAsync(jobId, tasks);

return tasks

监视任务

Batch 将任务添加到作业时,该服务自动对任务排队并进行计划,方便其在关联的池中的计算节点上执行。 Batch 根据指定的设置处理所有任务排队、计划、重试和其他任务管理工作。

监视任务的执行有许多方法。 此示例定义的 MonitorTasks 方法仅在已完成的情况下状态为“任务失败”或“任务成功”时进行报告。 MonitorTasks 代码指定 ODATADetailLevel,只选择有关任务的最少信息,十分高效。 然后,它会创建 TaskStateMonitor,以便提供用于监视任务状态的帮助器实用程序。 在 MonitorTasks 中,示例会在某个时限内等待所有任务达到 TaskState.Completed 状态。 然后,它会终止作业,并对虽已完成但仍遇到故障(例如退出代码非零)的任务进行报告。

TaskStateMonitor taskStateMonitor = batchClient.Utilities.CreateTaskStateMonitor();

try

{

await taskStateMonitor.WhenAll(addedTasks, TaskState.Completed, timeout);

}

catch (TimeoutException)

{

batchClient.JobOperations.TerminateJob(jobId);

Console.WriteLine(incompleteMessage);

return false;

}

batchClient.JobOperations.TerminateJob(jobId);

Console.WriteLine(completeMessage);

...

清理资源

运行任务之后,应用自动删除所创建的输入存储容器,并允许你选择是否删除 Batch 池和作业。 BatchClient 的 JobOperations 和 PoolOperations 类都有相应的删除方法(在确认删除时调用)。 虽然作业和任务本身不收费,但计算节点收费。 因此,建议只在需要的时候分配池。 删除池时会删除节点上的所有任务输出。 但是,输出文件保留在存储帐户中。

若不再需要资源组、Batch 帐户和存储帐户,请将其删除。 为此,请在 Azure 门户中选择 Batch 帐户所在的资源组,然后单击“删除资源组”。

后续步骤

在本教程中,你了解了如何执行以下操作:

- 将应用程序包添加到 Batch 帐户。

- 通过 Batch 和存储帐户进行身份验证。

- 将输入文件上传到存储。

- 创建运行应用程序所需的计算节点池。

- 创建用于处理输入文件的作业和任务。

- 监视任务执行情况。

- 检索输出文件。

更多使用 .NET API 计划和处理 Batch 工作负载的示例,请参阅 GitHub 上的 Batch C# 示例。