Spatial mapping

Spatial mapping provides a detailed representation of real-world surfaces in the environment around the HoloLens, allowing developers to create a convincing mixed reality experience. By merging the real world with the virtual world, an application can make holograms seem real. Applications can also align more naturally with user expectations by providing familiar real-world behaviors and interactions.

Device support

| Feature | HoloLens (1st gen) | HoloLens 2 | Immersive headsets |
| --- | --- | --- | --- |
| Spatial mapping | ✔️ | ✔️ | ❌ |

Why is spatial mapping important?

Spatial mapping makes it possible to place objects on real surfaces. This helps anchor objects in the user's world and takes advantage of real-world depth cues. Occluding your holograms based on other holograms and real-world objects helps convince the user that these holograms are actually in their space. Holograms that float in space or move with the user won't feel as real. When possible, place items with the user's comfort in mind.

Visualize surfaces when placing or moving holograms (use a projected grid). This helps users know where they can best place their holograms, and shows whether the spot where they're trying to place a hologram hasn't been mapped. You can billboard items (turn them to face the user) if they end up at too steep an angle.

Omówienie pojęć

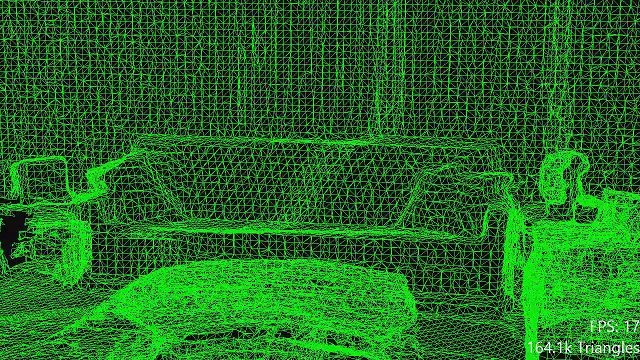

Przykład siatki mapowania przestrzennego obejmującego pokój

Dwa podstawowe typy obiektów używane do mapowania przestrzennego to "Obserwator powierzchni przestrzennej" i "Powierzchnia przestrzenna".

Aplikacja udostępnia obserwatora powierzchni przestrzennej z co najmniej jednym woluminem powiązanym, aby zdefiniować regiony przestrzeni, w których aplikacja chce odbierać dane mapowania przestrzennego. Dla każdego z tych woluminów mapowanie przestrzenne zapewni aplikacji zestaw powierzchni przestrzennych.

Woluminy te mogą być stacjonarne (w stałej lokalizacji opartej na świecie rzeczywistym) lub mogą być dołączone do urządzenia HoloLens (przenoszą się, ale nie obracają się, a urządzenie HoloLens jest przenoszone przez środowisko). Każda powierzchnia przestrzenna opisuje powierzchnie świata rzeczywistego w małej ilości miejsca, reprezentowane jako siatka trójkąta przymocowana do światowego układu współrzędnych przestrzennych.

Gdy urządzenie HoloLens zbiera nowe dane o środowisku i w miarę występowania zmian w środowisku, powierzchnie przestrzenne pojawią się, znikną i zmienią się.

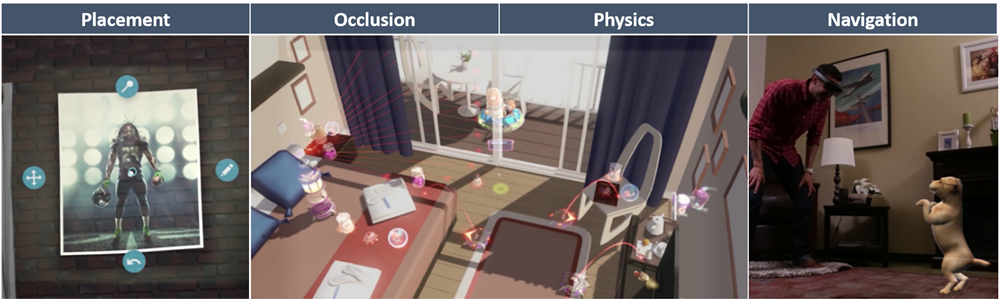

Spatial awareness design concepts demo

If you'd like to see spatial awareness design concepts in action, check out our video demo, Designing Holograms - Spatial Awareness. When you've finished, continue on for a more detailed look at specific topics.

The video was captured from the "Designing Holograms" HoloLens 2 app, which you can download to enjoy the full experience.

Spatial mapping vs. Scene Understanding WorldMesh

On HoloLens 2, it's possible to query a static version of the spatial mapping data by using the Scene Understanding SDK (the EnableWorldMesh setting). Here are the differences between the two ways of accessing spatial mapping data:

- Spatial Mapping API:

  - Limited range: the spatial mapping data is available to applications in a limited-size cached "bubble" around the user.

  - Provides low-latency updates of changed mesh regions through SurfaceChanged events.

  - Variable level of detail, controlled by the Triangles Per Cubic Meter parameter.

- Scene Understanding SDK:

  - Unlimited range: provides all the scanned spatial mapping data within the query radius.

  - Provides a static snapshot of the spatial mapping data. Getting updated spatial mapping data requires running a new query for the whole mesh.

  - Consistent level of detail, controlled by the RequestedMeshLevelOfDetail setting.

What influences spatial mapping quality?

Several factors can affect the frequency and severity of errors in spatial mapping data. However, you should design your application so that the user can achieve their goals even when the spatial mapping data contains errors.

Common usage scenarios

Placement

Spatial mapping gives applications the opportunity to present natural and familiar forms of interaction to the user; what could be more natural than placing your phone down on the desk?

Constraining the placement of holograms (or, more generally, any selection of spatial locations) to lie on surfaces provides a natural mapping from 3D (a point in space) to 2D (a point on a surface). This reduces the amount of information the user needs to provide to the application and makes the user's interactions faster, easier, and more precise. This works because "distance away" isn't something we're used to physically communicating to other people or to computers. When we point with a finger, we specify a direction but not a distance.

An important caveat is that when an application infers distance from direction (for example, by doing a raycast along the user's gaze direction to find the nearest spatial surface), it must yield results that the user can reliably predict. Otherwise, the user loses their sense of control, which quickly becomes frustrating. One method that helps is to do multiple raycasts instead of just one. The aggregate results should be smoother and more predictable, and less susceptible to transient "outlier" results (which can be caused by rays passing through small holes or hitting small bits of geometry that the user isn't aware of). Aggregation or smoothing can also be performed over time; for example, you can limit the maximum speed at which a hologram's distance from the user can change. Simply limiting the minimum and maximum distance can also help, so that the hologram being moved doesn't suddenly fly off into the distance or come crashing back into the user's face.
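The aggregation, clamping, and speed-limiting ideas above can be sketched as follows. This is a minimal illustration, not part of any HoloLens API: the function names, the use of `None` for a ray that hit nothing, the choice of the median as the aggregate, and the default distance limits are all assumptions made for the example.

```python
from statistics import median

def aggregate_distance(hit_distances, min_d=0.5, max_d=5.0):
    """Combine several raycast hit distances (meters) into one clamped value."""
    # None marks a ray that hit no spatial surface (an assumed convention).
    hits = [d for d in hit_distances if d is not None]
    if not hits:
        return None
    # The median discards transient outliers, e.g. a ray passing through a small hole.
    return min(max(median(hits), min_d), max_d)

def step_distance(current, target, max_speed, dt):
    """Move current toward target by at most max_speed * dt meters."""
    max_step = max_speed * dt
    delta = max(-max_step, min(max_step, target - current))
    return current + delta
```

Each frame, an application could pass that frame's hit distances to `aggregate_distance` and feed the result to `step_distance` as the target, so that a single stray ray never makes the hologram jump.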

Applications can also use the shape and direction of surfaces to guide hologram placement. A holographic chair shouldn't penetrate walls and should sit flush with the floor, even if the floor is slightly uneven. This kind of functionality would likely rely on physics collisions rather than raycasts, but similar concerns apply. If the hologram being placed has many small polygons that stick out, like the legs of a chair, it may make sense to expand the physics representation of those polygons into something wider and smoother so that they can slide over spatial surfaces without snagging.

At the extreme, user input can be simplified away entirely, and spatial surfaces can be used for fully automatic hologram placement. For example, the application could place a holographic light switch somewhere on the wall for the user to press. The same caveat about predictability applies doubly here; if the user expects control over hologram placement, but the application doesn't always place holograms where they expect (for example, the light switch appears somewhere the user can't reach), the experience will be frustrating. It can actually be worse to do automatic placement that sometimes requires user correction than to always require the user to do the placement themselves; because successful automatic placement is expected, manual correction feels like a burden!

Note also that an application's ability to use spatial surfaces for placement depends heavily on its scanning experience. If a surface hasn't been scanned, it can't be used for placement. It's up to the application to make this clear to the user, so that they can either help scan new surfaces or select a new location.

Visual feedback to the user is of paramount importance during placement. The user needs to know where the hologram is relative to the nearest surface, which grounding effects can show. They should understand why the movement of their hologram is being constrained (for example, because of collisions with another nearby surface). If they can't place a hologram in the current location, visual feedback should make it clear why not. For example, if the user is trying to place a holographic couch that is stuck halfway into a wall, the portions of the couch that are behind the wall should pulsate in an angry color. Conversely, if the application can't find a spatial surface in a location where the user can see a real-world surface, the application should make this clear. The obvious absence of a grounding effect in that area may achieve this.

Occlusion

One of the primary uses of spatial mapping surfaces is simply to occlude holograms. This simple behavior has a huge impact on the perceived realism of holograms, helping to create a visceral sense that they really inhabit the same physical space as the user.

Occlusion also provides information to the user; when a hologram appears to be occluded by a real-world surface, this gives additional visual feedback about the spatial location of that hologram in the world. Conversely, occlusion can usefully hide information from the user; occluding holograms behind walls can reduce visual clutter in an intuitive way. To hide or reveal a hologram, the user merely has to move their head.

Occlusion can also be used to prime expectations for a natural user interface based on familiar physical interactions; if a hologram is occluded by a surface, it's because that surface is solid, so the user should expect the hologram to collide with that surface rather than pass through it.

Sometimes occlusion of holograms is undesirable. If a user needs to interact with a hologram, they need to see it, even if it's behind a real-world surface. In such cases, it usually makes sense to render the hologram differently when it's occluded (for example, by reducing its brightness). This way, the user can visually locate the hologram but will still know that it's behind something.

Physics

Physics simulation is another way in which spatial mapping can reinforce the presence of holograms in the user's physical space. When my holographic rubber ball rolls realistically off my desk, bounces across the floor, and disappears under the couch, it might be hard for me to believe that it isn't there.

Physics simulation also gives an application the opportunity to use natural and familiar physics-based interactions. Moving a piece of holographic furniture around on the floor will likely be easier for the user if the furniture responds as if it were sliding across the floor with the appropriate inertia and friction.

To generate realistic physical behaviors, you'll likely need to do some mesh processing, such as filling holes, removing floating hallucinations, and smoothing rough surfaces.

You'll also need to consider how your application's scanning experience influences its physics simulation. First, missing surfaces won't collide with anything; what happens when the rubber ball rolls off down the corridor and off the end of the known world? Second, you need to decide whether you'll continue to respond to changes in the environment over time. In some cases, you'll want to respond as quickly as possible; say, if the user is using doors and furniture as movable barricades in defense against a tempest of incoming Roman arrows. In other cases, though, you may want to ignore new updates; driving your holographic sports car around the racetrack on your floor may suddenly be less fun if your dog decides to sit in the middle of the track.

Navigation

Applications can use spatial mapping data to give holographic characters (or agents) the ability to navigate the real world in the same way a real person would. This can help reinforce the presence of holographic characters by restricting them to the same set of natural, familiar behaviors as the user and their friends.

Navigation capabilities can be useful to users as well. Once a navigation map has been built for a given area, it can be shared to provide holographic directions for new users who are unfamiliar with that location. Such a map could be designed to keep pedestrian traffic flowing smoothly, or to avoid accidents in dangerous locations such as construction sites.

The key technical challenges in implementing navigation functionality are reliable detection of walkable surfaces (humans don't walk on tables!) and graceful adaptation to changes in the environment (humans don't walk through closed doors!). The mesh may require some processing before it's usable for path planning and navigation by a virtual character. Smoothing the mesh and removing hallucinations may help keep characters from getting stuck. You may also want to drastically simplify the mesh to speed up your character's path-planning and navigation calculations. These challenges have received a great deal of attention in the development of video game technology, and there's a wealth of research literature available on these topics.

The built-in NavMesh functionality in Unity can't be used with spatial mapping surfaces by default, because the surfaces aren't known until the application starts. It's possible, however, to build a NavMesh at runtime by installing NavMeshComponents. Note also that the spatial mapping system doesn't provide information about surfaces far away from the user's current location; to build a map of a large area, the application must "remember" surfaces itself. You can also increase the observation extents setting in the spatial awareness profile, which enlarges the area over which you can build the NavMesh.

Visualization

Most of the time it's appropriate for spatial surfaces to be invisible, to minimize visual clutter and let the real world speak for itself. However, sometimes it's useful to visualize spatial mapping surfaces directly, even though their real-world counterparts are visible.

For example, when the user is trying to place a hologram on a surface (placing a holographic cabinet on the wall, say), it can be useful to "ground" the hologram by casting a shadow onto the surface. This gives the user a much clearer sense of the exact physical distance between the hologram and the surface. It's also an example of the more general practice of visually "previewing" a change before the user commits to it.

By visualizing surfaces, the application can share its understanding of the environment with the user. For example, a holographic board game could visualize the horizontal surfaces that it has identified as "tables", so the user knows where to go to interact.

Visualizing surfaces can also be a useful way to show the user nearby spaces that are hidden from view. This could give the user a simple way to access their kitchen (and all the holograms it contains) from their living room.

The surface meshes provided by spatial mapping may not be particularly "clean", so it's important to visualize them appropriately. Traditional lighting calculations may highlight errors in surface normals in a visually distracting way, whereas "clean" textures projected onto the surface may help give it a tidier appearance. It's also possible to do mesh processing to improve mesh properties before the surfaces are rendered.

Note

HoloLens 2 implements a new Scene Understanding runtime that provides Mixed Reality developers with a structured, high-level representation of the environment, designed to simplify the implementation of placement, occlusion, physics, and navigation.

Using the surface observer

The starting point for spatial mapping is the surface observer. Program flow is as follows:

- Create a surface observer object

  - Provide one or more spatial volumes to define the regions of interest in which the application wants to receive spatial mapping data. A spatial volume is simply a shape defining a region of space, such as a sphere or a box.

    - Use a spatial volume with a world-locked spatial coordinate system to identify a fixed region of the physical world.

    - Use a spatial volume, updated each frame with a body-locked spatial coordinate system, to identify a region of space that moves (but doesn't rotate) with the user.

  - These spatial volumes may be changed later at any time, as the state of the application or the user changes.

- Use polling or notification to retrieve information about spatial surfaces

  - You may poll the surface observer for spatial surface status at any time. Alternatively, you may register for the surface observer's "surfaces changed" event, which notifies the application when spatial surfaces have changed.

  - For a dynamic spatial volume, such as the view frustum or a body-locked volume, applications need to poll for changes each frame by setting the region of interest and then obtaining the current set of spatial surfaces.

  - For a static volume, such as a world-locked cube covering a single room, applications may register for the "surfaces changed" event to be notified when spatial surfaces inside that volume may have changed.

- Process surface changes

  - Iterate over the provided set of spatial surfaces.

  - Classify spatial surfaces as added, changed, or removed.

  - For each added or changed spatial surface, if appropriate, submit an asynchronous request for an updated mesh that represents the surface's current state at the desired level of detail.

- Process the asynchronous mesh request (more details follow in the sections below).
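To make the difference between the two kinds of spatial volume concrete, here is a small sketch. `Box`, `world_locked_box`, and `body_locked_box` are hypothetical names invented for this illustration, and the 3-meter half-extents are an arbitrary assumption; a real application would use the bounding-volume types of its platform's surface observer API instead.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Box:
    """Axis-aligned box in world coordinates: center and half-extents in meters."""
    center: tuple
    half_extents: tuple

    def contains(self, point):
        return all(abs(point[i] - self.center[i]) <= self.half_extents[i]
                   for i in range(3))

def world_locked_box(room_center, half_extents):
    """A fixed region of the physical world; created once and left in place."""
    return Box(tuple(room_center), tuple(half_extents))

def body_locked_box(head_position, half_extents=(3.0, 3.0, 3.0)):
    """A region that follows the user's position but ignores head rotation.

    Rebuilt every frame from the current head position only, so the box
    translates with the user while its axes stay aligned with the world.
    """
    return Box(tuple(head_position), tuple(half_extents))
```

With the body-locked variant, the application would rebuild the box each frame, hand it to the observer, and then poll for the current set of surfaces, as described in the list above.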

Mesh caching

Spatial surfaces are represented by dense triangle meshes. Storing, rendering, and processing these meshes can consume significant computational and storage resources. Each application should therefore adopt a mesh caching scheme appropriate to its needs, to minimize the resources used for mesh processing and storage. This scheme should determine which meshes to retain, which to discard, and when to update the mesh for each spatial surface.

Many of the considerations already discussed will directly inform how your application should approach mesh caching. Consider how the user moves through the environment, which surfaces are needed, when different surfaces will be observed, and when changes in the environment should be captured.

When interpreting the "surfaces changed" event provided by the surface observer, the basic mesh caching logic is as follows:

- If the application sees a spatial surface ID that it hasn't seen before, it should treat this as a new spatial surface.

- If the application sees a spatial surface with a known ID but a new update time, it should treat this as an updated spatial surface.

- If the application no longer sees a spatial surface with a known ID, it should treat this as a removed spatial surface.
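The three rules above amount to a simple diff between the cache and the latest observation. The sketch below assumes that both are available as dictionaries mapping a surface ID to its last update time; that data shape and the function name are illustrative assumptions, not the format of any particular observer API.

```python
def classify_surfaces(cached, observed):
    """Classify surfaces by comparing cached and observed update times.

    cached, observed: dicts mapping surface ID -> last update time.
    Returns three lists of IDs: (added, updated, removed).
    """
    # Unknown ID: a new spatial surface.
    added = [sid for sid in observed if sid not in cached]
    # Known ID with a newer update time: an updated spatial surface.
    updated = [sid for sid in observed
               if sid in cached and observed[sid] > cached[sid]]
    # Known ID that is no longer reported: a removed spatial surface.
    removed = [sid for sid in cached if sid not in observed]
    return added, updated, removed
```

For example, with `cached = {"a": 1, "b": 1, "c": 1}` and `observed = {"a": 1, "b": 2, "d": 1}`, surface `d` is added, `b` is updated, and `c` is removed.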

It's then up to each application to make the following choices:

- For new spatial surfaces, should a mesh be requested?

  - Generally, a mesh should be requested immediately for new spatial surfaces, because they may provide useful new information to the user.

  - However, new spatial surfaces near and in front of the user should be given priority, and their meshes should be requested first.

  - If a new mesh isn't needed, for example because the application has permanently or temporarily "frozen" its model of the environment, it shouldn't be requested.

- For updated spatial surfaces, should a mesh be requested?

  - Updated spatial surfaces near and in front of the user should be given priority, and their meshes should be requested first.

  - It may also be appropriate to give new surfaces higher priority than updated surfaces, especially during the scanning experience.

  - To limit processing costs, applications may want to throttle the rate at which they process updates to spatial surfaces.

  - It may be possible to infer that changes to a spatial surface are minor, for example if the bounds of the surface are small, in which case the update may not be important enough to process.

  - Updates to spatial surfaces outside the user's current region of interest may be ignored entirely, though in this case it may be more efficient to modify the spatial bounding volumes used by the surface observer.

- For removed spatial surfaces, should the mesh be discarded?

  - Generally, the mesh should be discarded immediately for removed spatial surfaces, so that hologram occlusion remains correct.

  - However, if the application has reason to believe that a spatial surface will reappear shortly (based on the design of the user experience), it may be more efficient to keep the mesh than to discard it and re-create it later.

  - If the application is building a large-scale model of the user's environment, it may not want to discard any meshes at all. It will still need to limit resource usage, though, possibly by spooling meshes to disk as spatial surfaces disappear.

  - Some relatively rare events during spatial surface generation can cause spatial surfaces to be replaced by new spatial surfaces in a similar location but with different IDs. Applications that choose not to discard a removed surface should therefore take care not to end up with multiple highly overlapping spatial surface meshes covering the same location.

- Should the mesh be discarded for any other spatial surfaces?

  - Even while a spatial surface exists, its mesh should be discarded if it's no longer useful to the user's experience. For example, if the application "replaces" the room on the other side of a doorway with an alternate virtual space, the spatial surfaces in that room no longer matter.
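One possible way to order mesh requests according to the priorities above is sketched here. The ordering (new before updated, then in front of the user, then nearest first) is just one reasonable reading of the guidance, and the function name and the tuple layout of `surfaces` are assumptions made for the example.

```python
import math

def order_requests(surfaces, user_position, user_forward):
    """Return surface IDs in the order their meshes would be requested.

    surfaces: list of (surface_id, center, is_new) tuples.
    """
    def priority(item):
        _, center, is_new = item
        offset = [c - u for c, u in zip(center, user_position)]
        distance = math.sqrt(sum(o * o for o in offset))
        # A positive dot product means the surface center is in front of the user.
        in_front = sum(o * f for o, f in zip(offset, user_forward)) > 0
        # False sorts before True, so new, in-front, nearby surfaces come first.
        return (not is_new, not in_front, distance)

    return [item[0] for item in sorted(surfaces, key=priority)]
```

An application that throttles its update processing could take only the first few IDs from this list each frame and leave the rest for later.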

Here is an example mesh caching strategy that uses spatial and temporal hysteresis:

- Consider an application that wants to use a frustum-shaped spatial volume of interest that follows the user's gaze as they look and walk around.

- A spatial surface may disappear temporarily from this volume simply because the user looks away from the surface or steps away from it... only to look back or move closer again a moment later. In this case, discarding and re-creating the mesh for this surface amounts to a lot of redundant processing.

- To reduce the number of changes processed, the application uses two spatial surface observers, one contained within the other. The larger volume is spherical and follows the user "lazily"; it moves only when necessary to ensure that its center is within 2.0 meters of the user.

- New and updated spatial surface meshes are always processed from the smaller, inner surface observer, but meshes are cached until they disappear from the larger, outer surface observer. This lets the application avoid processing many redundant changes caused by local user movement.

- Because a spatial surface may also disappear temporarily due to tracking loss, the application also defers discarding removed spatial surfaces during tracking loss.

- In general, an application should evaluate the tradeoff between reduced update processing and increased memory usage to determine its ideal caching strategy.
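The "lazy" outer volume and the two-observer eviction rule can be sketched as below. Both functions are hypothetical helpers written for this illustration; surface IDs are modeled as plain set members, and the 2.0-meter limit comes from the example above.

```python
import math

def lazy_follow(center, user, max_offset=2.0):
    """Move the sphere center only as far as needed to stay within max_offset of the user."""
    offset = [u - c for u, c in zip(user, center)]
    dist = math.sqrt(sum(o * o for o in offset))
    if dist <= max_offset:
        return tuple(center)  # user is still close enough: do not move
    scale = (dist - max_offset) / dist
    return tuple(c + o * scale for c, o in zip(center, offset))

def update_cache(cache, inner_ids, outer_ids, tracking_lost=False):
    """Update the set of cached surface IDs in place and return it.

    Surfaces enter the cache when the inner observer reports them and
    leave only when the outer observer no longer reports them.
    """
    cache |= set(inner_ids)
    if not tracking_lost:  # defer eviction while tracking is lost
        cache &= set(outer_ids) | set(inner_ids)
    return cache
```

A surface that drops out of the inner volume but is still reported by the outer one stays cached, which is the spatial hysteresis; skipping eviction while `tracking_lost` is true provides the temporal part.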

Rendering

There are three primary ways in which spatial mapping meshes tend to be used for rendering:

- For surface visualization

  - It's often useful to visualize spatial surfaces directly. For example, casting "shadows" from objects onto spatial surfaces can give the user helpful visual feedback while they're placing holograms on surfaces.

  - Keep in mind that spatial meshes differ from the kind of meshes a 3D artist might create. The triangle topology won't be as "clean" as human-created topology, and the mesh will suffer from various errors.

  - To create a pleasing visual aesthetic, you may want to do some mesh processing, for example to fill holes or smooth surface normals. You may also want to use a shader to project artist-designed textures onto the mesh instead of directly visualizing the mesh topology and normals.

- For occluding holograms behind real-world surfaces

  - Spatial surfaces can be rendered in a depth-only pass, which affects only the depth buffer and doesn't affect color render targets.

  - This primes the depth buffer to occlude subsequently rendered holograms behind spatial surfaces. Accurate occlusion of holograms enhances the sense that holograms really exist within the user's physical space.

  - To enable depth-only rendering, update your blend state to set RenderTargetWriteMask to zero for all color render targets.

- For modifying the appearance of holograms occluded by real-world surfaces

  - Normally, rendered geometry is hidden when it's occluded. This is achieved by setting the depth function in your depth-stencil state to "less than or equal", which causes geometry to be visible only where it's closer to the camera than all previously rendered geometry.

  - However, it may be useful to keep certain geometry visible even when it's occluded, and to modify its appearance when occluded as a way of giving visual feedback to the user. For example, this lets the application show the user the location of an object while making it clear that the object is behind a real-world surface.

  - To achieve this, render the geometry a second time with a different shader that creates the desired "occluded" appearance. Before rendering the geometry for the second time, make two changes to your depth-stencil state. First, set the depth function to "greater than or equal" so that the geometry is visible only where it's further from the camera than all previously rendered geometry. Second, set DepthWriteMask to zero so that the depth buffer isn't modified (the depth buffer should continue to represent the depth of the geometry closest to the camera).

Performance is an important concern when rendering spatial mapping meshes. Here are some rendering performance techniques that are specific to spatial mapping meshes:

- Adjust triangle density

  - When requesting spatial surface meshes from your surface observer, request the lowest triangle density that is sufficient for your needs.

  - It may make sense to vary triangle density surface by surface, depending on each surface's distance from the user and its relevance to the user experience.

  - Reducing triangle counts reduces memory usage and vertex processing costs on the GPU, though it doesn't affect pixel processing costs.

- Use frustum culling

  - Frustum culling skips drawing objects that can't be seen because they're outside the current display frustum. This reduces both CPU and GPU processing costs.

  - Because culling is performed on a per-mesh basis and spatial surfaces can be large, breaking each spatial surface mesh into smaller chunks may result in more efficient culling (in that fewer offscreen triangles are rendered). There's a tradeoff, however; the more meshes you have, the more draw calls you must make, which can increase CPU costs. In an extreme case, the frustum culling calculations themselves could have a measurable CPU cost.

- Adjust rendering order

  - Spatial surfaces tend to be large, because they represent the user's entire surrounding environment. Pixel processing costs on the GPU can be high, especially when there's more than one layer of visible geometry (including both spatial surfaces and other holograms). In this case, the layer nearest to the user occludes any layers further away, so any GPU time spent rendering those more distant layers is wasted.

  - To reduce this redundant work on the GPU, it helps to render opaque surfaces in front-to-back order (closer ones first, more distant ones last). By "opaque" we mean surfaces for which DepthWriteMask is set to one in your depth-stencil state. When the nearest surfaces are rendered, they prime the depth buffer so that more distant surfaces are efficiently skipped by the pixel processor on the GPU.
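Front-to-back ordering can be approximated by sorting meshes by their distance from the camera before issuing draw calls. The sketch below assumes each mesh is described by a `(name, center)` tuple, which is a simplification made for this example; sorting by a single center point is only an approximation for large surfaces, which is one more reason to split them into smaller chunks.

```python
def front_to_back(meshes, camera_position):
    """Sort opaque meshes so the nearest ones are drawn first.

    meshes: list of (name, center) tuples; center approximates the mesh position.
    """
    def squared_distance(mesh):
        _, center = mesh
        # Squared distance preserves the ordering and avoids a square root.
        return sum((c - p) ** 2 for c, p in zip(center, camera_position))

    return sorted(meshes, key=squared_distance)
```

The sorted list would then be drawn in order, so that nearer geometry fills the depth buffer before more distant geometry is submitted.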

Mesh processing

An application may want to perform various operations on spatial surface meshes to suit its needs. The index and vertex data provided with each spatial surface mesh uses the same familiar layout as the vertex and index buffers used for rendering triangle meshes in all modern rendering APIs. However, one key fact to be aware of is that spatial mapping triangles have a front-clockwise winding order. Each triangle is represented by three vertex indices in the mesh's index buffer, and these indices identify the triangle's vertices in clockwise order when the triangle is viewed from the front. The front (or outer) side of spatial surface meshes corresponds, as you would expect, to the front (visible) side of real-world surfaces.
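The winding order matters whenever an application computes face normals or passes the mesh to code that expects counter-clockwise triangles. The following sketch assumes a right-handed coordinate system and plain tuples for vertices; the helper names are invented for this example.

```python
def front_normal(v0, v1, v2):
    """Unnormalized front-facing normal of a triangle with clockwise front winding.

    Assumes a right-handed coordinate system. For clockwise winding the edge
    vectors are crossed in the opposite order from the usual counter-clockwise
    formula, so the result points out of the front side.
    """
    e1 = tuple(b - a for a, b in zip(v0, v1))
    e2 = tuple(b - a for a, b in zip(v0, v2))
    return (e2[1] * e1[2] - e2[2] * e1[1],
            e2[2] * e1[0] - e2[0] * e1[2],
            e2[0] * e1[1] - e2[1] * e1[0])

def to_counter_clockwise(indices):
    """Flip the winding of every triangle by swapping its last two indices."""
    flipped = list(indices)
    for i in range(0, len(flipped) - 2, 3):
        flipped[i + 1], flipped[i + 2] = flipped[i + 2], flipped[i + 1]
    return flipped
```

For a triangle in the xy-plane whose vertices run clockwise as seen from the positive z side, `front_normal` points along positive z, toward that viewer.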

Applications should do their own mesh simplification only if the coarsest triangle density provided by the surface observer is still not coarse enough. This work is computationally expensive and is already performed by the runtime to generate the various levels of detail it provides.

Because each surface observer can provide multiple unconnected spatial surfaces, some applications may want to clip these spatial surface meshes against each other and then zipper them together. In general, the clipping step is required because nearby spatial surface meshes often overlap slightly.

Raycasting and Collision

For a physics API (such as Havok) to provide an application with raycasting and collision functionality for spatial surfaces, the application must provide spatial surface meshes to the physics API. Meshes used for physics often have the following properties:

- They contain only a small number of triangles. Physics operations are more computationally intensive than rendering operations.

- They're "water-tight". Surfaces intended to be solid shouldn't have small holes in them; even holes too small to be visible can cause problems.

- They're converted into convex hulls. Convex hulls have few polygons and are free of holes, and they're much more computationally efficient to process than raw triangle meshes.

When doing raycasts against spatial surfaces, bear in mind that these surfaces are often complex, cluttered shapes full of messy little details, just like your desk! This means that a single raycast is often insufficient to give you enough information about the shape of the surface and the shape of the empty space near it. It's usually a good idea to do many raycasts within a small area and to use the aggregate results to derive a more reliable understanding of the surface. For example, using the average of 10 raycasts to guide hologram placement on a surface will yield a far smoother and less "jittery" result than using just a single raycast.

However, bear in mind that each raycast can have a high computational cost. Depending on your usage scenario, you should trade off the computational cost of extra raycasts (done every frame) against the computational cost of mesh processing to smooth and remove holes in spatial surfaces (done when spatial meshes are updated).
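
The averaging idea can be sketched as follows. The `raycast_down` callable is a hypothetical stand-in for whatever raycast your physics API offers: here it takes an (x, z) position and returns the hit height, or `None` when the ray misses. The sample count and radius are arbitrary illustration values.

```python
import math

def average_hit_height(raycast_down, center_x, center_z, radius=0.05, samples=10):
    """Average the hit heights of several downward raycasts placed on a small
    circle around the target point, ignoring rays that miss."""
    heights = []
    for i in range(samples):
        angle = 2.0 * math.pi * i / samples
        x = center_x + radius * math.cos(angle)
        z = center_z + radius * math.sin(angle)
        hit = raycast_down(x, z)  # hypothetical: height in meters, or None
        if hit is not None:
            heights.append(hit)
    if not heights:
        return None  # every ray missed; the caller decides what to do
    return sum(heights) / len(heights)

def cluttered_desk(x, z):
    """Stand-in surface: a desk 0.75 m high with a gap where x > 0.52."""
    return None if x > 0.52 else 0.75

placement_height = average_hit_height(cluttered_desk, 0.5, 0.5)
print(placement_height)
```

Because misses are skipped rather than treated as zero, a few rays falling through a gap do not drag the result down. Every extra sample is another raycast per frame, which is the cost discussed above.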

The environment scanning experience

Each application that uses spatial mapping should consider providing a "scanning experience": the process through which the application guides the user to scan the surfaces that are necessary for the application to function correctly.

Example of scanning

The nature of this scanning experience can vary greatly depending on each application's needs, but two main principles should guide its design.

Firstly, clear communication with the user is the primary concern. The user should always be aware of whether the application's requirements are being met. When they aren't being met, it should be immediately clear to the user why this is so, and they should be quickly led to take the appropriate action.

Secondly, applications should attempt to strike a balance between efficiency and reliability. When it's possible to do so reliably, applications should automatically analyze spatial mapping data to save the user time. When it isn't possible to do so reliably, applications should instead enable the user to quickly provide the application with the additional information it requires.

To help design the right scanning experience, consider which of the following possibilities apply to your application:

No scanning experience

- An application may function perfectly without any guided scanning experience; it will learn about surfaces that are observed in the course of natural user movement.

- For example, an application that lets the user draw on surfaces with holographic spray paint requires knowledge only of the surfaces currently visible to the user.

- The environment may already be scanned if it's one in which the user has already spent a lot of time using the HoloLens.

- Bear in mind, however, that the camera used by spatial mapping can only see 3.1 m in front of the user, so spatial mapping won't know about any more distant surfaces unless the user has observed them from a closer distance in the past.

- So that the user understands which surfaces have been scanned, the application should provide visual feedback to this effect. For example, casting virtual shadows onto scanned surfaces may help the user place holograms on those surfaces.

- For this case, the spatial surface observer's bounding volumes should be updated to a body-locked spatial coordinate system, so that they follow the user.

Find a suitable location

- An application may be designed for use in a location with specific requirements.

- For example, the application may require an empty area around the user so they can safely practice holographic kung-fu.

- Applications should communicate any specific requirements to the user up front, and reinforce them with clear visual feedback.

- In this example, the application should visualize the extent of the required empty area and visually highlight the presence of any undesired objects within this zone.

- For this case, the spatial surface observer's bounding volumes should use a world-locked spatial coordinate system in the chosen location.

Find a suitable configuration of surfaces

- An application may require a specific configuration of surfaces, for example two large, flat, opposing walls to create a holographic hall of mirrors.

- In such cases, the application will need to analyze the surfaces provided by spatial mapping to detect suitable surfaces, and direct the user toward them.

- The user should have a fallback option if the application's surface analysis isn't reliable. For example, if the application incorrectly identifies a doorway as a flat wall, the user needs a simple way to correct this error.

Scan part of the environment

- An application may wish to capture only part of the environment, as directed by the user.

- For example, the application scans part of a room so the user can post a holographic classified ad for furniture they wish to sell.

- In this case, the application should capture spatial mapping data within the regions observed by the user during their scan.

Scan the whole room

- An application may require a scan of all of the surfaces in the current room, including those behind the user.

- For example, a game may put the user in the role of Gulliver, under siege from hundreds of tiny Lilliputians approaching from all directions.

- In such cases, the application will need to determine how many of the surfaces in the current room have already been scanned, and direct the user's gaze to fill in significant gaps.

- The key to this process is providing visual feedback that makes it clear to the user which surfaces haven't yet been scanned. The application could, for example, use distance-based fog to visually highlight regions that aren't covered by spatial mapping surfaces.

Take an initial snapshot of the environment

- An application may wish to ignore all changes in the environment after taking an initial "snapshot".

- This may be appropriate to avoid disrupting user-created data that is tightly coupled to the initial state of the environment.

- In this case, the application should make a copy of the spatial mapping data in its initial state once the scan is complete.

- Applications should continue receiving updates to spatial mapping data if holograms are still to be correctly occluded by the environment.

- Continued updates to spatial mapping data also allow visualizing any changes that have occurred, clarifying to the user the differences between the prior and present states of the environment.

Take user-initiated snapshots of the environment

- An application may wish to respond to environmental changes only when instructed by the user.

- For example, the user could create multiple 3D "statues" of a friend by capturing their poses at different moments.

Allow the user to change the environment

- An application may be designed to respond in real time to any changes made in the user's environment.

- For example, the user drawing a curtain could trigger a "scene change" for a holographic play taking place on the other side.

Guide the user to avoid errors in the spatial mapping data

- An application may wish to provide guidance to the user while they're scanning their environment.

- This can help the user avoid certain kinds of errors in the spatial mapping data, for example by staying away from sunlit windows or mirrors.

One more detail to be aware of is that the "range" of spatial mapping data isn't unlimited. While spatial mapping does build a permanent database of large spaces, it makes that data available to applications only in a "bubble" of limited size around the user. If you start at the beginning of a long corridor and walk far enough away from the start, then eventually the spatial surfaces back at the beginning will disappear. You can mitigate this by caching those surfaces in your application after they've disappeared from the available spatial mapping data.
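
A minimal sketch of such a cache is shown below. It assumes the application receives, on each update, a dictionary mapping a surface ID to that surface's mesh data; the class and method names are hypothetical and not taken from any HoloLens API.

```python
class SurfaceCache:
    """Keeps the last known mesh for every surface ever reported, including
    surfaces that have since dropped out of the available data."""

    def __init__(self):
        self._meshes = {}        # surface id -> last known mesh data
        self._live_ids = set()   # ids present in the most recent update

    def update(self, observed):
        """observed: dict of surface id -> mesh data currently reported."""
        self._meshes.update(observed)  # refresh surfaces that are still live
        self._live_ids = set(observed)

    def all_meshes(self):
        return dict(self._meshes)

    def cached_only_ids(self):
        """Ids that are retained only because of the cache."""
        return set(self._meshes) - self._live_ids

cache = SurfaceCache()
cache.update({"corridor-start": "mesh A", "corridor-middle": "mesh B"})
cache.update({"corridor-middle": "mesh B2", "corridor-end": "mesh C"})
print(sorted(cache.all_meshes()))
print(cache.cached_only_ids())  # {'corridor-start'}
```

Note that this sketch cannot tell a surface that left the bubble apart from one that was removed because the real world changed, so a production cache would need some policy for expiring stale entries.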

Mesh processing

It may help to detect common types of errors in surfaces and to filter, remove, or modify the spatial mapping data as appropriate.

Bear in mind that spatial mapping data is intended to be as faithful as possible to real-world surfaces, so any processing you apply risks shifting your surfaces further from the "truth".

Here are some examples of different types of mesh processing that you may find useful:

Hole filling

- If a small object made of a dark material fails to scan, it will leave a hole in the surrounding surface.

- Holes affect occlusion: holograms can be seen "through" a hole in a supposedly opaque real-world surface.

- Holes affect raycasts: if you're using raycasts to help users interact with surfaces, it may be undesirable for these rays to pass through holes. One mitigation is to use a bundle of multiple raycasts covering an appropriately sized region. This lets you filter out "outlier" results, so that even if one raycast passes through a small hole, the aggregate result will still be valid. However, this approach comes at a computational cost.

- Holes affect physics collisions: an object controlled by physics simulation may drop through a hole in the floor and become lost.

- It's possible to algorithmically fill such holes in the surface mesh. However, you'll need to tune your algorithm so that "real holes" such as windows and doorways don't get filled in. It can be difficult to reliably differentiate "real holes" from "imaginary holes", so you'll need to experiment with different heuristics such as "size" and "boundary shape".
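
One possible starting point for the "size" heuristic is to find boundary loops (chains of edges that belong to exactly one triangle) and measure their perimeter. The sketch below only detects candidate holes; it doesn't fill them. It assumes a reasonably clean mesh in which every boundary vertex lies on exactly one loop, and the perimeter threshold is an arbitrary example value.

```python
import math
from collections import defaultdict

def boundary_loops(triangles):
    """Return loops of vertex indices along edges used by exactly one triangle."""
    edge_count = defaultdict(int)
    for a, b, c in triangles:
        for u, v in ((a, b), (b, c), (c, a)):
            edge_count[tuple(sorted((u, v)))] += 1
    neighbors = defaultdict(list)
    for (u, v), count in edge_count.items():
        if count == 1:  # boundary edge
            neighbors[u].append(v)
            neighbors[v].append(u)
    loops, visited = [], set()
    for start in neighbors:
        if start in visited:
            continue
        loop, prev, current = [start], None, start
        visited.add(start)
        while True:
            candidates = [n for n in neighbors[current]
                          if n != prev and n not in visited]
            if not candidates:
                break
            prev, current = current, candidates[0]
            visited.add(current)
            loop.append(current)
        loops.append(loop)
    return loops

def loop_perimeter(loop, vertices):
    return sum(math.dist(vertices[loop[i]], vertices[loop[(i + 1) % len(loop)]])
               for i in range(len(loop)))

def small_hole_loops(triangles, vertices, max_perimeter):
    """Boundary loops short enough to be treated as fillable holes."""
    return [loop for loop in boundary_loops(triangles)
            if loop_perimeter(loop, vertices) <= max_perimeter]

# Demo: a 3 x 3 grid of 1 m quads on the floor with the center quad missing.
vertices = [(float(col), 0.0, float(row)) for row in range(4) for col in range(4)]
triangles = []
for row in range(3):
    for col in range(3):
        if (row, col) == (1, 1):
            continue  # the hole
        v00 = row * 4 + col
        v01, v10, v11 = v00 + 1, v00 + 4, v00 + 5
        triangles += [(v00, v10, v01), (v01, v10, v11)]

holes = small_hole_loops(triangles, vertices, max_perimeter=5.0)
print(holes)  # one loop around vertices 5, 6, 9 and 10
```

The outer edge of the mesh is also a boundary loop, so the perimeter threshold is what keeps it (and large real openings such as doorways) from being classified as a hole.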

Hallucination removal

- Reflections, bright lights, and moving objects can leave small lingering "hallucinations" floating in mid-air.

- Hallucinations affect occlusion: hallucinations may become visible as dark shapes moving in front of and occluding other holograms.

- Hallucinations affect raycasts: if you're using raycasts to help users interact with surfaces, these rays could hit a hallucination instead of the surface behind it. As with holes, one mitigation is to use many raycasts instead of a single raycast, but again this comes at a computational cost.

- Hallucinations affect physics collisions: an object controlled by physics simulation may become stuck against a hallucination and be unable to move through a seemingly clear area of space.

- It's possible to filter such hallucinations from the surface mesh. However, as with holes, you'll need to tune the algorithm so that real small objects such as lamp stands and door handles don't get removed.
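
One simple filtering heuristic, sketched here under the assumption that hallucinations show up as small disconnected pieces of mesh, is to group triangles into connected components and drop components whose total area falls below a threshold. The threshold value and function names are illustrative only.

```python
import math
from collections import defaultdict

def triangle_area(a, b, c):
    ux, uy, uz = b[0] - a[0], b[1] - a[1], b[2] - a[2]
    vx, vy, vz = c[0] - a[0], c[1] - a[1], c[2] - a[2]
    cx, cy, cz = uy * vz - uz * vy, uz * vx - ux * vz, ux * vy - uy * vx
    return 0.5 * math.sqrt(cx * cx + cy * cy + cz * cz)

def remove_small_components(vertices, triangles, min_area):
    """Keep only triangles that belong to a connected component whose total
    surface area is at least min_area (square meters)."""
    parent = list(range(len(vertices)))

    def find(i):
        while parent[i] != i:
            parent[i] = parent[parent[i]]  # path halving
            i = parent[i]
        return i

    # Union the three vertices of every triangle.
    for a, b, c in triangles:
        parent[find(a)] = find(b)
        parent[find(b)] = find(c)

    area = defaultdict(float)
    for a, b, c in triangles:
        area[find(a)] += triangle_area(vertices[a], vertices[b], vertices[c])
    return [t for t in triangles if area[find(t[0])] >= min_area]

vertices = [
    (0.0, 0.0, 0.0), (2.0, 0.0, 0.0), (0.0, 0.0, 2.0), (2.0, 0.0, 2.0),  # floor
    (1.0, 1.0, 1.0), (1.1, 1.0, 1.0), (1.0, 1.1, 1.0),                   # speck
]
triangles = [(0, 2, 1), (1, 2, 3), (4, 5, 6)]
kept = remove_small_components(vertices, triangles, min_area=0.05)
print(kept)  # [(0, 2, 1), (1, 2, 3)]
```

An area threshold alone would also delete small real objects that happen to be disconnected from the rest of the mesh, which is exactly the tuning problem described above.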

Smoothing

- Spatial mapping may return surfaces that appear rough or "noisy" in comparison to their real-world counterparts.

- Smoothness affects physics collisions: if the floor is rough, a physically simulated golf ball may not roll smoothly across it in a straight line.

- Smoothness affects rendering: if a surface is visualized directly, rough surface normals can affect its appearance and disrupt a "clean" look. It's possible to mitigate this by using appropriate lighting and textures in the shader that is used to render the surface.

- It's possible to smooth out roughness in a surface mesh. However, this may push the surface further away from the corresponding real-world surface. Maintaining a close correspondence is important to produce accurate hologram occlusion, and to enable users to achieve precise and predictable interactions with holographic surfaces.

- If only a cosmetic change is required, it may be sufficient to smooth vertex normals without changing vertex positions.
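
The normals-only option can be sketched as a simple neighbor average: each vertex normal is replaced by the normalized sum of itself and the normals of the vertices it shares a triangle with, while positions are left untouched. This is one of several possible smoothing schemes, and the function names are assumptions for this example.

```python
import math

def normalize(v):
    length = math.sqrt(sum(x * x for x in v))
    if length == 0.0:
        return (0.0, 0.0, 0.0)
    return tuple(x / length for x in v)

def smooth_normals(normals, triangles, iterations=1):
    """Average each vertex normal with its neighbors' normals.
    Vertex positions are not read or modified."""
    neighbors = [set() for _ in normals]
    for a, b, c in triangles:
        neighbors[a].update((b, c))
        neighbors[b].update((a, c))
        neighbors[c].update((a, b))
    current = [tuple(n) for n in normals]
    for _ in range(iterations):
        smoothed = []
        for i, own in enumerate(current):
            total = list(own)
            for j in neighbors[i]:
                for k in range(3):
                    total[k] += current[j][k]
            smoothed.append(normalize(total))
        current = smoothed
    return current

# A flat quad whose fourth vertex has a noisy, tilted normal.
triangles = [(0, 2, 1), (1, 2, 3)]
normals = [(0.0, 1.0, 0.0), (0.0, 1.0, 0.0), (0.0, 1.0, 0.0), (0.6, 0.8, 0.0)]
smoothed = smooth_normals(normals, triangles)
print(smoothed[3])  # tilted less than before: the y component is closer to 1
```

More iterations give a smoother look at the cost of washing out real creases, such as the edge where a wall meets the floor.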

Plane finding

- There are many forms of analysis that an application may wish to perform on the surfaces provided by spatial mapping.

- One simple example is "plane finding": identifying bounded, mostly planar regions of surfaces.

- Planar regions can be used as holographic work surfaces, regions where holographic content can be automatically placed by the application.

- Planar regions can constrain the user interface, to guide users to interact with the surfaces that best suit their needs.

- Planar regions can be used as in the real world, for holographic counterparts to functional objects such as LCD screens, tables, or whiteboards.

- Planar regions can define play areas, forming the basis of video game levels.

- Planar regions can help virtual agents navigate the real world, by identifying the areas of floor that real people are likely to walk on.
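
As a deliberately simplified illustration of plane finding, the sketch below looks only for horizontal regions such as floors and tabletops. It keeps triangles whose front-facing normal is nearly vertical, bins them by height, and reports bins with enough total area. It assumes +Y is up, right-handed coordinates, and the front-clockwise winding described earlier; the tilt tolerance, bin size, and area threshold are arbitrary example values. A general plane finder would also handle walls and sloped surfaces.

```python
import math
from collections import defaultdict

def sub(a, b):
    return (a[0] - b[0], a[1] - b[1], a[2] - b[2])

def cross(u, v):
    return (u[1] * v[2] - u[2] * v[1],
            u[2] * v[0] - u[0] * v[2],
            u[0] * v[1] - u[1] * v[0])

def find_horizontal_planes(vertices, triangles, max_tilt_degrees=10.0,
                           bin_size=0.05, min_area=0.25):
    """Return (height, area) pairs for upward-facing, near-horizontal regions."""
    min_up = math.cos(math.radians(max_tilt_degrees))
    area_by_bin = defaultdict(float)
    for a, b, c in triangles:
        pa, pb, pc = vertices[a], vertices[b], vertices[c]
        n = cross(sub(pc, pa), sub(pb, pa))  # front normal for clockwise winding
        length = math.sqrt(n[0] ** 2 + n[1] ** 2 + n[2] ** 2)
        if length == 0.0 or n[1] / length < min_up:
            continue  # degenerate, tilted, or facing downward
        height = (pa[1] + pb[1] + pc[1]) / 3.0
        area_by_bin[round(height / bin_size)] += 0.5 * length
    return sorted((index * bin_size, area)
                  for index, area in area_by_bin.items() if area >= min_area)

vertices = [
    (0.0, 0.0, 0.0), (2.0, 0.0, 0.0), (0.0, 0.0, 2.0), (2.0, 0.0, 2.0),      # floor
    (0.0, 0.75, 0.0), (1.0, 0.75, 0.0), (0.0, 0.75, 1.0), (1.0, 0.75, 1.0),  # table
    (0.0, 0.0, 2.0), (2.0, 0.0, 2.0), (0.0, 2.0, 2.0),                       # wall
]
triangles = [(0, 1, 2), (1, 3, 2), (4, 5, 6), (5, 7, 6), (8, 9, 10)]
planes = find_horizontal_planes(vertices, triangles)
print(planes)  # floor near height 0.0 (4 m^2) and table near 0.75 (1 m^2)
```

Binning by height merges separate surfaces that share a height, such as two tables in different corners, so a fuller implementation would also split each bin into spatially connected regions.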

Prototyping and debugging

Useful tools

- The HoloLens emulator can be used to develop applications that use spatial mapping without access to a physical HoloLens. It lets you simulate a live session on a HoloLens in a realistic environment, with all of the data your application would normally consume, including HoloLens motion, spatial coordinate systems, and spatial mapping meshes. This can be used to provide reliable, repeatable input, which is useful for debugging problems and evaluating changes to your code.

- To reproduce a scenario, capture spatial mapping data over the network from a live HoloLens, then save it to disk and reuse it in subsequent debugging sessions.

- The Windows Device Portal 3D view provides a way to see all of the spatial surfaces currently available via the spatial mapping system. This provides a basis of comparison for the spatial surfaces inside your application; for example, you can easily tell if any spatial surfaces are missing or are being displayed in the wrong place.

General prototyping guidance

- Because errors in the spatial mapping data may strongly affect your user's experience, we recommend that you test your application in a wide variety of environments.

- Don't get trapped in the habit of always testing in the same location, for example at your desk. Make sure to test on various surfaces of different positions, shapes, sizes, and materials.

- Similarly, while synthetic or recorded data can be useful for debugging, don't become too reliant on the same few test cases. This may delay finding important issues that more varied testing would have caught earlier.

- It's a good idea to test with real (and ideally un-coached) users, because they may not use the HoloLens or your application in exactly the same way that you do. In fact, it may surprise you how divergent people's behavior, knowledge, and assumptions can be!

Troubleshooting

- For the surface meshes to be oriented correctly, each GameObject needs to be active before it's sent to the SurfaceObserver to have its mesh constructed. Otherwise, the meshes will show up in your space but rotated at odd angles.

- The GameObject that runs the script that communicates with the SurfaceObserver needs to be set to the origin. Otherwise, all of the GameObjects that you create and send to the SurfaceObserver to have their meshes constructed will have an offset equal to the offset of the parent GameObject. This can make your meshes show up several meters away, which makes it hard to debug what is going on.