Copy data to or from Azure Data Lake Storage Gen1 using Azure Data Factory or Azure Synapse Analytics

APPLIES TO:  Azure Data Factory

Azure Data Factory  Azure Synapse Analytics

Azure Synapse Analytics

Tip

Try out Data Factory in Microsoft Fabric, an all-in-one analytics solution for enterprises. Microsoft Fabric covers everything from data movement to data science, real-time analytics, business intelligence, and reporting. Learn how to start a new trial for free!

This article outlines how to copy data to and from Azure Data Lake Storage Gen1. To learn more, read the introductory article for Azure Data Factory or Azure Synapse Analytics.

Supported capabilities

This Azure Data Lake Storage Gen1 connector is supported for the following capabilities:

| Supported capabilities | IR |

|---|---|

| Copy activity (source/sink) | ① ② |

| Mapping data flow (source/sink) | ① |

| Lookup activity | ① ② |

| GetMetadata activity | ① ② |

| Delete activity | ① ② |

① Azure integration runtime ② Self-hosted integration runtime

Specifically, with this connector you can:

- Copy files by using one of the following methods of authentication: service principal or managed identities for Azure resources.

- Copy files as is or parse or generate files with the supported file formats and compression codecs.

- Preserve ACLs when copying into Azure Data Lake Storage Gen2.

Important

If you copy data by using the self-hosted integration runtime, configure the corporate firewall to allow outbound traffic to <ADLS account name>.azuredatalakestore.net and login.microsoftonline.com/<tenant>/oauth2/token on port 443. The latter is the Azure Security Token Service that the integration runtime needs to communicate with to get the access token.

Get started

Tip

For a walk-through of how to use the Azure Data Lake Store connector, see Load data into Azure Data Lake Store.

To perform the Copy activity with a pipeline, you can use one of the following tools or SDKs:

- The Copy Data tool

- The Azure portal

- The .NET SDK

- The Python SDK

- Azure PowerShell

- The REST API

- The Azure Resource Manager template

Create a linked service to Azure Data Lake Storage Gen1 using UI

Use the following steps to create a linked service to Azure Data Lake Storage Gen1 in the Azure portal UI.

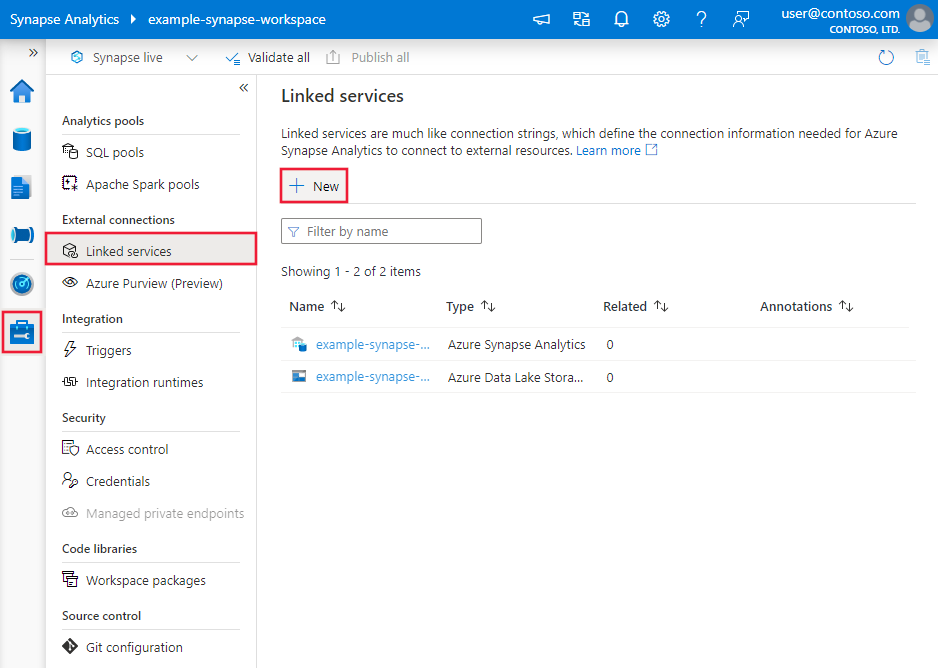

Browse to the Manage tab in your Azure Data Factory or Synapse workspace and select Linked Services, then select New:

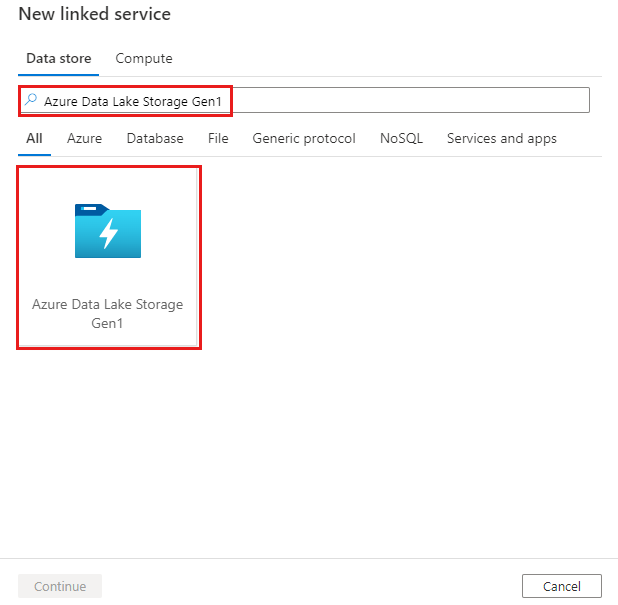

Search for Azure Data Lake Storage Gen1 and select the Azure Data Lake Storage Gen1 connector.

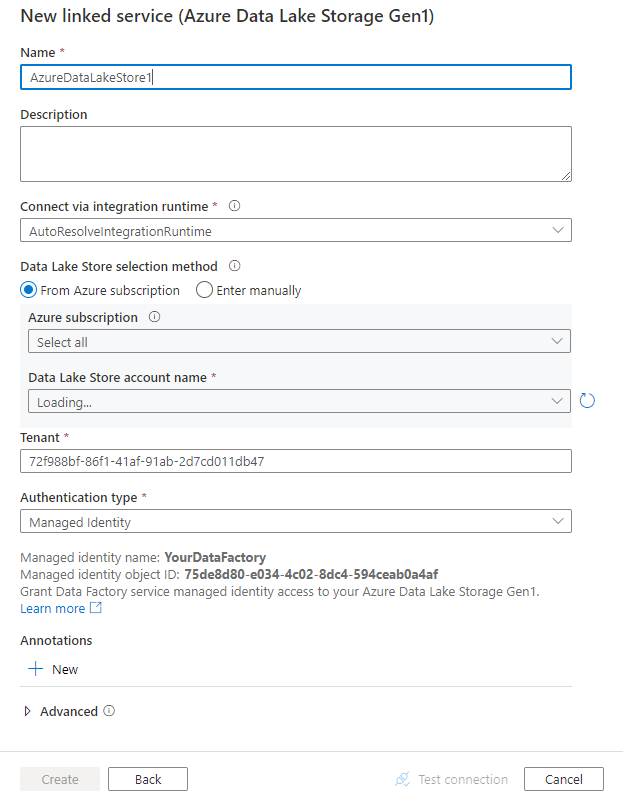

Configure the service details, test the connection, and create the new linked service.

Connector configuration details

The following sections provide information about properties that are used to define entities specific to Azure Data Lake Store Gen1.

Linked service properties

The following properties are supported for the Azure Data Lake Store linked service:

| Property | Description | Required |

|---|---|---|

| type | The type property must be set to AzureDataLakeStore. |

Yes |

| dataLakeStoreUri | Information about the Azure Data Lake Store account. This information takes one of the following formats: https://[accountname].azuredatalakestore.net/webhdfs/v1 or adl://[accountname].azuredatalakestore.net/. |

Yes |

| subscriptionId | The Azure subscription ID to which the Data Lake Store account belongs. | Required for sink |

| resourceGroupName | The Azure resource group name to which the Data Lake Store account belongs. | Required for sink |

| connectVia | The integration runtime to be used to connect to the data store. You can use the Azure integration runtime or a self-hosted integration runtime if your data store is located in a private network. If this property isn't specified, the default Azure integration runtime is used. | No |

Use service principal authentication

To use service principal authentication, follow these steps.

Register an application entity in Microsoft Entra ID and grant it access to Data Lake Store. For detailed steps, see Service-to-service authentication. Make note of the following values, which you use to define the linked service:

- Application ID

- Application key

- Tenant ID

Grant the service principal proper permission. See examples on how permission works in Data Lake Storage Gen1 from Access control in Azure Data Lake Storage Gen1.

- As source: In Data explorer > Access, grant at least Execute permission for ALL upstream folders including the root, along with Read permission for the files to copy. You can choose to add to This folder and all children for recursive, and add as an access permission and a default permission entry. There's no requirement on account-level access control (IAM).

- As sink: In Data explorer > Access, grant at least Execute permission for ALL upstream folders including the root, along with Write permission for the sink folder. You can choose to add to This folder and all children for recursive, and add as an access permission and a default permission entry.

The following properties are supported:

| Property | Description | Required |

|---|---|---|

| servicePrincipalId | Specify the application's client ID. | Yes |

| servicePrincipalKey | Specify the application's key. Mark this field as a SecureString to store it securely, or reference a secret stored in Azure Key Vault. |

Yes |

| tenant | Specify the tenant information, such as domain name or tenant ID, under which your application resides. You can retrieve it by hovering the mouse in the upper-right corner of the Azure portal. | Yes |

| azureCloudType | For service principal authentication, specify the type of Azure cloud environment to which your Microsoft Entra application is registered. Allowed values are AzurePublic, AzureChina, AzureUsGovernment, and AzureGermany. By default, the service's cloud environment is used. |

No |

Example:

{

"name": "AzureDataLakeStoreLinkedService",

"properties": {

"type": "AzureDataLakeStore",

"typeProperties": {

"dataLakeStoreUri": "https://<accountname>.azuredatalakestore.net/webhdfs/v1",

"servicePrincipalId": "<service principal id>",

"servicePrincipalKey": {

"type": "SecureString",

"value": "<service principal key>"

},

"tenant": "<tenant info, e.g. microsoft.onmicrosoft.com>",

"subscriptionId": "<subscription of ADLS>",

"resourceGroupName": "<resource group of ADLS>"

},

"connectVia": {

"referenceName": "<name of Integration Runtime>",

"type": "IntegrationRuntimeReference"

}

}

}

Use system-assigned managed identity authentication

A data factory or Synapse workspace can be associated with a system-assigned managed identity, which represents the service for authentication. You can directly use this system-assigned managed identity for Data Lake Store authentication, similar to using your own service principal. It allows this designated resource to access and copy data to or from Data Lake Store.

To use system-assigned managed identity authentication, follow these steps.

Retrieve the system-assigned managed identity information by copying the value of the "Service Identity Application ID" generated along with your factory or Synapse workspace.

Grant the system-assigned managed identity access to Data Lake Store. See examples on how permission works in Data Lake Storage Gen1 from Access control in Azure Data Lake Storage Gen1.

- As source: In Data explorer > Access, grant at least Execute permission for ALL upstream folders including the root, along with Read permission for the files to copy. You can choose to add to This folder and all children for recursive, and add as an access permission and a default permission entry. There's no requirement on account-level access control (IAM).

- As sink: In Data explorer > Access, grant at least Execute permission for ALL upstream folders including the root, along with Write permission for the sink folder. You can choose to add to This folder and all children for recursive, and add as an access permission and a default permission entry.

You don't need to specify any properties other than the general Data Lake Store information in the linked service.

Example:

{

"name": "AzureDataLakeStoreLinkedService",

"properties": {

"type": "AzureDataLakeStore",

"typeProperties": {

"dataLakeStoreUri": "https://<accountname>.azuredatalakestore.net/webhdfs/v1",

"subscriptionId": "<subscription of ADLS>",

"resourceGroupName": "<resource group of ADLS>"

},

"connectVia": {

"referenceName": "<name of Integration Runtime>",

"type": "IntegrationRuntimeReference"

}

}

}

Use user-assigned managed identity authentication

A data factory can be assigned with one or multiple user-assigned managed identities. You can use this user-assigned managed identity for Blob storage authentication, which allows to access and copy data from or to Data Lake Store. To learn more about managed identities for Azure resources, see Managed identities for Azure resources

To use user-assigned managed identity authentication, follow these steps:

Create one or multiple user-assigned managed identities and grant access to Azure Data Lake. See examples on how permission works in Data Lake Storage Gen1 from Access control in Azure Data Lake Storage Gen1.

- As source: In Data explorer > Access, grant at least Execute permission for ALL upstream folders including the root, along with Read permission for the files to copy. You can choose to add to This folder and all children for recursive, and add as an access permission and a default permission entry. There's no requirement on account-level access control (IAM).

- As sink: In Data explorer > Access, grant at least Execute permission for ALL upstream folders including the root, along with Write permission for the sink folder. You can choose to add to This folder and all children for recursive, and add as an access permission and a default permission entry.

Assign one or multiple user-assigned managed identities to your data factory and create credentials for each user-assigned managed identity.

The following property is supported:

| Property | Description | Required |

|---|---|---|

| credentials | Specify the user-assigned managed identity as the credential object. | Yes |

Example:

{

"name": "AzureDataLakeStoreLinkedService",

"properties": {

"type": "AzureDataLakeStore",

"typeProperties": {

"dataLakeStoreUri": "https://<accountname>.azuredatalakestore.net/webhdfs/v1",

"subscriptionId": "<subscription of ADLS>",

"resourceGroupName": "<resource group of ADLS>",

"credential": {

"referenceName": "credential1",

"type": "CredentialReference"

},

"connectVia": {

"referenceName": "<name of Integration Runtime>",

"type": "IntegrationRuntimeReference"

}

}

}

Dataset properties

For a full list of sections and properties available for defining datasets, see the Datasets article.

Azure Data Factory supports the following file formats. Refer to each article for format-based settings.

- Avro format

- Binary format

- Delimited text format

- Excel format

- JSON format

- ORC format

- Parquet format

- XML format

The following properties are supported for Azure Data Lake Store Gen1 under location settings in the format-based dataset:

| Property | Description | Required |

|---|---|---|

| type | The type property under location in the dataset must be set to AzureDataLakeStoreLocation. |

Yes |

| folderPath | The path to a folder. If you want to use a wildcard to filter folders, skip this setting and specify it in activity source settings. | No |

| fileName | The file name under the given folderPath. If you want to use a wildcard to filter files, skip this setting and specify it in activity source settings. | No |

Example:

{

"name": "DelimitedTextDataset",

"properties": {

"type": "DelimitedText",

"linkedServiceName": {

"referenceName": "<ADLS Gen1 linked service name>",

"type": "LinkedServiceReference"

},

"schema": [ < physical schema, optional, auto retrieved during authoring > ],

"typeProperties": {

"location": {

"type": "AzureDataLakeStoreLocation",

"folderPath": "root/folder/subfolder"

},

"columnDelimiter": ",",

"quoteChar": "\"",

"firstRowAsHeader": true,

"compressionCodec": "gzip"

}

}

}

Copy activity properties

For a full list of sections and properties available for defining activities, see Pipelines. This section provides a list of properties supported by Azure Data Lake Store source and sink.

Azure Data Lake Store as source

Azure Data Factory supports the following file formats. Refer to each article for format-based settings.

- Avro format

- Binary format

- Delimited text format

- Excel format

- JSON format

- ORC format

- Parquet format

- XML format

The following properties are supported for Azure Data Lake Store Gen1 under storeSettings settings in the format-based copy source:

| Property | Description | Required |

|---|---|---|

| type | The type property under storeSettings must be set to AzureDataLakeStoreReadSettings. |

Yes |

| Locate the files to copy: | ||

| OPTION 1: static path |

Copy from the given folder/file path specified in the dataset. If you want to copy all files from a folder, additionally specify wildcardFileName as *. |

|

| OPTION 2: name range - listAfter |

Retrieve the folders/files whose name is after this value alphabetically (exclusive). It utilizes the service-side filter for ADLS Gen1, which provides better performance than a wildcard filter. The service applies this filter to the path defined in dataset, and only one entity level is supported. See more examples in Name range filter examples. |

No |

| OPTION 2: name range - listBefore |

Retrieve the folders/files whose name is before this value alphabetically (inclusive). It utilizes the service-side filter for ADLS Gen1, which provides better performance than a wildcard filter. The service applies this filter to the path defined in dataset, and only one entity level is supported. See more examples in Name range filter examples. |

No |

| OPTION 3: wildcard - wildcardFolderPath |

The folder path with wildcard characters to filter source folders. Allowed wildcards are: * (matches zero or more characters) and ? (matches zero or single character); use ^ to escape if your actual folder name has wildcard or this escape char inside. See more examples in Folder and file filter examples. |

No |

| OPTION 3: wildcard - wildcardFileName |

The file name with wildcard characters under the given folderPath/wildcardFolderPath to filter source files. Allowed wildcards are: * (matches zero or more characters) and ? (matches zero or single character); use ^ to escape if your actual file name has wildcard or this escape char inside. See more examples in Folder and file filter examples. |

Yes |

| OPTION 4: a list of files - fileListPath |

Indicates to copy a given file set. Point to a text file that includes a list of files you want to copy, one file per line, which is the relative path to the path configured in the dataset. When using this option, don't specify file name in dataset. See more examples in File list examples. |

No |

| Additional settings: | ||

| recursive | Indicates whether the data is read recursively from the subfolders or only from the specified folder. When recursive is set to true and the sink is a file-based store, an empty folder or subfolder isn't copied or created at the sink. Allowed values are true (default) and false. This property doesn't apply when you configure fileListPath. |

No |

| deleteFilesAfterCompletion | Indicates whether the binary files will be deleted from source store after successfully moving to the destination store. The file deletion is per file, so when copy activity fails, you'll see some files have already been copied to the destination and deleted from source, while others are still remaining on source store. This property is only valid in binary files copy scenario. The default value: false. |

No |

| modifiedDatetimeStart | Files filter based on the attribute: Last Modified. The files are selected if their last modified time is greater than or equal to modifiedDatetimeStart and less than modifiedDatetimeEnd. The time is applied to UTC time zone in the format of "2018-12-01T05:00:00Z". The properties can be NULL, which means no file attribute filter is applied to the dataset. When modifiedDatetimeStart has datetime value but modifiedDatetimeEnd is NULL, it means the files whose last modified attribute is greater than or equal with the datetime value is selected. When modifiedDatetimeEnd has datetime value but modifiedDatetimeStart is NULL, it means the files whose last modified attribute is less than the datetime value is selected.This property doesn't apply when you configure fileListPath. |

No |

| modifiedDatetimeEnd | Same as above. | No |

| enablePartitionDiscovery | For files that are partitioned, specify whether to parse the partitions from the file path and add them as additional source columns. Allowed values are false (default) and true. |

No |

| partitionRootPath | When partition discovery is enabled, specify the absolute root path in order to read partitioned folders as data columns. If it isn't specified, by default, - When you use file path in dataset or list of files on source, partition root path is the path configured in dataset. - When you use wildcard folder filter, partition root path is the subpath before the first wildcard. For example, assuming you configure the path in dataset as "root/folder/year=2020/month=08/day=27": - If you specify partition root path as "root/folder/year=2020", copy activity generates two more columns month and day with value "08" and "27" respectively, in addition to the columns inside the files.- If partition root path isn't specified, no extra column is generated. |

No |

| maxConcurrentConnections | The upper limit of concurrent connections established to the data store during the activity run. Specify a value only when you want to limit concurrent connections. | No |

Example:

"activities":[

{

"name": "CopyFromADLSGen1",

"type": "Copy",

"inputs": [

{

"referenceName": "<Delimited text input dataset name>",

"type": "DatasetReference"

}

],

"outputs": [

{

"referenceName": "<output dataset name>",

"type": "DatasetReference"

}

],

"typeProperties": {

"source": {

"type": "DelimitedTextSource",

"formatSettings":{

"type": "DelimitedTextReadSettings",

"skipLineCount": 10

},

"storeSettings":{

"type": "AzureDataLakeStoreReadSettings",

"recursive": true,

"wildcardFolderPath": "myfolder*A",

"wildcardFileName": "*.csv"

}

},

"sink": {

"type": "<sink type>"

}

}

}

]

Azure Data Lake Store as sink

Azure Data Factory supports the following file formats. Refer to each article for format-based settings.

The following properties are supported for Azure Data Lake Store Gen1 under storeSettings settings in the format-based copy sink:

| Property | Description | Required |

|---|---|---|

| type | The type property under storeSettings must be set to AzureDataLakeStoreWriteSettings. |

Yes |

| copyBehavior | Defines the copy behavior when the source is files from a file-based data store. Allowed values are: - PreserveHierarchy (default): Preserves the file hierarchy in the target folder. The relative path of the source file to the source folder is identical to the relative path of the target file to the target folder. - FlattenHierarchy: All files from the source folder are in the first level of the target folder. The target files have autogenerated names. - MergeFiles: Merges all files from the source folder to one file. If the file name is specified, the merged file name is the specified name. Otherwise, it's an autogenerated file name. |

No |

| expiryDateTime | Specifies the expiry time of the written files. The time is applied to the UTC time in the format of "2020-03-01T08:00:00Z". By default it's NULL, which means the written files are never expired. | No |

| maxConcurrentConnections | The upper limit of concurrent connections established to the data store during the activity run. Specify a value only when you want to limit concurrent connections. | No |

Example:

"activities":[

{

"name": "CopyToADLSGen1",

"type": "Copy",

"inputs": [

{

"referenceName": "<input dataset name>",

"type": "DatasetReference"

}

],

"outputs": [

{

"referenceName": "<Parquet output dataset name>",

"type": "DatasetReference"

}

],

"typeProperties": {

"source": {

"type": "<source type>"

},

"sink": {

"type": "ParquetSink",

"storeSettings":{

"type": "AzureDataLakeStoreWriteSettings",

"copyBehavior": "PreserveHierarchy"

}

}

}

}

]

Name range filter examples

This section describes the resulting behavior of name range filters.

| Sample source structure | Configuration | Result |

|---|---|---|

| root a file.csv ax file2.csv ax.csv b file3.csv bx.csv c file4.csv cx.csv |

In dataset: - Folder path: rootIn copy activity source: - List after: a- List before: b |

Then the following files are copied: root ax file2.csv ax.csv b file3.csv |

Folder and file filter examples

This section describes the resulting behavior of the folder path and file name with wildcard filters.

| folderPath | fileName | recursive | Source folder structure and filter result (files in bold are retrieved) |

|---|---|---|---|

Folder* |

(Empty, use default) | false | FolderA File1.csv File2.json Subfolder1 File3.csv File4.json File5.csv AnotherFolderB File6.csv |

Folder* |

(Empty, use default) | true | FolderA File1.csv File2.json Subfolder1 File3.csv File4.json File5.csv AnotherFolderB File6.csv |

Folder* |

*.csv |

false | FolderA File1.csv File2.json Subfolder1 File3.csv File4.json File5.csv AnotherFolderB File6.csv |

Folder* |

*.csv |

true | FolderA File1.csv File2.json Subfolder1 File3.csv File4.json File5.csv AnotherFolderB File6.csv |

File list examples

This section describes the resulting behavior of using file list path in copy activity source.

Assuming you have the following source folder structure and want to copy the files in bold:

| Sample source structure | Content in FileListToCopy.txt | Configuration |

|---|---|---|

| root FolderA File1.csv File2.json Subfolder1 File3.csv File4.json File5.csv Metadata FileListToCopy.txt |

File1.csv Subfolder1/File3.csv Subfolder1/File5.csv |

In dataset: - Folder path: root/FolderAIn copy activity source: - File list path: root/Metadata/FileListToCopy.txt The file list path points to a text file in the same data store that includes a list of files you want to copy, one file per line with the relative path to the path configured in the dataset. |

Examples of behavior of the copy operation

This section describes the resulting behavior of the copy operation for different combinations of recursive and copyBehavior values.

| recursive | copyBehavior | Source folder structure | Resulting target |

|---|---|---|---|

| true | preserveHierarchy | Folder1 File1 File2 Subfolder1 File3 File4 File5 |

The target Folder1 is created with the same structure as the source: Folder1 File1 File2 Subfolder1 File3 File4 File5. |

| true | flattenHierarchy | Folder1 File1 File2 Subfolder1 File3 File4 File5 |

The target Folder1 is created with the following structure: Folder1 autogenerated name for File1 autogenerated name for File2 autogenerated name for File3 autogenerated name for File4 autogenerated name for File5 |

| true | mergeFiles | Folder1 File1 File2 Subfolder1 File3 File4 File5 |

The target Folder1 is created with the following structure: Folder1 File1 + File2 + File3 + File4 + File5 contents are merged into one file, with an autogenerated file name. |

| false | preserveHierarchy | Folder1 File1 File2 Subfolder1 File3 File4 File5 |

The target Folder1 is created with the following structure: Folder1 File1 File2 Subfolder1 with File3, File4, and File5 aren't picked up. |

| false | flattenHierarchy | Folder1 File1 File2 Subfolder1 File3 File4 File5 |

The target Folder1 is created with the following structure: Folder1 autogenerated name for File1 autogenerated name for File2 Subfolder1 with File3, File4, and File5 aren't picked up. |

| false | mergeFiles | Folder1 File1 File2 Subfolder1 File3 File4 File5 |

The target Folder1 is created with the following structure: Folder1 File1 + File2 contents are merged into one file with autogenerated file name. autogenerated name for File1 Subfolder1 with File3, File4, and File5 aren't picked up. |

Preserve ACLs to Data Lake Storage Gen2

Tip

To copy data from Azure Data Lake Storage Gen1 into Gen2 in general, see Copy data from Azure Data Lake Storage Gen1 to Gen2 for a walk-through and best practices.

If you want to replicate the access control lists (ACLs) along with data files when you upgrade from Data Lake Storage Gen1 to Data Lake Storage Gen2, see Preserve ACLs from Data Lake Storage Gen1.

Mapping data flow properties

When you're transforming data in mapping data flows, you can read and write files from Azure Data Lake Storage Gen1 in the following formats:

Format-specific settings are located in the documentation for that format. For more information, see Source transformation in mapping data flow and Sink transformation in mapping data flow.

Source transformation

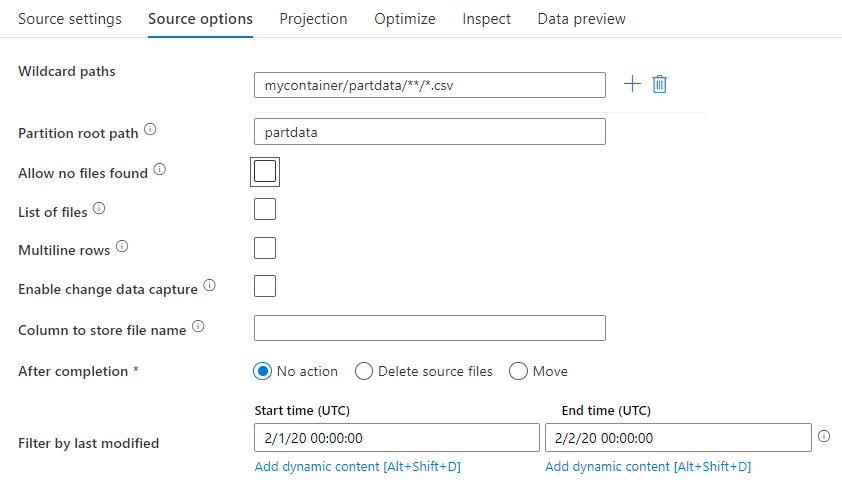

In the source transformation, you can read from a container, folder, or individual file in Azure Data Lake Storage Gen1. The Source options tab lets you manage how the files get read.

Wildcard path: Using a wildcard pattern will instruct the service to loop through each matching folder and file in a single Source transformation. This is an effective way to process multiple files within a single flow. Add multiple wildcard matching patterns with the + sign that appears when hovering over your existing wildcard pattern.

From your source container, choose a series of files that match a pattern. Only container can be specified in the dataset. Your wildcard path must therefore also include your folder path from the root folder.

Wildcard examples:

*Represents any set of characters**Represents recursive directory nesting?Replaces one character[]Matches one of more characters in the brackets/data/sales/**/*.csvGets all csv files under /data/sales/data/sales/20??/**/Gets all files recursively within all matching 20xx folders/data/sales/*/*/*.csvGets csv files two levels under /data/sales/data/sales/2004/12/[XY]1?.csvGets all csv files from December 2004 starting with X or Y, followed by 1, and any single character

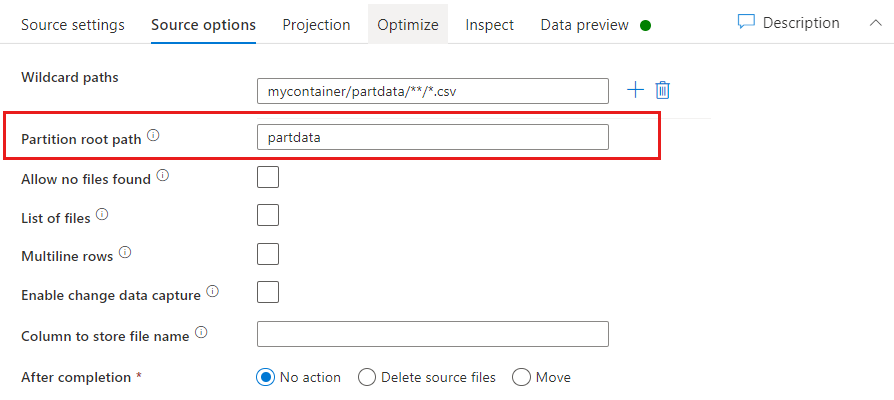

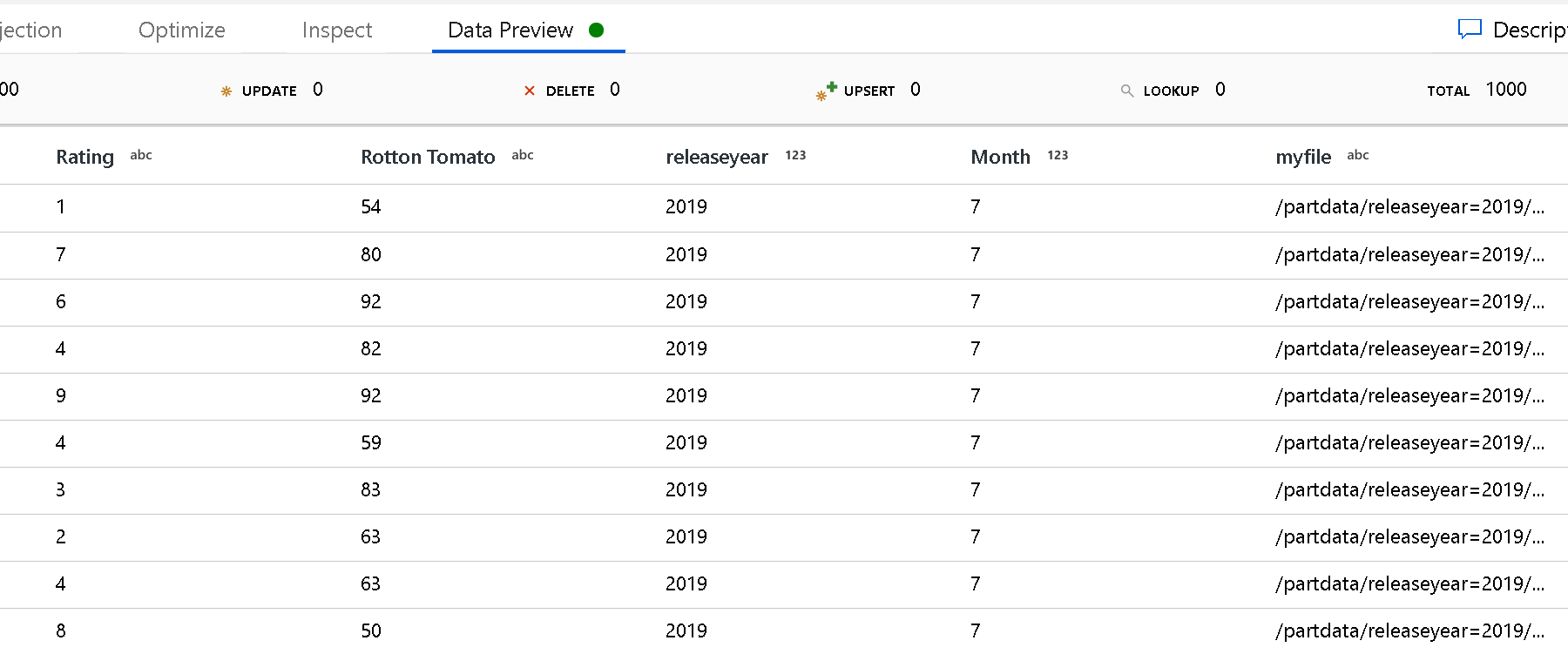

Partition Root Path: If you have partitioned folders in your file source with a key=value format (for example, year=2019), then you can assign the top level of that partition folder tree to a column name in your data flow data stream.

First, set a wildcard to include all paths that are the partitioned folders plus the leaf files that you wish to read.

Use the Partition Root Path setting to define what the top level of the folder structure is. When you view the contents of your data via a data preview, you see that the service adds the resolved partitions found in each of your folder levels.

List of files: This is a file set. Create a text file that includes a list of relative path files to process. Point to this text file.

Column to store file name: Store the name of the source file in a column in your data. Enter a new column name here to store the file name string.

After completion: Choose to do nothing with the source file after the data flow runs, delete the source file, or move the source file. The paths for the move are relative.

To move source files to another location post-processing, first select "Move" for file operation. Then, set the "from" directory. If you're not using any wildcards for your path, then the "from" setting is the same folder as your source folder.

If you have a source path with wildcard, your syntax looks like this below:

/data/sales/20??/**/*.csv

You can specify "from" as

/data/sales

And "to" as

/backup/priorSales

In this case, all files that were sourced under /data/sales are moved to /backup/priorSales.

Note

File operations run only when you start the data flow from a pipeline run (a pipeline debug or execution run) that uses the Execute Data Flow activity in a pipeline. File operations do not run in Data Flow debug mode.

Filter by last modified: You can filter which files you process by specifying a date range of when they were last modified. All date-times are in UTC.

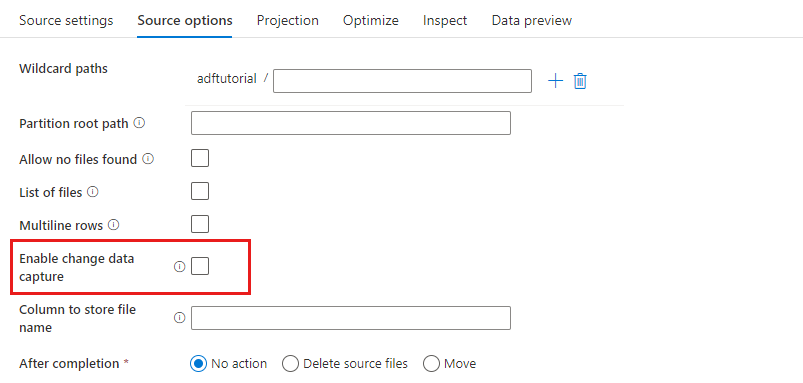

Enable change data capture: If true, you'll get new or changed files only from the last run. Initial load of full snapshot data will always be gotten in the first run, followed by capturing new or changed files only in next runs. For more details, see Change data capture.

Sink properties

In the sink transformation, you can write to either a container or folder in Azure Data Lake Storage Gen1. the Settings tab lets you manage how the files get written.

Clear the folder: Determines whether or not the destination folder gets cleared before the data is written.

File name option: Determines how the destination files are named in the destination folder. The file name options are:

- Default: Allow Spark to name files based on PART defaults.

- Pattern: Enter a pattern that enumerates your output files per partition. For example, loans[n].csv creates loans1.csv, loans2.csv, and so on.

- Per partition: Enter one file name per partition.

- As data in column: Set the output file to the value of a column. The path is relative to the dataset container, not the destination folder. If you have a folder path in your dataset, it is overridden.

- Output to a single file: Combine the partitioned output files into a single named file. The path is relative to the dataset folder. Be aware that the merge operation can possibly fail based upon node size. This option isn't recommended for large datasets.

Quote all: Determines whether to enclose all values in quotes

Lookup activity properties

To learn details about the properties, check Lookup activity.

GetMetadata activity properties

To learn details about the properties, check GetMetadata activity

Delete activity properties

To learn details about the properties, check Delete activity

Legacy models

Note

The following models are still supported as-is for backward compatibility. You are suggested to use the new model mentioned in above sections going forward, and the authoring UI has switched to generating the new model.

Legacy dataset model

| Property | Description | Required |

|---|---|---|

| type | The type property of the dataset must be set to AzureDataLakeStoreFile. | Yes |

| folderPath | Path to the folder in Data Lake Store. If not specified, it points to the root. Wildcard filter is supported. Allowed wildcards are * (matches zero or more characters) and ? (matches zero or single character). Use ^ to escape if your actual folder name has a wildcard or this escape char inside. For example: rootfolder/subfolder/. See more examples in Folder and file filter examples. |

No |

| fileName | Name or wildcard filter for the files under the specified "folderPath". If you don't specify a value for this property, the dataset points to all files in the folder. For filter, the wildcards allowed are * (matches zero or more characters) and ? (matches zero or single character).- Example 1: "fileName": "*.csv"- Example 2: "fileName": "???20180427.txt"Use ^ to escape if your actual file name has a wildcard or this escape char inside.When fileName isn't specified for an output dataset and preserveHierarchy isn't specified in the activity sink, the copy activity automatically generates the file name with the following pattern: "Data.[activity run ID GUID].[GUID if FlattenHierarchy].[format if configured].[compression if configured]", for example, "Data.0a405f8a-93ff-4c6f-b3be-f69616f1df7a.txt.gz". If you copy from a tabular source by using a table name instead of a query, the name pattern is "[table name].[format].[compression if configured]", for example, "MyTable.csv". |

No |

| modifiedDatetimeStart | Files filter based on the attribute Last Modified. The files are selected if their last modified time is greater than or equal to modifiedDatetimeStart and less than modifiedDatetimeEnd. The time is applied to the UTC time zone in the format of "2018-12-01T05:00:00Z". The overall performance of data movement is affected by enabling this setting when you want to do file filter with huge amounts of files. The properties can be NULL, which means no file attribute filter is applied to the dataset. When modifiedDatetimeStart has a datetime value but modifiedDatetimeEnd is NULL, it means the files whose last modified attribute is greater than or equal to the datetime value are selected. When modifiedDatetimeEnd has a datetime value but modifiedDatetimeStart is NULL, it means the files whose last modified attribute is less than the datetime value are selected. |

No |

| modifiedDatetimeEnd | Files filter based on the attribute Last Modified. The files are selected if their last modified time is greater than or equal to modifiedDatetimeStart and less than modifiedDatetimeEnd. The time is applied to the UTC time zone in the format of "2018-12-01T05:00:00Z". The overall performance of data movement is affected by enabling this setting when you want to do file filter with huge amounts of files. The properties can be NULL, which means no file attribute filter is applied to the dataset. When modifiedDatetimeStart has a datetime value but modifiedDatetimeEnd is NULL, it means the files whose last modified attribute is greater than or equal to the datetime value are selected. When modifiedDatetimeEnd has a datetime value but modifiedDatetimeStart is NULL, it means the files whose last modified attribute is less than the datetime value are selected. |

No |

| format | If you want to copy files as is between file-based stores (binary copy), skip the format section in both input and output dataset definitions. If you want to parse or generate files with a specific format, the following file format types are supported: TextFormat, JsonFormat, AvroFormat, OrcFormat, and ParquetFormat. Set the type property under format to one of these values. For more information, see the Text format, JSON format, Avro format, Orc format, and Parquet format sections. |

No (only for binary copy scenario) |

| compression | Specify the type and level of compression for the data. For more information, see Supported file formats and compression codecs. Supported types are GZip, Deflate, BZip2, and ZipDeflate. Supported levels are Optimal and Fastest. |

No |

Tip

To copy all files under a folder, specify folderPath only.

To copy a single file with a particular name, specify folderPath with a folder part and fileName with a file name.

To copy a subset of files under a folder, specify folderPath with a folder part and fileName with a wildcard filter.

Example:

{

"name": "ADLSDataset",

"properties": {

"type": "AzureDataLakeStoreFile",

"linkedServiceName":{

"referenceName": "<ADLS linked service name>",

"type": "LinkedServiceReference"

},

"typeProperties": {

"folderPath": "datalake/myfolder/",

"fileName": "*",

"modifiedDatetimeStart": "2018-12-01T05:00:00Z",

"modifiedDatetimeEnd": "2018-12-01T06:00:00Z",

"format": {

"type": "TextFormat",

"columnDelimiter": ",",

"rowDelimiter": "\n"

},

"compression": {

"type": "GZip",

"level": "Optimal"

}

}

}

}

Legacy copy activity source model

| Property | Description | Required |

|---|---|---|

| type | The type property of the copy activity source must be set to AzureDataLakeStoreSource. |

Yes |

| recursive | Indicates whether the data is read recursively from the subfolders or only from the specified folder. When recursive is set to true and the sink is a file-based store, an empty folder or subfolder isn't copied or created at the sink. Allowed values are true (default) and false. |

No |

| maxConcurrentConnections | The upper limit of concurrent connections established to the data store during the activity run. Specify a value only when you want to limit concurrent connections. | No |

Example:

"activities":[

{

"name": "CopyFromADLSGen1",

"type": "Copy",

"inputs": [

{

"referenceName": "<ADLS Gen1 input dataset name>",

"type": "DatasetReference"

}

],

"outputs": [

{

"referenceName": "<output dataset name>",

"type": "DatasetReference"

}

],

"typeProperties": {

"source": {

"type": "AzureDataLakeStoreSource",

"recursive": true

},

"sink": {

"type": "<sink type>"

}

}

}

]

Legacy copy activity sink model

| Property | Description | Required |

|---|---|---|

| type | The type property of the copy activity sink must be set to AzureDataLakeStoreSink. |

Yes |

| copyBehavior | Defines the copy behavior when the source is files from a file-based data store. Allowed values are: - PreserveHierarchy (default): Preserves the file hierarchy in the target folder. The relative path of the source file to the source folder is identical to the relative path of the target file to the target folder. - FlattenHierarchy: All files from the source folder are in the first level of the target folder. The target files have autogenerated names. - MergeFiles: Merges all files from the source folder to one file. If the file name is specified, the merged file name is the specified name. Otherwise, the file name is autogenerated. |

No |

| maxConcurrentConnections | The upper limit of concurrent connections established to the data store during the activity run. Specify a value only when you want to limit concurrent connections. | No |

Example:

"activities":[

{

"name": "CopyToADLSGen1",

"type": "Copy",

"inputs": [

{

"referenceName": "<input dataset name>",

"type": "DatasetReference"

}

],

"outputs": [

{

"referenceName": "<ADLS Gen1 output dataset name>",

"type": "DatasetReference"

}

],

"typeProperties": {

"source": {

"type": "<source type>"

},

"sink": {

"type": "AzureDataLakeStoreSink",

"copyBehavior": "PreserveHierarchy"

}

}

}

]

Change data capture (preview)

Azure Data Factory can get new or changed files only from Azure Data Lake Storage Gen1 by enabling Enable change data capture (Preview) in the mapping data flow source transformation. With this connector option, you can read new or updated files only and apply transformations before loading transformed data into destination datasets of your choice.

Make sure you keep the pipeline and activity name unchanged, so that the checkpoint can always be recorded from the last run to get changes from there. If you change your pipeline name or activity name, the checkpoint will be reset, and you'll start from the beginning in the next run.

When you debug the pipeline, the Enable change data capture (Preview) works as well. The checkpoint is reset when you refresh your browser during the debug run. After you're satisfied with the result from debug run, you can publish and trigger the pipeline. It will always start from the beginning regardless of the previous checkpoint recorded by debug run.

In the monitoring section, you always have the chance to rerun a pipeline. When you're doing so, the changes are always gotten from the checkpoint record in your selected pipeline run.

Related content

For a list of data stores supported as sources and sinks by the copy activity, see supported data stores.

Feedback

Coming soon: Throughout 2024 we will be phasing out GitHub Issues as the feedback mechanism for content and replacing it with a new feedback system. For more information see: https://aka.ms/ContentUserFeedback.

Submit and view feedback for